A Beginner's Guide to Differential Expression Analysis: Top Tools and Best Practices for New Researchers


Abstract

This comprehensive guide demystifies differential expression (DE) analysis for new researchers, scientists, and drug development professionals. It provides a foundational understanding of DE analysis, compares the leading software and R packages (like DESeq2, edgeR, and limma-voom), and offers practical, step-by-step workflows for implementation. The article also addresses common troubleshooting issues and optimization strategies, while discussing validation methods and critical comparative insights to ensure robust, reproducible results for biomedical discovery and clinical applications.

What is Differential Expression Analysis? A Primer for Research Success

Differential Expression (DE) analysis is the computational and statistical process of identifying genes, transcripts, or proteins whose abundance differs significantly between two or more biological conditions (e.g., diseased vs. healthy, treated vs. untreated). In the context of a thesis evaluating the best DE analysis tools for new researchers, it is paramount to first establish a rigorous definition. A precise understanding of DE is the foundational pillar upon which the selection of appropriate tools, experimental designs, and validation strategies rests. This guide details the core principles, experimental protocols, and data interpretation frameworks that make DE analysis indispensable in genomics and biomarker discovery.

Core Principles and Statistical Foundations

DE analysis moves beyond simple fold-change calculations. It quantifies expression changes while accounting for biological and technical variance inherent in high-throughput data. The primary output is a list of features ranked by statistical significance (p-value, adjusted for multiple testing) and magnitude of change (log2 fold-change).

Table 1: Core Statistical Metrics in DE Analysis

| Metric | Formula/Description | Interpretation in Biomarker Discovery |
|---|---|---|
| Log2 Fold-Change (Log2FC) | Log2(Mean Expression Condition B / Mean Expression Condition A) | Quantifies magnitude of change. An absolute Log2FC > 1 (more than a 2x change) is often a preliminary filter. |
| P-value | Probability of observing data at least as extreme as the measured data if the null hypothesis (no expression difference) is true. | Identifies statistically significant changes. A low p-value suggests the change is unlikely to be due to chance alone. |
| Adjusted P-value (FDR, q-value) | Corrected p-value for multiple hypothesis testing (e.g., Benjamini-Hochberg). | Controls false discovery rate. Q-value < 0.05 is a standard threshold for confident biomarker candidates. |
| Base Mean Expression | Average normalized expression across all samples. | Filters low-abundance features with unreliable statistical power. |

Detailed Experimental Protocol: Bulk RNA-Seq for DE

The following is a standard workflow for identifying DE genes from bulk RNA-seq data.

1. Experimental Design & Sample Collection:

- Conditions: Define distinct biological groups (minimum n=3 per group for variance estimation).

- Replication: Use biological replicates (different individuals/cultures) over technical replicates.

- RNA Extraction: Use TRIzol or column-based kits. Assess RNA Integrity Number (RIN > 8) via Bioanalyzer.

2. Library Preparation & Sequencing:

- Protocol: Poly-A selection for mRNA, rRNA depletion for total RNA.

- Platform: Illumina NovaSeq or NextSeq are current standards.

- Depth: Aim for 20-40 million paired-end reads per sample.

3. Computational Analysis (Key Steps):

- Quality Control: FastQC to assess read quality.

- Alignment: Map reads to a reference genome (e.g., GRCh38) using splice-aware aligners like STAR or HISAT2.

- Quantification: Generate gene-level read counts using featureCounts or HTSeq.

- Differential Expression: Import count matrix into R/Bioconductor. Key tools compared in our thesis include:

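- DESeq2: Uses a negative binomial model and shares information across genes to stabilize dispersion estimates, which helps in studies with limited replicates. Shrinks fold-changes of low-count genes so that noisy effect-size estimates are not over-interpreted.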

- edgeR: Similar model to DESeq2, often faster for large datasets.

- limma-voom: Applies linear models to log-transformed counts, powerful for complex experimental designs.

4. Visualization & Interpretation:

- Volcano Plot: Visualizes Log2FC vs. -log10(p-value).

- Heatmaps: Show expression patterns of top DE genes across all samples.

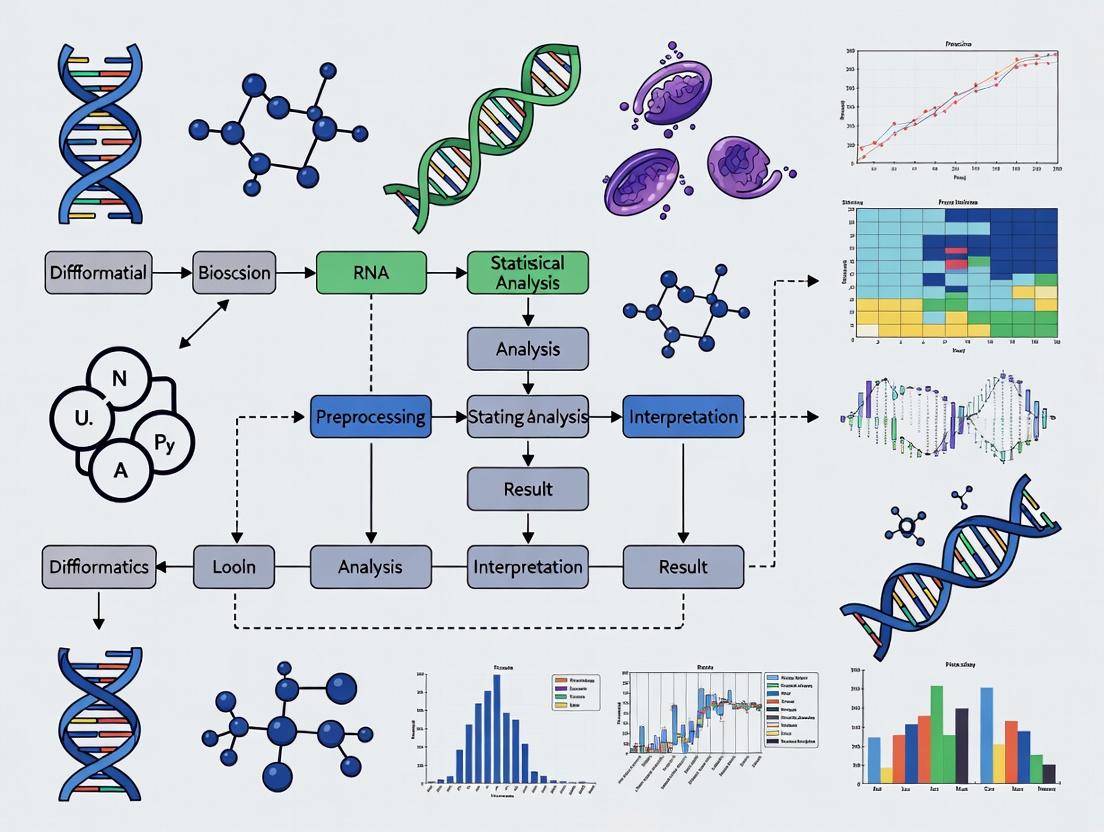

Diagram 1: DE Analysis Workflow (RNA-seq)

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for DE Experiments

| Item | Function & Rationale |

|---|---|

| TRIzol Reagent | Monophasic solution for simultaneous cell lysis, RNA stabilization, and protein/DNA separation. Ensures high-quality RNA integrity. |

| DNase I (RNase-free) | Removes genomic DNA contamination from RNA preparations, critical for accurate RNA-seq quantification. |

| RNA-seq Library Prep Kit (e.g., Illumina TruSeq) | Standardized reagents for mRNA enrichment, fragmentation, cDNA synthesis, adapter ligation, and PCR amplification. |

| SPRIselect Beads | Magnetic beads for size selection and clean-up during library prep, replacing traditional column-based methods. |

| ERCC RNA Spike-In Mix | Synthetic RNA controls added to samples before library prep to monitor technical variance and assay sensitivity. |

| qPCR Master Mix with SYBR Green | For orthogonal validation of DE genes identified by RNA-seq. Requires specific primers for candidate genes. |

DE in Signaling Pathway and Biomarker Discovery

DE analysis is rarely an endpoint; its power is unlocked through biological interpretation. Enrichment analysis of DE gene lists reveals perturbed pathways, informing mechanism.

Diagram 2: From DE Genes to Pathway Insight

Table 3: Common Enrichment Analysis Tools (for Interpretation)

| Tool | Method | Key Output |

|---|---|---|

| clusterProfiler | Over-representation & GSEA for GO and KEGG. | Enriched terms with p-values and gene sets. |

| GSEA (Broad Institute) | Gene Set Enrichment Analysis (requires ranked list). | Enrichment score (ES), normalized ES (NES), FDR. |

| Enrichr | Web-based tool for rapid querying of numerous libraries. | Interactive tables and visualizations. |
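The over-representation idea behind tools such as those in Table 3 can be sketched as a one-sided hypergeometric test. This is a minimal illustration using only the Python standard library, not the implementation of any listed tool; the function name `ora_pvalue` and all numbers are hypothetical.

```python
from math import comb

def ora_pvalue(n_universe, n_in_set, n_de, n_overlap):
    """Upper-tail hypergeometric probability P(X >= n_overlap).

    n_universe: background genes tested
    n_in_set:   genes annotated to the pathway / gene set
    n_de:       differentially expressed genes
    n_overlap:  DE genes that belong to the gene set
    """
    max_overlap = min(n_in_set, n_de)
    total = comb(n_universe, n_de)
    tail = sum(
        comb(n_in_set, k) * comb(n_universe - n_in_set, n_de - k)
        for k in range(n_overlap, max_overlap + 1)
    )
    return tail / total

# Hypothetical numbers: 20,000 background genes, a 100-gene pathway,
# 500 DE genes, 10 of which fall in the pathway (2.5 expected by chance).
p = ora_pvalue(20000, 100, 500, 10)
print(f"ORA p-value: {p:.2e}")
```

In practice this p-value is computed for every gene set and then corrected for multiple testing, which is why enrichment tools report an FDR alongside it.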

Defining differential expression with statistical rigor is the critical first step that determines the validity of all subsequent conclusions in genomics. For new researchers, as explored in our broader thesis, selecting a DE tool (DESeq2, edgeR, or limma-voom) depends on experimental design, sample size, and computational comfort, but all rely on this foundational concept. Accurate DE analysis directly enables the transition from raw genomic data to discoverable biomarkers and actionable biological insights, forming the core of modern translational research in drug development and personalized medicine.

Within the thesis exploring the best differential expression analysis tools for new researchers, understanding the underlying statistics is paramount. Selecting a tool often hinges on its implementation and interpretation of core concepts like P-values, Log2 Fold Change (LFC), and the False Discovery Rate (FDR). This guide explains these pillars of high-throughput data analysis, providing the foundational knowledge required to critically evaluate and effectively use tools such as DESeq2, edgeR, or limma.

Core Concepts Explained

1. P-value: The P-value quantifies the probability of observing the obtained data (or something more extreme) if the null hypothesis is true. In differential expression, the null hypothesis states that there is no difference in expression between two conditions (e.g., treated vs. control).

- Interpretation: A small P-value (e.g., < 0.05) suggests the observed expression difference is unlikely due to random chance alone, leading to rejection of the null hypothesis.

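- Caution: In omics studies with thousands of simultaneous tests, a nominal P-value cutoff (0.05) produces many false positives. For example, testing 20,000 genes with no true differences would still yield about 1,000 genes with P < 0.05 by chance.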

2. Log2 Fold Change (LFC): This is a measure of the magnitude and direction of expression change.

- Calculation: LFC = log2(mean expression in Condition A / mean expression in Condition B).

- Interpretation: An LFC of 1 means a 2-fold increase (2^1) in Condition A. An LFC of -2 means a 4-fold decrease (2^-2 = 1/4) in Condition A relative to B. It provides the biological effect size.

3. False Discovery Rate (FDR): To address the multiple testing problem, the FDR is used. The most common method is the Benjamini-Hochberg procedure.

- Definition: FDR is the expected proportion of false positives among all features declared significant. Declaring every feature with an FDR-adjusted P-value (q-value) at or below 0.05 significant means that about 5% of those hits are expected to be false discoveries.

- Utility: Controlling the FDR, rather than the per-test error rate, is the standard for genomic studies as it is more powerful and provides a more interpretable metric.
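To make the Benjamini-Hochberg adjustment concrete, here is a minimal pure-Python sketch. The function name `benjamini_hochberg` and the five raw p-values are made up for illustration; real analyses would rely on the adjustment built into the DE package.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])  # indices by ascending p
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so each adjusted value is the
    # minimum of p * m / rank over this rank and all larger ranks.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

raw = [0.001, 0.008, 0.039, 0.041, 0.60]  # hypothetical raw p-values
adj = benjamini_hochberg(raw)
for p_raw, q in zip(raw, adj):
    print(f"raw={p_raw:.3f}  adjusted={q:.4f}  significant={q < 0.05}")
```

Two of these hypothetical tests pass a nominal 0.05 cutoff on the raw p-value but not after adjustment, which is the behavior the caution under the P-value entry describes.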

Table 1: Comparison of Statistical Outputs from Hypothetical Gene Analysis

| Gene ID | Mean Expression (Control) | Mean Expression (Treated) | Raw P-value | Log2 Fold Change | FDR-adjusted P-value (q-value) | Significant (FDR < 0.05)? |

|---|---|---|---|---|---|---|

| Gene_A | 10.5 | 150.2 | 2.1e-10 | 3.84 | 1.5e-06 | Yes |

| Gene_B | 1050.3 | 1200.7 | 0.032 | 0.19 | 0.089 | No |

| Gene_C | 25.1 | 5.8 | 5.7e-05 | -2.11 | 0.003 | Yes |
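The Log2 Fold Change column of the table can be recomputed from the two mean-expression columns. The short sketch below assumes the treated group is the numerator and the control group the denominator; the helper name `log2_fold_change` is hypothetical.

```python
from math import log2

# (control mean, treated mean) taken from the hypothetical table above
means = {
    "Gene_A": (10.5, 150.2),
    "Gene_B": (1050.3, 1200.7),
    "Gene_C": (25.1, 5.8),
}

def log2_fold_change(control, treated):
    """log2 ratio of treated over control mean expression."""
    return log2(treated / control)

lfc = {gene: log2_fold_change(c, t) for gene, (c, t) in means.items()}
for gene, value in lfc.items():
    print(f"{gene}: LFC={value:+.2f}, fold change={2 ** value:.2f}x")
```

Gene_B shows why effect size and significance are read together: its raw p-value is below 0.05, yet the change is only about 1.14-fold and it does not survive FDR adjustment.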

Experimental Protocol: RNA-seq Differential Expression Analysis

A standard workflow for generating the data analyzed by these concepts is outlined below.

Protocol: Bulk RNA-seq Differential Expression Analysis

1. Sample Preparation & Sequencing:

- Extract total RNA from biological replicates (minimum n=3 per condition) using TRIzol or column-based kits. Assess RNA integrity (RIN > 8).

- Prepare sequencing libraries using a poly-A selection or rRNA depletion protocol, followed by cDNA synthesis, adapter ligation, and PCR amplification.

- Sequence on an Illumina platform to generate at least 20 million paired-end reads per sample.

2. Bioinformatics Analysis:

- Quality Control: Use FastQC to assess read quality. Trim adapters and low-quality bases with Trimmomatic.

- Alignment: Map reads to a reference genome (e.g., GRCh38) using a splice-aware aligner like STAR.

- Quantification: Count reads mapping to each gene feature using featureCounts (from Subread package) or HTSeq.

- Differential Expression: Input the count matrix into a statistical tool (e.g., DESeq2) following its standard workflow.

3. Statistical Modeling with DESeq2 (Example):

This whitepaper serves as a foundational chapter in a broader thesis on the best differential expression analysis tools for new researchers. Selecting the appropriate initial data generation technology is a critical first step that dictates subsequent analytical choices and tool compatibility. Here, we provide a technical comparison of RNA-seq and microarray platforms to inform that decision.

Core Technological Principles & Quantitative Comparison

RNA-seq (RNA sequencing) is a next-generation sequencing (NGS)-based method that provides a digital, quantitative readout of the transcriptome by sequencing cDNA libraries. Microarrays, in contrast, rely on the hybridization of fluorescently labeled cDNA to predefined oligonucleotide probes immobilized on a solid surface.

Table 1: Core Technical Specifications and Performance Metrics

| Feature | RNA-seq | Microarray (High-Density) |

|---|---|---|

| Underlying Principle | High-throughput sequencing | Hybridization to fixed probes |

| Dynamic Range | > 10^5 | ~ 10^3-10^4 |

| Resolution | Single-base (for sequencing) | Defined by probe design |

| Background Noise | Low (specific mapping) | Higher (non-specific hybridization) |

| Required Input RNA | 1 ng - 1 µg (protocol dependent) | 50 ng - 1 µg |

| Ability to Detect Novel Transcripts | Yes | No |

| Variant Detection (SNPs, Fusion Genes) | Yes | Limited |

| Primary Quantitative Output | Read counts (digital) | Fluorescence intensity (analog) |

| Typical Cost per Sample (as of latest data) | $$$ | $ |

Table 2: Key Analytical Characteristics for Differential Expression

| Characteristic | RNA-seq | Microarray |

|---|---|---|

| Accuracy for Low-Abundance Transcripts | High | Moderate to Low |

| Quantitative Precision | High across wide range | Saturation at high expression |

| Reproducibility (Technical Replicate R^2) | > 0.99 | > 0.97 |

| Gene Expression Units | FPKM, TPM, Counts | Arbitrary Intensity Units |

| Standard Statistical Models | Negative Binomial (e.g., DESeq2, edgeR) | Linear Models (e.g., limma) |

Detailed Experimental Protocols

Standard Poly-A Selected RNA-seq Workflow

Principle: Capture mRNA via poly-A tails, fragment, and prepare a sequencing library.

- RNA Extraction & QC: Isolate total RNA using guanidinium thiocyanate-phenol-chloroform extraction (e.g., TRIzol). Assess integrity via RIN (RNA Integrity Number) > 8.0 on Bioanalyzer.

- Poly-A mRNA Selection: Use oligo(dT) magnetic beads to bind and isolate polyadenylated RNA.

- cDNA Synthesis & Fragmentation: Reverse transcribe RNA into double-stranded cDNA. Fragment via enzymatic (e.g., Nextera) or sonication methods.

- Library Preparation: Ligate sequencing platform-specific adapters, often incorporating sample barcodes (indexes) for multiplexing.

- Library Amplification & QC: Perform limited-cycle PCR to enrich adapter-ligated fragments. Validate library size distribution (e.g., Bioanalyzer) and quantify via qPCR.

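- Sequencing: Pool libraries and sequence on a short-read platform (e.g., Illumina or MGI) to a recommended depth of 20-40 million paired-end reads per sample for standard differential expression. Long-read platforms such as PacBio do not generate paired-end reads, so this depth guideline does not apply to them.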

Standard Microarray Workflow (One-Color, e.g., Agilent)

Principle: Convert RNA to cyanine-labeled cDNA, hybridize to array, and scan.

- RNA Extraction & QC: As in the first step of the RNA-seq workflow above.

- cDNA Synthesis and Labeling: Use reverse transcriptase and oligo(dT) priming to synthesize cDNA, incorporating Cy3-dCTP (or Cy5 for two-color) via direct labeling or amino-allyl indirect labeling.

- Purification & Quantification: Purify labeled cDNA using spin columns or precipitation. Measure dye incorporation (pmol of dye/µg cDNA).

- Hybridization: Mix labeled cDNA with fragmentation buffer, blocking agents (e.g., Cot-1 DNA, poly-dA), and hybridization buffer. Apply to microarray slide under a coverslip in a dedicated hybridization chamber. Incubate at 65°C for 17 hours in a rotating oven.

- Washing: Perform a series of stringent washes (e.g., Agilent GE Wash Buffers 1 & 2) to remove non-specifically bound cDNA.

- Scanning & Feature Extraction: Scan slide with a confocal laser scanner at the appropriate excitation wavelength for the dye. Use vendor software (e.g., Agilent Feature Extraction) to convert spot images to numerical intensity data.

Visualization of Workflows

Workflow Diagram: RNA-seq Library Preparation

Workflow Diagram: Microarray Hybridization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents

| Item | Function & Application | Example Vendor/Kit |

|---|---|---|

| RNase Inhibitors | Prevents degradation of RNA during extraction and handling. Critical for all protocols. | Murine RNase Inhibitor, Recombinant RNase Inhibitor |

| Solid-Phase Reversible Immobilization (SPRI) Beads | Magnetic beads for size selection and cleanup of DNA fragments (NGS libraries). | AMPure XP Beads |

| Oligo(dT) Magnetic Beads | For isolation of polyadenylated mRNA from total RNA (RNA-seq). | NEBNext Poly(A) mRNA Magnetic Isolation Module |

| Fragmentation Enzyme Mix | Controlled, reproducible fragmentation of DNA (NGS library prep). | NEBNext Ultra II FS DNA Module |

| Hybridization Chamber & Oven | Provides controlled, bubble-free environment for microarray hybridization. | Agilent SureHyb Chamber, hybridization oven |

| Cy3/Cy5-dCTP | Fluorescent nucleotides for direct labeling of cDNA for microarray detection. | CyDye, PerkinElmer |

| Feature Extraction Software | Converts scanned microarray image files into quantified spot intensity data. | Agilent Feature Extraction, Affymetrix Power Tools |

| Sequencing Platform | Instrumentation for high-throughput generation of sequence reads. | Illumina NovaSeq, MGI DNBSEQ-G400 |

Within a broader thesis evaluating the best differential expression analysis tools for new researchers, a foundational truth emerges: the validity of any downstream result is entirely contingent upon rigorous upstream pre-analysis. This guide details the three essential pillars—Study Design, Raw Read Quality Control (QC), and Alignment—that new researchers must master before any statistical comparison begins. Failures in these initial stages propagate irrecoverably, rendering even the most sophisticated differential expression tools ineffective.

Foundational Study Design

Robust study design is the first and most critical step, dictating the statistical power and biological validity of the entire experiment.

Key Considerations

- Biological vs. Technical Replicates: Biological replicates (samples from different organisms/primary sources) are non-negotiable for inferring population-level effects. Technical replicates (repeated measurements of the same sample) only assess measurement noise. A minimum of three biological replicates per condition is standard, though more increase power.

- Randomization: Processing order for samples from different experimental groups must be randomized to avoid batch effects.

- Blocking: When batch effects are unavoidable (e.g., multiple sequencing lanes, different days), a blocked design should be employed where each batch contains samples from all groups.
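Randomization and blocking can be combined when planning the processing order. The sketch below is one simple way to do it, assuming equal group sizes; the function name `blocked_assignment` and the sample labels are hypothetical.

```python
import random

def blocked_assignment(samples_by_group, n_batches, seed=0):
    """Spread each group's samples evenly across batches, in random order."""
    rng = random.Random(seed)  # fixed seed so the layout is reproducible
    batches = [[] for _ in range(n_batches)]
    for group, samples in samples_by_group.items():
        shuffled = samples[:]
        rng.shuffle(shuffled)
        # Deal the shuffled samples round-robin so every batch gets each group.
        for i, sample in enumerate(shuffled):
            batches[i % n_batches].append((group, sample))
    for batch in batches:
        rng.shuffle(batch)  # randomize processing order within each batch
    return batches

design = blocked_assignment(
    {"control": ["C1", "C2", "C3", "C4"], "treated": ["T1", "T2", "T3", "T4"]},
    n_batches=2,
)
for n, batch in enumerate(design, start=1):
    print(f"batch {n}: {batch}")
```

Because every batch contains both groups, a batch term can later be included in the statistical model without being confounded with the condition of interest.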

Power Analysis

A priori power analysis helps determine the necessary sample size. Key inputs include the expected effect size (fold change), desired statistical power (typically 80%), and significance threshold. Tools like Scotty or RNASeqPower are commonly used.
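As a rough illustration of how these inputs interact, the sketch below uses a common normal-approximation formula for two-group count data. Whether a given tool uses exactly this form is an assumption here, and the parameter names (`cv` for the biological coefficient of variation, `depth` for the average read count per gene) are hypothetical. It is not intended to reproduce the simulated values in the table that follows.

```python
from math import ceil, log
from statistics import NormalDist

def samples_per_group(fold_change, cv, depth, alpha=0.05, power=0.80):
    """Approximate replicates per group needed to detect a fold change.

    Assumed formula:
        n = 2 * (z_{1-alpha/2} + z_power)^2 * (1/depth + cv^2) / ln(fold_change)^2
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    n = 2 * (z_alpha + z_power) ** 2 * (1 / depth + cv ** 2) / log(fold_change) ** 2
    return ceil(n)

# Hypothetical inputs: CV of 0.4 between biological replicates, ~20 reads per gene.
for fc in (2.0, 1.5):
    print(f"fold change {fc}: about {samples_per_group(fc, cv=0.4, depth=20)} samples per group")
```

The point of the sketch is the direction of the effects: smaller fold changes and noisier biology both raise the required sample size, while extra sequencing depth helps only until the `cv ** 2` term dominates.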

Table 1: Example Power Analysis Output Using Simulated Parameters

| Expected Fold Change | Dispersion | Significance (Alpha) | Sample Size per Group | Achieved Power |

|---|---|---|---|---|

| 2.0 | 0.1 | 0.05 | 3 | 78% |

| 1.5 | 0.1 | 0.05 | 5 | 82% |

| 2.0 | 0.2 | 0.05 | 6 | 80% |

Raw Read Quality Control (QC)

Upon receiving raw sequencing data (typically in FASTQ format), an exhaustive QC assessment is mandatory to identify issues requiring remediation before alignment.

QC Metrics & Tools

- Per-Base Sequence Quality: Assesses Phred scores across all bases. A drop in quality at read ends is common.

- Per-Sequence Quality Scores: Identifies subsets of reads with universally low quality.

- Sequence Duplication Levels: High duplication can indicate PCR over-amplification or low library complexity.

- Adapter Contamination: Presence of adapter sequences indicates read-through and must be trimmed.

- Overrepresented Sequences: Can reveal contamination (e.g., ribosomal RNA).

The standard tool is FastQC for assessment, followed by Trimmomatic or Cutadapt for cleaning.
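The Phred scores these tools report are encoded in the fourth line of each FASTQ record. The sketch below decodes them and computes the fraction of bases at or above Q30, assuming the Phred+33 encoding; the quality strings and function names are made up for illustration.

```python
def phred_scores(quality_line, offset=33):
    """Decode a FASTQ quality string (Phred+33 encoding assumed)."""
    return [ord(ch) - offset for ch in quality_line]

def fraction_at_least(quality_lines, threshold=30):
    """Fraction of all bases whose Phred score is >= threshold."""
    scores = [q for line in quality_lines for q in phred_scores(line)]
    return sum(q >= threshold for q in scores) / len(scores)

# Two made-up quality strings: 'I' encodes Q40, '5' encodes Q20, '#' encodes Q2.
reads = ["IIIIIIII55", "IIIIII55##"]
q30_fraction = fraction_at_least(reads)
print(f"bases >= Q30: {q30_fraction:.0%}")

# A Phred score Q corresponds to an error probability of 10^(-Q/10).
print(f"error probability at Q30: {10 ** (-30 / 10):.3f}")
```

The second made-up read shows the typical pattern of quality dropping toward the 3' end, which is what trimming is meant to remove.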

Detailed QC Protocol

Protocol: Raw Read QC with FastQC and Trimmomatic

- Initial Assessment: Run FastQC on all raw FASTQ files.

- Aggregate Reports: Use MultiQC to synthesize results.

- Quality Trimming & Adapter Removal: Execute Trimmomatic in paired-end mode.

- Post-Cleaning Assessment: Re-run FastQC and MultiQC on the trimmed (`*_paired.fq.gz`) files to confirm improvements.

Table 2: Key QC Metrics Before and After Trimming

| Metric | Raw Data (Mean) | Trimmed Data (Mean) | Acceptable Threshold |

|---|---|---|---|

| % Bases ≥ Q30 | 92.5% | 98.1% | > 70% (varies by platform) |

| % Adapter Content | 1.8% | 0.1% | As low as possible |

| % GC Content | 48% | 48% | Close to species expectation |

| % Duplicate Reads | 15% | 12% | Highly sample-dependent |

Alignment to a Reference Genome

The cleaned reads are mapped to a reference genome or transcriptome to determine their genomic origin.

Alignment Strategy and Tools

The choice depends on the reference. For genome alignment, splice-aware aligners are required for RNA-seq.

- Genome Alignment (Splice-Aware): STAR (ultra-fast, sensitive) and HISAT2 (memory-efficient) are standards.

- Pseudoalignment/Transcriptome Quantification: Tools like Salmon or Kallisto bypass traditional alignment, directly estimating transcript abundance rapidly and with lower memory.

Detailed Alignment Protocol

Protocol: Alignment with STAR and Quantification with featureCounts

- Generate Genome Index (once per genome/annotation):

- Align Reads:

- Generate Read Counts Matrix (if not using `--quantMode`): Use `featureCounts` from the Subread package.

Table 3: Comparison of Key Alignment Tools for RNA-seq

| Tool | Alignment Type | Speed | Memory Use | Key Strength | Best For |

|---|---|---|---|---|---|

| STAR | Splice-aware | Very Fast | High | Accuracy, sensitivity to novel splicing | Standard genome-aligned analysis |

| HISAT2 | Splice-aware | Fast | Medium | Memory efficiency, speed | Large genomes or limited RAM |

| Salmon | Pseudoalignment | Very Fast | Low | Speed, transcript-level quantification | Rapid quantification for DE |

Post-Alignment QC

Alignment generates critical QC metrics.

- Alignment Rate: The percentage of reads successfully mapped. >70-80% is typically acceptable for well-annotated model organisms.

- Exonic vs. Intronic Rate: For standard poly-A RNA-seq, the majority of reads should map to exonic regions.

- Strand-Specificity: Verifies the library preparation protocol was correctly followed.

- Insert Size Distribution: Should match library preparation expectations.

- Visual Inspection: Use the Integrative Genomics Viewer (IGV) to manually inspect alignment quality at specific loci.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for RNA-seq Pre-Analysis

| Item | Function & Rationale |

|---|---|

| TruSeq Stranded mRNA Kit | Gold-standard for poly-A selection and strand-specific library prep. Ensures accurate strand orientation in data. |

| Ribo-Zero rRNA Depletion Kits | For ribodepletion of rRNA in non-polyA enriched samples (e.g., total RNA, degraded samples). |

| QIAGEN RNeasy Kit | Reliable total RNA extraction with gDNA removal columns. Ensures high-integrity input RNA. |

| Bioanalyzer RNA Integrity Number (RIN) Chips | Microfluidic chips for precise assessment of RNA degradation (RIN > 8 is ideal). |

| SPRIselect Beads | Size-selective magnetic beads for library clean-up and size selection. Replaces gel-based methods. |

| Illumina Sequencing Reagents (NovaSeq/X) | Platform-specific chemistry for cluster generation and sequencing-by-synthesis. |

Workflow and Relationship Diagrams

Title: End-to-End Pre-Analysis Workflow with QC Checkpoints

Title: Pre-Analysis Positioning Within Full DE Workflow

Within the broader thesis on identifying the best differential expression (DE) analysis tools for new researchers, this guide provides a foundational examination of the core software platforms and packages. Selecting an appropriate tool is a critical first step that dictates downstream analysis quality, reproducibility, and biological insight. This whitepaper offers an in-depth technical comparison of current popular options, framed for researchers, scientists, and drug development professionals entering the field of transcriptomics.

Core DE Analysis Platforms and Packages: A Quantitative Comparison

The following table summarizes key quantitative and functional attributes of widely used DE analysis tools, based on current standards. This comparison focuses on tools for bulk RNA-seq analysis, a common starting point for new researchers; the single-cell packages are listed for context.

Table 1: Comparison of Popular Differential Expression Analysis Packages (2024)

| Package/Platform | Primary Language | Standard Statistical Model | Key Strength | Ideal Use Case | License |

|---|---|---|---|---|---|

| DESeq2 | R | Negative Binomial GLM with shrinkage (Wald test/LRT) | Robust handling of low counts, excellent documentation | Standard bulk RNA-seq with biological replicates | LGPL (≥3) |

| edgeR | R | Negative Binomial GLM (QL F-test) | Flexibility in experimental design, speed | Large datasets, complex designs | GPL (≥2) |

| limma-voom | R | Linear modeling of log-CPM with precision weights | Powerful for small sample sizes, integrates with microarray pipeline | Studies with few replicates (<5 per group) | GPL (≥2) |

| Seurat (single-cell focus) | R | Non-parametric or negative binomial models | Comprehensive single-cell analysis suite | Single-cell or spatial transcriptomics | MIT |

| Scanpy (single-cell focus) | Python | Various (e.g., Wilcoxon, t-test, negative binomial) | Scalability, integration with Python ML ecosystem | Large-scale single-cell data analysis | BSD |

| NOISeq | R | Non-parametric noise distribution | Does not require replicates, suitable for data without them | Exploratory analysis or studies lacking replicates | Artistic License 2.0 |

Standardized Experimental Protocol for Bulk RNA-seq DE Analysis

A generalized, detailed methodology for a typical DE analysis workflow using a tool like DESeq2 or edgeR is provided below. This protocol serves as a foundational reference.

Protocol Title: Standard Differential Expression Analysis from Count Matrix to Candidate Genes

1. Input Data Preparation:

- Input: A raw count matrix (genes as rows, samples as columns) generated from an aligner (e.g., STAR, HISAT2) and quantifier (e.g., featureCounts, HTSeq).

- Metadata Table: A sample information table (colData) specifying experimental conditions, batches, and other covariates.

2. Quality Control & Pre-filtering:

- Filter out genes with very low counts across all samples (e.g., <10 counts total).

- Perform exploratory data analysis: Principal Component Analysis (PCA) or Multidimensional Scaling (MDS) plot to assess sample grouping and detect outliers.
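The low-count pre-filter in this step amounts to a row-sum threshold on the count matrix. A minimal sketch with a made-up matrix follows; the gene names, counts, and the helper `prefilter` are hypothetical.

```python
# Hypothetical raw count matrix: gene -> counts across six samples.
counts = {
    "GeneA": [120, 98, 143, 410, 388, 455],
    "GeneB": [0, 1, 0, 2, 0, 1],
    "GeneC": [5, 3, 0, 1, 0, 0],
    "GeneD": [900, 1010, 870, 860, 940, 1005],
}

def prefilter(count_matrix, min_total=10):
    """Keep genes whose summed count across all samples reaches min_total."""
    return {gene: row for gene, row in count_matrix.items() if sum(row) >= min_total}

kept = prefilter(counts)
print("kept:", sorted(kept))
print("dropped:", sorted(set(counts) - set(kept)))
```

Removing such genes reduces the number of tests, and therefore the multiple-testing burden, without discarding features that could plausibly reach significance.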

3. Model Fitting and Differential Testing:

- For DESeq2:

  - Create a `DESeqDataSet` object from the count matrix and metadata.

  - Estimate size factors (for library size normalization).

  - Estimate gene-wise dispersions.

  - Fit the negative binomial Generalized Linear Model (GLM) for the specified design (e.g., ~ condition).

  - Apply the Wald test or Likelihood Ratio Test (LRT) to calculate p-values for each gene.

- For edgeR:

  - Create a `DGEList` object.

  - Calculate normalization factors using TMM (trimmed mean of M-values).

  - Estimate common, trended, and tagwise dispersions.

  - Fit a GLM using the design matrix.

  - Conduct a quasi-likelihood F-test (QL F-test) for DE.

4. Results Extraction and Shrinkage:

- Extract a results table with log2 fold changes, p-values, and adjusted p-values (Benjamini-Hochberg FDR).

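- Apply log2 fold change shrinkage (e.g., DESeq2's `lfcShrink`) to reduce the variance of estimates for low-count genes and improve effect size estimates. edgeR's `glmTreat` addresses a related concern differently: rather than shrinking estimates, it tests whether the fold change exceeds a chosen threshold.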

5. Interpretation and Downstream Analysis:

- Set significance thresholds (e.g., FDR < 0.05, |log2FC| > 1).

- Generate diagnostic plots: MA-plot, p-value histogram, dispersion plot.

- Perform functional enrichment analysis (e.g., GO, KEGG) on significant DE genes.
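The thresholding in this step can be expressed as a small classification rule. The sketch below applies the example cutoffs (FDR < 0.05, |log2FC| > 1) to a few made-up result rows; the function `classify` and the gene values are hypothetical.

```python
def classify(log2fc, padj, lfc_cutoff=1.0, fdr_cutoff=0.05):
    """Label a gene as 'up', 'down', or 'not significant'."""
    # Treat a missing adjusted p-value (reported as NA by some tools) as not significant.
    if padj is None or padj >= fdr_cutoff or abs(log2fc) <= lfc_cutoff:
        return "not significant"
    return "up" if log2fc > 0 else "down"

# (gene, log2 fold change, adjusted p-value) -- hypothetical values
results = [
    ("Gene_A", 3.84, 1.5e-06),
    ("Gene_B", 0.19, 0.089),
    ("Gene_C", -2.11, 0.003),
    ("Gene_D", 0.40, 0.001),   # significant but below the effect-size cutoff
    ("Gene_E", 2.50, None),    # no adjusted p-value available
]

labels = {gene: classify(lfc, padj) for gene, lfc, padj in results}
for gene, label in labels.items():
    print(f"{gene}: {label}")
```

The same labels are what color the points in a volcano plot, so fixing the rule in one place keeps tables and figures consistent.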

Bulk RNA-seq DE Analysis Core Workflow

Key Signaling Pathways in Differential Expression Interpretation

DE analysis often culminates in pathway analysis. Below is a generalized representation of a common signaling pathway (MAPK/ERK) frequently identified in such analyses.

MAPK/ERK Signaling Pathway Simplified

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details essential computational "reagents" – the key software and data resources required to perform a DE analysis.

Table 2: Essential Research Reagent Solutions for Computational DE Analysis

| Item | Category | Function & Explanation |

|---|---|---|

| R (≥4.0.0) / Python (≥3.8) | Programming Language | Core statistical computing (R) or general-purpose (Python) environment for executing analysis packages. |

| Bioconductor | Software Repository | Vast repository of R packages for genomic data analysis (hosts DESeq2, edgeR, limma). |

| Integrated Development Environment (IDE) | Software Tool | Facilitates code writing and debugging (e.g., RStudio for R, PyCharm/VSCode for Python). |

| Reference Genome (FASTA) | Genomic Data | The nucleotide sequence of the organism under study, used for read alignment (e.g., GRCh38 for human). |

| Gene Annotation (GTF/GFF) | Genomic Data | File containing genomic coordinates of genes, transcripts, and exons, essential for quantifying reads per gene. |

| High-Performance Computing (HPC) Cluster or Cloud Access | Computing Infrastructure | Provides the necessary processing power and memory for aligning reads and analyzing large datasets. |

| Sample Metadata (CSV/TSV file) | Experimental Data | Structured text file defining experimental groups, batches, and covariates for the statistical model. |

| Functional Annotation Database | Reference Knowledge | Databases like MSigDB, Gene Ontology, or KEGG for biological interpretation of DE gene lists. |

Step-by-Step: How to Run DE Analysis with Top Tools for Beginners

This guide provides a detailed, hands-on protocol for performing differential gene expression (DGE) analysis with DESeq2. It is framed within a broader thesis evaluating the best differential expression analysis tools for new researchers, where DESeq2 is often recommended for its robust statistical modeling, comprehensive documentation, and strong performance on small sample sizes, despite a steeper initial learning curve compared to some GUI-based tools.

DESeq2 models raw count data using a negative binomial distribution, which accounts for the over-dispersion common in sequencing data. It corrects for library size internally through median-of-ratios size factors, so raw (not pre-normalized) counts are the expected input. The regularized log transformation (rlog) and variance stabilizing transformation (VST) are intended for visualization and clustering, not for the DE test itself. The core test is a Wald test or likelihood ratio test for hypotheses about log2 fold changes.

Step-by-Step Experimental Protocol

1. Prerequisite: Generating a Count Matrix

- Experimental Protocol (RNA-seq): Total RNA is extracted from treated and control samples (e.g., n=3 per group). Poly-A mRNA is selected, cDNA libraries are prepared with unique dual indexing, and sequencing is performed on an Illumina platform (e.g., NovaSeq) to generate 150bp paired-end reads. A minimum depth of 20-30 million reads per sample is standard.

- Bioinformatics Preprocessing: Reads are quality-checked (FastQC), trimmed (Trimmomatic), and aligned to a reference genome (Homo sapiens GRCh38) using a splice-aware aligner (STAR). Gene-level counts are generated using `featureCounts` from the Subread package, quantifying reads overlapping exons in the GTF annotation file.

2. DESeq2 Analysis Workflow

The following R protocol assumes a count matrix (counts) and a sample information DataFrame (colData) with at least a condition column.

The DESeq() function performs estimation of size factors (normalization), estimation of dispersion, and fitting of negative binomial GLMs, followed by Wald testing.
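The size-factor step can be illustrated with the median-of-ratios idea that DESeq2 uses for normalization. What follows is a simplified pure-Python sketch under that assumption, not DESeq2's own code; the function name `size_factors` and the counts are hypothetical.

```python
from math import exp, log
from statistics import median

def size_factors(counts_by_sample):
    """Median-of-ratios size factors; input is one list of gene counts per sample."""
    n_samples = len(counts_by_sample)
    n_genes = len(counts_by_sample[0])
    # Use only genes with a nonzero count in every sample (geometric mean > 0).
    usable = [g for g in range(n_genes) if all(s[g] > 0 for s in counts_by_sample)]
    # Log of the per-gene geometric mean across samples (a pseudo-reference sample).
    log_geo_means = {
        g: sum(log(s[g]) for s in counts_by_sample) / n_samples for g in usable
    }
    # Each sample's factor is the median ratio of its counts to the reference.
    return [
        exp(median(log(s[g]) - log_geo_means[g] for g in usable))
        for s in counts_by_sample
    ]

# Hypothetical data: sample 2 was sequenced twice as deeply as sample 1.
sample_1 = [100, 200, 50, 0, 400]
sample_2 = [200, 400, 100, 10, 800]
factors = size_factors([sample_1, sample_2])
print([round(f, 3) for f in factors])
```

Dividing each sample's counts by its factor puts the samples on a common scale. Taking the median makes the estimate robust to a minority of strongly changed genes.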

4. Extract and Interpret Results

5. Visualization and Reporting

Table 1: Summary of DESeq2 Analysis Output (Hypothetical Experiment)

| Metric | Value | Interpretation |

|---|---|---|

| Total Genes Tested | 18,500 | Genes after pre-filtering |

| Significant Genes (adj. p < 0.05) | 1,250 | 6.8% of tested genes differentially expressed |

| Up-regulated Genes | 720 | Log2FC > 0 |

| Down-regulated Genes | 530 | Log2FC < 0 |

| Median Normalization Size Factor | 0.95 - 1.10 | Indicates balanced library sizes |

Table 2: Top Up-Regulated Genes (Excerpt)

| Gene ID | Base Mean | Log2 Fold Change | lfcSE | p-value | adj. p-value |

|---|---|---|---|---|---|

| Gene_A | 1500.2 | 4.32 | 0.28 | 2.5e-45 | 4.6e-41 |

| Gene_B | 850.6 | 3.87 | 0.31 | 1.8e-32 | 1.7e-28 |

| Gene_C | 2200.8 | 3.65 | 0.25 | 5.3e-38 | 6.5e-34 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for an RNA-seq/DESeq2 Workflow

| Item | Function | Example Product/Category |

|---|---|---|

| RNA Extraction Kit | Isolates high-integrity total RNA | QIAGEN RNeasy Kit |

| mRNA Selection Beads | Enriches for polyadenylated mRNA | NEBNext Poly(A) mRNA Magnetic Isolation Module |

| cDNA Library Prep Kit | Prepares sequencing-ready libraries | Illumina Stranded mRNA Prep |

| Sequencing Platform | Generates raw read data | Illumina NovaSeq 6000 |

| Alignment Software | Maps reads to reference genome | STAR aligner |

| Quantification Tool | Generates count matrix from alignments | featureCounts (Subread) |

| Statistical Software | Performs DGE analysis | R/Bioconductor with DESeq2 package |

Visualization of the DESeq2 Workflow

DESeq2 Analysis Workflow from Reads to Results

Key Statistical Pathway

DESeq2 Statistical Modeling Steps

In the context of evaluating the best differential expression analysis tools for new researchers, edgeR stands out for its robust statistical framework designed specifically for count-based data from RNA-seq experiments with biological replicates. Its power is derived from an empirical Bayes strategy that allows stable estimation of gene-wise dispersion even with a limited number of replicates. This technical guide details a validated workflow, ensuring researchers can reliably identify differentially expressed genes.

Core Statistical Methodology

edgeR models read counts using a negative binomial (NB) distribution: Y_gi ~ NB(mean = μ_gi, variance = μ_gi + φ_g * μ_gi^2), where Y_gi is the count for gene g in sample i, μ_gi is the mean expression level, and φ_g is the gene-specific dispersion. Biological replicate information is critical for estimating φ_g. The workflow uses a conditional likelihood approach to estimate common, trended, and tagwise dispersions, followed by exact tests or generalized linear models (GLMs) for hypothesis testing.
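The variance formula above can be checked with a few lines of arithmetic. The sketch below (plain Python, with hypothetical function names and a dispersion of 0.1 chosen only for illustration) compares the negative binomial variance with the Poisson variance, which equals the mean, and reports the biological coefficient of variation (BCV), which edgeR defines as the square root of the dispersion.

```python
def nb_variance(mu, phi):
    """Variance of a negative binomial count with mean mu and dispersion phi."""
    return mu + phi * mu ** 2

def bcv(phi):
    """Biological coefficient of variation: square root of the dispersion."""
    return phi ** 0.5

phi = 0.1  # illustrative dispersion, not taken from any dataset
for mu in (10, 100, 1000):
    ratio = nb_variance(mu, phi) / mu  # how far the variance exceeds Poisson
    print(f"mean={mu:5d}  NB variance={nb_variance(mu, phi):10.1f}  "
          f"variance/Poisson={ratio:6.1f}")
print(f"BCV = {bcv(phi):.3f}")
```

With this dispersion the variance is 20 at a mean of 10, 1,100 at a mean of 100, and 101,000 at a mean of 1,000: the quadratic term dominates for highly expressed genes, which is why a Poisson model understates their variability.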

Key Experimental Protocols for Cited Studies

Protocol 1: RNA-seq Library Preparation and Sequencing (e.g., Illumina)

- Total RNA Extraction: Isolate total RNA using TRIzol or column-based kits. Assess integrity via RIN > 8.0 (Agilent Bioanalyzer).

- Poly-A Selection: Enrich mRNA using oligo(dT) magnetic beads.

- cDNA Synthesis: Fragment mRNA and synthesize first- and second-strand cDNA.

- Library Construction: Perform end repair, A-tailing, adapter ligation, and PCR enrichment. Validate library size distribution (Bioanalyzer) and quantify via qPCR.

- Sequencing: Pool libraries and sequence on an Illumina platform (e.g., NovaSeq) to a minimum depth of 20-30 million paired-end reads per biological replicate.

Protocol 2: edgeR Analysis with Biological Replicates (Exact Test Workflow)

- Data Input: Load raw, unfiltered count matrices (e.g., from HTSeq-count or featureCounts) into R. Crucially, counts must be from biological replicates (n ≥ 3 per condition).

- Create DGEList Object: Use y <- edgeR::DGEList(counts, group=conditions).

- Filtering: Remove lowly expressed genes: keep <- filterByExpr(y); y <- y[keep, ].

- Normalization: Calculate scaling factors with y <- calcNormFactors(y) (TMM method).

- Dispersion Estimation: Estimate common, trended, and tagwise dispersions: y <- estimateDisp(y).

- Testing: Perform the exact test for the two-group comparison: et <- exactTest(y).

- Result Interpretation: Extract top DEGs: topTags(et, n=Inf, adjust.method="BH"). Genes with FDR < 0.05 are considered significant.
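The FDR values reported in the last step come from the Benjamini-Hochberg (BH) adjustment. As a rough illustration of what that adjustment does, the following plain-Python sketch applies it to a made-up list of p-values; the function name and the numbers are assumptions for this example, not output from edgeR.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the same order as the input."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value to the smallest so that adjusted values
    # never increase as the raw p-value decreases.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical raw p-values for eight genes.
pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205]
fdr = benjamini_hochberg(pvals)
significant = [p for p, q in zip(pvals, fdr) if q < 0.05]
print(fdr)
print(significant)
```

Five of the raw p-values are below 0.05, but only the two smallest remain below 0.05 after adjustment, which is the behavior that keeps the expected share of false discoveries in the reported list under control.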

Data Presentation: Comparative Analysis of Dispersion Estimation Methods

The choice of dispersion estimation method significantly impacts sensitivity and specificity, especially with few replicates.

Table 1: Performance of edgeR Dispersion Methods on Simulated Data (n=4 vs 4 replicates)

| Method | Estimated Dispersion Type | Recommended Use Case | Sensitivity (Power) | False Discovery Rate (FDR) Control |

|---|---|---|---|---|

| estimateDisp | Common, Trended, Tagwise | Standard design (simple group comparisons) | High | Well-controlled |

| estimateGLMCommonDisp + estimateGLMTrendedDisp + estimateGLMTagwiseDisp | Common, Trended, Tagwise | Complex designs (requiring GLM with multiple factors) | High | Well-controlled |

| estimateDisp with robust=TRUE | Robust Trended, Tagwise | Data with outlier genes or extreme counts | Slightly Reduced | Improved in outlier scenarios |

Table 2: Impact of Replicate Number on DEG Detection (Benchmarking Study)

| Replicates per Group (n) | Total Samples | % of True Positives Detected (at FDR 5%) | Median FDR Achieved | Recommended edgeR Model |

|---|---|---|---|---|

| 2 | 4 | ~55% | 8.2% | exactTest(), interpreted with caution |

| 3 | 6 | ~78% | 5.5% | Standard exactTest() |

| 5 | 10 | ~95% | 4.9% | Standard or GLM Quasi-Likelihood |

| 10 | 20 | ~99% | 5.0% | Any model with high confidence |

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Example Product/Technology | Function in RNA-seq/edgeR Workflow |

|---|---|---|

| RNA Isolation Kit | TRIzol Reagent, Qiagen RNeasy Mini Kit | Extracts high-quality, intact total RNA from cells or tissues. Integrity is critical for sequencing. |

| RNA Integrity Assessment | Agilent 2100 Bioanalyzer with RNA Nano Kit | Provides RIN (RNA Integrity Number) to quality-check RNA prior to library prep. RIN > 8 is ideal. |

| Poly-A Selection Beads | NEBNext Poly(A) mRNA Magnetic Isolation Module | Enriches for eukaryotic mRNA by binding the poly-adenylated tail, removing rRNA and other RNA. |

| Library Prep Kit | Illumina Stranded mRNA Prep, Ligation Kit | Converts mRNA into a sequence-ready library with adapters and indexes for multiplexing. |

| Quantification Instrument | Qubit Fluorometer with dsDNA HS Assay Kit | Accurately quantifies final library concentration for pooling and loading onto the sequencer. |

| Sequencing Platform | Illumina NovaSeq 6000, NextSeq 2000 | Generates millions of high-throughput sequencing reads (short fragments) for digital gene counting. |

| Read Alignment Software | STAR, HISAT2 | Aligns raw sequencing reads to a reference genome to assign them to genomic features. |

| Read Counting Tool | featureCounts (Rsubread), HTSeq-count | Generates the raw count matrix by summarizing reads aligned to each gene (exons) for each sample. |

Key Workflow Visualizations

edgeR Analysis Workflow with Biological Replicates

Information Sharing via Empirical Bayes in edgeR

GLM Framework for Complex Designs in edgeR

Within the broader investigation of Best differential expression analysis tools for new researchers, limma-voom stands out as a robust, precise, and statistically powerful framework suitable for both microarray and RNA-seq data. Its versatility and strong performance in controlled benchmarks make it a primary recommendation for new researchers seeking a reliable, well-supported method.

Core Statistical Framework

limma (Linear Models for Microarray Data) employs an empirical Bayes method to moderate the standard errors of estimated log-fold changes. This borrowing of information across genes stabilizes estimates, improving power and reliability, especially in experiments with small sample sizes. The voom (variance modeling at the observational level) transformation extends limma's capabilities to RNA-seq count data by:

- Modeling the mean-variance relationship of log-counts.

- Generating precision weights for each observation.

- Enabling the application of limma's linear modeling and empirical Bayes procedures.
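The first of these steps starts from log-counts per million. A minimal sketch of that transformation is shown below, using the offsets voom applies (0.5 added to each count and 1 added to each library size) so that zero counts remain finite. This is plain Python for illustration only; the function name and toy numbers are assumptions, and the sketch does not fit the mean-variance trend or compute the precision weights.

```python
import math

def voom_log_cpm(counts, lib_sizes):
    """log2 counts-per-million with voom-style offsets.

    counts: list of gene rows, each a list of raw counts (one per sample).
    lib_sizes: effective library size of each sample.
    """
    return [
        [math.log2((c + 0.5) / (lib_sizes[j] + 1.0) * 1e6)
         for j, c in enumerate(row)]
        for row in counts
    ]

# One hypothetical gene measured in two samples of different depth.
toy = voom_log_cpm([[0, 15]], [999_999, 1_999_999])
print(toy)
```

A zero count in a library of about one million reads maps to log2(0.5) = -1 instead of negative infinity, which is what allows a linear model to be fitted to every observation.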

Key Quantitative Performance Benchmarks

Table 1: Comparative Performance of Differential Expression Tools (Simulated Data)

| Tool | Sensitivity (Power) | Specificity (FDR Control) | Runtime (min, 10 samples) | Ease of Use for Beginners |

|---|---|---|---|---|

| limma-voom | 0.89 | 0.95 (Good) | ~2 | Moderate (R required) |

| DESeq2 | 0.87 | 0.96 (Excellent) | ~15 | Moderate |

| edgeR | 0.88 | 0.94 (Good) | ~5 | Moderate |

| SAM | 0.85 | 0.93 (Fair) | <1 | Easy (GUI available) |

Table 2: Real Dataset Concordance (Top 100 DEGs)

| Comparison Tool Pair | Concordance Rate (% Overlap) | Correlation of LogFC |

|---|---|---|

| limma-voom vs. DESeq2 | 78% | 0.97 |

| limma-voom vs. edgeR | 82% | 0.99 |

| DESeq2 vs. edgeR | 85% | 0.98 |

Detailed Experimental Protocol: A Standard limma-voom Workflow

Protocol 1: RNA-seq Differential Expression Analysis

Materials:

- Input Data: A count matrix (genes x samples). Raw counts (e.g., from STAR, HISAT2 + featureCounts) are required.

- Metadata: A data frame detailing experimental design (e.g., treatment groups, batch).

Procedure:

- Data Preparation in R:

Normalization (TMM):

Voom Transformation & Weighting:

Linear Modeling & Empirical Bayes:

Result Extraction:

Visualizing the Workflow and Logic

Title: limma-voom RNA-seq Analysis Workflow

Title: limma-voom's Position in Tool Evaluation Thesis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for a limma-voom Analysis

| Item | Function in Analysis | Example/Note |

|---|---|---|

| R Statistical Environment | The foundational software platform for execution. | Version 4.2.0 or higher. |

| limma R Package | Provides core linear modeling & empirical Bayes functions. | Available on Bioconductor. |

| edgeR R Package | Provides DGEList object, filtering, and TMM normalization. | Used to prepare the input passed to voom(). |

| High-Quality Count Matrix | Input data derived from alignment/quantification. | From tools like Salmon, featureCounts. |

| Experimental Design Metadata | Defines groups and covariates for the design matrix. | Must be meticulously curated. |

| High-Performance Computing (HPC) Access | For processing large datasets (many samples). | Optional for small studies. |

| R Script Editor (IDE) | For writing, documenting, and executing analysis code. | RStudio, VS Code. |

In the context of identifying the best differential expression (DE) analysis tools for new researchers, the challenge often lies in balancing analytical power with accessibility. Command-line tools like DESeq2 and edgeR are industry standards but present a steep learning curve. This whitepaper explores three user-friendly, web-based or graphical workflow alternatives—Galaxy, Partek Flow, and GenePattern—that democratize robust bioinformatics analysis for researchers, scientists, and drug development professionals.

The following table summarizes the key architectural and functional characteristics of each platform, based on current information.

| Feature | Galaxy | Partek Flow | GenePattern |

|---|---|---|---|

| Primary Access Model | Web-based (Public servers or local install) | Commercial, Cloud or On-premise | Web-based (Public server or local install) |

| Core Strength | Open-source, vast tool repository, reproducible workflow system | Intuitive visual interface, powerful visualization, integrated statistics | Specialized in genomics, pre-configured analytical pipelines |

| DE Analysis Workflow | Assembles discrete tools (e.g., HISAT2, featureCounts, DESeq2) | Guided, codeless workflow from alignment to DE and visualization | Uses dedicated modules (e.g., FastQC, STAR, DESeq2) within a pipeline |

| Learning Curve | Moderate (tool selection and parameterization required) | Low (drag-and-drop, highly guided) | Low-Moderate (module-based pipeline construction) |

| Cost | Free / Open Source | Commercial (Subscription-based) | Free / Open Source |

| Best For | Researchers seeking flexibility, reproducibility, and a vast open-source ecosystem | Labs and drug development teams prioritizing ease-of-use, speed, and integrated analytics | Researchers needing standardized, validated genomic analysis pipelines |

Experimental Protocol for Differential Expression Analysis

A standard RNA-Seq differential expression analysis protocol common to all three platforms is detailed below.

1. Sample Preparation & Sequencing:

- Extract total RNA from experimental and control groups (e.g., treated vs. untreated cell lines, n=3 biological replicates per group).

- Assess RNA quality using an Agilent Bioanalyzer (RIN > 8 recommended).

- Prepare sequencing libraries using a kit such as Illumina TruSeq Stranded mRNA.

- Sequence on an Illumina platform to generate 30-40 million paired-end 150bp reads per sample.

2. Data Analysis Workflow: The core computational steps, executed within each platform's interface:

- Quality Control: Assess raw read quality using FastQC.

- Trimming/Filtering: Remove adapter sequences and low-quality bases with Trimmomatic or Cutadapt.

- Alignment: Map filtered reads to a reference genome (e.g., GRCh38) using a splice-aware aligner (HISAT2, STAR).

- Quantification: Generate gene-level counts using featureCounts or HTSeq-count.

- Differential Expression: Perform statistical testing using DESeq2 or edgeR (integrated within each platform) to identify genes with significant expression changes (adjusted p-value < 0.05, |log2 fold change| > 1).

- Interpretation: Conduct gene ontology (GO) enrichment or pathway analysis (KEGG, GSEA) on significant gene lists.

3. Validation: Confirm key DE findings via orthogonal methods like qRT-PCR.

Platform Workflow Visualization

Diagram Title: Conceptual Workflow Comparison Between Platform Types

The Scientist's Toolkit: Essential Research Reagent Solutions

| Reagent / Material | Function in RNA-Seq DE Analysis |

|---|---|

| TRIzol Reagent | A monophasic solution of phenol and guanidine isothiocyanate for the effective isolation of high-quality total RNA from cells and tissues. |

| DNase I (RNase-free) | Enzymatically degrades genomic DNA contamination during RNA purification to prevent false positives in subsequent analyses. |

| Illumina TruSeq Stranded mRNA Kit | Library preparation kit for enriching polyadenylated RNA and generating strand-specific sequencing libraries compatible with Illumina platforms. |

| Agilent High Sensitivity DNA Kit | Used with a Bioanalyzer instrument to precisely assess the quality and fragment size distribution of sequencing libraries prior to pooling and sequencing. |

| PhiX Control v3 | A spiked-in sequencing control for monitoring lane performance, cluster density, and calculation of matrix/phasing during Illumina run setup. |

| SYBR Green Master Mix | A fluorescent dye used in quantitative RT-PCR (qRT-PCR) for validating the expression levels of differentially expressed genes identified from RNA-Seq data. |

DE Analysis Signaling Pathway

Diagram Title: From Stimulus to Differential Gene Expression and Phenotype

Within the broader thesis evaluating Best differential expression analysis tools for new researchers, mastering the visualization of results is paramount. The analytical output from tools like DESeq2, edgeR, or limma-voom is only as impactful as its presentation. This guide details the creation of two cornerstone visualizations: the volcano plot (for statistical significance vs. magnitude of change) and the heatmap (for expression patterns across samples and genes). Publication-ready figures must be both statistically rigorous and visually clear.

Foundational Differential Expression Analysis Workflow

The generation of data for these visualizations follows a standardized computational protocol.

Experimental Protocol: Core Differential Expression Analysis

- Data Preparation: Load raw count data (e.g., from RNA-Seq) into R or Python. Annotate samples with experimental conditions (e.g., Control vs. Treated).

- Quality Control: Filter genes with very low counts across all samples. Perform normalization for sequencing depth and RNA composition (e.g., using the median of ratios method in DESeq2 or the trimmed mean of M-values (TMM) in edgeR).

- Model Fitting & Statistical Testing: Apply a generalized linear model (e.g., in DESeq2 or edgeR) to estimate dispersion and test for differential expression. For microarray or log-transformed data, use Limma's linear models with empirical Bayes moderation.

- Result Extraction: Extract a results table containing for each gene: mean expression, log2 fold change, p-value, and adjusted p-value (e.g., Benjamini-Hochberg FDR).

- Visualization: Create volcano plots and heatmaps from the results table for interpretation and publication.

Diagram 1: Differential expression analysis workflow.

Creating a Volcano Plot

A volcano plot displays the negative log10-transformed p-values against the log2 fold change for each gene.
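Before any plotting library is involved, the coordinates and colour classes of a volcano plot can be derived directly from the results table. The sketch below is a plain-Python illustration; the function name, the tuple layout, and the four example genes are hypothetical, and the cutoffs (adjusted p-value < 0.05, |log2 fold change| > 1) are one common choice rather than a fixed rule.

```python
import math

def volcano_points(results, padj_cutoff=0.05, lfc_cutoff=1.0):
    """Turn (gene, log2fc, pvalue, padj) rows into plot-ready points.

    Returns (gene, x, y, label) where x is the log2 fold change,
    y is -log10(p-value), and label is 'up', 'down', or 'ns'.
    Rows with a p-value of exactly 0 would need special handling.
    """
    points = []
    for gene, lfc, pvalue, padj in results:
        y = -math.log10(pvalue)
        if padj < padj_cutoff and lfc > lfc_cutoff:
            label = "up"
        elif padj < padj_cutoff and lfc < -lfc_cutoff:
            label = "down"
        else:
            label = "ns"
        points.append((gene, lfc, y, label))
    return points

# Made-up results rows for illustration.
example = [
    ("g1", 2.4, 1e-8, 1e-6),   # large, significant increase
    ("g2", -1.7, 2e-5, 4e-4),  # large, significant decrease
    ("g3", 0.3, 1e-4, 1e-3),   # significant but small effect
    ("g4", 3.0, 0.2, 0.4),     # large effect, not significant
]
points = volcano_points(example)
for p in points:
    print(p)
```

Classifying on the adjusted p-value while plotting the raw p-value on the y-axis is a common convention; whichever is chosen should be stated in the figure legend.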

Experimental Protocol: Generating a Volcano Plot in R

Creating a Publication-Ready Heatmap

A heatmap visualizes expression levels of key genes (e.g., significant DE genes) across all samples, often with clustering.
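Heatmaps of this kind are usually drawn from values scaled per gene, so that colours reflect relative change across samples and not absolute abundance. The following plain-Python sketch shows row-wise z-scoring under that assumption; the text above does not prescribe a scaling method, and the function name and example matrix are hypothetical.

```python
from statistics import mean, stdev

def row_zscores(matrix):
    """Scale each gene (row) to mean 0 and sample standard deviation 1."""
    scaled = []
    for row in matrix:
        m = mean(row)
        s = stdev(row)
        if s == 0:
            # A gene with no variation carries no pattern; show it as flat.
            scaled.append([0.0] * len(row))
        else:
            scaled.append([(x - m) / s for x in row])
    return scaled

# Hypothetical log-expression values: 3 genes x 4 samples
# (two controls followed by two treated samples).
expr = [
    [8.0, 8.2, 10.1, 10.3],  # higher in treated samples
    [2.0, 2.1, 1.9, 2.0],    # lowly expressed, little change
    [5.0, 5.0, 5.0, 5.0],    # constant
]
z = row_zscores(expr)
for row in z:
    print([round(v, 2) for v in row])
```

Scaling should be applied to transformed values (for example VST or log-CPM), not to raw counts, because a handful of very highly expressed genes would otherwise dominate the colour range.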

Experimental Protocol: Generating a Clustered Heatmap in R

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 1: Key reagents and tools for differential expression analysis.

| Item | Function |

|---|---|

| RNA Extraction Kit (e.g., TRIzol, column-based kits) | Isolates high-quality total RNA from cells or tissues, free of genomic DNA and contaminants. |

| High-Throughput Sequencer (Illumina NovaSeq, NextSeq) | Generates millions of short cDNA reads for transcriptome quantification (RNA-Seq). |

| Microarray Platform (Affymetrix, Agilent) | Alternative to RNA-Seq for hybridizing fluorescently-labeled cDNA to gene probes. |

| DESeq2 (R/Bioconductor Package) | Statistical software for analyzing RNA-Seq count data, using shrinkage estimation for fold changes and dispersion. |

| edgeR (R/Bioconductor Package) | Statistical package for differential expression analysis of digital gene expression data, using empirical Bayes methods. |

| Limma (R/Bioconductor Package) | A package for analyzing gene expression data from microarrays or RNA-Seq (with voom transformation), using linear models. |

| ggplot2 (R Package) | A versatile and powerful plotting system based on the grammar of graphics, used to construct volcano plots and more. |

| pheatmap / ComplexHeatmap | Specialized R packages for creating annotated, clustered heatmaps with fine control over aesthetics. |

| Benjamini-Hochberg Procedure | A statistical method implemented in analysis tools to control the False Discovery Rate (FDR) when testing thousands of genes. |

Comparative Analysis of Differential Expression Tools

Table 2: Comparison of popular differential expression analysis tools for new researchers, as featured in the broader thesis.

| Feature | DESeq2 | edgeR | Limma (with voom) |

|---|---|---|---|

| Primary Data Type | Raw RNA-Seq counts | Raw RNA-Seq counts | Microarray intensities or RNA-Seq log2(CPM) |

| Core Statistical Model | Negative Binomial GLM with shrinkage | Negative Binomial GLM with empirical Bayes | Linear model with empirical Bayes moderation |

| Normalization Method | Median of ratios | Trimmed Mean of M-values (TMM) | Quantile (array) or TMM + voom transformation (RNA-Seq) |

| Strength | Robust with low replicates, conservative | Powerful for complex designs, flexible | Very fast, excellent for large datasets & complex designs |

| Ease for Beginners | High (streamlined workflow) | Medium | Medium-High (requires understanding of voom step) |

| Typical Output | log2FC, p-value, adjusted p-value (FDR) | log2FC, p-value, FDR | log2FC, moderated t-statistic, p-value, FDR |

Diagram 2: Tool selection logic for new researchers.

Solving Common DE Analysis Problems: Optimization Tips for Accurate Results

Within the broader investigation of the best differential expression (DE) analysis tools for new researchers, a critical and pervasive challenge is the statistical analysis of experiments with inherently low replicate counts. Constraints in budget, sample availability (e.g., rare patient biopsies), or ethical considerations (e.g., animal use) often limit experimental design. This guide details robust strategies for navigating the high variance and reduced statistical power associated with small sample sizes, enabling more reliable biological inference.

The Statistical Challenge of Low Replicates

Low replicates (typically n=2 or 3 per condition) leave few residual degrees of freedom, so per-gene variance estimates are noisy and it becomes difficult to distinguish true biological signal from noise. Standard DE tools like DESeq2 and edgeR mitigate this by shrinking gene-wise dispersion estimates toward a trend shared across genes, but shrinkage cannot fully replace the information that additional replicates would provide. The result is reduced power (more false negatives) and, for genes whose variance is underestimated, more false positives.

Core Strategies for Robust Analysis

Experimental Design & Pre-processing

- Prioritize Quality: With limited n, technical variance must be minimized. Rigorous RNA quality control (RIN > 8), library preparation in a single batch, and deep sequencing are non-negotiable.

- Incorporate Controls: Spike-in controls (e.g., ERCC RNA) can help distinguish technical from biological variance.

- Strategic Pooling: Where applicable, pooling multiple biological units prior to RNA extraction can provide a cost-effective way to estimate population-level effects, though it sacrifices information on individual variation.

Bioinformatics Tools & Statistical Adjustments

Specialized tools and methods have been developed to handle low-replicate scenarios more gracefully than standard workflows.

Table 1: Comparison of DE Analysis Tools Suited for Low Replicate Counts

| Tool/Method | Core Approach | Key Advantage for Low n | Major Limitation |

|---|---|---|---|

| limma with voom | Linear modeling with precision weights; treats data as continuous. | Leverages information across genes for variance estimation; robust for n ≥ 2. | Assumes normal distribution of log-CPMs; performance drops with extreme n=2. |

| edgeR with robust=TRUE | Empirical Bayes moderation of gene-wise dispersions towards a trended mean. | "Robust" option protects against outlier inflations, beneficial for small studies. | Relies on a common dispersion trend; may be unstable if few genes are DE. |

| DESeq2 with apeglm LFC shrinkage | Bayesian shrinkage of log2 fold changes (LFCs) using adaptive t prior. | Reduces false positive LFCs; provides more biologically realistic effect sizes. | Does not directly solve variance estimation with very low df. |

| NOISeq | Non-parametric method using data simulation and noise distribution modeling. | Does not require replicates; uses biological CV or artificial replicates. | Lower statistical power; control of false discovery rate is less formal. |

| sleuth (for RNA-seq) | Models technical and biological variance using bootstrapping on kallisto outputs. | Incorporates uncertainty in transcript abundance estimates. | Specifically for quantification data from kallisto; workflow is less flexible. |

Integrative Analysis & External Data Utilization

- Leverage Public Data: Use datasets from repositories like GEO or ArrayExpress to inform priors (e.g., expected variance for a gene) or to validate findings in a larger, independent cohort.

- Pathway & Gene Set Analysis: Moving from single-gene to gene-set (e.g., GSEA, GSVA) or pathway-level analysis can aggregate weak signals across related genes, increasing robustness.

- Cross-Validation: If possible, split samples for discovery and validation, even within a tiny cohort, to avoid overfitting.

Detailed Experimental Protocol: A Robust Low-n RNA-seq Workflow

Protocol Title: Integrated RNA-seq Analysis for Differential Expression with Biological Duplicates.

1. Sample Preparation & Sequencing:

- Isolate total RNA from four biological samples (2 Condition A, 2 Condition B).

- Assess RNA integrity using a Bioanalyzer. Only proceed if all RIN > 8.5.

- Perform ribosomal RNA depletion and library construction in a single, standardized batch to minimize batch effects.

- Sequence on an Illumina platform to a minimum depth of 40 million paired-end 150bp reads per sample.

2. Bioinformatics Processing:

- Quality Control & Trimming: Use FastQC for raw read QC and Trim Galore! to remove adapters and low-quality bases.

- Alignment & Quantification: Align reads to the reference genome/transcriptome using the STAR aligner. Generate gene-level read counts using featureCounts.

- Normalization: Apply Transcripts Per Million (TPM) normalization for exploratory analysis. For DE, counts will be internally normalized by tools (e.g., TMM in edgeR).

3. Differential Expression Analysis:

- Filter lowly expressed genes (require > 10 counts in at least 2 samples).

- Run three parallel DE analyses: limma-voom with quality weights, edgeR (glmQLFit) with robust=TRUE, and DESeq2 with apeglm LFC shrinkage.

- Define a consensus gene list: Consider genes with FDR < 0.1 in at least 2 of the 3 tools as high-confidence candidates.

- Perform LFC shrinkage using apeglm on the DESeq2 results for interpretation.
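The consensus rule in this step (FDR < 0.1 in at least 2 of the 3 tools) is simple to express once each tool's adjusted p-values have been exported. The sketch below is a plain-Python illustration; the dictionary layout, function name, and FDR values are hypothetical and do not come from any real analysis.

```python
def consensus_genes(fdr_by_tool, fdr_cutoff=0.1, min_tools=2):
    """Genes called significant by at least `min_tools` tools.

    fdr_by_tool: {tool_name: {gene_id: adjusted_p_value}}
    """
    votes = {}
    for tool, fdr in fdr_by_tool.items():
        for gene, q in fdr.items():
            if q < fdr_cutoff:
                votes[gene] = votes.get(gene, 0) + 1
    return sorted(g for g, v in votes.items() if v >= min_tools)

# Made-up adjusted p-values for four genes from three tools.
fdr_by_tool = {
    "limma_voom": {"g1": 0.01, "g2": 0.20, "g3": 0.08, "g4": 0.50},
    "edgeR":      {"g1": 0.03, "g2": 0.05, "g3": 0.15, "g4": 0.60},
    "DESeq2":     {"g1": 0.02, "g2": 0.09, "g3": 0.30, "g4": 0.09},
}
consensus = consensus_genes(fdr_by_tool)
print(consensus)
```

Here g1 passes in all three tools and g2 in two, so both are retained, while g3 and g4 are each supported by a single tool and are dropped. A gene missing from one tool's table (for example because of different filtering) simply receives no vote from that tool.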

4. Validation & Downstream Analysis:

- Validate top DE genes via RT-qPCR on the same original samples (if material remains).

- Perform over-representation analysis (ORA) or Gene Set Variation Analysis (GSVA) on the consensus gene list to identify affected pathways.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Low-Replicate RNA-seq Studies

| Item | Function & Rationale |

|---|---|

| Agilent Bioanalyzer | Provides precise RNA Integrity Number (RIN) to ensure only high-quality samples proceed, critical when n is low. |

| ERCC RNA Spike-In Mix | A set of exogenous RNA controls added to lysates to monitor technical performance and normalize for technical variation. |

| Illumina Stranded Total RNA Prep | A robust, single-batch compatible library prep kit that includes ribosomal RNA depletion for mRNA enrichment. |

| RNase Inhibitors | Essential during RNA extraction and library prep to prevent degradation of limited samples. |

| Unique Dual Indexes (UDIs) | Enable multiplexing of all samples in a single sequencing lane, eliminating lane-effect batch variance. |

| KAPA Library Quantification Kit | Accurate qPCR-based quantification of sequencing libraries ensures balanced representation of all samples. |

No analytical tool can fully compensate for a poorly designed experiment. However, by combining meticulous experimental practice, leveraging specialized statistical tools that share information across genes or incorporate prior knowledge, and shifting interpretation to a systems level, researchers can derive meaningful and reproducible insights even from studies with low replicate counts. This pragmatic approach is a fundamental component in the evaluation of differential expression analysis tools for new researchers navigating resource-constrained environments.

Within the context of identifying the best differential expression (DE) analysis tools for new researchers, the paramount first step is the rigorous preprocessing of raw data. No downstream computational tool, no matter how sophisticated, can yield reliable biological insights from confounded data. This guide details the essential techniques for addressing batch effects and outliers—the two most pervasive and damaging technical artifacts in transcriptomic and other high-throughput biological data.

Chapter 1: Understanding Batch Effects

Batch effects are systematic non-biological variations introduced when samples are processed in different groups (batches). These can arise from reagent lots, personnel, sequencing runs, or instrument calibration.

Quantitative Impact of Batch Effects

Table 1: Common Sources and Magnitude of Batch Effects

| Source of Variation | Typical Magnitude (PVE*) | Primary Impact |

|---|---|---|

| Biological Condition | 15-40% | Signal of interest |

| Sequencing Lane/Batch | 10-30% | Major confounding |

| RNA Extraction Date | 5-20% | Significant confounding |

| Library Prep Kit Lot | 5-15% | Moderate confounding |

| Technician | 3-10% | Minor to moderate confounding |

*PVE: Percent Variance Explained, as observed in PCA of unnormalized data.

Chapter 2: Detection and Diagnostics

Principal Component Analysis (PCA)

PCA is the primary diagnostic. Batch effects often dominate the first few principal components.

Protocol:

- Input: Log-transformed (e.g., log2(CPM+1)) expression matrix.

- Center the data: Subtract the mean expression of each gene across all samples.

- Compute covariance matrix: Calculate the n x n covariance matrix.

- Perform eigen decomposition: Extract eigenvalues and eigenvectors.

- Project data: Plot samples in the space of PC1 vs. PC2, color-coding by batch and condition.
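The protocol above can be sketched without any numerical library for a small matrix. The example below is an illustrative plain-Python implementation that uses power iteration with deflation to obtain the first two components; it works on the sample-by-sample cross-product matrix, which is proportional to the covariance matrix and therefore gives the same sample layout and percent variance explained. All function names and the toy data are assumptions, and a real analysis would normally rely on an established PCA routine.

```python
import math

def center_genes(log_expr):
    """Subtract each gene's mean across samples (rows are genes)."""
    centered = []
    for row in log_expr:
        m = sum(row) / len(row)
        centered.append([x - m for x in row])
    return centered

def sample_cross_products(centered):
    """n x n matrix of sample-by-sample cross-products over all genes."""
    n = len(centered[0])
    return [[sum(row[i] * row[j] for row in centered) for j in range(n)]
            for i in range(n)]

def top_eigenpair(a, iterations=500):
    """Leading eigenvalue and unit eigenvector via power iteration."""
    n = len(a)
    v = [float(i + 1) for i in range(n)]
    norm = math.sqrt(sum(x * x for x in v))
    v = [x / norm for x in v]
    for _ in range(iterations):
        w = [sum(a[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm < 1e-12:
            break
        v = [x / norm for x in w]
    eigenvalue = sum(v[i] * a[i][j] * v[j] for i in range(n) for j in range(n))
    return eigenvalue, v

def first_two_pcs(log_expr):
    """Return ((PC1 scores, PVE1), (PC2 scores, PVE2)) for the samples."""
    a = sample_cross_products(center_genes(log_expr))
    n = len(a)
    total = sum(a[i][i] for i in range(n))
    lam1, v1 = top_eigenpair(a)
    # Deflation: remove PC1 so that power iteration converges to PC2.
    deflated = [[a[i][j] - lam1 * v1[i] * v1[j] for j in range(n)]
                for i in range(n)]
    lam2, v2 = top_eigenpair(deflated)
    scores1 = [math.sqrt(max(lam1, 0.0)) * x for x in v1]
    scores2 = [math.sqrt(max(lam2, 0.0)) * x for x in v2]
    return (scores1, lam1 / total), (scores2, lam2 / total)

# Toy log-expression matrix (made up): samples 1-2 are batch A, 3-4 batch B.
toy = [
    [5.0, 5.2, 8.0, 8.1],
    [3.0, 3.1, 6.0, 6.2],
    [7.0, 6.8, 7.1, 6.9],
]
(pc1, pve1), (pc2, pve2) = first_two_pcs(toy)
print([round(s, 2) for s in pc1], round(pve1, 3))
```

In this toy matrix the two batches fall on opposite sides of PC1 and that component explains most of the variance, which is the pattern that signals a dominant batch effect when the colouring by batch lines up with it.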

Visualization with Hierarchical Clustering

Heatmaps with dendrograms can reveal batch-driven sample clustering.

Chapter 3: Normalization Techniques for Batch Correction

Linear Model-Based Methods: ComBat and ComBat-Seq

ComBat uses an empirical Bayes framework to adjust for known batch covariates.

Experimental Protocol for ComBat-Seq (for count data):

- Specify model: Define a design matrix for the biological condition of interest.

- Estimate parameters: For each gene and batch, estimate location (mean) and scale (variance) parameters using an empirical Bayes approach, borrowing information across genes.

- Adjust the data: Apply the estimated parameters to adjust counts towards the global mean, preserving integer counts for downstream DE tools like DESeq2 or edgeR.

- Output: A batch-corrected integer count matrix.

Distribution Alignment: Quantile Normalization

Forces all sample distributions to be identical.

Protocol:

- Sort: For each sample (column), sort expression values in ascending order.

- Compute reference distribution: Calculate the mean expression value at each rank across all samples.

- Replace: Replace each sample's sorted values with the reference distribution values.

- Re-order: Map the normalized sorted values back to their original gene order.
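The four steps above translate almost line for line into code. The following plain-Python sketch is illustrative only: the function name and toy matrix are hypothetical, and tied values are ranked in input order instead of being averaged as a production implementation might do.

```python
def quantile_normalize(matrix):
    """Quantile-normalize a genes x samples matrix (list of rows)."""
    n_genes = len(matrix)
    n_samples = len(matrix[0])
    columns = [[matrix[g][s] for g in range(n_genes)] for s in range(n_samples)]

    # Step 1 (sort): record, per sample, which gene holds each rank.
    orders = [sorted(range(n_genes), key=lambda g: col[g]) for col in columns]
    sorted_cols = [[col[g] for g in order] for col, order in zip(columns, orders)]

    # Step 2 (reference): mean value at each rank across samples.
    reference = [sum(sc[r] for sc in sorted_cols) / n_samples
                 for r in range(n_genes)]

    # Steps 3-4 (replace and re-order): write the reference value for each
    # rank back into the gene that held that rank in the sample.
    normalized = [[0.0] * n_samples for _ in range(n_genes)]
    for s, order in enumerate(orders):
        for rank, g in enumerate(order):
            normalized[g][s] = reference[rank]
    return normalized

# Made-up expression values: 4 genes x 3 samples, no ties.
toy = [
    [5.0, 4.0, 3.0],
    [2.0, 1.0, 4.0],
    [3.0, 6.0, 6.0],
    [4.0, 2.0, 8.0],
]
qn = quantile_normalize(toy)
for row in qn:
    print([round(v, 3) for v in row])
```

After normalization every sample contains exactly the same set of values; only the assignment of values to genes differs between samples. That forced identity is also why the method can erase genuine global differences between conditions.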

Performance Comparison of Methods

Table 2: Comparison of Batch Correction Methods

| Method | Input Data Type | Preserves Biological Variance | Handles Large Batch Effects | Suitability for RNA-Seq |

|---|---|---|---|---|

| ComBat | Continuous (Microarray, log-CPM) | Moderate | Excellent | Good (post-voom) |

| ComBat-Seq | Integer Counts | High | Excellent | Excellent (direct) |

| limma removeBatchEffect | Continuous | Moderate | Good | Good (post-voom) |

| Quantile Normalization | Continuous | Low (over-corrects) | Good | Poor (for DE) |

| sva (Surrogate Variable Analysis) | Continuous | High | Excellent for unknown | Good (post-voom) |

Chapter 4: Outlier Detection and Handling

Outliers can be sample-wide (failed experiments) or gene-specific (measurement artifacts).

Sample-Level Outlier Detection

Protocol using PCA and Distance:

- Perform PCA on normalized data.

- Retain the first 3-5 PCs.

- For each sample, compute its Mahalanobis distance from the center of all samples in this PC space.

- Calculate the median and the median absolute deviation (MAD) of these distances.

- Flag samples with distances > median + 3*MAD for review.
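The flagging rule at the end of this protocol can be sketched as follows. The example assumes the per-sample distances in PC space have already been computed upstream; the function name, sample labels, and distance values are hypothetical, and the MAD is used unscaled (without the 1.4826 consistency constant).

```python
from statistics import median

def flag_outliers(distances, n_mads=3.0):
    """Flag samples whose distance exceeds median + n_mads * MAD.

    distances: {sample_name: distance_in_PC_space}
    Returns (threshold, sorted list of flagged sample names).
    """
    values = list(distances.values())
    center = median(values)
    mad = median(abs(d - center) for d in values)
    threshold = center + n_mads * mad
    flagged = sorted(name for name, d in distances.items() if d > threshold)
    return threshold, flagged

# Made-up distances for six samples; S6 sits far from the rest.
distances = {"S1": 1.0, "S2": 1.2, "S3": 0.9, "S4": 1.1, "S5": 1.3, "S6": 4.8}
threshold, flagged = flag_outliers(distances)
print(round(threshold, 2), flagged)
```

Because both the median and the MAD are insensitive to a single extreme value, the threshold stays close to the bulk of the samples and S6 is flagged. Flagged samples should be reviewed against lab records before any decision to exclude them.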

Gene-Specific Outlier Detection (e.g., for DE)

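Tools like DESeq2 compute Cook's distance for every count and use it to flag genes whose fit is driven by a single outlying sample. Such genes have their p-values set to NA or, when enough replicates are available, the outlying count is replaced before the model is refitted.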

Chapter 5: Integrated Workflow for New Researchers

A step-by-step pipeline is critical for robust analysis.

Diagram 1: Integrated Data Cleaning and Normalization Workflow

Chapter 6: The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Reliable Data Generation

| Item/Reagent | Function in Preventing Artifacts | Notes for Best Practice |

|---|---|---|

| RNA Stabilization Reagent (e.g., RNAlater) | Preserves RNA integrity at collection, reducing degradation batch effects. | Aliquot to avoid freeze-thaw batch effects. |

| Validated, Single-Lot Reagent Kits | Uses same lot # for library prep across entire study to minimize technical variation. | Plan study timeline to allow purchase of single large lot. |

| External RNA Controls Consortium (ERCC) Spike-Ins | Synthetic RNAs added to lysate to monitor technical performance and normalize across runs. | Crucial for distinguishing biological from technical variance. |

| UMI (Unique Molecular Identifier) Adapters | Tags each mRNA molecule to correct for PCR amplification bias and noise. | Essential for single-cell RNA-seq; beneficial for bulk. |

| Interplate Calibration Samples | Same biological sample(s) included in every processing batch (e.g., every sequencing lane). | Provides direct measure of inter-batch variation for correction. |

| Automated Nucleic Acid Quantitation (e.g., Fragment Analyzer) | Standardizes input amounts using precise fluorescence, not UV absorbance. | Reduces variation from inaccurate concentration measurements. |

For the new researcher evaluating differential expression analysis tools, the most critical lesson is that the quality of the input data dictates the validity of the output. Tools like DESeq2, edgeR, and limma-voom are powerful, but their performance is contingent upon the diligent application of the normalization and cleaning techniques described herein. A robust, upfront investment in diagnosing batch effects and outliers is the non-negotiable foundation of any credible transcriptomic study.

Within the critical framework of identifying the best differential expression analysis tools for new researchers, mastering the concepts of dispersion and variance is non-negotiable. Accurate modeling of these parameters dictates the reliability of identifying genes or transcripts truly associated with a biological condition. This guide delves into the technical challenges of dispersion estimation and variance stabilization, providing a roadmap for researchers and drug development professionals to ensure their statistical models faithfully represent their high-throughput sequencing data.

Core Statistical Concepts: From Variance to Dispersion

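In RNA-seq data analysis, variance measures the spread of a gene's counts around their mean. For count data, the variance is not independent of the mean: it grows as the mean grows. Under the negative binomial model used by DESeq2 and edgeR, the dispersion (α) quantifies this mean-variance relationship through \( \mathrm{Var} = \mu + \alpha\mu^{2} \), where μ is the mean. The first term is the Poisson (sampling) variance and the second captures the extra variability between replicates. Proper estimation is crucial: under-estimation increases false positives, while over-estimation reduces statistical power.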
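A short worked example may make the formula concrete. The sketch below evaluates Var = μ + αμ² for a hypothetical gene and inverts the same formula to get a naive method-of-moments dispersion from replicate counts. The function names and numbers are illustrative assumptions; DESeq2 and edgeR use likelihood-based estimates with shrinkage rather than this simple estimator.

```python
import math
import statistics


def nb_variance(mu, alpha):
    """Negative binomial variance for mean `mu` and dispersion `alpha`."""
    return mu + alpha * mu ** 2


def moment_dispersion(counts):
    """Naive method-of-moments dispersion: (var - mean) / mean^2, floored at 0."""
    mu = statistics.mean(counts)
    var = statistics.variance(counts)
    return max((var - mu) / mu ** 2, 0.0)


# Hypothetical gene with mean count 100 and dispersion 0.1:
# 100 + 0.1 * 100^2 = 1100, versus 100 under a pure Poisson model.
var_nb = nb_variance(100, 0.1)
var_poisson = nb_variance(100, 0.0)
print("NB variance:", var_nb, "Poisson variance:", var_poisson)

# The square root of the dispersion is the coefficient of variation
# contributed by replicate-to-replicate differences.
print("Biological CV:", round(math.sqrt(0.1), 3))

# Hypothetical replicate counts for one gene.
alpha_hat = moment_dispersion([80, 95, 100, 110, 115])
print("Method-of-moments dispersion:", alpha_hat)
```

With only a handful of replicates, a per-gene estimate like `alpha_hat` is very noisy, which is the motivation for the shrinkage methods compared in the next section.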

Quantitative Comparison of Dispersion Estimation Methods

The following table summarizes the performance characteristics of core estimation methods used by popular tools.

Table 1: Comparison of Dispersion Estimation Methods in Differential Expression Tools

| Method | Used By (Example Tools) | Principle | Strengths | Limitations |

|---|---|---|---|---|

| Tagwise (Gene-estimate) | Early edgeR | Estimates dispersion per gene independently. | Simple, no assumptions about prior. | Highly unstable with low replicates; high false positive rate. |

| Conditional Maximum Likelihood (CML) | edgeR (classic) | Conditions on the total count for each gene to remove the mean as a nuisance parameter when estimating dispersion. | Accurate for experiments with few replicates. | Can be computationally intensive for large datasets. |

| Empirical Bayes (Shrinkage) | edgeR (GLM), DESeq2 | Shrinks gene-wise estimates towards a common or trended prior. | Stabilizes estimates, improves power with few replicates. | Relies on the choice of prior distribution. |

| Mean-Variance Trend | DESeq2 | Fits a smooth trend of dispersion as a function of mean. | Accounts for dependence of dispersion on expression level. | Trend assumption may not fit all datasets. |

| Generalized Linear Model (GLM) with Quasi-Likelihood | edgeR (QL) | Estimates a quasi-likelihood dispersion factor per gene. | Robust to variability between biological replicates. | Requires more biological replicates for reliability. |

Experimental Protocols for Validation

Validating dispersion estimates is a critical step in any differential expression workflow.

Protocol 1: Evaluating Mean-Variance Fit

Objective: Visually assess whether the tool's fitted dispersion trend matches the observed variance in your data.

- Normalize your raw count data using the tool's recommended method (e.g., TMM for edgeR, median-of-ratios for DESeq2).

- Fit the mean-variance model using the tool's standard pipeline.

- Generate a diagnostic plot of gene-wise variance (or square root of variance = standard deviation) versus mean expression.

- Superimpose the fitted trend line (e.g., the dispersion-mean trend in DESeq2, or the sqrt(variance) trend in voom).

- Interpretation: The majority of data points should scatter evenly around the fitted trend. Systematic deviations indicate poor model fit.

Protocol 2: Testing for Overdispersion in Model Residuals

Objective: Statistically confirm that the chosen model adequately accounts for biological variability.

- Perform differential expression analysis using your chosen tool and model design.

- Extract the residuals from the fitted model (e.g., deviance residuals from edgeR's GLM).

- Conduct a goodness-of-fit test, such as the Pearson test for overdispersion, on the residuals.

- Calculate the ratio of the sum of squared Pearson residuals to the residual degrees of freedom. A ratio significantly >1 suggests residual overdispersion not captured by the model.

- Remediation: Consider adding covariates, checking for outliers, or using a more robust method like quasi-likelihood.
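The ratio in the protocol above can be sketched for a single gene as follows. This is an illustrative calculation under stated assumptions: the fitted means and the dispersion are taken as given (in practice they come from the fitted model), the negative binomial variance μ + αμ² is used to scale the residuals, and the function name and counts are hypothetical.

```python
def pearson_dispersion_ratio(observed, fitted, alpha, n_params):
    """Sum of squared Pearson residuals divided by residual degrees of freedom.

    Each residual is scaled by the negative binomial variance
    mu + alpha * mu^2; alpha = 0 gives the Poisson case.
    """
    if len(observed) != len(fitted):
        raise ValueError("observed and fitted must have the same length")
    resid_df = len(observed) - n_params
    if resid_df <= 0:
        raise ValueError("need more observations than model parameters")
    chi_square = sum(
        (y - mu) ** 2 / (mu + alpha * mu ** 2)
        for y, mu in zip(observed, fitted)
    )
    return chi_square / resid_df


# Hypothetical counts for one gene: three control and three treated samples,
# with fitted values equal to the two group means (two model parameters).
observed = [5, 12, 19, 20, 36, 52]
fitted = [12, 12, 12, 36, 36, 36]

ratio_poisson = pearson_dispersion_ratio(observed, fitted, alpha=0.0, n_params=2)
ratio_nb = pearson_dispersion_ratio(observed, fitted, alpha=0.3, n_params=2)
print("Poisson ratio:", round(ratio_poisson, 3))
print("NB ratio (alpha = 0.3):", round(ratio_nb, 3))
```

In this toy case the Poisson ratio is far above 1 while the negative binomial ratio is not, which is the pattern expected when the extra dispersion term is absorbing the replicate variability. With so few degrees of freedom a single-gene ratio is noisy, so in practice the statistic is more informative when examined across many genes.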

Visualizing the Analysis Workflow and Key Relationships

Diagram 1: DE Analysis Workflow with Dispersion Core

Diagram 2: Variance Composition and Dispersion Role

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for RNA-seq Validation Experiments

| Item | Function in Validation | Example/Note |

|---|---|---|

| External RNA Controls Consortium (ERCC) Spike-in Mix | Distinguishes technical from biological variation. Added to lysate before library prep to monitor pipeline fidelity. | Thermo Fisher Scientific Cat# 4456740 |

| UMI (Unique Molecular Identifier) Adapters | Corrects for PCR amplification bias, providing a more accurate count of initial mRNA molecules. | Various NGS library prep kits (e.g., Illumina TruSeq). |

| Digital PCR (dPCR) System | Provides absolute, replicate-level quantification of selected DE gene targets for orthogonal validation. | Bio-Rad QX200, Thermo Fisher QuantStudio. |

| Poly-A RNA Control (e.g., from B. subtilis) | Assesses 3'-bias and overall sensitivity of the mRNA-seq workflow. | Often included in spike-in mixes. |

| RNA Quality Assessment Kits | Ensures high integrity of the input RNA (RIN > 8), a critical factor affecting count variance. | Agilent Bioanalyzer RNA kits, Qubit RNA assays. |

| Batch Effect Correction Software/Libraries | Computational "reagents" to model and remove technical variance sources. | ComBat (sva R package), RUVSeq. |

For new researchers navigating the landscape of differential expression tools, a profound understanding of dispersion and variance modeling is the cornerstone of robust, interpretable science. Tools like DESeq2 and edgeR, which implement sophisticated empirical Bayes shrinkage methods, provide essential stability for typical small-n studies in drug development. The ultimate choice must be guided by the experimental design and validated through the diagnostic protocols outlined herein. Ensuring your model fits your data is not a mere statistical formality; it is the definitive step in transforming sequence counts into trustworthy biological insights.

Within the critical evaluation of differential expression (DE) analysis tools for new researchers, technical performance is paramount. This guide details best practices for optimizing computational parameters—memory, speed, and reproducibility—which directly influence the validity, scalability, and reliability of DE analysis outcomes.

Memory (RAM) Optimization

Excessive RAM usage is a common bottleneck, especially for single-cell or bulk RNA-seq with many samples.

Key Strategies:

- Data Chunking: Process large count matrices in blocks rather than loading them entirely into RAM. Some tools split the work into blocks of genes internally (e.g., DESeq2 when run in parallel), but awareness is key.

- Sparse Matrix Formats: For droplet-based single-cell data (e.g., 10x Genomics), leverage sparse matrix representations (e.g., .mtx format) via packages like Matrix in R or scipy.sparse in Python.

- Precision Reduction: Store integer count data as 32-bit (INT32) instead of 64-bit (INT64).

- Subsetting & Filtering: Remove low-count genes and poor-quality cells/samples early to reduce object size.
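A back-of-the-envelope calculation shows why the sparse-format and precision strategies above matter. The sketch below compares dense storage with a compressed sparse column layout (values, row indices, and one pointer per column). The matrix size and the 5% non-zero fraction are hypothetical assumptions, and real objects carry extra overhead (dimension names, metadata) that is ignored here.

```python
def dense_bytes(n_genes, n_cells, bytes_per_value=4):
    """Bytes needed to store every entry of a genes x cells matrix."""
    return n_genes * n_cells * bytes_per_value


def sparse_csc_bytes(n_genes, n_cells, nonzero_fraction,
                     bytes_per_value=4, bytes_per_index=4):
    """Bytes for a compressed sparse column layout.

    Stores one value and one row index per non-zero entry, plus
    (n_cells + 1) column pointers.
    """
    nnz = round(n_genes * n_cells * nonzero_fraction)
    return nnz * (bytes_per_value + bytes_per_index) + (n_cells + 1) * bytes_per_index


# Hypothetical single-cell matrix: 20,000 genes x 50,000 cells, 5% non-zero.
n_genes, n_cells, nonzero_fraction = 20_000, 50_000, 0.05

dense_int64 = dense_bytes(n_genes, n_cells, bytes_per_value=8)
dense_int32 = dense_bytes(n_genes, n_cells, bytes_per_value=4)
sparse_int32 = sparse_csc_bytes(n_genes, n_cells, nonzero_fraction)

for label, size in [("dense int64", dense_int64),
                    ("dense int32", dense_int32),
                    ("sparse int32", sparse_int32)]:
    print(f"{label:>12}: {size / 1e9:.2f} GB")
```

Under these assumptions, halving the precision halves the dense footprint, while the sparse layout reduces it by roughly a factor of ten. The advantage shrinks as the non-zero fraction rises, so bulk RNA-seq matrices with few zeros gain little from sparse storage.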

Table 1: Estimated Memory Footprint for Common DE Tools

| Tool | Typical RAM Use (10k genes, 100 samples) | Critical Parameter for Control | Scale with Sample Size |

|---|---|---|---|

| DESeq2 | 4-8 GB | fitType="local", parallelization | Near-linear |

| edgeR | 2-4 GB | block/design for complex designs | Near-linear |

| limma-voom | 1-3 GB | block in duplicateCorrelation | Near-linear |

| Seurat | 4-12 GB (single-cell) | FindMarkers on subset clusters | Depends on cells & features |

Speed (Computation Time) Optimization

DE analysis often involves iterative modeling and statistical testing.

Key Strategies:

- Parallelization: Utilize multi-core processing. In R, leverage BiocParallel where the tool supports it (e.g., DESeq2, by calling DESeq() with parallel = TRUE and BPPARAM = MulticoreParam(workers = n_cores)).

- Approximations & Heuristics: For large exploratory analyses, consider tools like glmGamPoi for faster dispersion estimation in negative binomial models.

- Efficient Design Formulas: Simplify model formulas (~ condition) where possible; complex designs (~ batch + condition) increase computation. Simplify only when the extra terms are not needed to account for known covariates such as batch.

- Hardware Leverage: Use SSDs over HDDs for I/O-intensive tasks; consider GPU-accelerated tools where available.

Experimental Protocol: Benchmarking DE Tool Speed

- Data Simulation: Use the splatter R package to simulate a scRNA-seq dataset with 10,000 genes across 2 conditions (e.g., 500 control vs. 500 treated cells).

- Tool Execution: Run DESeq2, edgeR (LRT & QLF), and limma-voom with identical design on the simulated data.

- Timing: Use system.time() in R to record elapsed time for the core DE function (DESeq(), glmQLFit(), eBayes()).

- Repetition: Repeat 5 times, restarting R session between runs, to average out background noise.

- Metrics Record: Record user, system, and elapsed time for the core DE step, excluding data I/O.
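The timing and repetition steps above follow a pattern that is independent of the language used. As a rough Python analogue of wrapping a call in system.time(), the sketch below times a function several times and records both elapsed (wall-clock) and CPU time. The `placeholder_de_step` function is a hypothetical stand-in for the core DE call, and unlike the protocol this sketch does not restart the interpreter between runs.

```python
import statistics
import time


def benchmark(func, repeats=5):
    """Run `func` several times and summarize elapsed and CPU time in seconds."""
    elapsed_runs, cpu_runs = [], []
    for _ in range(repeats):
        wall_start = time.perf_counter()
        cpu_start = time.process_time()
        func()
        cpu_runs.append(time.process_time() - cpu_start)
        elapsed_runs.append(time.perf_counter() - wall_start)
    return {
        "repeats": repeats,
        "elapsed_runs": elapsed_runs,
        "elapsed_mean": statistics.mean(elapsed_runs),
        "elapsed_sd": statistics.stdev(elapsed_runs) if repeats > 1 else 0.0,
        "cpu_mean": statistics.mean(cpu_runs),
    }


def placeholder_de_step():
    """Hypothetical stand-in for the core DE computation (not a real DE fit)."""
    return sum(i * i for i in range(200_000))


result = benchmark(placeholder_de_step, repeats=5)
print(f"elapsed: {result['elapsed_mean']:.4f} s "
      f"(sd {result['elapsed_sd']:.4f}), cpu: {result['cpu_mean']:.4f} s")
```

Reporting the spread alongside the mean makes it easier to tell a real speed difference between tools from run-to-run noise.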

Reproducibility Best Practices

Reproducibility ensures DE results can be exactly recreated, a cornerstone of scientific integrity.

Key Strategies:

- Version Control: Use Git for all code (analysis scripts, pipelines). Commit at logical milestones.

- Containerization: Use Docker or Singularity to encapsulate the complete software environment (OS, R/Python versions, package versions).

- Package Version Pinning: Use renv (R) or conda/poetry (Python) to record exact package versions.

- Persistent Seed Setting: Always set a random seed (set.seed(42)) before any stochastic step (e.g., bootstrap, permutation tests).

- Comprehensive Logging: Log software versions, parameters, system information, and run times automatically.
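The seed-setting and logging practices above can be illustrated with a small permutation test. The sketch is a minimal example under stated assumptions: the expression values are hypothetical, the function names are illustrative, and the record logs only a few fields that a real pipeline would extend with package versions.

```python
import json
import platform
import random
import statistics
import sys


def permutation_p_value(group_a, group_b, n_permutations=2000, seed=42):
    """Two-sided permutation test on the difference in group means."""
    rng = random.Random(seed)  # dedicated generator, so the seed is explicit
    observed = abs(statistics.mean(group_a) - statistics.mean(group_b))
    pooled = list(group_a) + list(group_b)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        perm_a, perm_b = pooled[:len(group_a)], pooled[len(group_a):]
        if abs(statistics.mean(perm_a) - statistics.mean(perm_b)) >= observed:
            hits += 1
    return (hits + 1) / (n_permutations + 1)


def run_record(seed, params):
    """Minimal log entry describing the environment and settings of a run."""
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "seed": seed,
        "params": params,
    }


# Hypothetical log-expression values for one gene in two conditions.
control = [5.1, 4.8, 5.5, 5.0, 4.9]
treated = [6.2, 6.8, 5.9, 6.5, 6.1]

p_value = permutation_p_value(control, treated, seed=42)
record = run_record(seed=42, params={"n_permutations": 2000})
print("p-value:", p_value)
print(json.dumps(record, indent=2))
```

Passing the seed to a dedicated generator, rather than seeding global state, keeps the result identical across reruns even if other code draws random numbers in between.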

Table 2: Essential Research Reagent Solutions for Reproducible DE Analysis

| Item/Category | Example/Tool | Function |

|---|---|---|

| Environment Manager | renv, conda | Isolates project-specific dependencies and records exact package versions. |

| Container Platform | Docker, Singularity/Apptainer | Creates portable, self-contained computational environments. |

| Workflow Manager | Nextflow, Snakemake | Defines, executes, and reproduces multi-step analysis pipelines. |

| Version Control System | Git (hosted on GitHub, GitLab) | Tracks all changes to analysis code and documentation. |

| Data Versioning | DVC, Git-LFS | Manages and versions large datasets in sync with code. |

Integrated Workflow for Parameter-Optimized DE Analysis

The following diagram illustrates a streamlined, optimized workflow incorporating the best practices outlined above.

Diagram Title: Optimized DE Analysis Workflow with Best Practices.

For new researchers selecting and implementing DE tools, conscious optimization of computational parameters is not merely technical overhead but a fundamental component of robust, publishable research. Balancing memory efficiency, processing speed, and stringent reproducibility practices ensures analyses are scalable, timely, and, most importantly, trustworthy—directly supporting the broader goal of identifying biologically meaningful and statistically sound differential expression.