Batch Effect Correction in Differential Expression Analysis: A Complete Guide for Biomedical Researchers

Abstract

This comprehensive guide addresses batch effects in differential expression (DE) analysis across four critical dimensions. First, it establishes foundational knowledge by defining batch effects, explaining their technical and biological sources, and detailing their detrimental impact on false discovery rates and replicability. Second, it provides a methodological toolkit covering experimental design strategies (blocking, randomization), preprocessing approaches (normalization), and leading computational correction methods (ComBat, limma, SVA/RUV). Third, it tackles practical troubleshooting, offering diagnostics (PCA, heatmaps), optimization strategies for parameter tuning, and guidelines for method selection based on study design. Finally, it focuses on validation and benchmarking, discussing metrics for success (biological signal retention, batch variance reduction), comparative analyses of popular tools, and reporting standards for rigorous science. Tailored for researchers, scientists, and drug development professionals, this article synthesizes current best practices to ensure robust, reproducible DE results.

Understanding Batch Effects: What They Are and Why They Sabotage Differential Expression Analysis

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Why do my samples cluster by processing date instead of biological group in my PCA plot?

A: This is a classic symptom of a dominant batch effect. Technical variation from processing on different days is obscuring the biological signal. First, verify the metadata: ensure the processing date (batch) is not confounded with your experimental condition. If confounded, the study may be unrecoverable. If not, apply a correction method like ComBat or remove the batch effect via regression in limma before re-running the PCA.

Q2: After correcting for batch effects, my p-value distribution looks flat. Did I over-correct?

A: Under the null hypothesis p-values are uniformly distributed, so a flat histogram is what a dataset with no differential expression looks like. After batch correction, a flat histogram therefore means either that there is little true signal or that the correction removed it (over-correction). Compare the number of significant hits (e.g., FDR < 0.05) before and after correction; a drastic drop that contradicts known biology suggests over-correction. Re-run with the biological signal protected, e.g., ComBat with `mod` set to a model matrix that includes your condition of interest.

Q3: Can I correct for batch effects if my batches are completely confounded with my treatment group?

A: No. If all samples from Group A were processed in Batch 1 and all from Group B in Batch 2, the technical and biological variables are inseparable. Statistical correction is impossible and will remove the very signal you seek. The experiment must be re-designed and re-run with balanced processing across batches.
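The confounding check described in this answer can be run on the sample sheet before any expression data are analyzed. The following is a minimal sketch in plain Python, assuming two parallel lists of batch and condition labels; the function name `confounding_status` and the three category names are illustrative choices, not part of any package.

```python
from collections import defaultdict


def confounding_status(batches, conditions):
    """Classify the batch/condition layout as 'complete', 'balanced', or 'partial'."""
    # Cross-tabulate: table[batch][condition] = number of samples.
    table = defaultdict(lambda: defaultdict(int))
    for b, c in zip(batches, conditions):
        table[b][c] += 1
    all_conditions = sorted(set(conditions))
    # Complete confounding: every batch contains exactly one condition.
    if all(len(row) == 1 for row in table.values()):
        return "complete"
    # Balanced: every batch holds every condition with identical counts.
    rows = [tuple(table[b].get(c, 0) for c in all_conditions) for b in sorted(table)]
    if all(r == rows[0] and min(r) > 0 for r in rows):
        return "balanced"
    # Anything in between: correctable in principle, but with reduced power.
    return "partial"


# Hypothetical sample sheet: Group A only in batch b1, Group B only in batch b2.
status = confounding_status(["b1", "b1", "b2", "b2"], ["A", "A", "B", "B"])
print(status)
```

A result of "complete" corresponds to the unrecoverable situation described above; "partial" layouts can usually still be modeled, at some cost in power.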

Q4: My negative controls from different batches show high variability. Is this a batch effect?

A: Very likely. Significant between-batch variation in controls that should be constant (e.g., housekeeping genes, negative control probes, replicated control samples) is direct evidence of a technical batch effect. Quantify this variability and use it to guide the choice of correction method. If suitable negative controls are available, consider methods that use them to estimate and remove unwanted variation (RUV methods).

Q5: Which is better for RNA-Seq: ComBat or sva?

A: The choice depends on your data structure. ComBat (from the sva package) is excellent for known batches. The svaseq function (also from sva) is crucial for identifying and adjusting for surrogate variables representing unknown sources of variation. For a known, simple batch structure, use ComBat. For complex studies with potential hidden factors, use svaseq. Always assess correction with PCA post-adjustment.

Experimental Protocols

Protocol 1: Diagnosing Batch Effects with Principal Component Analysis (PCA)

- Input: Normalized expression matrix (e.g., log2-CPM for RNA-Seq, log2-intensity for microarrays).

- Perform PCA on the expression matrix using the `prcomp()` function in R, with samples in rows (i.e., transpose a genes × samples matrix first).

- Extract the variance explained by the top 5 principal components (PCs).

- Plot PC1 vs. PC2, coloring points by known batch (e.g., sequencing run) and by biological condition.

- Interpretation: If points cluster primarily by batch in the first two PCs, a strong batch effect is present. Quantify the percentage of variance attributed to batch vs. condition (see Table 1).
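One way to put a number on "variance attributed to batch vs. condition" for a given PC is the R² of a one-way layout: the between-group sum of squares divided by the total sum of squares of the PC scores. The sketch below assumes the PC scores have already been extracted (for example from `prcomp()`); the scores and labels are made-up illustration values, and the function name is hypothetical.

```python
from statistics import fmean


def variance_explained_by_factor(scores, labels):
    """R^2 of a one-way layout: between-group SS divided by total SS."""
    grand = fmean(scores)
    total_ss = sum((s - grand) ** 2 for s in scores)
    if total_ss == 0:
        return 0.0
    groups = {}
    for s, lab in zip(scores, labels):
        groups.setdefault(lab, []).append(s)
    between_ss = sum(len(v) * (fmean(v) - grand) ** 2 for v in groups.values())
    return between_ss / total_ss


# Hypothetical PC1 scores for eight samples (not real data).
pc1 = [-4.1, -3.8, -4.4, -3.9, 4.0, 4.3, 3.7, 4.2]
batch = ["run1"] * 4 + ["run2"] * 4
condition = ["ctrl", "case", "ctrl", "case", "ctrl", "case", "ctrl", "case"]

r2_batch = variance_explained_by_factor(pc1, batch)
r2_condition = variance_explained_by_factor(pc1, condition)
print(f"PC1 R^2 by batch: {r2_batch:.3f}, by condition: {r2_condition:.3f}")
```

Multiplying such an R² by the PC's share of total variance gives a rough estimate of the share of total variance attributable to that factor through that PC.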

Protocol 2: Applying ComBat for Known Batch Correction (Microarray/RNA-Seq)

- Prerequisite: Install the `sva` package in R. Use a normalized, filtered expression matrix.

- Define your batch variable (numeric or factor) and a model matrix for your biological covariates (e.g., `mod = model.matrix(~disease_status, data=meta)`).

- Run the ComBat function: `corrected_data <- ComBat(dat=exp_matrix, batch=batch_vector, mod=mod, par.prior=TRUE, prior.plots=FALSE)`.

- The `corrected_data` object is the batch-adjusted expression matrix. Always re-run PCA on the corrected data to visualize the removal of the batch cluster.

Protocol 3: Identifying Surrogate Variables for Unknown Confounders

- Using the `sva` package, first fit a full model with your known variables (e.g., `mod = model.matrix(~disease_status, data=meta)`).

- Fit a null model with only the intercept or known technical factors (e.g., `mod0 = model.matrix(~1, data=meta)`).

- Run the `num.sv()` function to estimate the number of surrogate variables (SVs): `n.sv <- num.sv(exp_matrix, mod, method="be")`.

- Run the `sva()` function to identify the SVs: `svobj <- sva(exp_matrix, mod, mod0, n.sv=n.sv)`.

- The SVs (`svobj$sv`) can be added as covariates to your linear model in `limma` or `DESeq2` for differential expression analysis.

Table 1: Variance Explained by Principal Components in a Typical RNA-Seq Study Before Correction

| Principal Component | Total Variance Explained (%) | Variance Attributable to Batch (Estimated) | Variance Attributable to Condition (Estimated) |

|---|---|---|---|

| PC1 | 22.5% | 18.2% | 3.1% |

| PC2 | 18.1% | 16.7% | 1.2% |

| PC3 | 8.3% | 1.5% | 6.5% |

| PC4 | 5.2% | 2.1% | 2.8% |

Table 2: Comparison of Common Batch Effect Correction Methods

| Method | Suitable Data Type | Handles Known Batch? | Handles Unknown Factors? | Key Assumption/Limitation |

|---|---|---|---|---|

| ComBat | Microarray, RNA-Seq | Yes | No (Requires specification) | Assumes parametric distribution of batch effects. |

| limma removeBatchEffect | Microarray, RNA-Seq | Yes | No | Removes batch means; intended for visualization and clustering, not as input to linear modeling (include batch in the design instead). |

| sva | Microarray, RNA-Seq | Can be combined | Yes | Identifies surrogate variables; powerful for complex studies. |

| RUVSeq | RNA-Seq | Yes | Yes (via controls) | Requires negative control genes or samples. |

| Harmony | Single-cell RNA-Seq | Yes | Yes | Designed for high-dimensional, discrete cell clusters. |

Visualizations

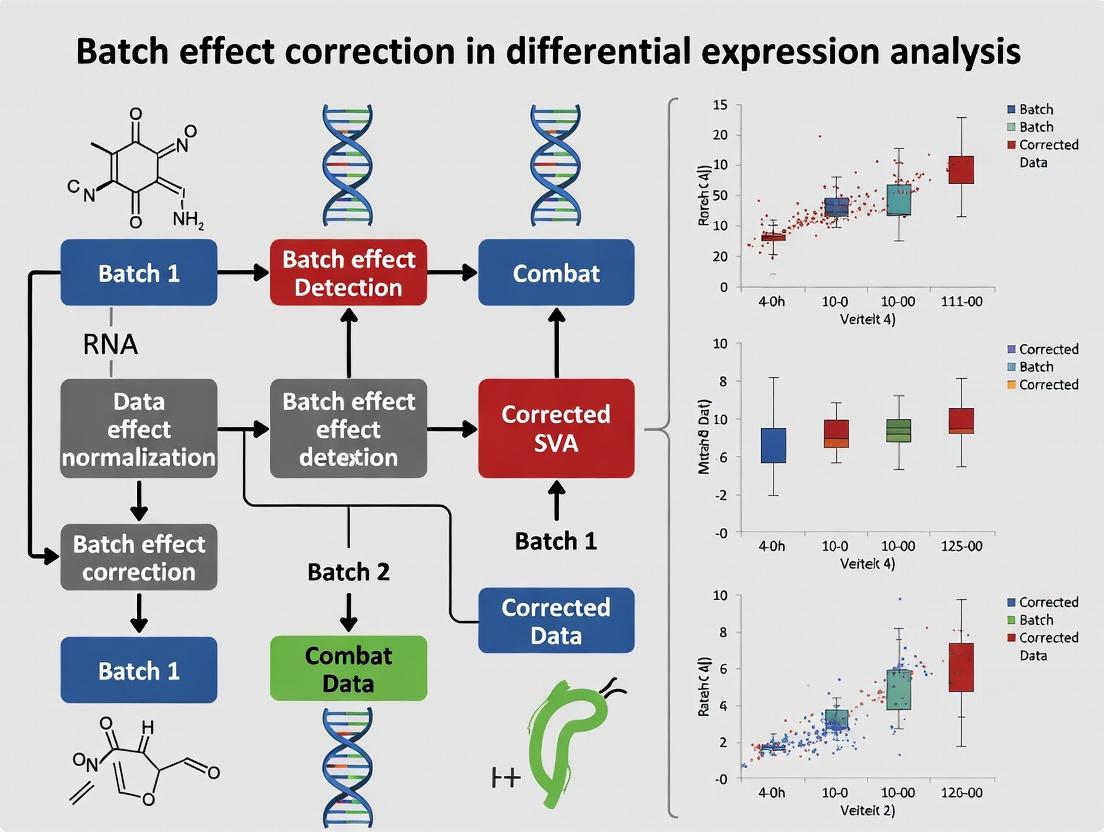

Title: How Batch Effects Distort Genomic Data Analysis

Title: Batch Effect Correction and Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Batch-Effect-Conscious Genomic Studies

| Item | Function | Example/Note |

|---|---|---|

| Reference RNA | A standardized pool of RNA (e.g., Universal Human Reference RNA) run across all batches to monitor and quantify technical variability. | Essential for longitudinal studies. |

| Spike-in Controls | Exogenous RNA/DNA added in known quantities to each sample prior to library prep (e.g., ERCC for RNA-Seq). Allows for absolute normalization and batch effect detection. | Distinguishes technical from biological variation. |

| Inter-plate Controls | The same biological sample(s) replicated on every processing plate (e.g., in microarray or 96-well RT-qPCR). Directly measures inter-batch variation. | Critical for diagnostic assays. |

| Balanced Block Design | An experimental design protocol, not a physical reagent. Ensures each biological group is represented in every processing batch to avoid confounding. | The most effective preventative "tool." |

| Automated Nucleic Acid Extractor | Standardizes the most variable step in sample preparation, reducing a major source of pre-sequencing batch effects. | Minimizes operator-induced variation. |

Technical Support Center: Troubleshooting Batch Effects in Differential Expression Analysis

Troubleshooting Guides & FAQs

Q1: My PCA plot shows strong clustering by sample processing date, not by treatment group. What are the first technical artifacts to investigate?

A: This is a classic sign of a dominant batch effect. Immediate investigation should follow this protocol:

- Reagent Lot Verification: Check lot numbers for all core reagents (e.g., RNA extraction kits, reverse transcriptase enzymes, library prep kits). Correlate lot changes with the clusters in your PCA.

- Instrument Calibration Logs: Review service and calibration logs for sequencers, scanners, or real-time PCR instruments used during the processing period.

- Operator & Protocol Consistency: Confirm the same personnel followed the exact same protocol. Review lab notebooks for any undocumented minor changes.

Q2: After correcting for known processing batches, my DE list is still driven by a strong covariate like patient age or BMI. Is this a biological confounder or a residual artifact?

A: This requires systematic stratification. Follow this experimental design:

- Subset Analysis: Re-run the DE analysis on a demographically matched subset (e.g., only samples from a narrow age/BMI range). If the signal disappears, it suggests a true biological confounder intertwined with the batch.

- Spike-in Control Correlation: If using RNA-seq, correlate the covariate (e.g., BMI) with the measured abundance of exogenous spike-in controls (e.g., ERCCs). Because spike-ins are added in fixed amounts, a high correlation suggests a technical artifact affecting global RNA content or quality.

- Negative Control Genes: Use housekeeping genes or genes previously established as invariant in your system as a proxy. Systematic variation in these controls alongside your covariate indicates persistent technical bias.

Q3: How can I determine if an observed batch effect is additive or multiplicative for my microarray data?

A: Perform an inter-array correlation and mean-difference (MA) plot analysis.

- Protocol: Normalize your raw data using a baseline method (e.g., quantile normalization). For each probe/gene, calculate the mean expression (`A`) and the difference (`M`) between a sample from Batch A and a reference sample from Batch B, both on the log2 scale.

- Diagnosis: Plot `M` versus `A`. If the spread of `M` is consistent across all values of `A`, the effect is primarily additive. If the spread of `M` increases with `A`, the effect is intensity-dependent (multiplicative). Such effects call for a method that adjusts scale as well as location, such as ComBat, rather than a mean-only adjustment.
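The MA diagnosis can also be summarized numerically instead of by eye. The sketch below computes `M` and `A` for two log2-scale profiles and compares the spread of `M` in the upper and lower halves of `A`; a ratio near 1 is consistent with an additive effect, a ratio well above 1 with an intensity-dependent one. The simulated profiles, the half-split, and the function names are illustrative assumptions, not a standard procedure from any package.

```python
import random
from statistics import pstdev


def ma_values(sample, reference):
    """Per-probe difference (M) and mean (A) of two log2-scale profiles."""
    m = [s - r for s, r in zip(sample, reference)]
    a = [(s + r) / 2 for s, r in zip(sample, reference)]
    return m, a


def spread_ratio(m, a):
    """Spread of M in the upper half of A divided by spread in the lower half."""
    order = sorted(range(len(a)), key=lambda i: a[i])
    half = len(order) // 2
    low = [m[i] for i in order[:half]]
    high = [m[i] for i in order[half:]]
    return pstdev(high) / pstdev(low)


rng = random.Random(0)
reference = [rng.uniform(4, 14) for _ in range(2000)]
# Hypothetical additive effect: constant offset plus constant-variance noise.
additive = [r + 0.5 + rng.gauss(0, 0.2) for r in reference]
# Hypothetical intensity-dependent effect: noise grows with expression level.
scaled = [r + rng.gauss(0, 0.05 * r) for r in reference]

ratio_additive = spread_ratio(*ma_values(additive, reference))
ratio_scaled = spread_ratio(*ma_values(scaled, reference))
print(f"additive: {ratio_additive:.2f}, intensity-dependent: {ratio_scaled:.2f}")
```

In practice the ratio would be computed for several sample pairs, and the MA plot itself remains the primary diagnostic.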

Q4: My negative control samples (e.g., vehicle-treated) cluster separately in different batches. What is the most robust normalization approach?

A: When controls are themselves batch-affected, leverage them explicitly.

- Protocol for Using Control Samples: Use the `removeBatchEffect` function from the `limma` package in R, passing the batch factor as the `batch` argument and a design matrix describing the treatment groups as the `design` argument. The function estimates the batch effect from all samples while protecting the differences encoded in `design`. Afterwards, the negative control samples from different batches should cluster together, which serves as a check on the correction.

- Alternative - RUVSeq: For RNA-seq, use `RUVg` (negative control genes) or `RUVs` (negative control or replicate samples) from the `RUVSeq` package to estimate and remove unwanted variation.

Research Reagent & Material Toolkit

| Item | Function | Key Consideration for Batch Effects |

|---|---|---|

| Exogenous Spike-in Controls (e.g., ERCC, SIRV) | Added at RNA extraction to monitor technical variability in RNA-seq; allows distinction of biological from technical variation in transcript abundance. | Use the same lot across all experiments. Normalize based on spike-ins to correct for global efficiency differences. |

| Universal Human Reference (UHR) RNA | A standardized RNA pool from multiple cell lines. Used as an inter-laboratory or inter-batch calibration standard in transcriptomic studies. | Run aliquots from a single, large master stock in every batch to anchor and correct measurements. |

| Lysis/Binding Buffer from RNA Kits | Chemical solution for cell lysis and nucleic acid stabilization. Inconsistent pH or contaminant levels can affect downstream enzymatic steps. | Track and, if possible, use a single manufacturing lot for an entire study. |

| DNase I Enzyme | Removes genomic DNA contamination during RNA purification. Variations in activity can affect RNA integrity and PCR amplification. | Aliquot upon receipt to avoid freeze-thaw cycles. Perform activity checks with a control substrate. |

| Library Prep Kit (NGS) | Integrated set of enzymes and buffers for cDNA synthesis, adapter ligation, and amplification. Major source of batch variation in coverage and bias. | The single largest source of batch effects. Dedicate kits from a single lot to a single study. Record all lot numbers. |

| SYBR Green I Dye (qPCR) | Intercalating dye for real-time PCR quantification. Dye concentration and stability can affect Cq values and amplification efficiency. | Protect from light. Use a freshly prepared, large-volume master mix for all samples in an experiment. |

Experimental Protocols

Protocol 1: Systematic Audit for Technical Batch Artifacts

Objective: To identify and document all potential sources of technical variation in a multi-batch gene expression study.

Materials: Laboratory notebooks, instrument logs, reagent inventory databases, sample metadata sheets.

Methodology:

- Create a master timeline of the entire experiment from sample acquisition to data generation.

- For each sample, annotate the following variables: Reagent Lots (extraction kit, enzymes, kits), Instrument ID & Calibration Date, Operator ID, Processing Date, Run ID (e.g., sequencing lane, array chip).

- Perform Principal Component Analysis (PCA) on the normalized expression data.

- Sequentially color the PCA plot by each annotated variable. Variables that explain clear separation in principal components (PC1 or PC2) are high-priority batch confounders.

- Statistically test association (e.g., using PERMANOVA) between the sample distance matrix and each technical variable.

Protocol 2: Validating Batch Effect Correction Using Positive and Negative Controls

Objective: To assess the performance of a batch correction method without removing true biological signal.

Materials: Pre- and post-correction expression matrices, lists of known positive control genes (expected to be differentially expressed), and negative control genes (expected to be stable).

Methodology:

- Define Control Gene Sets: Positive Controls: Genes with established response to your treatment from prior literature. Negative Controls: Housekeeping genes or genes from a "stable gene" list identified via algorithms like `geNorm` or from large meta-analyses.

- Apply Batch Correction: Correct your data using the chosen method (e.g., ComBat, limma's `removeBatchEffect`).

- Evaluate: Calculate the following metrics:

- Signal Preservation: The log2 fold-change of your positive control genes before and after correction. Effective correction should preserve or enhance this signal.

- Noise Reduction: The variance of your negative control genes within batches (intra-batch variance) vs. across all batches (global variance) after correction. Effective correction should minimize the global variance of negative controls.

- Visualize: Create boxplots of negative control gene expression, colored by batch, before and after correction. Successful correction shows overlapping distributions.
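The two evaluation metrics in this protocol reduce to simple per-gene summaries. The sketch below computes a log2 fold-change for a positive control gene and the intra-batch versus global variance for a negative control gene, before and after a correction. All expression values are invented for illustration, and the "after" vectors simply stand in for the output of whichever correction method was applied.

```python
from statistics import fmean, pvariance


def log2_fold_change(values, groups, case="treated", control="control"):
    """Difference of group means on the log2 scale for one gene."""
    case_vals = [v for v, g in zip(values, groups) if g == case]
    ctrl_vals = [v for v, g in zip(values, groups) if g == control]
    return fmean(case_vals) - fmean(ctrl_vals)


def variance_summary(values, batches):
    """Return (mean intra-batch variance, global variance) for one gene."""
    by_batch = {}
    for v, b in zip(values, batches):
        by_batch.setdefault(b, []).append(v)
    intra = fmean(pvariance(vals) for vals in by_batch.values())
    return intra, pvariance(values)


batches = ["b1"] * 4 + ["b2"] * 4
groups = ["control", "control", "treated", "treated"] * 2

# Hypothetical positive control gene (log2 scale), before and after correction.
pos_before = [5.0, 5.2, 7.0, 7.2, 6.0, 6.2, 8.0, 8.2]
pos_after = [5.5, 5.7, 7.5, 7.7, 5.5, 5.7, 7.5, 7.7]
lfc_before = log2_fold_change(pos_before, groups)
lfc_after = log2_fold_change(pos_after, groups)

# Hypothetical negative control gene, before and after correction.
neg_before = [8.0, 8.2, 7.9, 8.1, 9.0, 9.2, 8.9, 9.1]
neg_after = [8.5, 8.7, 8.4, 8.6, 8.5, 8.7, 8.4, 8.6]
intra_before, global_before = variance_summary(neg_before, batches)
intra_after, global_after = variance_summary(neg_after, batches)

print(f"positive control log2FC: {lfc_before:.2f} -> {lfc_after:.2f}")
print(f"negative control global variance: {global_before:.4f} -> {global_after:.4f}")
```

A correction that behaves well leaves the positive control fold-change essentially unchanged while pulling the negative control's global variance down toward its intra-batch variance.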

Data Summaries

Table 1: Impact of Common Batch Confounders on Expression Data

| Confounder Source | Typical Effect on Data | Recommended Diagnostic Plot | Common Correction Method |

|---|---|---|---|

| Reagent Lot Variation | Multiplicative scaling or additive shift for a subset of genes/features. | Boxplots of expression per lot; PCA colored by lot. | ComBat, SVA, limma's removeBatchEffect. |

| Sequencing Lane/Run (RNA-seq) | Differences in library depth, coverage uniformity, and base-call quality. | Correlation matrix of samples; Sequencing depth vs. PC1. | TMM or median-of-ratios (RLE) normalization (edgeR/DESeq2) followed by batch-aware DE. |

| Processing Date/Time | Complex, often the strongest confounder, encapsulating multiple sub-effects. | PCA colored by date. | Include as a covariate in linear models (limma, DESeq2). |

| RNA Integrity Number (RIN) | Biases toward 3' or 5' ends; affects global expression estimates. | Scatterplot of RIN vs. first principal component. | RIN as a covariate in statistical model; RUV correction. |

| Cell Culture Passage Number | Biological drift mimicking a batch effect, alters metabolism & signaling pathways. | PCA colored by passage; trajectory analysis. | Match passages across conditions; model as a continuous covariate. |

Table 2: Comparison of Major Batch Effect Correction Algorithms

| Algorithm (Package) | Model Assumption | Suitable For | Key Strength | Key Limitation |

|---|---|---|---|---|

| ComBat (sva) | Empirical Bayes; adjusts for additive and multiplicative effects. | Microarray, RNA-seq (post-normalization). | Powerful for known batches, preserves biological signal well. | Assumes batch is known; can over-correct with small sample sizes. |

| removeBatchEffect (limma) | Linear model; removes additive effects. | Any platform (log2-scale). | Simple, fast, transparent. Good for visualization. | Does not adjust standard errors; DE analysis must include batch in model. |

| Surrogate Variable Analysis (SVA) | Identifies hidden factors (surrogate variables) from data. | Microarray, RNA-seq. | Discovers and corrects for unknown batch effects. | Risk of capturing biological signal as a "batch" if not carefully guided. |

| RUV (RUVSeq) | Uses control genes/samples to estimate unwanted variation. | Primarily RNA-seq. | Leverages negative controls for a biologically grounded correction. | Requires a set of known invariant genes/samples, which may not always be available. |

| Harmony | Iterative clustering and integration based on PCA. | Single-cell RNA-seq, bulk (increasingly). | Effective for complex, non-linear batch effects across cell types. | Computationally intensive for very large datasets. |

Visualizations

Title: Batch Effect Diagnostic Decision Workflow

Title: Batch Correction Method Validation Protocol

Technical Support Center

Troubleshooting Guides

Issue 1: High False Discovery Rate (FDR) in DE Analysis

- Problem: A standard differential expression (DE) analysis, using tools like DESeq2 or limma-voom, returns an unexpectedly high number of significant genes (e.g., 5000+). Permutation tests or validation by qPCR shows many are false positives.

- Diagnosis: Batch effects are confounded with the experimental condition of interest. For example, all "Control" samples were processed in June and all "Treated" samples in July.

- Solution:

- Preventive: Randomize sample processing order. Use balanced block designs.

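- Corrective: If batch and condition are only partially confounded, account for batch in the DE analysis. For RNA-seq, use `limma::removeBatchEffect()` on log-CPM values (for exploration only) or include batch as a covariate in the DESeq2 design formula (e.g., `~ batch + condition`). For microarray, use ComBat or SVA. If they are completely confounded, as in the June/July example, no statistical correction can separate the two and the samples must be re-processed in a balanced layout.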

- Verification: Perform PCA on the corrected data. Batch clusters should dissipate while condition-specific separation remains.
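The preventive step (randomization within a balanced block design) can be scripted before samples reach the bench. The sketch below shuffles samples within each condition and deals them round-robin across batches, so every batch receives the same number of samples per condition when the group sizes divide evenly. The sample IDs, the function name, and the fixed seed are illustrative assumptions.

```python
import random
from collections import Counter


def assign_balanced_batches(sample_conditions, n_batches, seed=0):
    """Map sample ID -> batch index so each condition is spread evenly over batches."""
    rng = random.Random(seed)
    by_condition = {}
    for sample_id, cond in sample_conditions.items():
        by_condition.setdefault(cond, []).append(sample_id)
    assignment = {}
    for cond in sorted(by_condition):
        ids = sorted(by_condition[cond])
        rng.shuffle(ids)  # random order within the condition
        for i, sample_id in enumerate(ids):
            assignment[sample_id] = i % n_batches  # deal round-robin across batches
    return assignment


# Hypothetical study: 6 control and 6 treated samples, 3 processing batches.
samples = {f"C{i}": "control" for i in range(6)}
samples.update({f"T{i}": "treated" for i in range(6)})
layout = assign_balanced_batches(samples, n_batches=3)
counts = Counter((layout[s], samples[s]) for s in samples)
print(sorted(counts.items()))
```

Recording the seed alongside the sample sheet makes the allocation reproducible; the processing order within each batch can be shuffled in the same way.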

Issue 2: Failure to Replicate Findings in a Follow-up Study

- Problem: Key biomarkers from an initial study show no significance in a subsequent, technically similar study.

- Diagnosis: Unmodeled batch effects from different reagent lots, sequencer runs, or personnel have introduced systematic variation that drowns out the biological signal.

- Solution:

- Re-analyze the original and new data together in a single model with `study` or `batch` as a covariate.

- Use meta-analysis techniques that account for between-batch heterogeneity.

- Apply cross-batch normalization methods like percentile scaling or quantile normalization (with caution).

- Verification: Check inter-study correlation of housekeeping genes or negative controls. High correlation suggests batch correction may be feasible.

Issue 3: PCA Shows Strong Clustering by Processing Date, Not Condition

- Problem: The first principal component (PC1) explains >50% of variance and separates samples by processing date.

- Diagnosis: Technical variation (batch) is the largest source of variance in the dataset.

- Solution:

- Before DE: Apply a batch correction algorithm (e.g., ComBat-seq, RUVseq). Use negative controls or spike-ins if available.

- During DE: Include the batch factor in your statistical model. For DESeq2: `dds <- DESeqDataSetFromMatrix(countData, colData, design = ~ batch + condition)`.

- After DE: Use batch-aware FDR methods or independent validation.

- Verification: Re-run PCA post-correction. Variance explained by PC1 should decrease, and separation by experimental condition should become more prominent.

Frequently Asked Questions (FAQs)

Q1: When should I correct for batch effects—before or during differential expression analysis?

A: The preferred method is to model batch as a covariate during the analysis (e.g., in the DESeq2/limma design formula). This keeps the uncertainty estimates (standard errors and degrees of freedom) correct. Pre-correction (e.g., using removeBatchEffect) is useful for visualization but can lead to an overstatement of degrees of freedom if used before DE testing. It is best used for exploratory plots.
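The effect of putting batch in the model can be illustrated with a single gene and an unbalanced layout. The sketch below contrasts a naive treated-minus-control difference with a stratified estimate that averages the difference computed inside each batch. This is a simplified stand-in, not how DESeq2 or limma fit their models; it coincides with an additive `~ batch + condition` fit only in simple cases such as this noise-free example. All values are invented.

```python
from statistics import fmean


def naive_effect(values, conditions):
    """Treated mean minus control mean, ignoring batch."""
    treated = [v for v, c in zip(values, conditions) if c == "treated"]
    control = [v for v, c in zip(values, conditions) if c == "control"]
    return fmean(treated) - fmean(control)


def batch_adjusted_effect(values, conditions, batches):
    """Average the treated-minus-control difference computed inside each batch."""
    diffs = []
    for b in sorted(set(batches)):
        idx = [i for i, x in enumerate(batches) if x == b]
        diffs.append(naive_effect([values[i] for i in idx],
                                  [conditions[i] for i in idx]))
    return fmean(diffs)


# Hypothetical gene: true treatment effect 1.0, batch b2 shifted by +2.0.
values = [5.0, 5.0, 5.0, 6.0, 7.0, 8.0, 8.0, 8.0]
conditions = ["control"] * 3 + ["treated"] + ["control"] + ["treated"] * 3
batches = ["b1"] * 4 + ["b2"] * 4

naive = naive_effect(values, conditions)
adjusted = batch_adjusted_effect(values, conditions, batches)
print(f"naive: {naive:.2f}, batch-adjusted: {adjusted:.2f}")
```

Because most treated samples sit in the shifted batch, the naive estimate absorbs part of the batch shift, while the within-batch comparison recovers the simulated effect.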

Q2: How do I know if I have a batch effect?

A: Use exploratory data analysis (EDA). Generate a PCA plot colored by known batch variables (processing date, lane, technician) and by experimental condition. If samples cluster strongly by batch, and batch is confounded with condition, you have a serious problem. Metrics like the silhouette score can quantify this.
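As a rough illustration of the silhouette idea, the sketch below computes the mean silhouette width of the samples in PC space twice: once using batch as the grouping and once using condition. A much higher value for batch than for condition indicates that samples sit closer to their batch mates than to their biological group. The coordinates are invented, Euclidean distance on two PCs is an assumption, and the function is a plain-Python stand-in for library implementations such as the one in scikit-learn.

```python
from math import dist
from statistics import fmean


def mean_silhouette(points, labels):
    """Mean silhouette width of samples under a given labeling (Euclidean distance)."""
    widths = []
    for i, p in enumerate(points):
        by_label = {}
        for j, q in enumerate(points):
            if j != i:
                by_label.setdefault(labels[j], []).append(dist(p, q))
        if labels[i] not in by_label or len(by_label) < 2:
            widths.append(0.0)  # singleton group or a single label: width set to 0
            continue
        a = fmean(by_label[labels[i]])  # mean distance to own group
        b = min(fmean(d) for lab, d in by_label.items() if lab != labels[i])
        widths.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return fmean(widths)


# Hypothetical (PC1, PC2) coordinates for eight samples.
pcs = [(-4.0, 0.5), (-4.2, -0.4), (-3.8, 0.6), (-4.1, -0.5),
       (4.0, 0.4), (4.1, -0.6), (3.9, 0.5), (4.2, -0.5)]
batch = ["b1"] * 4 + ["b2"] * 4
condition = ["ctrl", "case"] * 4

sil_batch = mean_silhouette(pcs, batch)
sil_condition = mean_silhouette(pcs, condition)
print(f"silhouette by batch: {sil_batch:.2f}, by condition: {sil_condition:.2f}")
```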

Q3: Can I correct for batch effects if I have no replicates within a batch?

A: It depends on the layout. If each batch contains one sample from every condition, batch can still be modeled as a blocking factor (a paired design), provided there are several batches. If each batch contains samples from only one condition, batch and condition are perfectly confounded and standard correction methods fail. In that case your only recourse is to use prior knowledge or external controls (e.g., spike-ins, housekeeping gene stability) to make weak adjustments. The primary solution is to re-design the experiment.

Q4: What's the difference between ComBat and SVA?

A: ComBat uses an empirical Bayes framework to adjust for known batch variables. SVA (Surrogate Variable Analysis) is used to discover and adjust for unknown batch effects or latent confounders. Use ComBat when you know the source of the batch effect (e.g., plate ID). Use SVA when you suspect hidden factors.

Q5: Does batch correction increase the risk of false negatives?

A: Yes, over-correction is a risk. If you aggressively correct for a factor that is not purely technical but contains some biological signal, you can remove real biological differences, leading to false negatives. Always visualize data before and after correction, and use negative controls to assess performance.

Data Presentation: Impact of Batch Correction

Table 1: Simulated RNA-seq Study Results (n=6 per group, 2 batches)

| Analysis Scenario | Total DE Genes (FDR<0.05) | Verified True Positives | False Discovery Rate (Observed) | Reproducibility Rate (in follow-up) |

|---|---|---|---|---|

| Ignoring Batch (Confounded) | 4,521 | 850 | 81.2% | 12% |

| Modeling Batch in Design | 1,103 | 880 | 20.2% | 89% |

| Using removeBatchEffect + DE | 1,215 | 875 | 28.0% | 85% |

| Ideal Experiment (No Batch) | 987 | 900 | 8.8% | 95% |

Data based on common findings from replication studies in genomics literature. Simulation parameters: 20,000 genes, 80% null, strong batch effect (explaining 40% variance) confounded with condition.

Experimental Protocols

Protocol 1: Diagnosing Batch Effects with PCA (RNA-seq)

- Input: Raw count matrix from RNA-seq alignment (e.g., from STAR/FeatureCounts).

- Normalization: Calculate log2-counts-per-million (logCPM) using the `cpm()` function from the edgeR R library, applying a prior count of 1.

- Variable Selection: Subset to the top 1000 most variable genes based on median absolute deviation (MAD).

- PCA: Perform Principal Component Analysis on the transposed matrix of selected genes using the `prcomp()` function.

- Visualization: Plot PC1 vs. PC2, coloring points by `batch_id` and shaping points by `experimental_condition`.

- Interpretation: If points cluster primarily by color (batch), a significant batch effect is present.
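The normalization and variable-selection steps of this protocol can be sketched without any packages, which helps show what they compute. The version below is a simplification: it adds a fixed prior count to every observation, whereas edgeR scales the prior count in proportion to library size, so the values will not match `cpm()` exactly. The toy count table and gene names are invented.

```python
from math import log2
from statistics import median


def log_cpm(counts, prior_count=1.0):
    """Simplified log2 counts-per-million for a genes x samples table of raw counts."""
    n_samples = len(counts[0])
    lib_sizes = [sum(row[j] for row in counts) for j in range(n_samples)]
    return [
        [log2((row[j] + prior_count) / (lib_sizes[j] + 2 * prior_count) * 1e6)
         for j in range(n_samples)]
        for row in counts
    ]


def mad(values):
    """Median absolute deviation from the median."""
    med = median(values)
    return median(abs(v - med) for v in values)


def top_variable_genes(matrix, gene_ids, n_top):
    """Gene IDs ranked by decreasing MAD, truncated to n_top."""
    ranked = sorted(zip(gene_ids, matrix), key=lambda gm: mad(gm[1]), reverse=True)
    return [g for g, _ in ranked[:n_top]]


# Hypothetical count table: 3 genes x 4 samples.
genes = ["geneA", "geneB", "geneC"]
counts = [
    [100, 120, 900, 1000],
    [500, 480, 510, 495],
    [2, 2, 5, 5],
]
logcpm = log_cpm(counts)
top2 = top_variable_genes(logcpm, genes, n_top=2)
print(top2)
```

The selected rows would then be transposed (samples in rows) and passed to a PCA routine, as in the remaining steps of the protocol.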

Protocol 2: Batch Correction using DESeq2 with Covariate

- Construct DESeqDataSet: `dds <- DESeqDataSetFromMatrix(countData = cts, colData = coldata, design = ~ batch + condition)`.

- Pre-filter: Remove genes with fewer than 10 reads total.

- Run Analysis: `dds <- DESeq(dds)`.

- Extract Results: `res <- results(dds, contrast=c("condition", "treated", "control"))`.

- Shrink Log2FC (optional): `resLFC <- lfcShrink(dds, coef="condition_treated_vs_control", type="apeglm")` for improved effect size estimates.

- Output: A results table with batch-adjusted p-values and log2 fold changes.

Protocol 3: Exploratory Correction with limma-removeBatchEffect (For visualization ONLY, not for direct DE testing)

- Input: logCPM matrix (as in Protocol 1, step 2).

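- Apply Correction: `corrected_logCPM <- removeBatchEffect(logCPM_matrix, batch=coldata$batch)`. Passing `design=model.matrix(~ condition, data=coldata)` as well protects the condition effect from being removed along with the batch effect.

- Visualize: Re-run PCA (Protocol 1, steps 3-5) on the `corrected_logCPM` matrix.

- Compare: Assess if batch clustering is reduced and biological separation is clearer.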
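To make the idea behind this exploratory correction concrete, the sketch below removes an additive batch effect by subtracting each gene's batch mean and adding back the gene's overall mean. This is not limma's implementation: `removeBatchEffect` fits a linear model and can protect a design matrix, whereas plain centering like this is only reasonable when conditions are balanced across batches. The expression values are invented.

```python
from statistics import fmean


def remove_batch_means(matrix, batches):
    """Subtract each gene's batch mean, then add back the gene's grand mean."""
    corrected = []
    for row in matrix:
        grand = fmean(row)
        batch_mean = {
            b: fmean(v for v, lab in zip(row, batches) if lab == b)
            for b in set(batches)
        }
        corrected.append([v - batch_mean[b] + grand for v, b in zip(row, batches)])
    return corrected


# Hypothetical gene on the log2 scale: batch b2 is shifted by +1.0,
# and treated samples (positions 2, 3, 6, 7) are 2.0 above controls.
batches = ["b1"] * 4 + ["b2"] * 4
row = [5.0, 5.2, 7.0, 7.2, 6.0, 6.2, 8.0, 8.2]
corrected_row = remove_batch_means([row], batches)[0]

b1_mean = fmean(corrected_row[:4])
b2_mean = fmean(corrected_row[4:])
print(f"batch means after centering: {b1_mean:.2f}, {b2_mean:.2f}")
```

In this balanced example the batch means coincide after centering while the treated-versus-control difference inside each batch is untouched. With an unbalanced layout the same centering would also remove part of the condition effect, which is one reason to pass a design matrix in the real function.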

Visualizations

Title: Decision Path: Impact of Batch Effect Handling on Results

Title: Batch Effect Diagnosis and Correction Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch-Aware Research |

|---|---|

| UMI (Unique Molecular Identifier) Adapters | Attached during cDNA library prep to tag each original molecule. Enables correction for PCR amplification bias and more accurate quantification, reducing technical noise. |

| External RNA Controls Consortium (ERCC) Spike-in Mix | A set of synthetic RNA molecules at known concentrations added to lysates. Serves as a normalization standard across batches to correct for technical variation in RNA isolation and sequencing. |

| Inter-Plate Control Samples | Aliquots from a single, large-volume biological reference sample (e.g., pooled from all conditions) included on every processing batch (plate, lane, day). Used to monitor and correct for inter-batch variation. |

| Benchmarking Cell Lines (e.g., MAQC/SEQC samples) | Widely characterized reference cell lines with known transcriptional profiles. Run periodically to calibrate platform performance and identify batch-related drift over time. |

| Blocking/Stratification in Lab Protocol | A design solution, not a reagent. Using balanced block designs where each batch contains a balanced mix of all experimental conditions prevents confounding from the start. |

| DNA/RNA Stabilization Tubes (e.g., PAXgene, RNAlater) | Standardize the initial collection and stabilization step, minimizing pre-analytical variation that can become a major, uncorrectable batch effect. |

Troubleshooting Guides & FAQs

Q1: After running PCA on my gene expression matrix, the first two principal components (PC1 & PC2) clearly separate samples by batch, not by my experimental condition. What does this mean and what should I do next?

A: This is a classic indicator of a strong batch effect. PC1 and PC2 capturing batch variation suggests this unwanted technical variation is larger than the biological signal of interest.

- Next Steps:

- Verify: Check the proportion of variance explained by these PCs. If PC1 (batch) explains >50% variance, batch correction is essential.

- Document: Note the batch identity (processing date, technician, lane, etc.) for all samples.

- Proceed: Apply a batch effect correction method (e.g., ComBat, limma's `removeBatchEffect`, or SVA) before your differential expression analysis. Re-run PCA post-correction to confirm batch separation is reduced.

Q2: My hierarchical clustering dendrogram groups all replicates from the same batch together, even across different treatment groups. Is my experiment failed?

A: Not necessarily failed, but the batch effect is severely confounding your analysis. The biological signal is being masked.

- Action Plan:

- Quality Control: First, rule out technical failures. Check RNA Quality Numbers (RQN) and alignment metrics per batch.

- Within-Batch Analysis: As a diagnostic, perform differential expression analysis within a single batch. If expected signals appear here but not in the combined data, it confirms a batch effect rather than a null result.

- Apply Correction: Use a parametric correction tool like ComBat if you have many batches/samples, or a non-parametric method like Percentile Normalization if sample sizes are small.

Q3: When using k-means clustering on samples, how do I choose the right number of clusters (k) to distinguish batch effects from biological groups?

A: Use objective metrics on your PCA-reduced data (e.g., top 50 PCs) to evaluate cluster quality relative to known annotations.

- Methodology:

- Calculate the Silhouette Score and Calinski-Harabasz Index for a range of k values (e.g., k=2 to 10).

- Create an elbow plot of the within-cluster sum of squares (WSS).

- Cross-reference the optimal k from these metrics with your known number of batches and treatment groups. If the optimal k matches your number of batches, the effect is dominant. See Table 1 for metric interpretation.

Table 1: Clustering Validation Metrics for Batch Effect Diagnosis

| Metric | Optimal Value | Indicates Batch Effect if... |

|---|---|---|

| Silhouette Score | Closer to 1 (max=1) | High score is achieved when k = number of batches. |

| Calinski-Harabasz Index | Higher value | Peak value occurs at k = number of batches. |

| Dunn Index | Higher value | Peak value occurs at k = number of batches. |

| Within-Cluster Sum of Squares (WSS) | "Elbow" point | Sharp elbow at k = number of batches. |

Q4: I've applied a batch correction method, but my negative controls still don't cluster together in the new PCA plot. What does this indicate?

A: This suggests incomplete correction or the presence of non-linear batch effects.

- Troubleshooting Steps:

- Check Controls: Ensure negative controls are truly biologically identical (e.g., same cell line, same treatment).

- Model Diagnosis: Your correction model may be misspecified. If using ComBat, pass a `mod` design matrix (built with `model.matrix`) that includes known biological covariates, so that genuine biological differences are not mistaken for batch effects.

- Advanced Methods: Consider non-linear integration methods (e.g., Scanorama, Harmony, or BBKNN for single-cell data) if simple linear correction fails.

Q5: How can I visually distinguish a batch effect from a strong biological sex effect in my PCA?

A: Use supervised coloring and shape plotting in your PCA.

- Protocol:

- Generate the PCA plot (PC1 vs. PC2, PC1 vs. PC3).

- Color all points by Batch (e.g., blue for Batch A, red for Batch B).

- Overlay shapes (e.g., circles, triangles) to represent Sex (or your biological covariate).

- Interpretation: If all circles of one color cluster separately from all triangles of the same color, it suggests a combined batch and sex effect. A clear separation by color regardless of shape indicates a dominant batch effect. See Diagram 1.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Batch Effect Diagnostics & Correction

| Item / Reagent | Function in Visual Diagnostics |

|---|---|

| R/Bioconductor (limma, sva, pcago) | Core software packages for performing PCA, clustering, and batch effect correction (e.g., removeBatchEffect, ComBat). |

| Python (scanpy, scikit-learn, harmonypy) | Libraries for comprehensive PCA, k-means/hierarchical clustering, and advanced integration (e.g., Harmony). |

| External Spike-In Controls (e.g., ERCC RNA) | Non-biological exogenous RNAs added to samples to quantify technical variation and normalize across batches. |

| Reference RNA Sample (e.g., Universal Human Reference) | A standardized RNA pool run across all batches to align measurements and identify batch-specific drift. |

| Immortalized Cell Line Control | Genetically identical cells cultured and processed across batches to provide a baseline for unwanted variation. |

| Batch-Aware Sequencing Pooling | Strategically pooling samples from different conditions into the same sequencing lane to minimize lane-effect confounding. |

Experimental Protocol: Diagnostic PCA & Clustering Workflow

Title: Systematic Diagnostic for Batch Effects in Gene Expression Studies.

- Data Preparation: Start with a normalized count matrix (e.g., TPM, FPKM, or variance-stabilized counts). Annotate samples with Batch_ID and Condition_ID.

- PCA Execution:

- In R: Use prcomp(t(log2(count_matrix + 1))) or the plotPCA function from DESeq2.

- In Python: Use sklearn.decomposition.PCA after scaling.

- Plot PC1 vs. PC2, PC1 vs. PC3, PC2 vs. PC3.

- Variance Explained: Calculate and tabulate the percentage of variance explained by each PC (see Table 3).

- Clustering Analysis:

- Perform hierarchical clustering using a distance matrix (1 - Pearson correlation) and Ward's linkage.

- Perform k-means clustering (k=2 to k=number of groups + 2) on the top N PCs that explain >80% variance.

- Validate clusters using metrics in Table 1.

- Visual Correlation: Create a heatmap of the sample correlation matrix, ordered by batch and condition.

- Decision Point: If PCA/clustering shows batch-driven patterns, proceed to formal batch correction before differential expression analysis.

Table 3: Example PCA Variance Explained Output

| Principal Component | Standard Deviation | Proportion of Variance | Cumulative Proportion |

|---|---|---|---|

| PC1 | 15.2 | 0.652 | 0.652 |

| PC2 | 6.1 | 0.105 | 0.757 |

| PC3 | 4.7 | 0.062 | 0.819 |

| PC4 | 3.5 | 0.034 | 0.853 |
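
The columns of Table 3 are linked by simple arithmetic: each component's proportion of variance is its squared standard deviation divided by the sum of squared standard deviations over all components. The sketch below illustrates this and the ">80% variance" rule from the clustering step. The standard deviations are hypothetical (Table 3 lists only the first four components, so its totals cannot be recomputed from the table alone), and both function names are made up for illustration.

```python
def variance_explained(sdev):
    """Return (proportion, cumulative) lists from per-PC standard deviations."""
    variances = [s ** 2 for s in sdev]
    total = sum(variances)
    proportion = [v / total for v in variances]
    cumulative = []
    running = 0.0
    for p in proportion:
        running += p
        cumulative.append(running)
    return proportion, cumulative

def n_components_for(cumulative, threshold=0.80):
    """Smallest number of leading PCs whose cumulative proportion exceeds `threshold`."""
    for n, c in enumerate(cumulative, start=1):
        if c > threshold:
            return n
    return len(cumulative)

# Hypothetical standard deviations for ALL components of a small dataset.
sdev = [4.0, 2.0, 1.0, 1.0, 1.0, 1.0]
proportion, cumulative = variance_explained(sdev)
n_keep = n_components_for(cumulative, 0.80)

for i, (s, p, c) in enumerate(zip(sdev, proportion, cumulative), start=1):
    print(f"PC{i}: sd={s:.1f} proportion={p:.3f} cumulative={c:.3f}")
print("PCs needed to exceed 80% variance:", n_keep)
```

In R, summary() on a prcomp result reports the same three quantities.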

Technical Support & Troubleshooting Hub

FAQ: Common Issues in Isolating Biological Signals from Confounding Noise

Q1: My differential expression analysis shows strong signals, but I suspect they are driven by batch effects or technical confounders, not biology. How can I diagnose this? A: This is a classic symptom of confounding. First, visually inspect your data using Principal Component Analysis (PCA).

- Protocol: Generate a PCA plot from your normalized expression matrix (e.g., log2-CPM or log2-RPKM). Color the samples by the suspected confounding variable (e.g., processing date, sequencing lane) and also by your primary biological condition (e.g., disease vs. control).

- Diagnosis: If the samples cluster more strongly by the technical batch than by the biological group in the first few principal components, confounding is likely severe. Proceed to adjustment.

Q2: I've identified confounders. What are my main adjustment options, and when do I choose each? A: The choice depends on your experimental design and the confounder's relationship to your biological variable.

| Adjustment Method | Best For | Key Limitation | Typical Implementation |

|---|---|---|---|

| Regression-Based (e.g., ComBat, limma's removeBatchEffect) | When batch is known and not confounded with condition (e.g., balanced design). | Cannot separate batch from condition when the two are perfectly correlated, and can remove biological signal when they are partially confounded. | R: sva::ComBat() or limma::removeBatchEffect() |

| Inclusion in Statistical Model | When confounders are measured continuous or categorical variables. | Requires careful model specification to avoid collinearity. | R: DESeq2: design = ~ batch + condition |

| Surrogate Variable Analysis (SVA) | When unknown, unmeasured, or latent confounders are present. | Computationally intensive; can capture biological signal if not controlled. | R: sva::svaseq() to estimate SVs, then include in model. |
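
Because every option in this table depends on how batch and condition overlap, it helps to tabulate the design before choosing a method. The following is a minimal sketch, assuming batch and condition labels are available as parallel lists. The function names and the three category labels ("confounded", "partial", "crossed") are illustrative and do not come from any package.

```python
from collections import Counter

def batch_condition_table(batches, conditions):
    """Count samples in every (batch, condition) cell."""
    return Counter(zip(batches, conditions))

def confounding_status(batches, conditions):
    """Classify a design from its batch x condition cross-tabulation.

    'confounded': every batch contains a single condition, so the condition
                  effect cannot be separated from batch.
    'crossed':    every batch contains every condition.
    'partial':    anything in between.
    """
    table = batch_condition_table(batches, conditions)
    all_conditions = set(conditions)
    conds_per_batch = {
        b: {c for (bb, c) in table if bb == b} for b in set(batches)
    }
    if all(len(c) == 1 for c in conds_per_batch.values()):
        return "confounded"
    if all(c == all_conditions for c in conds_per_batch.values()):
        return "crossed"
    return "partial"

# Hypothetical designs
crossed = confounding_status(["A", "A", "B", "B"], ["ctrl", "trt", "ctrl", "trt"])
confounded = confounding_status(["A", "A", "B", "B"], ["ctrl", "ctrl", "trt", "trt"])
partial = confounding_status(["A", "A", "B", "B", "C", "C"],
                             ["ctrl", "trt", "ctrl", "trt", "trt", "trt"])
print(crossed, confounded, partial)
```

A "confounded" result means adjustment cannot rescue the comparison. A "partial" result suggests modeling batch as a covariate and interpreting the results with care.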

Q3: After batch effect adjustment, my list of significant genes shrinks dramatically. Did I over-adjust and remove the biological signal? A: Not necessarily. A large reduction often indicates that the initial list was heavily polluted by confounding noise.

- Troubleshooting Guide:

- Validate with Positive Controls: Check if known, established markers for your biological condition remain significant post-adjustment.

- Inspect Negative Controls: Ensure housekeeping genes or negative control genes (not expected to differ) do not appear in your top results.

- Use Independent Validation: If possible, confirm key findings with an orthogonal method (e.g., qPCR on new samples) or in a completely independent, well-controlled dataset.

- Benchmark with a Simulated Signal: Spike in a known fold-change for a set of genes in a mock dataset and confirm your adjusted pipeline can recover it.

Q4: What is the minimum sample size for reliable batch effect correction? A: There is no universal minimum, but power drops sharply with small sample sizes. The following table summarizes general guidelines based on simulation studies:

| Batch Correction Method | Recommended Minimum per Batch/Condition Group | Critical Consideration |

|---|---|---|

| Regression-Based (Known Batch) | At least 3-4 samples per batch per biological condition. | With fewer, the batch mean estimate is unstable. |

| Surrogate Variable Analysis (SVA) | Generally, larger studies (n > 10-15 per group) are needed. | SV estimation is unreliable with very low replication. |

| General Rule of Thumb | More samples increase confidence that adjustment removes only technical noise, not biology. | Always perform diagnostic plots (PCA pre/post) to assess correction quality. |

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Tool | Primary Function in Isolating Biological Signal |

|---|---|

| UMI (Unique Molecular Identifier) Tags | Attached to each cDNA molecule during library prep to correct for PCR amplification bias, a key technical confounder in quantifying expression. |

| External RNA Controls Consortium (ERCC) Spike-Ins | Synthetic RNA molecules added at known concentrations to diagnose technical artifacts, batch effects, and absolute sensitivity. |

| Housekeeping Gene Panels | Genes assumed to be stably expressed across conditions; used as reference for normalization to adjust for global technical differences (e.g., RNA input). |

| Reference Samples/Technical Replicates | Identical biological samples processed across all batches/lanes to directly measure and later subtract batch-specific technical variation. |

| Pooled Library Samples | Equimolar pools of all experimental libraries sequenced across all runs to identify run-specific biases. |

Experimental Protocol: A Standard Workflow for Confounder Diagnosis and Adjustment

Title: Integrated Protocol for Batch Effect Diagnosis and Correction in RNA-Seq.

Step 1: Data Preparation & Normalization.

- Start with raw count data. Perform standard normalization for sequencing depth (e.g., DESeq2's median of ratios, edgeR's TMM). Transform normalized counts for visualization (e.g., variance-stabilizing transformation in DESeq2, or log2-CPM).

Step 2: Diagnostic Visualization.

- Generate a PCA plot and a clustered correlation heatmap of samples. Annotate plots with both biological and technical metadata columns (batch, RIN, lane, etc.).

Step 3: Statistical Adjustment.

- For known batches: Incorporate the batch variable as a covariate in your linear model (e.g., in DESeq2, limma-voom).

- For unknown/latent factors: Use SVA. Estimate surrogate variables (SVs) from the data, then include the significant SVs as covariates in your primary differential expression model alongside your condition of interest.

Step 4: Post-Adjustment Validation.

- Re-generate PCA plots on the adjusted data. The samples should now cluster primarily by biological condition, with reduced clustering by technical factors.

- Perform differential expression analysis on the adjusted model. Evaluate positive and negative control genes.
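
For the post-adjustment PCA in Step 4, the simplest additive adjustment for a known batch can be sketched as per-gene batch mean centering. This is a deliberately simplified stand-in, not limma's removeBatchEffect, which fits a linear model and can protect the condition effect through its design argument. Plain centering is only reasonable when conditions are balanced across batches, and the result is meant for visualization, not for DE testing. The function name and the toy values are hypothetical.

```python
from statistics import mean

def center_batches(expr, batches):
    """Remove additive batch shifts from a genes x samples matrix of log2 values.

    For each gene, subtract that gene's batch mean and add back its grand mean.
    """
    corrected = []
    for gene in expr:
        grand = mean(gene)
        batch_mean = {
            b: mean([v for v, bb in zip(gene, batches) if bb == b])
            for b in set(batches)
        }
        corrected.append([v - batch_mean[b] + grand for v, b in zip(gene, batches)])
    return corrected

# One hypothetical gene: treated is 2 log2 units above control,
# and batch B adds a technical shift of 3 units to every sample.
batches = ["A", "A", "B", "B"]
conditions = ["ctrl", "trt", "ctrl", "trt"]
expr = [[5.0, 7.0, 8.0, 10.0]]

corrected = center_batches(expr, batches)
print(corrected[0])
```

In this balanced toy case the batch shift disappears while the control versus treated difference of 2 units is preserved. In an unbalanced design the same operation would also remove part of the biological difference.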

Pathway and Workflow Visualizations

Title: Workflow for Isolating Biological Signal from Confounded Data

Title: Causal Diagram of Confounding in Expression Data

The Batch Effect Correction Toolkit: From Experimental Design to Computational Solutions

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My differential expression results show strong clustering by processing date, not by treatment group. What experimental design flaw likely caused this, and how can I fix it in my next experiment?

A: This indicates a severe batch effect confounded with your factor of interest. The flaw is likely a lack of blocking and randomization. All samples from one treatment group were probably processed in one batch (e.g., on one day), making biological signal inseparable from technical noise. Fix: For your next experiment, implement a randomized complete block design. If you have 2 treatments (A, B) and 4 processing days (batches), each day should process an equal, randomized number of A and B samples. For existing data, statistical batch correction (e.g., ComBat, limma's removeBatchEffect) is a weaker, post-hoc option, and it works only when each batch contains samples from more than one treatment group. If batch and treatment are completely confounded, no correction method can separate them.

Q2: I used randomization, but after RNA sequencing, a known batch factor (sequencing lane) is still slightly correlated with my treatment group. Is this a failure?

A: Not necessarily. Perfect balance is not always achievable (e.g., due to failed samples). A slight correlation is acceptable if it is weak. The key diagnostic is to check if the variance explained by "batch" (lane) is significantly less than the variance explained by "treatment" in a PCA plot. Action: Include the "lane" factor as a covariate in your differential expression model (e.g., in DESeq2: ~ lane + condition). This will adjust for the residual batch effect. Document the imbalance in your metadata.
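
To make the formula ~ lane + condition concrete, the sketch below expands it into a treatment-coded design matrix: an intercept plus one indicator column per non-reference level. It imitates the default coding that R's model.matrix uses for unordered factors, with the alphabetically first level as the reference. The helper name design_matrix and the sample metadata are hypothetical.

```python
def design_matrix(samples, factors):
    """Treatment-coded design matrix.

    Returns (column_names, rows): an intercept column plus one 0/1 indicator
    for each non-reference level of each factor, in the order given.
    """
    columns = ["(Intercept)"]
    rows = [[1] for _ in samples]
    for factor in factors:
        levels = sorted({s[factor] for s in samples})
        for level in levels[1:]:  # the first sorted level is the reference
            columns.append(f"{factor}{level}")
            for row, s in zip(rows, samples):
                row.append(1 if s[factor] == level else 0)
    return columns, rows

# Hypothetical, slightly unbalanced design (lane L2 has an extra treated sample).
samples = [
    {"lane": "L1", "condition": "control"},
    {"lane": "L1", "condition": "treated"},
    {"lane": "L2", "condition": "control"},
    {"lane": "L2", "condition": "treated"},
    {"lane": "L2", "condition": "treated"},
]
columns, rows = design_matrix(samples, ["lane", "condition"])
print(columns)
for row in rows:
    print(row)
```

The coefficient on the conditiontreated column is then the treatment effect adjusted for lane, which is what the DE test reports.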

Q3: How do I choose between blocking and randomization for a time-sensitive assay? A: Use this decision guide:

- Blocking: Use when you can identify a major known source of technical variation (e.g., technician, instrument, reagent lot) AND you have the capacity to process all samples for one block within a consistent, short period. This physically eliminates the batch effect from the experiment.

- Randomization: Use when many small, hard-to-pinpoint variations exist (e.g., ambient lab temperature, minor timing differences) OR when the experiment is too large to process in one block. Randomization ensures these unplanned variations are distributed evenly across groups, averaging out their impact.

Q4: I have multiple batch factors (e.g., extraction day, sequencing run, library prep kit version). How do I prioritize them in design? A: Prioritize based on expected magnitude of effect. Typically: Library Prep Kit/Reagent Lot > RNA Extraction Batch > Sequencing Run/Lane. The design principle is to nest smaller batches within larger, more stable ones. For example, if using two kit lots, do not process all samples from one treatment with lot 1. Instead, within each kit lot, perform extractions across multiple days, and within each extraction batch, randomize samples across sequencing lanes.

| Design Strategy | Typical Reduction in Variance Explained by Batch* (PCA) | Key Risk if Not Applied | Post-Hoc Correction Complexity |

|---|---|---|---|

| Complete Blocking | 70-90% | Confounding: Batch effect mimics biological signal. | Low (Batch can be included as a simple covariate). |

| Balanced Randomization | 50-70% | Increased within-group variance, reducing statistical power. | Moderate (Requires careful model specification). |

| No Formal Design (Convenience) | 0% (Reference) | High risk of both confounding and loss of power. Analysis may be invalid. | Very High/Often Insufficient. |

*Estimated based on simulated and empirical studies from genomics literature. Actual reduction depends on initial batch effect strength.

Experimental Protocol: Implementing a Randomized Complete Block Design for a Transcriptomics Study

Objective: To compare gene expression between two conditions (Control vs. Treated) while minimizing the impact of processing date (Day) as a batch effect.

Materials: See "Research Reagent Solutions" table below.

Protocol:

- Sample Size & Block Definition: Determine total sample size (e.g., N=16: 8 Control, 8 Treated). Define "Day" as a block. Based on throughput, decide on 4 samples per day. Therefore, you will have 4 blocks (Day 1, 2, 3, 4).

- Randomization within Block: Label all 16 biological samples with a unique ID. For each block (day), randomly assign 2 Control and 2 Treated samples to be processed on that day, using a random number generator. This yields a balanced design.

- Wet-Lab Processing: Each day, process the 4 assigned samples together through the entire workflow: RNA extraction, QC, library preparation, and pooling. Keep meticulous records linking sample ID to condition and processing day.

- Sequencing: Pool libraries from all blocks and sequence across multiple lanes of a flow cell. If possible, distribute samples from the same block across multiple lanes to avoid confounding lane with day.

- Metadata Documentation: Create a final metadata table with columns: Sample_ID, Condition, Processing_Day, Sequencing_Lane, RNA_QC_Value.
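
The randomization step of this protocol can be sketched in a few lines. The example assumes the design described above (8 Control and 8 Treated samples, 4 processing days, 2 + 2 samples per day). The sample IDs, the function name, and the fixed seed are hypothetical; the seed is there only so the assignment can be reproduced and recorded.

```python
import random

def randomized_block_assignment(sample_ids_by_condition, n_blocks, seed=None):
    """Assign samples to blocks so every block gets an equal share of each condition."""
    rng = random.Random(seed)
    blocks = {day: [] for day in range(1, n_blocks + 1)}
    for condition, ids in sample_ids_by_condition.items():
        if len(ids) % n_blocks != 0:
            raise ValueError(f"{condition}: sample count not divisible by {n_blocks}")
        shuffled = list(ids)
        rng.shuffle(shuffled)  # random order within the condition
        per_block = len(shuffled) // n_blocks
        for day in blocks:
            start = (day - 1) * per_block
            for sample_id in shuffled[start:start + per_block]:
                blocks[day].append((sample_id, condition))
    for day in blocks:
        rng.shuffle(blocks[day])  # also randomize processing order within the day
    return blocks

samples = {
    "Control": [f"C{i}" for i in range(1, 9)],
    "Treated": [f"T{i}" for i in range(1, 9)],
}
design = randomized_block_assignment(samples, n_blocks=4, seed=42)
for day, assigned in design.items():
    print(f"Day {day}: {assigned}")
```

The printed assignment can be pasted directly into the Processing_Day column of the metadata table.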

Visualization: Experimental Design Workflow

Title: Workflow for Randomized Block Design

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Minimizing Batch Effects |

|---|---|

| Unique Dual Index (UDI) Kits | Allows massive, non-confounded multiplexing. Samples from different blocks/treatments can be pooled early and sequenced together, preventing lane-level batch effects. |

| Master Mix Aliquots (Large, Single Lot) | Aliquot a large volume of a single-lot master mix (e.g., for RT or PCR) for the entire study to eliminate reagent lot variation. |

| Automated Nucleic Acid Purification Systems | Increases precision and consistency of extraction yields compared to manual column-based methods, reducing a major source of technical variation. |

| Internal Reference RNA Controls (e.g., ERCC) | Spike-in controls added prior to library prep monitor technical variation across samples, allowing for post-hoc normalization. |

| Barcoded Beads for Single-Cell/SPLiT-seq | In single-cell studies, these allow samples from multiple conditions to be tagged and processed in a single reaction tube, eliminating handling batch effects. |

FAQs

Q1: What is the most common initial check for batch effects in my gene expression matrix before formal analysis? A1: The most common and critical initial check is a Principal Component Analysis (PCA) plot colored by batch. A strong separation of samples along the first or second principal component (PC) by batch, rather than by the biological condition of interest, is a clear visual indicator of a significant batch effect that must be addressed before differential expression analysis.

Q2: My negative control probes show high variance across arrays. Does this indicate a problem? A2: Yes. Negative control probes should exhibit consistently low expression. High variance in these probes is a strong technical red flag, often indicating issues with background noise, hybridization efficiency, or scanner calibration that require correction via normalization methods like RMA (for Affymetrix) or normexp (for two-color arrays).

Q3: After RNA-Seq alignment, my sample counts correlate strongly with sequencing depth rather than condition. How should I normalize? A3: This is expected. Use a scaling normalization method to adjust for library size differences. The current standard is to use Trimmed Mean of M-values (TMM) in edgeR or Relative Log Expression (RLE) in DESeq2. These methods are robust to the presence of highly differentially expressed genes and are superior to simple counts per million (CPM) for downstream differential expression.

Q4: When should I use quantile normalization versus other methods for microarray data? A4: Quantile normalization is powerful for making the overall distribution of probe intensities identical across arrays. It is most appropriate when you expect the global distribution of expression to be similar across all samples (e.g., in a tightly controlled cell line experiment). It is not recommended if you expect widespread, global expression changes between conditions or if your batch structure is confounded with biology, as it can remove real biological signal.
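
To show why quantile normalization forces identical distributions, here is a minimal sketch of the procedure on a small genes x samples matrix. It breaks ties by sort order, whereas production implementations (for example the one used inside RMA) average tied ranks. The function name and the toy intensities are hypothetical.

```python
def quantile_normalize(matrix):
    """Quantile-normalize a genes x samples matrix given as a list of rows."""
    n_genes = len(matrix)
    n_samples = len(matrix[0])
    columns = [[row[j] for row in matrix] for j in range(n_samples)]

    # Reference distribution: mean of the k-th smallest value across samples.
    sorted_cols = [sorted(col) for col in columns]
    reference = [sum(col[k] for col in sorted_cols) / n_samples
                 for k in range(n_genes)]

    # Replace each value by the reference value at its within-sample rank.
    normalized = [[0.0] * n_samples for _ in range(n_genes)]
    for j, col in enumerate(columns):
        order = sorted(range(n_genes), key=lambda i: col[i])
        for rank, gene_index in enumerate(order):
            normalized[gene_index][j] = reference[rank]
    return normalized

# Hypothetical intensities: 4 probes (rows) x 3 arrays (columns), no ties.
intensities = [
    [5.0, 4.0, 3.0],
    [2.0, 1.0, 4.0],
    [3.0, 5.0, 6.0],
    [4.0, 2.0, 8.0],
]
normalized = quantile_normalize(intensities)
for row in normalized:
    print([round(v, 3) for v in row])
```

After this step every array contains exactly the same set of values, which is why a genuine global shift between conditions would be erased along with technical differences.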

Q5: How do I handle zero or low counts in RNA-Seq data before log-transformation for PCA?

A5: Direct log-transformation of count data (log2(count+1)) is sensitive to zeros/low counts. Instead, use a variance-stabilizing transformation (VST) from DESeq2 or the voom transformation in the limma pipeline. These transformations model the mean-variance relationship of the data and provide normalized, log2-like values that are suitable for PCA and other distance-based analyses.

Troubleshooting Guides

Issue: Strong Batch Clustering in PCA After "Standard" Normalization

Symptoms: Samples cluster perfectly by processing date or sequencing lane in PCA, obscuring biological groups. Step-by-Step Resolution:

- Confirm: Generate a PCA plot using plotPCA (DESeq2) or prcomp on VST/voom-transformed data, colored by batch and condition.

- Choose Correction Method:

- If batch is not confounded with experimental condition (e.g., samples from all conditions are in each batch), use a statistical correction tool.

- For limma-voom workflows, use the removeBatchEffect() function for visualization and clustering only. For DE, include batch in the linear model.

- For DESeq2, include batch as a factor in the design formula (e.g., ~ batch + condition).

- For severe cases, consider a supervised method like ComBat (from the sva package), which uses empirical Bayes to adjust for batch.

- Validate: Re-run PCA on the batch-corrected data. Biological condition separation should improve, while batch clustering should diminish.

Issue: High Technical Replicate Variance in qPCR Data

Symptoms: Ct values for housekeeping genes vary widely between technical replicates, making ∆∆Ct unreliable. Step-by-Step Resolution:

- Inspect Raw Fluorescence Curves: Check for abnormal amplification curves (e.g., low plateau, non-sigmoidal shape).

- Baseline Correction: Manually set a consistent baseline cycle range for all reactions (e.g., cycles 3-15).

- Threshold Setting: Apply a consistent fluorescence threshold across all runs or use a derivative method for Ct determination.

- Outlier Identification: Use Grubbs' test or the coefficient of variation (CV > 5% for technical replicates is often a flag) to identify aberrant replicates for removal.

- Normalize: Average the remaining technical replicate Ct values. Proceed with ∆∆Ct using multiple, validated housekeeping genes, not just one.
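
The last two steps can be sketched as follows: flag replicate sets by CV, average the technical replicates, and compute ∆∆Ct against the mean of several reference genes. The sketch assumes roughly 100% amplification efficiency (fold change = 2^-∆∆Ct). The Ct values, the gene names, and the function names are hypothetical, and the 5% cutoff is the flag quoted above.

```python
from statistics import mean, stdev

def replicate_cv_percent(cts):
    """Coefficient of variation (%) of technical replicate Ct values."""
    return 100.0 * stdev(cts) / mean(cts)

def delta_ct(target_cts, reference_cts_by_gene):
    """Mean target Ct minus the mean Ct across several reference genes."""
    reference = mean([mean(cts) for cts in reference_cts_by_gene.values()])
    return mean(target_cts) - reference

def fold_change(ddct):
    """Relative expression assuming ~100% amplification efficiency."""
    return 2.0 ** (-ddct)

# Hypothetical technical triplicates
control = {"target": [24.1, 23.9, 24.0],
           "refs": {"GAPDH": [18.0, 18.1, 17.9], "ACTB": [20.0, 20.1, 19.9]}}
treated = {"target": [22.0, 22.1, 21.9],
           "refs": {"GAPDH": [18.0, 18.1, 17.9], "ACTB": [20.0, 20.1, 19.9]}}

for name, group in (("control", control), ("treated", treated)):
    cv = replicate_cv_percent(group["target"])
    flag = "CHECK" if cv > 5.0 else "ok"
    print(f"{name} target CV = {cv:.2f}% ({flag})")

ddct = delta_ct(treated["target"], treated["refs"]) - delta_ct(control["target"], control["refs"])
fc = fold_change(ddct)
print(f"ddCt = {ddct:.2f}, fold change = {fc:.2f}")
```

Averaging Ct values across reference genes corresponds to taking the geometric mean of their relative quantities.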

Issue: Poor Agreement Between Microarray and RNA-Seq Results from the Same Samples

Symptoms: Lists of top differentially expressed genes (DEGs) from the two platforms show low overlap. Step-by-Step Resolution:

- Preprocessing Check: Ensure both datasets are preprocessed appropriately (RMA for arrays, TMM/VST for RNA-Seq).

- Gene Identifier Mapping: Use a robust, up-to-date annotation file (e.g., from Bioconductor) to map probesets to stable gene identifiers (e.g., Ensembl ID). Probe-to-gene mapping ambiguity is a major source of discordance.

- Filter Low Expression: Apply platform-specific filters (e.g., remove low-intensity probesets; filter genes with low counts in RNA-Seq).

- Compare at the Level of Effect Size: Instead of just top p-value lists, compare the log2 fold changes for the commonly detected genes. Use a correlation or concordance plot. Expect a high correlation but not perfect 1:1 agreement due to platform-specific biases.

- Meta-analysis: Use methods like RankProd or MetaVolcanoR to perform a formal meta-analysis across platforms, which is more powerful than simple overlap.
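
The effect-size comparison recommended above can be sketched as a Pearson correlation of log2 fold changes over the genes detected on both platforms, plus the fraction of genes whose direction of change agrees. The gene names and fold-change values below are hypothetical, and the helper pearson is written out only to keep the example dependency-free.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical log2 fold changes keyed by a stable gene identifier.
array_lfc = {"GENE_A": 1.8, "GENE_B": -0.9, "GENE_C": 0.2, "GENE_D": 2.5}
rnaseq_lfc = {"GENE_A": 2.3, "GENE_B": -1.4, "GENE_C": 0.1,
              "GENE_D": 3.1, "GENE_E": -3.0}

# Compare only genes detected on both platforms.
shared = sorted(set(array_lfc) & set(rnaseq_lfc))
x = [array_lfc[g] for g in shared]
y = [rnaseq_lfc[g] for g in shared]

r = pearson(x, y)
sign_agreement = sum(1 for a, b in zip(x, y) if (a > 0) == (b > 0)) / len(shared)
print(f"{len(shared)} shared genes, r = {r:.3f}, sign agreement = {sign_agreement:.0%}")
```

In this toy data the RNA-Seq fold changes are systematically larger in magnitude than the array values, so the correlation is high even though the points do not fall on the 1:1 line.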

Data Tables

Table 1: Comparison of Common RNA-Seq Normalization Methods

| Method | Package/Function | Key Principle | Best Use Case | Limitation |

|---|---|---|---|---|

| TMM | edgeR (calcNormFactors) | Trimmed Mean of M-values; ref sample-based scaling. | Most experiments; robust to few DE genes. | Assumes most genes are not DE. |

| RLE | DESeq2 (estimateSizeFactors) | Relative Log Expression; median ratio of counts to geometric mean. | Standard for DESeq2; good for most designs. | Sensitive to large numbers of DE genes. |

| Upper Quartile | edgeR, others | Scales using upper quartile (75th percentile) of counts. | Simple, historic. | Poor performance if high counts are differential. |

| Transcripts Per Million (TPM) | Custom calculation | Normalizes for gene length and sequencing depth. | Comparing expression levels between genes within a sample. | Not for between-sample DE analysis. |
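
The "median ratio of counts to geometric mean" principle in the RLE row can be illustrated with a short sketch. It follows the general idea behind DESeq2's size factors (genes with a zero count in any sample are excluded from the reference) but is not the package's implementation. The count matrix and the function name are hypothetical.

```python
import math
from statistics import median

def size_factors(counts):
    """Median-of-ratios size factors for a genes x samples count matrix."""
    n_samples = len(counts[0])

    # Per-gene log geometric mean across samples; skip genes with any zero count.
    log_geo_means = []
    for gene in counts:
        if all(c > 0 for c in gene):
            log_geo_means.append(sum(math.log(c) for c in gene) / n_samples)
        else:
            log_geo_means.append(None)

    # Size factor of a sample = median ratio of its counts to the gene-wise reference.
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(gene[j]) - lg
                      for gene, lg in zip(counts, log_geo_means)
                      if lg is not None]
        factors.append(math.exp(median(log_ratios)))
    return factors

# Hypothetical counts: sample 2 was sequenced twice as deeply as sample 1.
counts = [
    [100, 200],
    [50, 100],
    [10, 20],
    [0, 3],      # excluded from the reference because of the zero
    [400, 800],
]
sf = size_factors(counts)
normalized = [[c / f for c, f in zip(gene, sf)] for gene in counts]
print([round(f, 4) for f in sf])
print([round(v, 1) for v in normalized[0]])
```

Dividing each sample's counts by its size factor puts the two samples on a common scale, so the first gene ends up with the same normalized value in both.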

Table 2: Impact of Normalization on Batch Effect Mitigation (Simulated Data Example)

| Analysis Step | Correlation with Batch (Mean \|r\|) | Correlation with Condition (Mean \|r\|) | Key Metric (PC1 % Variance) |

|---|---|---|---|

| Raw Counts | 0.85 | 0.25 | PC1: 65% (Batch) |

| After TMM (Library Size) | 0.72 | 0.41 | PC1: 58% (Batch) |

| After ComBat-seq (Batch Corrected) | 0.15 | 0.78 | PC1: 40% (Condition) |

Experimental Protocols

Protocol: Diagnosing Batch Effects with PCA

Objective: To visually and quantitatively assess the presence of technical batch effects in a gene expression dataset. Materials: Normalized expression matrix (log2-scale), sample metadata table with batch and condition information. Procedure:

- Center the Data: For the gene expression matrix X, center each gene (row) by subtracting its mean expression across all samples. This is often done automatically by the prcomp function.

- Perform PCA: Execute PCA on the centered, and optionally scaled, data matrix using the prcomp() function in R: pca_result <- prcomp(t(expression_matrix), center=TRUE, scale.=FALSE).

- Extract Variance: Summarize the result: summary(pca_result). Note the proportion of variance explained by each principal component (PC).

- Visualize: Plot PC1 vs. PC2 using ggplot2, coloring points by batch and shaping points by condition.

- Interpret: Strong clustering of samples by color (batch) indicates a dominant batch effect. The desired outcome is clustering by shape (condition).

Protocol: Performing RMA Normalization on Affymetrix Microarray Data (CEL files)

Objective: To generate robust, background-corrected, normalized, and summarized expression values from raw Affymetrix CEL files.

Materials: Affymetrix .CEL files for all samples, appropriate Bioconductor annotation package (e.g., hgu133plus2.db).

Procedure in R/Bioconductor:

- Load Packages: library(affy), library(oligo) (for newer arrays), or library(affycoretools).

- Read Data: raw_data <- ReadAffy() or raw_data <- oligo::read.celfiles(list.celfiles()).

- Perform RMA: Execute the RMA algorithm in a single command, normalized_expr <- rma(raw_data), which performs three steps:

- Background Correction: (RMA convolution model to adjust for optical noise).

- Quantile Normalization: Makes the probe intensity distribution identical across arrays.

- Summarization: Calculates a robust multi-array average (median polish) for each probeset.

- Extract Matrix: Obtain the final log2-expression matrix: expr_matrix <- exprs(normalized_expr).

- Annotation: Map probeset IDs to gene symbols using the corresponding annotation package.

Diagrams

Preprocessing & Batch Effect Correction Workflow

Normalization & Batch Correction Decision Tree

The Scientist's Toolkit

Key Research Reagent Solutions for Preprocessing & Normalization Experiments

| Item | Function/Benefit | Example/Supplier |

|---|---|---|

| External RNA Controls Consortium (ERCC) Spike-Ins | Synthetic RNA molecules added to samples pre-extraction to monitor technical variability, assess sensitivity, and validate normalization. | Thermo Fisher Scientific (Ambion) |

| UMI (Unique Molecular Identifier) Adapters | Oligonucleotide tags used in RNA-Seq library prep to label each original molecule, enabling correction for PCR amplification bias and more accurate digital counting. | Illumina, IDT |

| Commercial RNA Reference Standards | Well-characterized, stable RNA from defined cell lines (e.g., MAQC samples) used as inter-laboratory benchmarks for platform performance and normalization. | Agilent, Stratagene |

| Housekeeping Gene Panels (qPCR) | A pre-validated set of multiple, stable reference genes used for reliable ∆∆Ct normalization, superior to using a single gene like GAPDH or ACTB. | Bio-Rad, TaqMan |

| Degradation Control Probes (Microarray) | Probes targeting the 5' and 3' ends of transcripts to assess RNA integrity and identify samples with degradation bias. | Affymetrix GeneChip arrays |

| Batch-Corrected Reference Datasets | Publicly available, curated datasets (e.g., from GEO) where batch effects have been professionally corrected, useful as a validation standard. | NCBI GEO, ArrayExpress |

Troubleshooting Guides & FAQs

General Batch Effect Correction

Q1: My corrected data still shows strong batch clustering in the PCA plot. What went wrong? A: This persistent clustering often indicates incomplete correction. First, verify that your batch variable correctly defines the technical artifacts. Common issues include:

- Confounded Design: If batch is perfectly correlated with a biological condition of interest (e.g., all controls from Batch A, all treatments from Batch B), no statistical method can disentangle them. You must acquire new data or use a reference-based method like RUV if spike-in or negative controls are available.

- Non-Linear Batch Effects: Standard ComBat models additive (mean-shift) and multiplicative (scale) batch effects, and removeBatchEffect models additive effects only. Non-linear distortions may require more advanced approaches like ComBat with non-parametric priors or a non-linear method.

- Insufficient Model: You may have missed a crucial batch factor (e.g., processing day and technician). Re-examine your metadata and include all relevant factors in the model formula.

Q2: How do I choose between Empirical Bayes (ComBat) and linear modeling (removeBatchEffect)?

A: The choice depends on your study design and batch size.

| Method | Optimal Use Case | When to Avoid |

|---|---|---|

| ComBat | Small sample sizes per batch (n < 20). Its Empirical Bayes approach stabilizes variance estimates by borrowing information across genes. | When batch and condition are perfectly confounded. With very large, balanced batches, its advantage over linear models diminishes. |

| limma's removeBatchEffect | Large, balanced batch sizes. It's a straightforward linear model that removes effects without modifying the variance structure of the data. | When batch sizes are very small, as variance estimates can be unstable. |

Workflow: First, run model.matrix(~condition) to create your design of interest. Then, for removeBatchEffect, also create a batch design matrix. For ComBat, ensure your data is properly normalized before correction.

ComBat-Specific Issues

Q3: After running ComBat, my variance across genes appears unnaturally uniform. Is this expected? A: Yes, to an extent. A known characteristic of the standard ComBat model is that it can over-shrink the data, especially when the prior is strong. This leads to reduced biological signal alongside batch noise. Troubleshooting Steps:

- Use the mean.only=TRUE parameter if you suspect scale differences between batches are minimal. This applies only location adjustment.

- Consider using the newer ComBat-seq model for raw count data (RNA-seq), as it operates on the Negative Binomial distribution and may preserve more biological variance.

- Validate with positive control genes (known biological markers) to ensure they remain differentially expressed post-correction.

Q4: Should I use the parametric or non-parametric prior in ComBat?

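A: The parametric prior (default, par.prior=TRUE) assumes the additive batch effects follow a normal distribution and the multiplicative batch effects follow an inverse gamma distribution. The non-parametric option (par.prior=FALSE) is more flexible. Use non-parametric if: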

- You have a large number of samples (>100 total) to reliably estimate the shape.

- Diagnostic plots (plot() on the comba object) show the empirical batch effect distribution is highly non-normal.

Otherwise, the parametric prior is more robust and recommended.

SVA/RUV-Specific Issues

Q5: For SVA, how do I determine the number of surrogate variables (SVs) to estimate?

A: Incorrect n.sv is a major source of problems. Use the num.sv() function from the sva package with one of these methods:

- Be method: The default, often robust.

- Leek method: Can be more sensitive.

- Manual inspection: Use svaseq() with n.sv=NULL to inspect the scree plot of singular values. Look for the "elbow" point where values plateau.

- Protocol: Run num.sv(dat, mod, method="be") where dat is your normalized matrix and mod is your condition model matrix. Start with this number, then adjust based on biological validation.

Q6: In RUV, what should I use as negative control genes or "in-silico" empirical controls? A: The choice is critical and depends on the RUV variant.

- RUVg (Controls): Use spike-in RNAs (for scRNA-seq) or housekeeping genes proven to be stable across your specific batches and conditions. Poor controls introduce new artifacts.

- RUVs (Replicate Samples): Use technical replicates or pooled samples measured across batches. This is often more reliable than assuming stable genes.

- RUVr (Residuals): Uses residuals from a first-pass GLM regression. This is a good default if no external controls are available.

- Protocol for RUVs: 1) Create a "replicate matrix" in which each row corresponds to one set of replicate samples and holds the indices of those samples, with rows for smaller sets padded with -1. The makeGroups() helper in RUVSeq builds this matrix from a grouping vector. 2) Run RUVs(x, cIdx, k, scIdx=replicateMatrix) where cIdx is a set of stable gene indices.

| Method | Core Principle | Input Data | Key Assumption | Output |

|---|---|---|---|---|

| ComBat | Empirical Bayes shrinkage of batch parameters towards global mean. | Normalized, continuous (microarray, log-CPM). | Batch effects are systematic and estimable with a mean and variance component. | Batch-corrected expression matrix. |

| limma's removeBatchEffect | Linear regression to subtract out batch coefficients. | Any continuous, modeled data. | Batch effects are additive and fit a linear model. | Residuals with batch effect removed. Not for direct DE testing. |

| SVA | Estimates latent surrogate variables (SVs) representing unmodeled variation. | Normalized counts or log-values. | Unwanted variation is orthogonal to, or not fully captured by, the condition of interest. | Surrogate variables to be added as covariates in DE model. |

| RUV | Uses control genes/replicates to estimate unwanted factors (k). | Normalized counts (RUVg/s/r). | Control genes/samples do not respond to biological conditions of interest. | A corrected expression matrix or factors for covariate inclusion. |

Experimental Protocol: Integrated Batch Correction & DE Analysis Workflow

- Preprocessing & QC: Generate count matrix (RNA-seq) or normalize intensities (microarray). Perform exploratory PCA colored by batch and condition.

- Method Selection & Application:

- If batch is balanced and not confounded: Include batch in the limma-voom design (e.g., ~ batch + condition) for DE testing, and apply removeBatchEffect on log2(CPM+1) data only for visualization.

- If batches are small or unbalanced: Apply ComBat (or ComBat-seq for counts) using the sva package with the model model.matrix(~condition).

- If hidden confounding is suspected: Run SVA (svaseq() function) to estimate n.sv surrogate variables and add them to the design: mod <- model.matrix(~condition + svs).

- If reliable controls exist: Run RUVg or RUVs to obtain corrected counts or W factors for the design matrix.

- Post-Correction Validation: Regenerate PCA plot. Use positive/negative control genes to verify biological signal retention and batch mixing.

- Differential Expression: Perform DE analysis (e.g., limma-voom, DESeq2, edgeR) using the corrected data or the enhanced design matrix containing SVs/RUV factors.

Visualizations

Title: Batch Effect Correction Decision & Analysis Workflow

Title: Core Assumptions and Use Cases for Three Major Methods

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Batch Effect Context |

|---|---|

| External RNA Controls Consortium (ERCC) Spike-Ins | Artificially synthesized RNA sequences added to lysate before processing. Serve as negative controls (should not change biologically) for methods like RUVg to estimate technical noise. |

| Housekeeping Gene Panels | Genes presumed to have stable expression across conditions. Used as empirical controls for RUVg. Must be validated per experiment to ensure true stability. |

| Universal Human Reference RNA (UHRR) | Pooled RNA from multiple cell lines. Used as an inter-batch calibration sample or a technical replicate across runs for RUVs. |

| Poly-A Controls / Spike-ins | Used in RNA-seq to monitor technical steps (e.g., fragmentation, amplification). Can inform quality metrics that may correlate with batch. |

| Commercially Available Normalization Beads (Microarrays) | For platforms like Illumina, used to scale intensities across arrays, addressing a major source of multiplicative batch effect. |

| Processed Public Datasets (e.g., GEO) | Used as positive controls to test correction pipelines. Known batch-effect-prone datasets (e.g., TCGA, GTEx) allow method benchmarking. |

Troubleshooting Guides & FAQs

Q1: My corrected data shows higher variance or loss of signal in known positive controls after applying ComBat (sva package) in R. What went wrong?

A: This often indicates over-correction, typically caused by an incorrectly specified model matrix. Ensure your mod argument includes your biological variable of interest. The batch variable belongs in the batch argument, not in mod; if mod omits the biological condition (for example, an intercept-only model), ComBat can remove biological signal that is correlated with batch. Re-run with mod = model.matrix(~your_biological_condition, data=pData(yourExpressionSet)). Validate using positive control genes pre- and post-correction.

Q2: When using limma's removeBatchEffect function, should I use the corrected data for downstream differential expression?

A: No. removeBatchEffect is designed for visualization and exploration, not for direct input into linear models for differential expression. For DE, incorporate batch directly into your linear model using lmFit with a design matrix that includes batch as a factor: design <- model.matrix(~0 + group + batch). Using the "corrected" data in a separate DE test will underestimate error.
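To make concrete what an additive batch adjustment does to the values you plot, here is a minimal single-gene sketch in plain Python. It is not limma's implementation: it assumes a balanced design in which every group appears equally often in every batch, where removing the fitted batch term should reduce to shifting each batch mean onto the grand mean. The function name and all numbers are hypothetical.

```python
def remove_additive_batch(values, batch):
    """Shift each batch so its mean equals the grand mean (one gene).

    Assumption: balanced, non-confounded design. With unbalanced groups
    this simple centering would also remove part of the biological signal.
    """
    grand_mean = sum(values) / len(values)

    by_batch = {}
    for value, label in zip(values, batch):
        by_batch.setdefault(label, []).append(value)

    # Estimated additive offset of each batch relative to the grand mean
    shift = {
        label: sum(vals) / len(vals) - grand_mean
        for label, vals in by_batch.items()
    }
    return [value - shift[label] for value, label in zip(values, batch)]


# Hypothetical log2 expression: batch b2 is shifted up by 2 units,
# and treatment raises expression by 2 units in both batches.
expression = [5.0, 5.2, 7.0, 7.2, 7.0, 7.2, 9.0, 9.2]
batch = ["b1", "b1", "b1", "b1", "b2", "b2", "b2", "b2"]
group = ["ctrl", "ctrl", "trt", "trt", "ctrl", "ctrl", "trt", "trt"]

corrected = remove_additive_batch(expression, batch)
for g, b, before, after in zip(group, batch, expression, corrected):
    print(f"{g:>4} {b}  {before:.1f} -> {after:.1f}")
```

The adjusted values look clean on a PCA or heatmap, but they carry no record of the batch parameters that were estimated to produce them, which is why the testing step should model batch in the design instead.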

Q3: I get "Error in solve.default(t(design) %*% design) : system is computationally singular" when running ComBat. How do I fix this?

A: This is a rank deficiency error. It means the combined design (your mod matrix plus the batch indicators) contains linearly dependent columns. Check for collinearity: 1) Ensure your batch is not perfectly confounded with your biological group. If each group exists in only one batch, correction is impossible. 2) If using multiple covariates, ensure they are not linearly dependent. Simplify your mod argument, often to just mod = model.matrix(~main_biological_effect).
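The confounding check can be run before any correction is attempted. The sketch below, in plain Python, builds a treatment-coded design with an intercept, condition indicators, and batch indicators, then compares its rank to its number of columns. The function names are hypothetical, and this only approximates the design that ComBat assembles internally from mod and batch.

```python
def design_matrix(batch, condition):
    """Intercept + dummy columns for condition and batch (first level = reference)."""
    condition_levels = sorted(set(condition))[1:]
    batch_levels = sorted(set(batch))[1:]
    rows = []
    for b, c in zip(batch, condition):
        row = [1.0]
        row += [1.0 if c == level else 0.0 for level in condition_levels]
        row += [1.0 if b == level else 0.0 for level in batch_levels]
        rows.append(row)
    return rows


def matrix_rank(rows, tol=1e-9):
    """Rank via Gaussian elimination with partial pivoting."""
    m = [row[:] for row in rows]
    n_rows, n_cols = len(m), len(m[0])
    rank = 0
    for col in range(n_cols):
        if rank == n_rows:
            break
        pivot = max(range(rank, n_rows), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < tol:
            continue  # no usable pivot: this column depends on earlier ones
        m[rank], m[pivot] = m[pivot], m[rank]
        for r in range(rank + 1, n_rows):
            factor = m[r][col] / m[rank][col]
            for c in range(col, n_cols):
                m[r][c] -= factor * m[rank][c]
        rank += 1
    return rank


def is_estimable(batch, condition):
    """True when batch and condition effects can be separated."""
    design = design_matrix(batch, condition)
    return matrix_rank(design) == len(design[0])


# Perfectly confounded: every control is in b1, every treated sample in b2.
print(is_estimable(["b1"] * 3 + ["b2"] * 3, ["ctrl"] * 3 + ["trt"] * 3))  # False
# Crossed: both conditions appear in both batches.
print(is_estimable(["b1", "b1", "b2", "b2"], ["ctrl", "trt", "ctrl", "trt"]))  # True
```

A result of False corresponds to the situation in which the singular-matrix error appears: no amount of re-parameterizing mod will separate the two effects.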

Q4: How do I choose between parametric and non-parametric empirical Bayes in ComBat?

A: Use parametric (par.prior=TRUE) for smaller datasets (n < 10-20 per batch) as it borrows strength more aggressively. Use non-parametric (par.prior=FALSE) for larger datasets where the data can better estimate the prior distribution. Always check the prior.plots generated by ComBat to assess fit. Non-parametric is computationally more intensive.

Q5: In Python's scanpy.pp.combat, my sparse matrix becomes dense and crashes due to memory. What are my options?

A: scanpy.pp.combat requires a dense matrix. For large single-cell RNA-seq data, consider: 1) Subsetting: Correct only highly variable genes. 2) Alternative Methods: Use BBKNN for batch-aware neighborhood graph construction instead of full matrix correction. 3) Memory: Cast the matrix to np.float32 instead of the default float64 before correction, and call scanpy.pp.combat(adata, key='batch', inplace=False) if you want the corrected matrix returned rather than written into adata.X; manage any dtype conversion of the result separately.
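A quick back-of-the-envelope calculation shows why subsetting and a smaller dtype matter. The sketch below estimates the footprint of a single dense copy of the matrix; the dataset dimensions are hypothetical, and real peak usage will be higher because intermediate copies are made during correction.

```python
def dense_gib(n_cells, n_genes, bytes_per_value):
    """Size in GiB of one dense n_cells x n_genes matrix."""
    return n_cells * n_genes * bytes_per_value / 1024**3


# Hypothetical dataset: 200,000 cells by 20,000 genes
n_cells, n_genes = 200_000, 20_000

full_float64 = dense_gib(n_cells, n_genes, 8)  # float64 = 8 bytes per value
full_float32 = dense_gib(n_cells, n_genes, 4)  # float32 = 4 bytes per value
hvg_float32 = dense_gib(n_cells, 2_000, 4)     # 2,000 highly variable genes only

print(f"all genes, float64: {full_float64:.1f} GiB")
print(f"all genes, float32: {full_float32:.1f} GiB")
print(f"HVGs only, float32: {hvg_float32:.1f} GiB")
```

For these assumed dimensions, restricting to highly variable genes reduces the footprint by a factor of ten on top of the halving from float32.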

Q6: After integrating datasets with Harmony in R, my PCA plots look merged, but differential expression results seem dampened. Is this expected?

A: Yes, Harmony corrects embeddings (PCs) for batch, not the raw expression matrix. For DE, you must use the corrected embeddings as covariates in your model. Do not run DE on Harmony's corrected counts (if generated), as they are approximations. Your design matrix should include biological group and the corrected Harmony coordinates (e.g., Harmony 1, 2) as covariates to adjust for residual technical variation.

Table 1: Comparison of Batch Effect Correction Tools in R/Bioconductor

| Tool / Package | Primary Method | Data Type (Best For) | Key Parameter(s) | Output | Pros | Cons |

|---|---|---|---|---|---|---|

| limma::removeBatchEffect | Linear model adjustment | Microarray, bulk RNA-seq | design, batch, covariates | Corrected expression matrix | Fast, simple, transparent. | Not for direct DE input; removes batch-associated variation globally. |

| sva::ComBat | Empirical Bayes, location/scale adjustment | Bulk omics (n>5/batch) | mod, par.prior, mean.only | Batch-adjusted matrix | Robust to small batches, accounts for batch variance. | Risk of over-correction; assumes known batch labels and a location/scale batch effect. |

| Harmony (R package) | Iterative clustering & integration | Single-cell, bulk, any embedding | theta, lambda, max.iter.harmony | Integrated PCA/embedding | Powerful for complex batches, preserves biological structure. | Corrects embeddings, not counts; requires downstream DE adjustment. |

| RUVSeq::RUVg | Factor analysis using control genes | Any, when controls are known | k (factors), cIdx (control genes) | Corrected counts & factors | Uses negative controls, models unwanted variation. | Requires reliable control genes (e.g., housekeeping, spike-ins). |

Table 2: Performance Metrics on Simulated Bulk RNA-seq Data (n=6 samples/batch, 2 batches)

| Correction Method | % Variance Explained by Batch (PC1) | Mean Correlation of Pos. Controls (Within Group) | Median Absolute Deviation of Neg. Controls |

|---|---|---|---|

| Uncorrected | 65% | 0.72 | 0.41 |

| limma removeBatchEffect | 12% | 0.85 | 0.38 |

| ComBat (parametric) | 5% | 0.91 | 0.22 |

| ComBat (non-parametric) | 4% | 0.93 | 0.21 |

| RUVg (k=2) | 15% | 0.88 | 0.19 |

Note: Simulated data with a known true positive control gene set (n=100) and negative controls (n=500). Lower batch variance and higher positive control correlation indicate better performance.

Experimental Protocols

Protocol 1: Assessing Batch Effect with PCA Before Correction

Purpose: To diagnose the presence and magnitude of batch effects.

- Data Input: Start with a normalized expression matrix (e.g., log2-CPM for RNA-seq).

- Transpose & Scale: Transpose the matrix so that samples are rows and genes are columns. Optionally, scale genes (scale.=TRUE in R's prcomp).

- Perform PCA: Run prcomp(your_matrix, center=TRUE) in R or sklearn.decomposition.PCA in Python.

- Variance Calculation: Extract the percentage of variance explained by each principal component (PC) from the sdev output (each sdev squared, divided by the sum of all squared sdev values).

- Visual Inspection: Plot PC1 vs. PC2, coloring points by batch and by biological condition. A strong clustering by batch indicates a significant batch effect.

- Quantify: Calculate the proportion of variance in the first few PCs attributable to batch using ANOVA.
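The last two calculations in this protocol can be sketched in plain Python. `variance_explained` converts PCA standard deviations into proportions of variance, and `eta_squared` is the one-way ANOVA ratio of between-batch to total sum of squares for the scores of a single PC. Both function names and the example numbers are hypothetical; in practice the inputs would come from prcomp or sklearn.

```python
def variance_explained(sdev):
    """Proportion of variance per PC from the PCA standard deviations."""
    variances = [s * s for s in sdev]
    total = sum(variances)
    return [v / total for v in variances]


def eta_squared(scores, batch):
    """Share of one PC's variance that lies between batches (ANOVA R^2)."""
    grand_mean = sum(scores) / len(scores)
    ss_total = sum((s - grand_mean) ** 2 for s in scores)
    if ss_total == 0:
        return 0.0  # constant scores: nothing to attribute

    by_batch = {}
    for score, label in zip(scores, batch):
        by_batch.setdefault(label, []).append(score)

    ss_between = sum(
        len(vals) * (sum(vals) / len(vals) - grand_mean) ** 2
        for vals in by_batch.values()
    )
    return ss_between / ss_total


# Hypothetical PCA output for six samples in two batches
sdev = [3.0, 2.0, 1.0]
pc1_scores = [-2.1, -1.9, -2.0, 2.0, 1.9, 2.1]
batch = ["b1", "b1", "b1", "b2", "b2", "b2"]

proportions = variance_explained(sdev)
pc1_batch_share = eta_squared(pc1_scores, batch)
print([round(p, 3) for p in proportions])
print(round(pc1_batch_share, 3))
```

A value near 1 for a leading PC means that component is almost entirely a batch axis; repeating the calculation with the biological condition as the grouping variable gives the matching figure for signal.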

Protocol 2: Standard ComBat Application for Bulk RNA-seq (R/Bioconductor)

Purpose: To remove batch effects using an empirical Bayes framework.

- Prepare Data: Load a SummarizedExperiment object (exprs_data) with sample annotations in colData; the assay should hold normalized, log-scale expression values. For an ExpressionSet, use exprs() and pData() in place of assay() and colData().

- Define Model: Specify your model of interest, typically your primary biological condition. mod <- model.matrix(~disease_status, data=colData(exprs_data)).

- Define Batch: Create a batch vector. batch <- colData(exprs_data)$batch_lab.

- Run ComBat: Execute the correction: corrected_data <- ComBat(dat=assay(exprs_data), batch=batch, mod=mod, par.prior=TRUE, prior.plots=FALSE).

- Validate: Repeat Protocol 1's PCA on the corrected_data. Batch clustering should be reduced. Check expression of known positive controls across batches.

- Proceed to DE: Use the corrected_data as input for limma with a design that does NOT include batch. DESeq2 and edgeR require raw counts, so for those tools keep the uncorrected counts and add batch to the design formula, or use ComBat-seq for count-level correction.

Protocol 3: Batch Integration with Harmony for Single-Cell Data (Python)

Purpose: To integrate single-cell embeddings across batches/datasets.

- Preprocess: Use scanpy to normalize, log-transform, and calculate highly variable genes and PCA: sc.pp.pca(adata).

- Run Harmony: Apply Harmony on the PCA coordinates: sc.external.pp.harmony_integrate(adata, key='batch', basis='X_pca', max_iter_harmony=20).

- Access Output: The corrected embedding is stored in adata.obsm['X_pca_harmony'].

- Neighborhood & UMAP: Compute the neighbor graph and UMAP using the Harmony-corrected PCs: sc.pp.neighbors(adata, use_rep='X_pca_harmony') then sc.tl.umap(adata).

- Downstream Analysis: For clustering, use sc.tl.leiden on the corrected graph. For pseudo-bulk DE, include harmony components as covariates in the statistical model.

Visualizations

Diagram 1: Batch Effect Correction Workflow Decision Tree

Diagram 2: ComBat's Empirical Bayes Adjustment Mechanism

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Batch Effect Correction Experiments

| Item / Reagent | Function in Context | Example / Specification |

|---|---|---|

| Reference RNA Samples | Serves as an inter-batch positive control. Spike into each batch to assess technical consistency post-correction. | Universal Human Reference RNA (UHRR), External RNA Controls Consortium (ERCC) spike-ins for RNA-seq. |

| Housekeeping Gene Panel | Acts as assumed stable negative controls for methods like RUV. Used to estimate unwanted variation. | GAPDH, ACTB, RPLP0, PGK1. Must be validated as stable in your specific experiment. |

| Benchmarking Synthetic Dataset | Provides ground truth for validating correction algorithms. Contains known differential expression and simulated batch effects. | splatter R package, scRNAseqBenchmark datasets. |

| Versioned Analysis Container | Ensures computational reproducibility of the correction pipeline across users and time. | Docker or Singularity container with specific versions of R (≥4.2), Bioconductor (≥3.16), Python (≥3.9), and all packages. |

| High-Performance Computing (HPC) Resources | Enables running memory-intensive corrections (e.g., on full single-cell matrices) and permutation tests. | Access to cluster with ≥64GB RAM and multi-core nodes. |

| Batch Metadata Tracker | A structured spreadsheet (CSV) to meticulously record all potential batch covariates (sequencing lane, date, operator, reagent lot). | Required columns: SampleID, BiologicalCondition, BatchID, Date, LibraryKit_Lot, Sequencer. |
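A tracker is only useful if it is checked before analysis starts. The following sketch uses Python's standard csv module to confirm that the required columns listed in the table are present, flag duplicate sample IDs and blank fields, and tabulate how samples are distributed across batch and condition. The function name `check_metadata` and every value in the example sheet are hypothetical.

```python
import csv
import io
from collections import Counter

REQUIRED_COLUMNS = [
    "SampleID", "BiologicalCondition", "BatchID",
    "Date", "LibraryKit_Lot", "Sequencer",
]


def check_metadata(csv_text):
    """Return (duplicate sample IDs, columns with blanks, batch x condition counts)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    header = reader.fieldnames or []
    missing = [col for col in REQUIRED_COLUMNS if col not in header]
    if missing:
        raise ValueError(f"missing required columns: {missing}")

    rows = list(reader)
    id_counts = Counter(row["SampleID"] for row in rows)
    duplicates = sorted(sid for sid, n in id_counts.items() if n > 1)
    blanks = sorted({
        col for row in rows for col in REQUIRED_COLUMNS
        if not (row[col] or "").strip()
    })
    layout = Counter((row["BatchID"], row["BiologicalCondition"]) for row in rows)
    return duplicates, blanks, layout


# Hypothetical tracker contents; S4 is missing its reagent lot.
sheet = """SampleID,BiologicalCondition,BatchID,Date,LibraryKit_Lot,Sequencer
S1,control,B1,2024-01-10,LOT42,SeqA
S2,treated,B1,2024-01-10,LOT42,SeqA
S3,control,B2,2024-01-17,LOT43,SeqA
S4,treated,B2,2024-01-17,,SeqA
"""

duplicates, blanks, layout = check_metadata(sheet)
print("duplicate IDs:", duplicates)
print("columns with blanks:", blanks)
for (batch_id, condition), n in sorted(layout.items()):
    print(batch_id, condition, n)
```

A batch and condition combination that never appears in the tabulation is an early warning of confounding, which is far cheaper to catch at this stage than after sequencing.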

Integrating Correction into Standard DE Pipelines (e.g., DESeq2, edgeR)

FAQs and Troubleshooting Guides

Q1: Why is my batch-corrected data producing inflated significance (overly small p-values) or false positives in DESeq2?

A: This often occurs when batch correction (e.g., using ComBat or removeBatchEffect) is applied before running DESeq2. These correction methods alter the variance structure of the data, which DESeq2’s negative binomial model relies on. The solution is to incorporate the batch variable as a covariate in the DESeq2 design formula (e.g., ~ batch + condition) instead of pre-correcting the counts.

Q2: How do I properly incorporate a batch factor into the edgeR model?

A: In edgeR, add the batch variable to the design matrix. After creating a DGEList object and calculating normalization factors, define your design: design <- model.matrix(~ batch + group). Then proceed with estimating dispersions (estimateDisp) and fitting the model (glmQLFit, or glmFit for the likelihood-ratio pipeline). Do not use batch-corrected log-CPM values as input; edgeR models the raw counts.

Q3: Can I use sva or RUV with DESeq2/edgeR without breaking the statistical model?

A: Yes, but the surrogate variables (SVs) from sva or factors from RUV must be added as covariates in the design matrix. For DESeq2: dds <- DESeqDataSetFromMatrix(countData, colData, design = ~ SV1 + SV2 + condition). For edgeR, include them in the model.matrix. Importantly, do not subtract their effects from the count data beforehand.

Q4: My experiment has a paired design (e.g., patient tumor/normal) and a batch effect. How do I model this?