From Raw Reads to Reliable Inputs: A Comprehensive Guide to Preprocessing CLIP-seq Data for CNN Models in Biomedical Research

Abstract

This article provides a complete, step-by-step guide for researchers and bioinformaticians preparing CLIP-seq (Cross-Linking and Immunoprecipitation followed by sequencing) data for training Convolutional Neural Networks (CNNs). We cover foundational concepts of CLIP-seq technology and its relevance to drug target discovery, detail a modern preprocessing pipeline from FASTQ to formatted tensors, address common pitfalls and optimization strategies for model performance, and discuss methods for validating preprocessed data quality and comparing preprocessing tools. This guide is essential for ensuring that high-quality, biologically meaningful data fuels downstream deep learning applications in genomics and therapeutics development.

Understanding CLIP-seq Data: The Foundation for Accurate CNN Modeling in Genomics

What is CLIP-seq? Core Principles and Biological Significance for RBPs.

CLIP-seq (Crosslinking and Immunoprecipitation followed by sequencing) is a high-throughput method for identifying RNA-protein interaction sites at nucleotide resolution. It is the gold standard for defining the binding landscape of RNA-binding proteins (RBPs), which are critical regulators of post-transcriptional gene expression. This technical guide details its core principles, protocols, and biological significance, framed within the context of preprocessing CLIP-seq data for training Convolutional Neural Networks (CNNs) to predict RBP binding motifs and functions.

Core Principles

CLIP-seq combines ultraviolet (UV) crosslinking, immunoprecipitation (IP), and next-generation sequencing (NGS). UV light (254 nm) creates covalent bonds between RBPs and RNA only where the two are in direct contact ("zero-distance" crosslinking), "freezing" transient interactions. Subsequent rigorous purification, including RNA digestion and size selection, yields protein-bound RNA fragments for sequencing. Mapping these fragments identifies RBP binding sites across the transcriptome.

Detailed Experimental Protocol

Standard CLIP-seq Workflow

- In Vivo Crosslinking: Live cells or tissues are irradiated with UV-C light (254 nm, 150-400 mJ/cm²).

- Cell Lysis: Cells are lysed in stringent RIPA buffer, and RNAs are partially digested with RNase I to leave ~50-100 nucleotide fragments protected by the bound RBP.

- Immunoprecipitation: A specific antibody against the target RBP is used to purify the RNA-protein complexes. Beads (e.g., Protein A/G) facilitate pulldown.

- RNA Linker Ligation & Radiolabeling: A 3' RNA adapter is ligated to the RNA fragment. The RNA 5' end is then labeled with ³²P using T4 Polynucleotide Kinase for visualization.

- Membrane Transfer & Complex Isolation: Complexes are resolved by SDS-PAGE, transferred to a nitrocellulose membrane, and the region corresponding to the RBP's molecular weight is excised.

- Proteinase K Digestion & RNA Isolation: Proteinase K digests the protein, releasing the crosslinked RNA fragment.

- Reverse Transcription & cDNA Library Construction: RNA is reverse-transcribed; a 5' adapter is then added (by ligation or, in some protocols, by template switching), and the cDNA is PCR-amplified for sequencing.

Key Variants

- HITS-CLIP (High-Throughput Sequencing CLIP): The standard protocol described above.

- PAR-CLIP (Photoactivatable-Ribonucleoside-Enhanced CLIP): Incorporates nucleoside analogs (4-thiouridine) during cell culture, which upon UV crosslinking at 365 nm induces T-to-C transitions in sequencing reads, providing precise binding site identification.

- iCLIP (Individual-nucleotide resolution CLIP): Uses a modified linker and circularization to capture cDNAs that truncate at the crosslink site, pinpointing the interaction to a single nucleotide.

- eCLIP (Enhanced CLIP): Incorporates size-matched input controls and improved ligation steps to reduce adapter-dimer artifacts, significantly enhancing specificity.
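The T-to-C signature that PAR-CLIP relies on can be illustrated by comparing an aligned read with the reference. The following is a minimal sketch, assuming an ungapped alignment with both sequences given on the transcript strand; the function name `count_t_to_c` is hypothetical and not part of any CLIP tool.

```python
def count_t_to_c(ref_seq, read_seq):
    """Return 0-based offsets where the reference has T and the read has C.

    Assumes the read is aligned without gaps and that both strings are on
    the transcript (sense) strand, so a crosslinked 4SU shows up as T->C.
    """
    if len(ref_seq) != len(read_seq):
        raise ValueError("ref_seq and read_seq must have equal length")
    return [
        i
        for i, (r, q) in enumerate(zip(ref_seq.upper(), read_seq.upper()))
        if r == "T" and q == "C"
    ]


ref = "GATTACATTG"
read = "GATCACATTG"
conversions = count_t_to_c(ref, read)
print(conversions)  # offsets of candidate crosslink positions
```

In practice, conversions would be pooled across many reads before a position is treated as a crosslink site, since a single mismatch may also be a sequencing error or SNP.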

Biological Significance for RBPs

CLIP-seq has revolutionized the understanding of RBP function by providing genome-wide maps of their binding sites. This reveals their roles in:

- Alternative Splicing Regulation: Identifying exonic and intronic splicing enhancers/silencers.

- RNA Stability & Decay: Mapping binding in 3'UTRs associated with miRNA targeting or AU-rich elements.

- RNA Localization & Translation: Identifying zipcode sequences in transcripts for subcellular localization.

- Non-coding RNA Function: Characterizing protein interactions with lncRNAs and miRNAs.

- Disease Mechanisms: Discovering aberrant RBP binding in conditions like cancer (e.g., ELAVL1), neurodegeneration (e.g., TDP-43, FUS), and genetic disorders.

CLIP-seq Data Preprocessing for CNN Training

For CNN-based motif discovery and binding prediction, raw CLIP-seq data requires specialized preprocessing to isolate high-confidence signals.

- Data Acquisition: Download raw FASTQ files from repositories like GEO (e.g., GSEXXXXX).

- Quality Control & Trimming: Use FastQC and Trimmomatic to remove low-quality bases and adapter sequences.

- Alignment: Map reads to the reference genome (e.g., hg38) using STAR or HISAT2, allowing for mismatches (critical for PAR-CLIP data).

- PCR Duplicate Removal: Use tools like `UMI-tools` (for UMI-based protocols) or `picard MarkDuplicates` to mitigate amplification bias.

- Peak Calling: Identify significant binding sites ("peaks") using specialized callers (e.g., `CLIPper`, `Piranha`) that model the read-count distribution of CLIP data.

- Negative Set Generation: Create matched input/control sequences or use genomic background sampling to train CNNs for discrimination.

- Sequence Extraction & Encoding: Extract peak sequences and flanking regions, converting them into one-hot encoded or k-mer frequency matrices as CNN input tensors.
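The final encoding step can be sketched in a few lines. This is an illustrative example, not a fixed convention: the A, C, G, T channel order, the mapping of U to T, and the all-zero row for ambiguous bases are assumptions.

```python
ALPHABET = "ACGT"  # channel order is an assumption; U is treated as T


def one_hot_encode(seq):
    """Encode a nucleotide string as a list of [A, C, G, T] rows.

    Ambiguous bases such as N become an all-zero row.
    """
    index = {base: i for i, base in enumerate(ALPHABET)}
    rows = []
    for base in seq.upper().replace("U", "T"):
        row = [0.0] * len(ALPHABET)
        if base in index:
            row[index[base]] = 1.0
        rows.append(row)
    return rows


encoded = one_hot_encode("ACGUN")
for row in encoded:
    print(row)
```

Stacking the encoded windows for all peaks gives a tensor of shape (N_samples, Sequence_Length, 4), provided every window has the same length.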

Table 1: Comparison of Major CLIP-seq Variants

| Parameter | HITS-CLIP | PAR-CLIP | iCLIP | eCLIP |

|---|---|---|---|---|

| Crosslink Type | UV-C (254 nm) | UV-A (365 nm) + 4SU | UV-C (254 nm) | UV-C (254 nm) |

| Key Identifier | Read clusters; crosslink-induced mutations | T-to-C transitions | cDNA truncation at crosslink site | Size-matched input control |

| Resolution | ~30-60 nt | Single-nucleotide (via mutations) | Single-nucleotide (via truncations) | ~30-60 nt |

| Primary Advantage | Robust, widely used | Highest precision mapping | Single-nucleotide resolution, captures crosslink site | High specificity, reduced background |

| Challenge | Ambiguity in exact site | Requires 4SU incorporation | Complex library prep | More steps required |

Table 2: Typical CLIP-seq Output Metrics from a Successful Experiment

| Metric | Typical Range/Value | Description |

|---|---|---|

| Reads Post-QC | 20-50 million | High-quality sequencing reads for analysis. |

| Unique Mapping Rate | 60-85% | Percentage of reads mapping uniquely to the genome. |

| Number of Peaks | 10,000 - 50,000 | High-confidence binding sites called. |

| Peak Distribution | ~40% CDS, ~35% 3'UTR | Common distribution for many mRNA-binding RBPs. |

| Motif Enrichment (E-value) | < 1e-10 | Statistical significance of discovered sequence motif. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for CLIP-seq Experiments

| Item | Function & Description |

|---|---|

| UV Crosslinker (254 nm) | Creates covalent bonds between RBP and RNA at direct contact points. Critical for "freezing" interactions. |

| RNase I | Partially digests unprotected RNA, leaving protein-bound fragments for precise binding site mapping. |

| Magnetic Beads (Protein A/G) | Coupled with specific antibodies to immunoprecipitate the target RBP-RNA complex. |

| T4 PNK (kinase and 3' phosphatase activities) | Radiolabels RNA 5' ends for visualization (kinase activity) and removes 3' phosphates to allow adapter ligation (phosphatase activity). |

| T4 RNA Ligase 1/2, truncated | Catalyzes the ligation of pre-adenylated DNA adapters to RNA 3' ends, a key step in library construction. |

| Proteinase K | Digests the protein component of the isolated complex to release the crosslinked RNA fragment for library prep. |

| Template-Switching Reverse Transcriptase (e.g., SMARTScribe) | Enables efficient cDNA synthesis from fragmented, adapter-ligated RNA, often used in iCLIP/eCLIP. |

| UMI (Unique Molecular Identifier) Adapters | Short random nucleotide sequences added to fragments pre-amplification to enable accurate PCR duplicate removal. |
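The idea behind UMI-based duplicate removal (last row of the table) can be sketched as follows. This is a simplified illustration that collapses exact matches only; the record layout (dicts with `chrom`, `start`, `strand`, `umi`) is an assumption, and dedicated tools additionally merge UMIs that differ by sequencing errors.

```python
def dedup_by_umi(reads):
    """Keep one read per (chrom, start, strand, umi) combination.

    `reads` is an iterable of dicts with those four keys plus "name".
    The first read seen for each combination is retained.
    """
    seen = set()
    kept = []
    for read in reads:
        key = (read["chrom"], read["start"], read["strand"], read["umi"])
        if key not in seen:
            seen.add(key)
            kept.append(read)
    return kept


reads = [
    {"name": "r1", "chrom": "chr1", "start": 100, "strand": "+", "umi": "ACGT"},
    # Same position and same UMI as r1: treated as a PCR duplicate.
    {"name": "r2", "chrom": "chr1", "start": 100, "strand": "+", "umi": "ACGT"},
    # Same position but a different UMI: an independent molecule, kept.
    {"name": "r3", "chrom": "chr1", "start": 100, "strand": "+", "umi": "TTAG"},
]
unique_reads = dedup_by_umi(reads)
print([r["name"] for r in unique_reads])
```

Without UMIs, r3 would be indistinguishable from a duplicate, which is why position-only deduplication tends to discard genuine signal at strongly bound sites.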

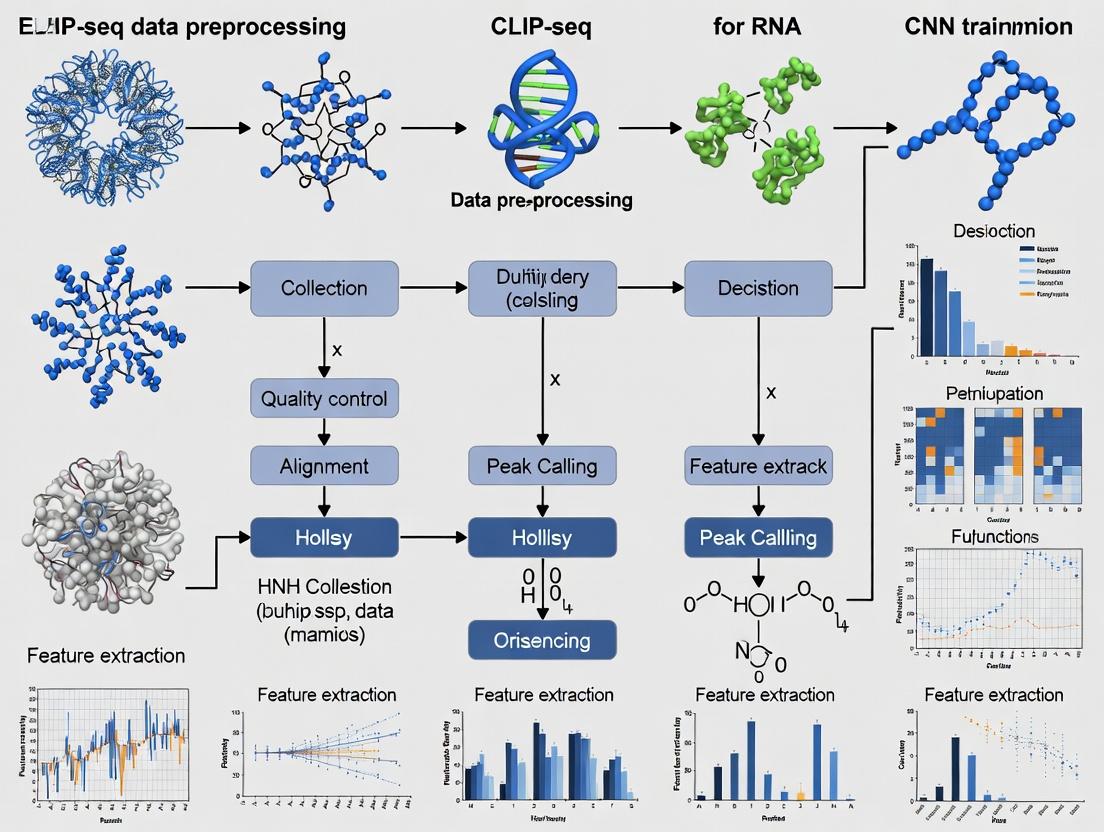

Visualizations

Title: CLIP-seq Core Experimental Workflow

Title: CLIP-seq Data Preprocessing for CNN Training

Title: Biological Significance of CLIP-seq for RBP Function

This technical guide details the transformation of raw sequencing data into interpretable protein-RNA interaction maps, a critical preprocessing pipeline for downstream Convolutional Neural Network (CNN) training. Within the broader thesis of optimizing CLIP-seq data for deep learning applications, consistent and biologically accurate data processing is paramount. High-quality, standardized interaction maps serve as the foundational training labels for CNNs aimed at predicting binding motifs, identifying novel interactions, or diagnosing RNA-centric disease mechanisms.

Core Data Processing Workflow & Quantitative Benchmarks

The journey from sequencer output to a high-confidence interaction map involves discrete, quantifiable steps. The table below summarizes key metrics and outputs for each stage, critical for evaluating data quality before CNN training.

Table 1: Key Data Outputs and Quality Metrics Across the CLIP-seq Pipeline

| Processing Stage | Primary Input | Key Output | Typical Yield/Volume | Critical Quality Metric | Target Threshold |

|---|---|---|---|---|---|

| 1. Raw Sequencing | Library Fragments | FASTQ Files | 20-100 million reads per sample | Q-score (Phred) | ≥30 for >80% of bases |

| 2. Preprocessing & Adapter Trimming | FASTQ Files | Trimmed FASTQ | 15-95 million reads (75-95% retention) | % Reads with Adapter | <5% post-trimming |

| 3. Genomic Alignment | Trimmed FASTQ | BAM/SAM File | 10-90 million aligned reads (60-85% alignment rate) | Uniquely Mapping Reads | >70% of aligned reads |

| 4. CLIP-Specific Processing (Duplicate Removal, Crosslink Site Refinement) | Aligned BAM | Deduplicated BAM, BED Files | 2-20 million unique crosslink events | PCR Duplicate Rate | <20% (varies by protocol) |

| 5. Peak Calling (Interaction Map Generation) | Crosslink Site BED | Peak BED/GRanges | 5,000 - 50,000 high-confidence peaks | False Discovery Rate (FDR) | FDR ≤ 0.05 |

| 6. Final Interaction Map | Called Peaks | Normalized BigWig, BED, or Matrix File | Genome-wide signal track | Signal-to-Noise Ratio (Peak vs. Flanking) | ≥ 5:1 |
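The target thresholds in the table lend themselves to an automated check before any data reaches CNN training. The sketch below copies the thresholds from the table; the metric names and the dictionary layout are hypothetical and would need to match whatever your QC reports actually produce.

```python
import operator

# Thresholds copied from the table above; the metric names are hypothetical.
THRESHOLDS = {
    "frac_bases_q30": (operator.gt, 0.80),
    "frac_reads_with_adapter": (operator.lt, 0.05),
    "frac_uniquely_mapped": (operator.gt, 0.70),
    "pcr_duplicate_rate": (operator.lt, 0.20),
    "peak_fdr": (operator.le, 0.05),
    "signal_to_noise": (operator.ge, 5.0),
}


def failed_metrics(metrics):
    """Return the names of supplied metrics that miss their threshold."""
    return sorted(
        name
        for name, value in metrics.items()
        if name in THRESHOLDS
        and not THRESHOLDS[name][0](value, THRESHOLDS[name][1])
    )


sample = {"frac_bases_q30": 0.91, "pcr_duplicate_rate": 0.35, "peak_fdr": 0.01}
print(failed_metrics(sample))
```

Because the duplicate-rate threshold varies by protocol, it is reasonable to treat a failure there as a prompt for inspection rather than an automatic rejection.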

Detailed Experimental Protocols for Key Steps

Protocol 3.1: CLIP-seq Library Preparation (Adapted from eCLIP) Objective: Generate a sequencing library enriched for protein-bound RNA fragments.

- In Vivo Crosslinking: Cultured cells are UV-irradiated (254 nm, 400 mJ/cm²) to covalently link RNA-binding proteins (RBPs) to RNA.

- Cell Lysis and Partial RNase Digestion: Lyse cells in stringent RIPA buffer. Treat with a titrated amount of RNase I to fragment bound RNA (~50-100 nt fragments).

- Immunoprecipitation (IP): Incubate lysate with antibody-coated magnetic beads targeting the RBP of interest. Wash under high-stringency conditions.

- 3' Dephosphorylation and Adapter Ligation: Treat beads with T4 PNK (no ATP) to repair 3' ends. Ligate a pre-adenylated DNA adapter to the RNA 3' end.

- 5' Radiolabeling & Transfer: Label the RNA 5' end with [γ-³²P]ATP using T4 PNK. Resolve the complexes by SDS-PAGE and transfer them to a nitrocellulose membrane. Expose the membrane to film and excise the region corresponding to the RBP-RNA complex.

- Proteinase K Digestion and RNA Extraction: Digest proteins on the membrane with Proteinase K. Extract and purify RNA.

- Reverse Transcription and cDNA Circularization: Reverse transcribe using a primer complementary to the 3' adapter. Circularize the cDNA with Circligase.

- PCR Amplification: Amplify with indexed primers for multiplexing. Clean up and quantify the final library.

Protocol 3.2: Computational Peak Calling with PEAKachu Objective: Identify statistically significant clusters of crosslink sites (peaks) from aligned reads.

- Input Preparation: Use the deduplicated BAM file containing unique crosslink sites (read start + 1 offset for most CLIP variants).

- Model Training: Run `PEAKachu train` on a sample BAM and a corresponding background BAM (e.g., size-matched input or IgG control) to learn model parameters: `peakachu train -t treatment.bam -c control.bam -o model.pkl`.

- Peak Prediction: Run `PEAKachu predict` genome-wide using the trained model: `peakachu predict -i treatment.bam -m model.pkl -o peaks.bed -s hg38`.

- Peak Filtering: Filter output BED file by the assigned confidence score (e.g., `score ≥ 0.95`) and optionally by a minimum fold-enrichment over background (e.g., `fold-enrichment ≥ 8`).
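The filtering step can be sketched independently of any particular peak caller. The example assumes a tab-delimited, BED-like file with the confidence score in column 5 and fold-enrichment in column 7; this column layout is a hypothetical choice for illustration and should be checked against the actual output of your peak caller.

```python
def filter_peaks(lines, min_score=0.95, min_fold=8.0, score_col=4, fold_col=6):
    """Return the split fields of peaks passing both thresholds.

    Column indices are 0-based and are assumptions about the file layout.
    """
    kept = []
    for line in lines:
        if not line.strip() or line.startswith(("#", "track", "browser")):
            continue  # skip blank lines and header lines
        fields = line.rstrip("\n").split("\t")
        score = float(fields[score_col])
        fold = float(fields[fold_col])
        if score >= min_score and fold >= min_fold:
            kept.append(fields)
    return kept


example = [
    "chr1\t100\t150\tpeak1\t0.99\t+\t12.0",
    "chr1\t400\t460\tpeak2\t0.97\t-\t3.5",   # fails fold-enrichment
    "chr2\t900\t940\tpeak3\t0.80\t+\t20.0",  # fails confidence score
]
passing = filter_peaks(example)
print([fields[3] for fields in passing])
```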

Visualization of Workflows and Relationships

Title: CLIP-seq Data Pipeline for CNN Training

Title: Logic of Peak Calling for Interaction Maps

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for CLIP-seq and Interaction Mapping

| Item | Function | Example Product/Catalog |

|---|---|---|

| UV Crosslinker | Creates covalent bonds between RBP and RNA in vivo. | Spectrolinker XL-1000 (254nm) |

| RNase I | Fragments RNA bound to the protein to define binding footprint. | Thermo Fisher AM2294 |

| Magnetic Protein A/G Beads | Captures antibody-RBP-RNA complexes during immunoprecipitation. | Pierce Protein A/G Magnetic Beads |

| Pre-adenylated 3' Adapter | Enables ligation to RNA 3' end without ATP, reducing adapter dimer formation. | Truncated TruSeq Small RNA Adapter |

| T4 PNK (with/without ATP) | For 3' end repair (no ATP) and 5' radiolabeling (with [γ-³²P]ATP). | NEB M0201/M0236 |

| Proteinase K | Digests the RBP to release crosslinked RNA fragments for library construction. | Invitrogen 25530049 |

| High-Fidelity PCR Mix | Amplifies final cDNA library with minimal bias and errors. | KAPA HiFi HotStart ReadyMix (KK2602) |

| Size Selection Beads | Precisely selects library fragments in the desired size range (e.g., 150-250 bp). | SPRIselect (Beckman Coulter B23318) |

| Peak Calling Software | Computationally identifies significant binding sites from aligned data. | PEAKachu, CLIPper, PARalyzer |

Why CNNs for CLIP-seq Analysis? Advantages for Motif and Peak Detection.

The systematic preprocessing of CLIP-seq (Crosslinking and Immunoprecipitation followed by sequencing) data into formats amenable for Convolutional Neural Network (CNN) training is a critical step in modern computational biology. This whitepaper, framed within a broader thesis on CLIP-seq data preprocessing for CNN research, details why CNNs have become a preeminent tool for analyzing such data. We focus on their intrinsic advantages for the dual core tasks of cis-regulatory motif discovery and protein-RNA binding peak detection, moving beyond traditional statistical and position-weight matrix (PWM) based methods.

The Case for CNNs in CLIP-seq Analysis

CLIP-seq data presents a complex, high-dimensional signal across the genome. Traditional peak-calling tools (e.g., PEAKachu, CLIPper) often rely on heuristic thresholds and struggle with variable signal-to-noise ratios and ambiguous binding landscapes. CNN architectures are uniquely suited to this challenge.

Core Advantages:

- Hierarchical Feature Learning: CNNs autonomously learn a hierarchy of features from raw sequence data—from simple k-mers and nucleotide patterns in early layers to complex composite motifs and spatial relationships in deeper layers. This eliminates the need for manual feature engineering.

- Translation Invariance: Convolutional filters respond to a motif wherever it occurs in the input window, and pooling makes the output largely insensitive to its exact position, a critical property for motif scanning.

- Capacity for Integrative Learning: CNNs can be trained on multi-modal input, including not only nucleotide sequence (one-hot encoded) but also concurrent data tracks such as RNA secondary structure propensity, conservation scores, or regional read density, providing a more holistic binding model.

- Superior Discrimination: Trained end-to-end, CNNs learn to distinguish true binding sites from background genomic sequence with high accuracy, often outperforming methods based on PWMs or generalized linear models.
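The position-independence property can be demonstrated with a toy example. The sketch below stands in for a single convolutional filter followed by global max pooling: counting matches against a motif string is equivalent to convolving a one-hot sequence with a one-hot filter. The motif and sequences are made up for illustration.

```python
def conv_max_pool(seq, motif):
    """Slide `motif` along `seq`, score each window, and return the maximum.

    The per-window score is the number of matching positions, which equals
    a one-hot convolution with a one-hot filter; max() plays the role of
    global max pooling.
    """
    k = len(motif)
    scores = [
        sum(1 for a, b in zip(seq[i:i + k], motif) if a == b)
        for i in range(len(seq) - k + 1)
    ]
    return max(scores)


motif = "TGCATG"
early = motif + "A" * 14   # motif at the start of the window
late = "A" * 14 + motif    # motif at the end of the window
absent = "A" * 20          # no motif

print(conv_max_pool(early, motif), conv_max_pool(late, motif), conv_max_pool(absent, motif))
```

The pooled score is identical for the early and late placements, while the sequence without the motif scores much lower. A trained CNN learns the filter weights rather than being given the motif.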

Quantitative Performance Comparison

The superiority of CNN-based approaches is evidenced in recent benchmarking studies. The following table summarizes key performance metrics comparing representative CNN models (e.g., DeepBind, DeepCLIP) against traditional methods on held-out test sets from eCLIP experiments targeting RBPs like ELAVL1 (HuR) and IGF2BP1.

Table 1: Performance Comparison of Methods for CLIP-seq Peak & Motif Detection

| Method Category | Example Tool | AUC-ROC (Peak Detection) | Motif Recovery (TomTom p-value vs. known motifs) | Key Limitation |

|---|---|---|---|---|

| Traditional Statistical | CLIPper, PEAKachu | 0.82 - 0.88 | Moderate to Low (p > 1e-5) | Heuristic thresholds, no de novo motif learning. |

| PWM / Discriminative | DREME, MEME-ChIP | N/A | High (p < 1e-10) | Treats positions independently; poor at peak calling. |

| CNN-Based (End-to-End) | DeepCLIP, DanQ | 0.92 - 0.97 | Highest (p < 1e-15) | Requires large, high-quality training sets; potential for overfitting. |

Detailed Experimental Protocol for CNN Training on CLIP-seq Data

This protocol outlines the core methodology for preprocessing CLIP-seq data and training a CNN for joint peak and motif detection, as cited in current literature.

A. Data Acquisition and Preprocessing:

- Dataset Curation: Download aligned BAM files for your RBP of interest (e.g., from the ENCODE eCLIP portal). Include matched size-matched input (SMInput) control samples.

- Peak Calling (Initial Training Set): Use a conventional tool (e.g., CLIPper) with relaxed thresholds to generate an initial set of positive genomic regions. Manually review a subset via IGV for quality assessment.

- Sequence Extraction: Extract genomic sequences (± 150 bp around peak summits for positive class). Generate a matched negative set from regions lacking signal, controlling for GC content and mappability.

- Sequence Encoding: Convert sequences to a 4-channel (A, C, G, T) one-hot encoded matrix of dimensions (N_samples, Sequence_Length, 4). Optionally add additional channels (e.g., conservation, structure).

- Dataset Splitting: Partition data into training (70%), validation (15%), and held-out test (15%) sets, ensuring no chromosomal overlap to prevent data leakage.
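A chromosome-level split, as required in the last step, can be sketched as below. Which chromosomes are held out is an assumption made for illustration (`chr8` for validation, `chr9` for testing); note that splitting by chromosome only approximates the 70/15/15 proportions, so the resulting set sizes should be checked.

```python
def split_by_chromosome(regions, val_chroms=("chr8",), test_chroms=("chr9",)):
    """Assign regions to train/val/test so no chromosome spans two sets.

    `regions` is a list of (chrom, start, end, label) tuples. The default
    held-out chromosomes are illustrative, not a recommendation.
    """
    splits = {"train": [], "val": [], "test": []}
    for region in regions:
        chrom = region[0]
        if chrom in test_chroms:
            splits["test"].append(region)
        elif chrom in val_chroms:
            splits["val"].append(region)
        else:
            splits["train"].append(region)
    return splits


regions = [
    ("chr1", 100, 401, 1),
    ("chr1", 5000, 5301, 0),
    ("chr8", 50, 351, 0),
    ("chr9", 10, 311, 1),
]
splits = split_by_chromosome(regions)
print({name: len(items) for name, items in splits.items()})
```

Holding out whole chromosomes prevents overlapping or neighboring windows from the same locus from appearing in both training and test sets, which a random split cannot guarantee.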

B. CNN Architecture and Training:

- Model Design: Implement a sequential model:

  - Input Layer: Accepts (Sequence_Length, 4) tensor.

  - Convolutional Blocks: 2-3 blocks, each with: Conv1D layer (128 filters, kernel size=19 for motif detection), ReLU activation, BatchNormalization, MaxPooling1D (pool size=4).

  - Dense Classifier: Flatten layer, followed by Dense layers (e.g., 256 units, ReLU) with Dropout (rate=0.5) for regularization.

  - Output Layer: Dense layer (1 unit, sigmoid activation) for binary classification (binding site vs. not).

- Training Configuration: Use Adam optimizer (lr=1e-4), binary cross-entropy loss. Train for 50-100 epochs with batch size=64, using the validation set for early stopping.

- Motif Extraction: Apply in silico mutagenesis, direct visualization of the first convolutional layer's filters, or attribution-based tools (e.g., TF-MoDISco) to extract learned de novo motifs.
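Before implementing the architecture, it is worth checking that the stated hyperparameters fit the input length. The sketch below tracks the sequence-axis length through each Conv1D + MaxPooling1D block for a 301-nt input (± 150 bp around a summit). The text does not say whether "valid" or "same" convolution padding is intended, so both are shown as assumptions; stride-1 convolution and non-overlapping pooling are also assumed.

```python
def lengths_through_blocks(seq_len, n_blocks, kernel=19, pool=4, padding="valid"):
    """Return the sequence length at the input and after each conv+pool block.

    Assumes stride-1 convolution and non-overlapping max pooling that
    discards any incomplete final window.
    """
    lengths = [seq_len]
    for _ in range(n_blocks):
        conv_len = seq_len if padding == "same" else seq_len - kernel + 1
        if conv_len < 1:
            raise ValueError(f"kernel {kernel} does not fit length {seq_len}")
        seq_len = conv_len // pool
        if seq_len < 1:
            raise ValueError(f"pool {pool} does not fit length {conv_len}")
        lengths.append(seq_len)
    return lengths


same_lengths = lengths_through_blocks(301, 3, padding="same")
valid_lengths = lengths_through_blocks(301, 2, padding="valid")
print(same_lengths)   # three blocks fit with "same" padding
print(valid_lengths)  # two blocks fit with "valid" padding
# A third "valid" block raises ValueError: the remaining length is
# shorter than the 19-wide kernel.
```

With three "same"-padded blocks the Flatten layer receives 4 positions × 128 filters = 512 features; with "valid" padding the same input supports only two blocks, so either the padding mode, the window length, or the block count has to be adjusted.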

Visualizing the CNN-Based CLIP-seq Analysis Workflow

Diagram 1: End-to-End CLIP-seq CNN Analysis Pipeline

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for CLIP-seq & Subsequent CNN Validation

| Reagent / Material | Function in CLIP-seq/Validation | Example Product / Kit |

|---|---|---|

| RNase Inhibitor | Prevents RNA degradation during cell lysis and IP. Critical for preserving RNA-protein complexes. | Murine RNase Inhibitor (NEB) |

| Proteinase K | Digests protein after cross-linking, crucial for RNA fragment recovery prior to library prep. | Proteinase K, recombinant (PCR grade) |

| Biotinylated Nucleotide | Enables efficient ligation of adapters to RNA 3' ends during library construction. | Cytidine Bisphosphate (pCp), Biotinylated |

| Streptavidin Magnetic Beads | High-affinity capture of biotinylated RNA-adapter complexes for stringent purification. | Dynabeads MyOne Streptavidin C1 |

| High-Fidelity Reverse Transcriptase | Generates cDNA from crosslinked, fragmented RNA with high accuracy and processivity. | SuperScript IV Reverse Transcriptase |

| Phusion High-Fidelity DNA Polymerase | Amplifies cDNA library with minimal bias for high-quality sequencing libraries. | Phusion High-Fidelity PCR Master Mix |

| Validated Antibody for Target RBP | Specific immunoprecipitation of the RNA-protein complex of interest. | Verified antibodies (e.g., from Cell Signaling, Abcam) |

| UV Crosslinker | Induces covalent bonds between RNA and closely interacting proteins (254 nm). | Spectrolinker XL-1000 UV Crosslinker |

| In-cell Crosslinker (Optional) | For in vivo CLIP variants (e.g., PAR-CLIP), uses photoactivatable nucleosides. | 4-Thiouridine |

| SDS-PAGE & Transfer System | For size selection of protein-RNA complexes prior to excision and RNA extraction. | Mini-PROTEAN Tetra Vertical Electrophoresis Cell |

This whitepaper addresses the foundational preprocessing challenges that directly impact the training of Convolutional Neural Networks (CNNs) for RNA-binding protein (RBP) site prediction from CLIP-seq data. A core thesis in this field posits that systematic noise reduction and artifact correction in raw sequencing data are prerequisites for building robust, generalizable models. Failure to address these challenges propagates biases into trained networks, limiting their predictive power in downstream drug discovery pipelines aimed at modulating RBP function.

Quantifying the Noise Landscape in Raw CLIP Data

The signal in CLIP experiments is obfuscated by multiple, quantifiable noise layers.

Table 1: Primary Noise Sources and Their Typical Magnitude in Raw CLIP Data

| Noise/Artifact Category | Source | Typical Impact on Read Population | Effect on CNN Training |

|---|---|---|---|

| PCR Duplicates | Library Amplification | 10-50% of mapped reads | Inflates apparent coverage, introduces sequence-based bias. |

| Adapter Background | Incomplete adapter trimming | 5-25% of raw reads (varies by protocol) | Creates false genomic alignments, adds spurious signals. |

| Non-Specific RNA Binding | Experimental conditions | Highly variable; can be >50% in some RBPs | Teaches CNN to recognize non-functional binding motifs. |

| UV-Induced RNA Damage | 254nm crosslinking | Causes truncations and mutations at crosslink sites | Can obscure true crosslink nucleotide, alters input sequence. |

| Sequence-Dependent Bias | RNA fragmentation, reverse transcription | Systematic skew in nucleotide representation | CNN learns experimental artifacts, not biological specificity. |

| Genomic DNA Contamination | Carryover from RNA isolation | Usually <5% but can be higher | Creates reads mapping to intronic/non-transcribed regions. |

Detailed Methodologies for Critical Preprocessing Experiments

Protocol for Duplicate Removal Benchmarking

Objective: To evaluate the efficacy of different duplicate removal tools (e.g., umi_tools, picard MarkDuplicates, CLIP Tool Kit (CTK)) in recovering true biological signal.

- Data Simulation: Use software like `ART` or `Polyester` to generate in silico CLIP reads from a set of known RBP binding sites. Introduce controlled rates of PCR duplication (20%, 40%, 60%).

- Tool Application: Process the simulated dataset through each duplicate removal tool with default and optimized parameters for CLIP data (e.g., considering UMIs if simulated).

- Metric Calculation: For each tool, calculate:

- Precision: (True Positives after dedup) / (All reads retained after dedup).

- Recall: (True Positives after dedup) / (All true biological reads in simulation).

- F1-score: Harmonic mean of precision and recall.

- Validation: Apply top-performing tools to an experimental eCLIP dataset (e.g., from ENCODE) and assess the reproducibility of peaks between technical replicates using metrics like IDR (Irreproducible Discovery Rate).
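The three metrics defined above can be computed directly from read identifiers when the simulation records which reads are true biological molecules. This is a minimal sketch; the identifiers and the function name `dedup_metrics` are hypothetical.

```python
def dedup_metrics(retained_ids, true_ids):
    """Return (precision, recall, F1) for one deduplication run.

    retained_ids: reads kept by the tool after deduplication.
    true_ids: reads that represent true biological molecules in the simulation.
    """
    retained, truth = set(retained_ids), set(true_ids)
    true_positives = len(retained & truth)
    precision = true_positives / len(retained) if retained else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Tool kept three true molecules and one duplicate; two true molecules were lost.
precision, recall, f1 = dedup_metrics(
    retained_ids=["m1", "m2", "m3", "dup1"],
    true_ids=["m1", "m2", "m3", "m4", "m5"],
)
print(round(precision, 3), round(recall, 3), round(f1, 3))
```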

Protocol for Adapter Contamination and Trimming Assessment

Objective: To quantify adapter residue and optimize trimming parameters.

- Adapter Content Profiling: Use `FastQC` on raw FASTQ files to determine the per-base frequency of adapter sequences (e.g., Illumina TruSeq).

- Systematic Trimming: Process reads with `cutadapt` using three parameter sets of differing stringency:

- Set A: Allow 1 mismatch, overlap=5 bp.

- Set B: Allow 1 mismatch, overlap=3 bp.

- Set C: Allow 0 mismatches, overlap=5 bp.

- Post-Trim Analysis: Align all output sets to the reference genome using `STAR`. Calculate:

- Alignment rate (%).

- Reads mapping to non-canonical chromosomes (proxy for spurious alignment).

- Mean read length after trimming.

- Optimal Parameter Selection: Select the parameter set that maximizes alignment rate while minimizing reads mapping to non-canonical chromosomes and retaining sufficient read length for peak calling.
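To see why the overlap and mismatch settings matter, the effect of the two parameters can be reproduced with a simplified trimmer. This sketch is not cutadapt's algorithm (it ignores indels and quality scores); the adapter and read sequences are made up for illustration.

```python
def trim_3prime_adapter(read, adapter, min_overlap=5, max_mismatches=1):
    """Remove a 3' adapter, or an adapter prefix at the read end, from `read`.

    Scans left to right and cuts at the first position where the rest of the
    read matches the start of the adapter with at most `max_mismatches`
    mismatches over at least `min_overlap` bases. Indels are not modeled.
    """
    for start in range(len(read) - min_overlap + 1):
        overlap = min(len(adapter), len(read) - start)
        mismatches = sum(
            1 for a, b in zip(read[start:start + overlap], adapter) if a != b
        )
        if mismatches <= max_mismatches:
            return read[:start]
    return read


adapter = "AGATCGGAAGAGC"
with_adapter = "ACGTTGCA" + "AGATCGG"  # insert followed by 7 adapter bases
no_adapter = "ACGTTGCAAGA"             # genuine read that happens to end in AGA

print(trim_3prime_adapter(with_adapter, adapter))               # adapter removed
print(trim_3prime_adapter(no_adapter, adapter))                 # left intact at overlap=5
print(trim_3prime_adapter(no_adapter, adapter, min_overlap=3))  # over-trimmed at overlap=3
```

The last call shows the trade-off behind Set B: a 3 bp overlap removes more partial adapters but also clips genuine bases that match the adapter start by chance, which matters for the short inserts typical of CLIP libraries.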

Protocol for Background Signal Isolation via Size-Matched Input Controls

Objective: To empirically define background noise using control experiments.

- Control Library Preparation: Perform the entire CLIP protocol (including UV crosslinking) on a cell line lacking the RBP of interest (knockout) or without the immunoprecipitation antibody (mock-IP). This captures background from non-specific RNA interactions, genomic DNA, and general RNA fragmentation.

- Sequencing & Processing: Sequence the control library to a depth equal to or greater than the experimental IP. Process identically (trimming, alignment).

- Background Modeling: Use peak callers like `CLIPper` or `PureCLIP` that explicitly incorporate the control sample to statistically distinguish true peaks from background. The model learns a noise distribution from the control.

- CNN Training Application: Instead of using raw read counts, train the CNN on log-odds ratios or normalized signals (e.g., IP count / (Control count + pseudocount)) at each genomic position.
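The normalized signal in the last step can be computed per position as a log2 ratio. The sketch below departs slightly from the formula in the text: the pseudocount is added to the IP count as well as the control count, so that positions with zero IP coverage stay finite. That choice, the pseudocount value, and the omission of library-size scaling are assumptions.

```python
import math


def log2_enrichment(ip_counts, control_counts, pseudocount=1.0):
    """Return per-position log2((IP + p) / (control + p)).

    Counts are assumed to be already scaled for sequencing depth.
    """
    if len(ip_counts) != len(control_counts):
        raise ValueError("IP and control tracks must have equal length")
    return [
        math.log2((ip + pseudocount) / (ctrl + pseudocount))
        for ip, ctrl in zip(ip_counts, control_counts)
    ]


ip = [0, 3, 15]
control = [0, 1, 1]
signal = log2_enrichment(ip, control)
print(signal)
```

Positions without coverage in either sample score zero, so the network sees enrichment rather than raw depth.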

Visualization of Workflows and Relationships

Title: CLIP-seq Data Preprocessing Workflow for CNN Training

Title: Noise Sources, CNN Impacts, and Preprocessing Solutions

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for Robust CLIP-seq Preprocessing

| Item | Category | Function in Addressing Noise/Artifacts |

|---|---|---|

| UMI (Unique Molecular Identifier) Adapters | Wet-Lab Reagent | Enzymatically ligated to RNA fragments pre-amplification. Enables precise computational removal of PCR duplicates by tagging each original molecule. |

| RNase Inhibitors (e.g., RNasin, SUPERase•In) | Wet-Lab Reagent | Minimizes RNA degradation during IP and library prep, reducing artifactual fragments that contribute to background. |

| Size-Matched Input Control Library | Experimental Control | The single most critical control for defining non-specific background binding and RNA fragmentation patterns. |

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Wet-Lab Reagent | Reduces PCR errors and minimizes bias during library amplification, leading to more uniform representation. |

| cutadapt | Software Tool | Precisely removes adapter sequences from read termini, preventing misalignment and false signal generation. |

| umi_tools | Software Tool | Moves UMIs from the read sequence into the read name (`extract`) and performs network-based deduplication (`dedup`), collapsing reads originating from the same RNA fragment. |

| STAR Aligner | Software Tool | Performs splice-aware alignment. Can be parameterized to allow for mismatches/soft-clipping at crosslink sites (UV damage). |

| PureCLIP | Software Tool | Peak caller that uses a hidden Markov model over read enrichment and read-start (truncation) counts to call crosslink sites, and can incorporate input controls to correct for background. |

| BEDTools | Software Toolkit | Suite for genomic arithmetic. Used to compare peak sets, calculate coverage, and filter artifacts (e.g., removing peaks in genomic blacklist regions). |

| DeepTools | Software Toolkit | Generates normalized coverage bigWig files and quality metrics, essential for visualizing and preparing signal tracks for CNN input. |

This whitepaper delineates the essential file formats—FASTQ, BAM, BED, and BigWig—within the context of preprocessing CLIP-seq data for training Convolutional Neural Networks (CNNs) in RNA-binding protein (RBP) research. A precise understanding of these formats is critical for transforming raw sequencing data into structured inputs suitable for deep learning models, thereby accelerating drug discovery targeting RNA-protein interactions.

CLIP-seq (Crosslinking and Immunoprecipitation followed by sequencing) is a pivotal technique for mapping RBP binding sites genome-wide. The preprocessing pipeline involves a series of format transformations, each encapsulating specific data facets. This guide details these formats' structures, their roles in the CLIP-seq-to-CNN pipeline, and their quantitative benchmarks.

The File Format Ecosystem: Structures and Roles

FASTQ: Raw Sequencing Output

The primary output from high-throughput sequencers, containing both sequence and quality information.

Structure per Record:

- @ReadID: Instrument and run identifiers.

- Nucleotide Sequence: The called bases (A, C, G, T, N).

- +: Separator line (required); it may optionally repeat the ReadID.

- Quality Scores: Per-base Phred-scaled quality encoded in ASCII (e.g., !"#$%...).
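The four-line record structure can be parsed with a short generator. This is a minimal sketch that assumes unwrapped records (exactly four lines each) and Phred+33 quality encoding; production pipelines would normally use an established parser.

```python
import io


def read_fastq(handle):
    """Yield (read_id, sequence, phred_scores) from a 4-line-per-record FASTQ."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return  # end of file
        seq = handle.readline().rstrip("\n")
        handle.readline()  # '+' separator line, content ignored
        qual = handle.readline().rstrip("\n")
        if not header.startswith("@") or len(seq) != len(qual):
            raise ValueError(f"malformed FASTQ record: {header!r}")
        # Phred+33: ASCII code minus 33 gives the quality score.
        yield header[1:], seq, [ord(c) - 33 for c in qual]


example = io.StringIO("@read1\nACGT\n+\nII5!\n")
records = list(read_fastq(example))
print(records)
```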

Role in CLIP-seq/CNN Pipeline: The starting point. Preprocessing involves adapter trimming, quality filtering, and demultiplexing to yield clean reads for alignment.

BAM: Aligned Sequence Data

The binary, compressed version of a SAM (Sequence Alignment/Map) file, storing alignment positions of reads relative to a reference genome.

Core Fields (Per Alignment):

- QNAME: Read name.

- FLAG: Bitwise flag indicating alignment properties (paired, mapped, strand, etc.).

- RNAME: Reference sequence name.

- POS: 1-based leftmost mapping position.

- CIGAR: String describing alignment matches, insertions, deletions, and clipping.

- SEQ: Read sequence.

- QUAL: Read base quality scores.

- Optional tags (e.g., `NM`: edit distance; `XS`: strand for splicing).

Role in CLIP-seq/CNN Pipeline: After aligning CLIP-seq reads (e.g., with STAR or Bowtie2), BAM files are used to identify crosslink sites, often via diagnostic mutations or truncations. For CNN input, BAMs are processed into coverage maps.
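For truncation-based protocols, the informative position is the 5' end of each aligned read, which depends on FLAG, POS, and CIGAR together. The sketch below works on a SAM text line and assumes single-end reads sequenced in the sense orientation; paired-end protocols that read the crosslink end from the second mate, and any protocol-specific offset relative to the 5' end, are not handled here.

```python
import re

CIGAR_RE = re.compile(r"(\d+)([MIDNSHP=X])")
REF_CONSUMING = set("MDN=X")  # CIGAR operations that advance along the reference


def five_prime_position(sam_line):
    """Return (chrom, 1-based position, strand) of the read's 5' end, or None if unmapped."""
    fields = sam_line.rstrip("\n").split("\t")
    flag, chrom, pos, cigar = int(fields[1]), fields[2], int(fields[3]), fields[5]
    if flag & 0x4:
        return None  # read is unmapped
    if flag & 0x10:
        # Reverse strand: the 5' end is the rightmost aligned reference base.
        ref_len = sum(
            int(n) for n, op in CIGAR_RE.findall(cigar) if op in REF_CONSUMING
        )
        return chrom, pos + ref_len - 1, "-"
    return chrom, pos, "+"


forward = "r1\t0\tchr1\t100\t255\t30M\t*\t0\t0\t*\t*"
reverse = "r2\t16\tchr1\t200\t255\t10M500N20M\t*\t0\t0\t*\t*"
print(five_prime_position(forward))
print(five_prime_position(reverse))
```

The reverse-strand example spans a 500-nt intron (`N`), which is why the reference length, not the read length, is used to find the rightmost base.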

BED: Genomic Interval Annotations

A simple, tab-delimited text format for defining genomic intervals (0-based start, half-open).

Standard BED (3-12 fields):

- chr, start, end: (Required) Defines the interval.

- name: (Optional) Identifier for the feature.

- score: (Optional) e.g., confidence score (0-1000) or read count.

- strand: (Optional) +, -, or .

- thickStart, thickEnd: For display of coding regions.

- itemRgb: Display color.

- blockCount, blockSizes, blockStarts: For subdivided features like exons.

BED6 (first 6 fields) is common for representing called peaks from CLIP-seq data (e.g., from PEAKachu, CLIPper).

Role in CLIP-seq/CNN Pipeline: BED files define positive training examples (RBP binding sites) for CNN training. They specify the genomic coordinates where binding events occur, which are converted into fixed-length sequence windows.
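Converting variable-length peaks into fixed-length windows might look like the sketch below. It centers each window on the interval midpoint, which is an assumption; where a peak caller reports a summit, that position would be the better center. Coordinates follow the BED convention (0-based, half-open).

```python
def bed_to_window(chrom, start, end, flank=150, chrom_size=None):
    """Return a (chrom, start, end) window of length 2 * flank + 1.

    The window is centered on the interval midpoint. Returns None if the
    window would extend past either end of the chromosome.
    """
    center = (start + end) // 2
    w_start, w_end = center - flank, center + flank + 1
    if w_start < 0 or (chrom_size is not None and w_end > chrom_size):
        return None
    return chrom, w_start, w_end


window = bed_to_window("chr1", 1000, 1050)
print(window, window[2] - window[1])
print(bed_to_window("chr1", 10, 40))  # too close to the chromosome start
```

Dropping windows that run off the chromosome keeps every training example the same length without padding; padding with all-zero rows is an alternative if those peaks must be retained.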

BigWig: Dense, Indexed Coverage Data

A binary, indexed format for efficient storage and visualization of continuous-valued data across the genome (e.g., read coverage profiles).

Key Properties:

- Compressed: Uses wiggle (WIG) data converted to binary.

- Indexed: Allows for rapid range queries without loading entire file.

- Scalable: Suitable for genome-wide coverage tracks from BAM files (created via bamCoverage from deepTools or wigToBigWig).

Role in CLIP-seq/CNN Pipeline: BigWig files can represent the quantitative crosslink signal (read depth) at single-nucleotide resolution. This signal can be used directly as an input channel to a CNN, complementing the one-hot encoded DNA sequence to provide experimental evidence of binding.

Quantitative Format Comparisons & Benchmarks

Table 1: Core Characteristics of Essential Genomics File Formats

| Format | Encoding | Primary Content | Size Efficiency | Random Access | Key Tool for Generation (CLIP-seq) |

|---|---|---|---|---|---|

| FASTQ | Text (ASCII) | Raw reads & quality scores | Low (uncompressed) | No | Illumina sequencer, fastp (trimming) |

| BAM | Binary (compressed) | Aligned reads & mapping info | High (BGZF compressed) | Yes (with index) | STAR, Bowtie2, HISAT2 |

| BED | Text (tab-delimited) | Genomic intervals & annotations | High | With tabix | PEAKachu, CLIPper, MACS2 |

| BigWig | Binary (indexed) | Genome-wide continuous scores | Very High | Yes | bamCoverage (deepTools), wigToBigWig |

Table 2: Typical File Sizes in a CLIP-seq Preprocessing Pipeline (Human Genome)

| Processing Stage | Format | Typical Size Range (per sample) | Notes |

|---|---|---|---|

| Raw Sequencing Output | FASTQ | 10-50 GB | Depends on sequencing depth (e.g., 20-50M reads) |

| Aligned Reads | BAM | 4-15 GB | ~30-50% compression vs. FASTQ. Size depends on alignment rate. |

| Called Binding Peaks | BED | 1-10 MB | Highly variable based on RBP and peak-caller stringency. |

| Genome-wide Signal | BigWig | 100-500 MB | Resolution (e.g., 1-base or binning) significantly impacts size. |

Experimental Protocol: From CLIP-seq to CNN Input

Protocol: Generation of Training Data from eCLIP Datasets

Objective: Process publicly available eCLIP data (e.g., from ENCODE) into sequence windows and corresponding signal tracks for CNN training.

Materials & Input Data:

- eCLIP Data: Paired-end FASTQ files for IP and input control samples from an RBP of interest.

- Reference Genome: FASTA file and corresponding gene annotation (GTF).

- Software: fastp, STAR, samtools, PEAKachu, deepTools, bedtools.

Methodology:

- Quality Control & Trimming:

- Use fastp to remove adapters and low-quality bases from all FASTQ files.

- Generate QC reports to assess read quality pre- and post-trimming.

- Alignment:

- Align trimmed reads to the reference genome using STAR in two-pass mode for splice-aware alignment.

- Convert output SAM to sorted, indexed BAM files using samtools sort and samtools index.

- Peak Calling (Positive Example Generation):

- Run PEAKachu on the IP BAM with the matched input control BAM to call significant binding peaks.

- Output is a BED6 file (peak_sites.bed) with genomic coordinates of high-confidence binding events.

- Signal Track Generation:

- Generate normalized genome coverage tracks using bamCoverage from deepTools.

- Command: bamCoverage -b IP.bam -o signal.bw --normalizeUsing CPM --binSize 1

- This creates a BigWig file of crosslink signal in counts per million (CPM).

- Training Example Extraction:

- Use bedtools slop to extend peaks from peak_sites.bed by a fixed distance (e.g., 50 bp) upstream and downstream to create a windows.bed file.

- Extract DNA sequences for each window from the reference FASTA using bedtools getfasta.

- Extract the corresponding signal values for each window from the signal.bw BigWig file using a custom script (e.g., with pyBigWig).

- Data Matrix Construction:

- Sequence Channel: One-hot encode the extracted DNA sequences (A->[1,0,0,0], C->[0,1,0,0], etc.).

- Signal Channel: Use the extracted BigWig signal values as a second input channel or as a complementary label.

- Assemble into a multi-dimensional array suitable for CNN input (e.g., [N_samples, sequence_length, 4+1 channels]).
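The window-extraction step above can be sketched in plain Python. Extending both ends of a peak by a fixed flank gives windows whose length still depends on the original peak width, so the second helper shows one possible workaround: re-centering on the peak midpoint. That re-centering is an assumption of this sketch, not something the protocol prescribes, and both function names are hypothetical.

```python
def slop(start, end, flank, chrom_size):
    """Extend an interval by `flank` on both sides, clamped to the chromosome.

    Coordinates are 0-based, half-open, as in BED.
    """
    return max(0, start - flank), min(chrom_size, end + flank)


def centered_window(start, end, half_width, chrom_size):
    """Fixed-length window (2 * half_width + 1) around the interval midpoint.

    Returns None when the window would run off the chromosome, so that every
    retained window has the same length.
    """
    mid = (start + end) // 2
    w_start, w_end = mid - half_width, mid + half_width + 1
    if w_start < 0 or w_end > chrom_size:
        return None
    return w_start, w_end


# Hypothetical 20-nt peak on a chromosome of length 1000.
print(slop(100, 120, 50, 1000))             # variable-length extension
print(centered_window(100, 120, 50, 1000))  # fixed 101-nt window
```

Dropping windows that hit a chromosome boundary is the simplest policy; padding with ambiguous bases is an alternative if those sites matter.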

Visualizing the CLIP-seq to CNN Workflow

Title: CLIP-seq Data Preprocessing Pipeline for CNN Input

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools & Resources for CLIP-seq Data Preprocessing

| Item | Function in Pipeline | Example/Provider | Notes |

|---|---|---|---|

| FastQC / MultiQC | Initial quality assessment of FASTQ files. | Babraham Bioinformatics | Identifies adapter contamination, sequence quality drops. |

| fastp / cutadapt | Adapter trimming and quality filtering. | Open Source | Critical for removing CLIP-seq-specific adapters. |

| STAR / Bowtie2 | Spliced or unspliced alignment to reference genome. | Open Source | STAR is preferred for spliced RBPs; Bowtie2 for others. |

| samtools | Manipulation, sorting, indexing, and viewing of BAM files. | Open Source | Ubiquitous toolkit for handling aligned data. |

| PEAKachu / CLIPper | Calling significant binding peaks from CLIP-seq BAMs. | Open Source | Specifically designed for CLIP-seq peak calling. |

| deepTools | Generation of normalized coverage BigWig files and QC plots. | Open Source | bamCoverage is standard for BigWig creation. |

| bedtools | Intersection, windowing, and extraction of genomic intervals. | Open Source | Essential for creating training windows from BED files. |

| pyBigWig / pyBedTools | Python APIs for programmatic access to BigWig and BED files. | Open Source | Enables custom script integration for CNN data prep. |

| Reference Genome & Annotations | Baseline for alignment and annotation. | GENCODE, UCSC | Use consistent versions throughout the pipeline. |

| ENCODE eCLIP Datasets | Publicly available, validated CLIP-seq data for training. | ENCODE Project | Primary source for benchmark datasets. |

The efficient transformation of CLIP-seq data through the FASTQ, BAM, BED, and BigWig formats is a foundational computational step in building robust CNN models for RBP binding prediction. Mastery of these formats' specifications, strengths, and interconversions enables researchers to construct high-quality, biologically relevant training sets. This pipeline is crucial for de novo motif discovery, binding site prediction, and ultimately, the rational design of therapeutics that modulate RNA-protein interactions in disease.

Building Your Pipeline: A Step-by-Step CLIP-seq Preprocessing Workflow for CNN Training

This guide details the critical first step in preprocessing CLIP-seq (Crosslinking and Immunoprecipitation followed by sequencing) data for downstream Convolutional Neural Network (CNN) training. The accuracy of CNN models in predicting RNA-protein binding sites or regulatory motifs is fundamentally dependent on the quality of input data. Rigorous initial QC and precise adapter removal are therefore not merely preparatory steps but foundational to generating reliable, high-confidence training datasets for robust predictive model development in computational biology and drug discovery pipelines.

The Imperative of Initial Quality Assessment with FastQC

FastQC provides a comprehensive diagnostic overview of raw sequencing read quality, identifying issues like pervasive low-quality scores, adapter contamination, or unusual nucleotide compositions that could derail subsequent analysis.

Key FastQC Modules and Interpretations:

- Per Base Sequence Quality: Visualizes Phred quality scores across all bases. Scores below 20 (Q20, i.e., more than a 1% chance of an incorrect base call) indicate potential errors.

- Adapter Content: Quantifies the proportion of adapter sequence present. Any non-zero detection necessitates trimming.

- Per Sequence Quality Scores: Identifies subsets of reads with universally low quality.

- Sequence Duplication Levels: High duplication in CLIP-seq can indicate PCR over-amplification or true biological signal (e.g., abundant RNA targets).

Experimental Protocol for FastQC Analysis:

- Command: fastqc -o [output_dir] -t [number_of_threads] [input_reads.fastq.gz]

- Output: An HTML report file ([input_reads]_fastqc.html) and a zipped data archive ([input_reads]_fastqc.zip).

- Assessment: Manually inspect the HTML report, focusing on modules flagged as "Warning" or "Fail" in the summary. Context is key; some failures (e.g., high duplication) are expected in CLIP-seq.
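When many samples are processed, the manual assessment step can be complemented by a small triage script. The sketch below assumes the tab-separated summary file that FastQC writes inside its output archive (status, module name, file name per line) and treats high duplication as tolerated, in line with the note above. The function name and the example text are illustrative.

```python
# Modules whose WARN/FAIL status is expected in CLIP-seq (an assumption that
# should be adjusted per protocol).
TOLERATED_IN_CLIP = {"Sequence Duplication Levels"}


def triage_fastqc_summary(text, tolerated=TOLERATED_IN_CLIP):
    """Split non-PASS modules into those needing attention and tolerated ones."""
    needs_attention, tolerated_hits = [], []
    for line in text.splitlines():
        if not line.strip():
            continue
        status, module, _filename = line.split("\t")
        if status == "PASS":
            continue
        target = tolerated_hits if module in tolerated else needs_attention
        target.append((module, status))
    return needs_attention, tolerated_hits


# Hypothetical summary content, for illustration only.
example = (
    "PASS\tBasic Statistics\treads.fastq.gz\n"
    "PASS\tPer base sequence quality\treads.fastq.gz\n"
    "FAIL\tSequence Duplication Levels\treads.fastq.gz\n"
    "FAIL\tAdapter Content\treads.fastq.gz\n"
)
attention, expected = triage_fastqc_summary(example)
print("Needs attention:", attention)
print("Expected in CLIP-seq:", expected)
```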

Adapter Trimming and Quality Filtering with Cutadapt

CLIP-seq libraries, especially those from iCLIP or eCLIP protocols, contain complex adapter structures. Cutadapt precisely removes these and performs simultaneous quality-based trimming.

Core Cutadapt Functionalities for CLIP-seq:

- Adapter Trimming: Removes specified 3' and, if necessary, 5' adapter sequences.

- Quality Trimming: Trims low-quality bases from the 3' end.

- Length Filtering: Discards reads that become too short after processing.

- UMI Handling: Can be configured to extract Unique Molecular Identifiers (UMIs) embedded in adapter sequences, a common feature in CLIP-seq protocols to mitigate PCR duplicates.

Detailed Experimental Protocol for Cutadapt:

- Identify Adapter Sequence: Determine the exact adapter sequence used in your library preparation kit (e.g., Illumina TruSeq).

- Basic Trimming Command:

Advanced Command for CLIP-seq (with UMI extraction):

- "ADAPTER_SEQUENCE;required...UMI{5}": Anchored adapter trimming where UMI{5} extracts 5 random bases preceding the adapter as the UMI.

- -u 4 -u -4: Removes 4 fixed nucleotides from the 5' start and 3' end of each read (common in iCLIP).

- --rename='{id}_{cut_prefix}': Appends the extracted UMI sequence to the read identifier.

Post-trimming QC: Always run FastQC on the trimmed output to confirm adapter removal and improved quality scores.
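The UMI handling described above amounts to moving a short random barcode from the read into the read name so that it survives alignment. The following pure-Python sketch illustrates that idea only; it is not Cutadapt's implementation. It assumes the UMI occupies the first 5 bases of the read (the position varies by protocol), and the function name and minimum-length default are hypothetical.

```python
def move_umi_to_header(read_id, seq, qual, umi_len=5, min_len=18):
    """Strip a 5' UMI from a read and append it to the read identifier.

    Returns (new_id, trimmed_seq, trimmed_qual), or None when the remaining
    read is shorter than `min_len` and should be discarded.
    """
    if len(seq) != len(qual):
        raise ValueError("sequence and quality strings differ in length")
    umi, insert = seq[:umi_len], seq[umi_len:]
    if len(insert) < min_len:
        return None
    # The underscore separator keeps the UMI recoverable from the read name.
    return f"{read_id}_{umi}", insert, qual[umi_len:]


# Hypothetical read, for illustration only.
result = move_umi_to_header(
    "read1", "ACGTTGGGCCCAAATTTGGGCCCAA", "IIIIIFFFFFFFFFFFFFFFFFFFF"
)
print(result)
```

Keeping the UMI in the read name is what allows the later deduplication step to distinguish PCR copies from independent molecules.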

Data Presentation: Typical QC Metrics Before and After Processing

Table 1: Representative CLIP-seq Read Statistics Pre- and Post-Processing

| Metric | Raw Reads (FastQC) | Trimmed Reads (FastQC) | Interpretation & Target |

|---|---|---|---|

| Total Sequences | 25,000,000 | 22,500,000 | ~10% loss acceptable, depends on adapter content. |

| % Adapter Content | 15-40% | < 0.1% | Primary goal of Cutadapt step. Must be near zero. |

| % Reads ≥ Q30 | 85% | 92% | Quality trimming improves overall read confidence. |

| Mean Read Length | 75 bp | 42 bp | Significant reduction expected due to adapter/quality trimming. |

| % GC Content | 45% (may vary) | 45% (stable) | Should remain consistent with organism's genomic background. |

| Sequence Duplication Level | High (Expected) | High (Persistent) | Biological duplicates in CLIP are retained; PCR duplicates are addressed later via UMIs. |

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Reagents and Tools for CLIP-seq Preprocessing

| Item | Function/Description | Example/Version |

|---|---|---|

| Raw CLIP-seq FASTQ Files | The primary input data containing sequenced reads and quality scores. | Output from Illumina HiSeq/NovaSeq. |

| FastQC | Visual quality control tool for high-throughput sequence data. | v0.12.1 (Java-based) |

| Cutadapt | Finds and removes adapter sequences, primers, and other unwanted sequence artifacts. | v4.6 (Python-based) |

| Computational Resources | High-performance computing cluster or cloud instance for processing large files. | Linux server with ≥ 16GB RAM, multi-core CPU. |

| Adapter Sequence File | Text file containing the exact nucleotide sequences of adapters used in library prep. | Illumina TruSeq Small RNA 3' Adapter (TGGAATTCTCGGGTGCCAAGG) |

| UMI-aware Demultiplexing Script | Custom script to handle UMI information extracted by Cutadapt for downstream deduplication. | Python or Bash script. |

Workflow and Logical Pathway Visualization

Diagram 1: CLIP-seq Preprocessing Workflow for CNN Training

Diagram 2: Decision Logic for Processing Based on FastQC Output

Within the pipeline for preprocessing CLIP-seq data to train Convolutional Neural Networks (CNNs) for RNA-binding protein (RBP) site prediction, read alignment is the critical step that translates raw sequencing reads into genomic coordinates. The choice of aligner directly impacts the quality of the training dataset by influencing mapping accuracy, splice junction discovery, and the resolution of multi-mapping reads—a common challenge in RBP-RNA interaction data. This guide provides a technical comparison of the two predominant aligners, STAR and HISAT2, for this specific context.

Algorithmic Comparison and Performance Metrics

STAR (Spliced Transcripts Alignment to a Reference) uses a sequential maximum mappable seed search in uncompressed suffix arrays, followed by clustering and stitching for splice junction discovery. HISAT2 employs a hierarchical indexing scheme based on the Burrows-Wheeler Transform and the Ferragina-Manzini index, facilitating efficient mapping across the genome and splice sites.

Recent benchmarks on CLIP-seq-like datasets (e.g., simulated crosslink-centered reads with modifications) highlight key quantitative differences:

Table 1: Performance Comparison of STAR vs. HISAT2 on Simulated CLIP-seq Data

| Metric | STAR | HISAT2 | Notes |

|---|---|---|---|

| Alignment Speed | 50-60 GB/hr | 70-90 GB/hr | HISAT2 is generally faster for equivalent compute resources. |

| Memory Footprint | High (~32 GB for GRCh38) | Moderate (~8 GB for GRCh38) | STAR loads the entire genome index into RAM. |

| Default Alignment Rate | 88-92% | 85-90% | Simulated reads with 3' adapters and 2-5% mismatches. |

| Splice Junction Detection (Recall) | >95% | ~90% | STAR excels in novel junction discovery from RNA-seq data. |

| Multi-mapping Read Handling | Reports up to --outFilterMultimapNmax loci (default 10) | Configurable (-k) | Critical for CLIP-seq; both can be configured to output multiple alignments per read. |

| Base-level Precision at Crosslink Sites | High | Slightly Higher | HISAT2's local alignment can better resolve mutational sites. |

Detailed Experimental Protocols for CLIP-seq Alignment

Protocol A: Alignment with STAR for CLIP-seq

- Index Generation: Generate a genome index with splice junction overhang optimized for your read length (typically --sjdbOverhang = read length - 1).

- Alignment: Execute alignment, enabling modifications crucial for CLIP-seq.

- Output: The key output Aligned.sortedByCoord.out.bam is used for downstream peak calling and training data extraction.
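As an illustration of Protocol A, the sketch below assembles STAR command lines as Python argument lists without running them. The option names are standard STAR parameters, but the specific values (end-to-end alignment, a multimapper cap of 10, 8 threads) and all file names are assumptions chosen for the example and should be tuned to the dataset.

```python
import shlex


def star_index_cmd(genome_dir, fasta, gtf, read_length, threads=8):
    """Build the genome-index command; sjdbOverhang = read length - 1."""
    return [
        "STAR", "--runMode", "genomeGenerate",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", gtf,
        "--sjdbOverhang", str(read_length - 1),
    ]


def star_align_cmd(genome_dir, fastq_gz, prefix, threads=8, max_multimap=10):
    """Build an alignment command that writes a coordinate-sorted BAM."""
    return [
        "STAR", "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", fastq_gz,
        "--readFilesCommand", "zcat",          # input is gzip-compressed
        "--outSAMtype", "BAM", "SortedByCoordinate",
        "--outFilterMultimapNmax", str(max_multimap),
        "--alignEndsType", "EndToEnd",         # assumed choice: no soft clipping
        "--outFileNamePrefix", prefix,
    ]


def expected_bam(prefix):
    """Name of the sorted BAM that STAR writes for a given prefix."""
    return prefix + "Aligned.sortedByCoord.out.bam"


# Hypothetical paths, for illustration only.
index_cmd = star_index_cmd("star_index", "genome.fa", "genes.gtf", read_length=50)
align_cmd = star_align_cmd("star_index", "trimmed.fastq.gz", "sample_")
print(shlex.join(index_cmd))
print(shlex.join(align_cmd))
print(expected_bam("sample_"))
```

The lists can be passed to subprocess.run once the paths point at real files.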

Protocol B: Alignment with HISAT2 for CLIP-seq

- Index Generation: Use pre-built indices or generate with the --ss and --exon options for enhanced splice awareness.

- Alignment: Perform alignment with parameters tuned for CLIP-seq.

- Post-processing: Index the BAM file (samtools index) for downstream analysis.

Visualization of Alignment Workflows in CLIP-seq Pipeline

Title: CLIP-seq Alignment Step: STAR vs. HISAT2 Decision Workflow

Title: Core Algorithmic Steps: STAR vs. HISAT2 for CLIP Reads

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for CLIP-seq Read Alignment

| Tool/Reagent | Function in Alignment Step | Specific Application Note |

|---|---|---|

| STAR (v2.7.11+) | Spliced-aware aligner for rapid, sensitive junction mapping. | Preferred for datasets with complex splicing or for maximizing junctional read recovery. |

| HISAT2 (v2.2.1+) | Memory-efficient aligner with hierarchical indexing for DNA/RNA. | Ideal for high-throughput environments or when local alignment for mutation resolution is prioritized. |

| SAMtools (v1.19+) | Utilities for processing SAM/BAM files (sort, index, view). | Mandatory for post-alignment file manipulation, filtering, and format conversion. |

| GENCODE Annotation | Comprehensive human genome annotation (GTF format). | Used by both aligners for guided splice junction indexing, improving accuracy. |

| UCSC Genome Browser | Visualisation platform for aligned BAM files. | Critical for manual inspection of alignment patterns at candidate RBP binding sites. |

| Picard Tools | Java-based utilities for handling sequencing data. | Used for duplicate marking (if required) and BAM file quality metrics (CollectAlignmentSummaryMetrics). |

Within the broader thesis on preprocessing CLIP-seq data for training Convolutional Neural Networks (CNNs) to predict RNA-protein interactions, Step 3 is critical for data fidelity. Raw CLIP-seq reads contain artifacts from the experimental protocol, notably PCR amplification duplicates and systematic biases from crosslinking and reverse transcription. Failure to address these leads to skewed training data, compromising the CNN's ability to learn genuine biological signals versus experimental noise. This step ensures the input data for feature extraction (Step 4) is a high-fidelity representation of in vivo binding events.

Core Principles and Quantitative Artifact Prevalence

PCR duplicates arise from the amplification of identical DNA fragments prior to sequencing. In CLIP, additional artifacts include mismatches from non-templated nucleotide additions during reverse transcription and truncations at crosslink sites. The table below summarizes the typical prevalence of these artifacts based on recent literature.

Table 1: Common CLIP-seq Artifacts and Their Estimated Prevalence

| Artifact Type | Cause | Typical Prevalence in Raw Reads | Impact on Downstream Analysis |

|---|---|---|---|

| PCR Duplicates | Amplification of identical fragments | 15-50% | Inflates read counts at specific positions, creating false peaks. |

| Non-templated Nucleotide Adds | Reverse transcriptase activity (e.g., +1A, +1C) | 5-20% of reads | Causes misalignment if not modeled, shifting apparent crosslink site. |

| Truncated Reads (read1) | Reverse transcriptase stalling at crosslinked nucleotide | 30-70% of read1 (iCLIP) | Key signal for precise crosslink site identification. |

| Chimeric Reads | Ligation of non-contiguous RNAs | 1-5% | Creates false cis-binding signals. |

Detailed Methodologies for Duplicate Removal

Standard PCR Duplicate Removal (for cDNA-based CLIP)

This protocol is used for methods like HITS-CLIP where the final sequenced fragment is the full cDNA.

- Input: Aligned reads (BAM/SAM file) from Step 2 (Alignment).

- Coordinate Consolidation: For each read, extract the unique set of alignment coordinates: chromosome, start position, end position, and strand.

- Molecular Identifier (UMI) Integration (if available):

- If UMIs were incorporated during library prep (e.g., in iCLIP, enhanced CLIP), extract the UMI sequence from the read header or sequence.

- The unique key becomes: [UMI] + [Chromosome] + [Start] + [End] + [Strand].

- Reads sharing an identical key are considered PCR duplicates originating from the same original RNA molecule.

- Duplicate Identification & Retention:

- Without UMIs: All reads with identical genomic coordinates and strand are considered PCR duplicates. Only one (often the highest quality) is retained.

- With UMIs: Reads sharing coordinates and an identical UMI are collapsed. Reads sharing coordinates but with different UMIs are considered independent molecules and are retained. This is the gold standard.

- Output: A BAM file with duplicate reads removed, preserving only unique molecular events.
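The key-based collapsing described in this protocol can be sketched without any BAM library by treating each alignment as a small record. The record layout and the use of mapping quality to choose the retained read are assumptions of this example; production pipelines would typically rely on a dedicated tool.

```python
from collections import namedtuple

# Minimal stand-in for an aligned read (hypothetical layout).
Read = namedtuple("Read", "name chrom start end strand umi mapq")


def deduplicate(reads, use_umi=True):
    """Keep one read per unique key, preferring the highest mapping quality.

    With UMIs the key is (chrom, start, end, strand, umi); without UMIs it is
    the coordinates and strand alone.
    """
    best = {}
    for read in reads:
        key = (read.chrom, read.start, read.end, read.strand)
        if use_umi:
            key += (read.umi,)
        if key not in best or read.mapq > best[key].mapq:
            best[key] = read
    return list(best.values())


# Hypothetical alignments, for illustration only.
reads = [
    Read("r1", "chr1", 100, 140, "+", "AAAAA", 30),
    Read("r2", "chr1", 100, 140, "+", "AAAAA", 60),  # PCR duplicate of r1
    Read("r3", "chr1", 100, 140, "+", "CCCCC", 60),  # same site, new molecule
    Read("r4", "chr1", 200, 240, "-", "AAAAA", 60),
]
print([r.name for r in deduplicate(reads)])
print([r.name for r in deduplicate(reads, use_umi=False)])
```

The second call shows why coordinate-only deduplication is too aggressive for CLIP data: the independent molecule r3 is lost.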

CLIP-specific Truncation Handling (iCLIP protocol)

iCLIP exploits truncations as a signal. The protocol requires specialized tools (e.g., iCount, PYRMBL) to analyze read1 start sites (cDNA start sites).

- Input Separation: Separate read1 (truncated at crosslink site) and read2 (adapter sequence) into different analysis streams.

- Crosslink Site Definition: For each read1, the nucleotide position immediately upstream of the read's 5' start is defined as the putative crosslink site (XLS).

- Truncation Site Counting: Count all read1 start positions genome-wide. Genuine crosslink sites are supported by an enrichment of independent truncation events (unique UMIs) at a single nucleotide.

- Background Modeling: Use downstream regions or randomized controls to model the expected background distribution of truncation starts.

- Peak Calling: Identify significant clusters of crosslink sites above background, using the truncation count as the primary signal.
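The crosslink-site definition and counting steps are strand-dependent, which is a frequent source of off-by-one errors. The sketch below assumes alignments are given as 0-based, half-open intervals with a strand and a UMI; under that assumption, "immediately upstream of the 5' start" is start - 1 on the plus strand and end on the minus strand. Function names and example reads are hypothetical.

```python
from collections import defaultdict


def crosslink_site(start, end, strand):
    """0-based position one nucleotide upstream of the read's 5' end."""
    if strand == "+":
        return start - 1
    # On the minus strand the 5' end is the highest coordinate (end - 1),
    # so the upstream nucleotide lies at `end`.
    return end


def count_crosslinks(reads):
    """Count independent truncation events (unique UMIs) per site."""
    umis_per_site = defaultdict(set)
    for chrom, start, end, strand, umi in reads:
        site = (chrom, crosslink_site(start, end, strand), strand)
        umis_per_site[site].add(umi)
    return {site: len(umis) for site, umis in umis_per_site.items()}


# Hypothetical read1 alignments, for illustration only.
reads = [
    ("chr1", 100, 140, "+", "AAAAA"),
    ("chr1", 100, 135, "+", "CCCCC"),  # same start, independent molecule
    ("chr1", 100, 140, "+", "AAAAA"),  # PCR duplicate of the first read
    ("chr1", 60, 100, "-", "GGGGG"),
]
print(count_crosslinks(reads))
```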

Experimental Protocol for Artifact Validation

To empirically determine artifact levels in a given dataset, the following in silico experiment can be performed.

Title: In silico Quantification of PCR Duplication Rate in CLIP-seq Data

Methodology:

- Data Partitioning: Start with the aligned BAM file before duplicate removal.

- UMI-Based Grouping: Group reads by their genomic coordinate and UMI.

- Counting:

- Let N = Total number of reads.

- Let M = Number of unique molecular identifiers (unique coordinate-UMI pairs).

- Let D = N - M = Number of putative PCR duplicate reads.

- Calculation:

- Duplication Rate = (D / N) * 100%.

- Complexity = (M / N) * 100%.

- Visualization: Plot a histogram of read counts per unique molecule. A high-skew distribution (many molecules with high read counts) indicates severe duplication.

- Post-Removal Check: Repeat counts after duplicate removal. M should equal the total reads in the output file.
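The counting and calculation steps above reduce to a few lines once each read has been mapped to its coordinate-UMI key. In this sketch the keys are plain strings standing in for such pairs; the function name and the returned field names are illustrative.

```python
from collections import Counter


def duplication_stats(keys):
    """Compute N, M, D, duplication rate and complexity from per-read keys.

    `keys` holds one coordinate-UMI key per aligned read.
    """
    per_molecule = Counter(keys)
    n = sum(per_molecule.values())   # total reads
    m = len(per_molecule)            # unique molecules
    d = n - m                        # putative PCR duplicates
    if n == 0:
        raise ValueError("no reads supplied")
    return {
        "N": n,
        "M": m,
        "D": d,
        "duplication_rate": 100.0 * d / n,
        "complexity": 100.0 * m / n,
        # Histogram: copies per molecule -> number of molecules.
        "histogram": dict(Counter(per_molecule.values())),
    }


# Hypothetical keys, for illustration only.
stats = duplication_stats(["a", "a", "a", "b", "c", "c"])
print(stats)
```

Here six reads collapse to three molecules, giving a duplication rate and a complexity of 50% each.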

Visualizations

Title: CLIP-seq Artifact Removal Workflow for CNN Training

Title: CLIP Reverse Transcription Artifacts & Signals

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for CLIP-seq Artifact Handling

| Item | Function in Duplicate/Artifact Handling | Example/Note |

|---|---|---|

| UMI Adapters | Provides unique molecular barcodes to distinguish PCR duplicates from independent biological fragments. | TruSeq UMIs, Randomer-based ligation adapters (iCLIP2). |

| High-Fidelity Polymerase | Minimizes PCR errors during amplification, but does not prevent duplication of templates. | KAPA HiFi, Q5. |

| RNase Inhibitor | Prevents RNA degradation during library prep, preserving original molecule diversity. | RNasin, SUPERase•In. |

| iCount | Software suite specifically designed to analyze iCLIP data, modeling truncations and calling crosslink sites. | Critical for iCLIP artifact-to-signal conversion. |

| UMI-tools | General software for deduplication based on UMIs and genomic coordinates. | Standard for UMI-aware duplicate removal. |

| Pysam (Python) | API for reading/writing BAM files. Enables custom scripting for complex artifact filtering. | Essential for bespoke pipeline development. |

| SAMtools rmdup | Basic duplicate removal tool. Caution: Use only for non-UMI data; ignores molecular identity. | Legacy tool, limited for modern CLIP. |

In the broader thesis on CLIP-seq data preprocessing for Convolutional Neural Network (CNN) training, peak calling represents the critical transition from raw sequencing data to defined, high-confidence regions of RNA-protein interaction. This step directly influences the quality of the training labels for subsequent CNN models designed to predict binding motifs or regulatory functions. Accurate peak calling eliminates noise and artifacts, ensuring that the CNN learns from biologically relevant signals, which is paramount for applications in drug target discovery and mechanistic studies.

Core Peak Calling Algorithms: A Comparative Analysis

The choice of peak caller is fundamental. The table below contrasts two prominent tools suitable for different CLIP-seq variants.

Table 1: Comparison of PEAKachu and PureCLIP for CLIP-seq Peak Calling

| Feature | PEAKachu | PureCLIP |

|---|---|---|

| Primary Design | Machine learning-based (Random Forests), general for CLIP-seq and PAR-CLIP. | Probabilistic modeling-based, specifically optimized for eCLIP and iCLIP. |

| Core Algorithm | Trains on replicate concordance and genomic features to classify peaks. | Uses a hidden Markov model (HMM) to assign each crosslink site to a background or binding state. |

| Input Requirement | Aligned reads (.bam) and optionally control sample (.bam). | Aligned reads (.bam), requires a control sample for best practices. |

| Key Output | High-confidence peak regions in .bed format. | Precisely defined crosslink sites and broader enriched regions in .bed format. |

| Strengths | Robust to noise, good with technical replicates, user-friendly. | High resolution, models crosslink events explicitly, statistically rigorous. |

| Considerations for CNN Training | Provides broader peaks suitable for region-based classification tasks. | Delivers nucleotide-resolution data ideal for precise motif discovery and sequence-based CNN architectures. |

Detailed Experimental Protocols

Protocol for Peak Calling with PEAKachu

1. Prerequisite Data: Processed, aligned, and deduplicated reads in BAM format from the preceding alignment and duplicate-removal steps (Steps 2 and 3). A control IP or size-matched input BAM is strongly recommended.

2. Installation:

3. Peak Calling Execution:

4. Post-processing: The resulting BED file contains consensus peaks. For CNN training, these regions are commonly extended symmetrically (e.g., ±50 bp) around the summit to create a uniform input window.

Protocol for Peak Calling with PureCLIP

1. Prerequisites: As above, plus the genome sequence in FASTA format corresponding to the reference used for alignment.

2. Installation:

3. Peak Calling Execution:

4. Post-processing: The -o output gives crosslink sites, while -or provides consensus regions. The regions file is typically used as the final peak set for downstream analysis and CNN label generation.

Visualization of Workflows

Title: Comparative Peak Calling Workflows for CNN Training Data

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for CLIP-seq Peak Calling & Validation

| Reagent/Material | Function in Experiment |

|---|---|

| Nuclease-Free Water | All molecular biology steps to prevent RNA degradation and sample contamination. |

| High-Fidelity DNA Polymerase | Required for library amplification post-crosslinking and immunoprecipitation; maintains sequence fidelity. |

| Proteinase K | Crucial for reversing crosslinks after IP to release the bound RNA fragments for sequencing. |

| RNase Inhibitors | Added throughout the protocol post-lysis to preserve the integrity of RNA-protein complexes and extracted RNA. |

| Magnetic Beads (Protein A/G) | For antibody-mediated pull-down of the RNA-binding protein complex of interest. |

| Size Selection Beads (SPRI) | To isolate cDNA fragments of the desired size range (e.g., 70-200 nt) during library preparation, removing adapter dimers. |

| Benchmark Dataset (e.g., from ENCODE) | Validated eCLIP/iCLIP data for a known RBP (like RBFOX2) to benchmark and optimize the peak calling pipeline. |

| Genome Annotation File (GTF) | Essential for annotating called peaks to genomic features (exons, introns, UTRs) during downstream analysis. |

Within the broader thesis on developing a robust preprocessing pipeline for CLIP-seq data to train Convolutional Neural Networks (CNNs) for cis-regulatory element prediction, Step 5 is the critical transformation of biological sequence and binding data into numerical tensors. This stage converts genomic coordinates, nucleotide sequences, and crosslink event counts into structured, machine-readable formats suitable for deep learning. The quality of this transformation directly impacts the CNN's ability to learn predictive patterns of protein-RNA interactions.

Core Tensor Components

One-hot Encoding of Genomic Sequences

Genomic DNA sequences, represented as strings of nucleotides (A, C, G, T), are converted into a binary matrix. This encoding provides a sparse, orthogonal representation that CNNs can efficiently process.

Methodology: For a genomic window of length L, one-hot encoding creates a 4 x L matrix. Each nucleotide is represented by a 4-element vector:

- A → [1, 0, 0, 0]

- C → [0, 1, 0, 0]

- G → [0, 0, 1, 0]

- T → [0, 0, 0, 1]

Ambiguous bases (e.g., N) are typically encoded as [0.25, 0.25, 0.25, 0.25].

Table 1: One-hot Encoding Scheme for Nucleotides

| Nucleotide | Position A | Position C | Position G | Position T |

|---|---|---|---|---|

| Adenine (A) | 1 | 0 | 0 | 0 |

| Cytosine (C) | 0 | 1 | 0 | 0 |

| Guanine (G) | 0 | 0 | 1 | 0 |

| Thymine (T) | 0 | 0 | 0 | 1 |

| Ambiguous (N) | 0.25 | 0.25 | 0.25 | 0.25 |
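The encoding scheme in Table 1 can be written as a short function. This sketch uses plain Python lists so that it has no dependencies; it returns the 4 x L layout described above (rows in A, C, G, T order) and, as an assumption, treats any character other than A, C, G or T as ambiguous.

```python
ONE_HOT = {
    "A": [1.0, 0.0, 0.0, 0.0],
    "C": [0.0, 1.0, 0.0, 0.0],
    "G": [0.0, 0.0, 1.0, 0.0],
    "T": [0.0, 0.0, 0.0, 1.0],
}
AMBIGUOUS = [0.25, 0.25, 0.25, 0.25]


def one_hot(sequence):
    """Encode a DNA string as a 4 x L matrix (rows: A, C, G, T)."""
    columns = [ONE_HOT.get(base, AMBIGUOUS) for base in sequence.upper()]
    # Transpose the L x 4 list of columns into 4 rows of length L.
    return [list(row) for row in zip(*columns)]


encoded = one_hot("acgN")
for base, row in zip("ACGT", encoded):
    print(base, row)
```

Upper-casing the input matters in practice because soft-masked reference genomes mark repeats with lower-case letters.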

Coverage Tracks from CLIP-seq Data

Coverage tracks quantify protein binding intensity across the genomic window, derived from aligned CLIP-seq reads. Multiple tracks can represent different data facets.

Experimental Protocol for Track Generation:

- Input: Aligned read files (BAM format) from CLIP-seq experiment (e.g., eCLIP, PAR-CLIP) and a size-matched input control.

- Crosslink Site Deduction: For single-nucleotide resolution protocols (e.g., iCLIP), the position immediately 5' of the cDNA start is identified as the crosslink site. For others, read 5' ends or peak centers are used.

- Signal Normalization: Normalize counts to Reads Per Million (RPM) or use a more sophisticated method like log₂((IP RPM + 1) / (Control RPM + 1)) to control for background and library size; the pseudocount of 1 avoids division by zero where the control has no coverage.

- Track Creation: For a genomic window, create a 1 x L vector where each genomic coordinate's value is the normalized read count overlapping that position. Separate tracks are generated for:

- IP Signal: The experimental immunoprecipitation signal.

- Control Signal: The matched input control signal.

- Enrichment Track: The log-ratio of IP vs. Control.

Table 2: Common CLIP-seq Coverage Track Types

| Track Name | Data Source | Description | Typical Normalization |

|---|---|---|---|

| IP Coverage | CLIP IP Sample | Raw binding signal intensity. | RPM |

| Control Coverage | Size-matched Input | Background noise and genomic bias. | RPM |

| Enrichment | IP & Control | Specific signal over background. | log₂((IP RPM + pseudocount) / (Control RPM + pseudocount)) |

| Mutation Track (PAR-CLIP) | T→C transitions | Highlights crosslink-induced mutations. | Count at position |
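The RPM and enrichment normalizations can be sketched as follows. The per-position counts and library sizes are made-up numbers, and adding the pseudocount to both numerator and denominator is an assumption of this example (it keeps the ratio defined where the control has no coverage).

```python
import math


def rpm(counts, total_reads):
    """Scale per-position read counts to reads per million."""
    scale = 1e6 / total_reads
    return [c * scale for c in counts]


def log2_enrichment(ip_rpm, control_rpm, pseudocount=1.0):
    """Per-position log2 ratio of IP over control, with a pseudocount."""
    if len(ip_rpm) != len(control_rpm):
        raise ValueError("tracks must have the same length")
    return [
        math.log2((ip + pseudocount) / (ctrl + pseudocount))
        for ip, ctrl in zip(ip_rpm, control_rpm)
    ]


# Hypothetical 3-nt window: raw counts and library sizes are illustrative.
ip_track = rpm([6, 0, 2], total_reads=2_000_000)        # [3.0, 0.0, 1.0]
control_track = rpm([1, 0, 3], total_reads=1_000_000)   # [1.0, 0.0, 3.0]
print(log2_enrichment(ip_track, control_track))
```

Positions with equal IP and control signal, including those with no coverage in either, come out at zero.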

Labeling for Supervised Learning

Labels define the prediction target for the CNN. For CLIP-seq, this is typically a binary or probabilistic classification of whether a genomic window contains a binding site.

Protocol for Binary Label Generation:

- Peak Calling: Use tools like CLIPper or Piranha on the IP vs. control data to identify statistically significant binding peaks.

- Window Annotation: A genomic window (e.g., 500 bp) is assigned a positive label (1) if its center lies within a called peak region. Windows without a peak are assigned a negative label (0). A balanced dataset often requires careful negative selection, such as sampling from regions with control signal but no IP peaks.
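The window-annotation rule can be expressed directly. This sketch assumes windows and peaks are (chrom, start, end) tuples in 0-based, half-open coordinates and scans the peak list linearly, which is adequate for illustration but would be replaced by an interval index for genome-scale data.

```python
def label_window(window, peaks):
    """Return 1 if the window's center falls inside any peak, else 0."""
    chrom, start, end = window
    center = (start + end) // 2
    for peak_chrom, peak_start, peak_end in peaks:
        if peak_chrom == chrom and peak_start <= center < peak_end:
            return 1
    return 0


# Hypothetical peak and windows, for illustration only.
peaks = [("chr1", 1000, 1100)]
windows = [
    ("chr1", 800, 1300),   # center 1050, inside the peak
    ("chr1", 0, 500),      # center 250, no peak
    ("chr2", 800, 1300),   # same coordinates, different chromosome
]
labels = [label_window(w, peaks) for w in windows]
print(labels)
```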

Final Input Tensor Assembly

The final input tensor for a single training example is a multi-channel 2D matrix with dimensions (Channels, Sequence Length).

- Channel 1-4: The one-hot encoded DNA sequence.

- Channel 5: IP coverage track.

- Channel 6: Control coverage track.

- Channel 7: Enrichment track.

The corresponding label is a scalar (0 or 1). A batch of N examples forms a 3D tensor of shape (N, 7, L).

Table 3: Example Tensor Structure for a 500bp Window

| Channel Index | Content | Data Type | Shape per Example |

|---|---|---|---|

| 0 | One-hot A | float32 | 1 x 500 |

| 1 | One-hot C | float32 | 1 x 500 |

| 2 | One-hot G | float32 | 1 x 500 |

| 3 | One-hot T | float32 | 1 x 500 |

| 4 | IP Coverage | float32 | 1 x 500 |

| 5 | Control Coverage | float32 | 1 x 500 |

| 6 | Enrichment | float32 | 1 x 500 |

| – | Label | int8 | 1 |
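Putting the channels together is mostly a matter of checking shapes. The sketch below stacks the seven channels of Table 3 using nested Python lists so that it runs without dependencies; in a real pipeline the result would normally be converted to a NumPy, TensorFlow or PyTorch array. The helper names and the 4-nt toy example are hypothetical.

```python
def assemble_example(seq_one_hot, ip, control, enrichment):
    """Stack 4 sequence rows and 3 coverage tracks into a 7 x L example."""
    channels = [list(row) for row in seq_one_hot]
    channels += [list(ip), list(control), list(enrichment)]
    length = len(channels[0])
    if len(channels) != 7 or any(len(ch) != length for ch in channels):
        raise ValueError("expected 7 channels of equal length")
    return channels


def batch_shape(batch):
    """Shape of a batch as (N, channels, L)."""
    return len(batch), len(batch[0]), len(batch[0][0])


# Toy example with L = 4 (sequence ACGT); all values are illustrative.
seq = [[1.0, 0.0, 0.0, 0.0],
       [0.0, 1.0, 0.0, 0.0],
       [0.0, 0.0, 1.0, 0.0],
       [0.0, 0.0, 0.0, 1.0]]
example = assemble_example(
    seq,
    ip=[0.5, 2.0, 3.5, 1.0],
    control=[0.5, 0.5, 1.0, 1.0],
    enrichment=[0.0, 1.0, 1.2, 0.0],
)
batch = [example, example]
labels = [1, 0]
print(batch_shape(batch), labels)
```

Failing early on mismatched lengths catches windows that were truncated at chromosome ends before they reach the model.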

Visualizing the Tensor Generation Workflow

Title: CLIP-seq Data to CNN Input Tensor Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for CLIP-seq Tensor Generation

| Item | Function in Pipeline | Example/Tool |

|---|---|---|

| High-Throughput Sequencing Data | Raw source of protein-RNA binding events. | Illumina NovaSeq CLIP-seq reads. |

| Reference Genome Assembly | Provides genomic context for alignment and sequence extraction. | GRCh38 (human) or GRCm39 (mouse). |

| CLIP-seq Peak Caller | Identifies significant binding sites for labeling. | CLIPper, PEAKachu, Piranha. |

| Genomic Coordinate Manipulation Tools | Extracts windows, overlaps features, and processes BED files. | BEDTools, pybedtools. |

| Sequence Encoding Library | Performs one-hot encoding and tensor operations. | NumPy, TensorFlow, PyTorch. |

| Normalization Software | Calculates RPM and enrichment scores from BAM files. | deepTools bamCoverage, custom scripts. |

| Visualization Suite | Inspects coverage tracks and tensor alignment. | IGV (Integrative Genomics Viewer), matplotlib. |

Within the context of CLIP-seq (Cross-Linking and Immunoprecipitation followed by sequencing) data preprocessing for Convolutional Neural Network (CNN) training in genomic research, data partitioning is a critical, non-trivial step. Improper splitting can lead to data leakage, over-optimistic performance estimates, and models that fail to generalize to novel biological conditions or drug targets. This guide details rigorous strategies tailored for the high-dimensional, correlated, and biologically structured nature of CLIP-seq datasets, which map protein-RNA interactions essential for understanding gene regulation in disease and therapy.

Core Partitioning Strategies & Quantitative Comparison

The choice of partitioning strategy depends on the experimental design, biological question, and the need for generalizability. Below is a comparative analysis of key methodologies.

Table 1: Quantitative Comparison of Data Partitioning Strategies for CLIP-seq/CNN Pipelines

| Strategy | Typical Split Ratio (Train/Val/Test) | Key Advantage | Key Risk/Pitfall | Ideal Use Case in CLIP-seq Context |

|---|---|---|---|---|

| Simple Random | 70/15/15 or 80/10/10 | Maximizes data usage; simple implementation. | Data Leakage: Highly correlated peaks from same biological replicate or experiment can appear in both train and test sets, inflating performance. | Preliminary proof-of-concept with a single, homogeneous cell line under one condition. |

| Chromosome-Holdout | Varies by genome | Mimics true de novo genome-wide prediction; prevents leakage via sequence similarity. | Chromosomal bias (e.g., gene-dense vs. sparse regions) may skew performance. | Final evaluation of a model intended for discovering binding events on uncharacterized genomic regions. |

| Experiment Holdout | 60/20/20 | Tests generalizability across experimental batches or conditions. | Requires multiple independent CLIP-seq experiments. | Validating robustness to technical variation (e.g., different labs, protocols). |

| Biological Replicate Holdout | ~1 replicate per set | Most rigorous test of biological reproducibility. | Requires multiple replicates (≥3). Often leads to smaller test sets. | Benchmarking model's ability to capture consistent biological signal over noise. |

| Condition-Based Holdout | Defined by study design | Tests generalization to novel biological states (e.g., drug-treated vs. untreated). | Requires carefully designed multi-condition studies. | Drug development: training on vehicle-control data, testing on compound-treated data to predict therapy-induced changes. |

| k-Fold Cross-Validation | (k-1)/1/0 (iterative) | Robust performance estimate with limited data; uses all data for training/validation. | Computationally expensive for CNNs; does not provide a single, fixed test set for final evaluation. | Hyperparameter tuning and model selection during development phases. |

Detailed Methodologies for Key Experimental Protocols

Chromosome-Holdout Partitioning Protocol

This is a gold standard in genomic deep learning: holding out entire chromosomes ensures the model is evaluated on learned sequence features rather than on memorized genomic locations.

- Input Data: A unified set of peak regions (BED format) from CLIP-seq analysis, with corresponding genomic sequences (FASTA) and binding intensity scores.

- Chromosome Categorization:

  - Holdout Chromosomes: Designate one or more entire chromosomes (e.g., chr8, chr9) as the test set. These are completely excluded from training/validation.

  - Validation Chromosomes: Designate a separate, non-overlapping chromosome(s) (e.g., chr7) as the validation set.

  - Training Chromosomes: All remaining chromosomes form the training set.

- Stratification (Critical): Within the training and validation chromosomes, any further random subsampling should be stratified by key biological features (e.g., peak strength quantiles, gene biotype) so that label distributions stay similar across sets.

- Sequence Extraction: Extract ±150bp sequences centered on each peak summit for all sets. Ensure no overlap between regions in different sets.

- Verification: Use tools like BEDTools intersect to confirm zero overlap between the genomic coordinates of the final train, validation, and test sets.
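The protocol above can be sketched in plain Python. The function names (`summit_window`, `split_by_chromosome`, `count_overlaps`) and the toy summits are hypothetical, and the overlap check is a quadratic pairwise comparison meant only to make the rule explicit; on real peak sets BEDTools intersect is the practical choice.

```python
def summit_window(chrom, summit, flank=150):
    """Return a (chrom, start, end) window of +/- flank bp around a peak summit."""
    return (chrom, max(0, summit - flank), summit + flank)

def split_by_chromosome(regions, test_chroms, val_chroms):
    """Assign each region to train/val/test purely by its chromosome."""
    if set(test_chroms) & set(val_chroms):
        raise ValueError("test and validation chromosomes must be disjoint")
    splits = {"train": [], "val": [], "test": []}
    for region in regions:
        chrom = region[0]
        if chrom in test_chroms:
            splits["test"].append(region)
        elif chrom in val_chroms:
            splits["val"].append(region)
        else:
            splits["train"].append(region)
    return splits

def count_overlaps(a, b):
    """Count region pairs (one from a, one from b) that share at least one base."""
    return sum(
        1
        for ca, sa, ea in a
        for cb, sb, eb in b
        if ca == cb and sa < eb and sb < ea
    )

# Toy summits (hypothetical positions).
summits = [("chr1", 1000), ("chr7", 5000), ("chr8", 300), ("chr9", 100)]
regions = [summit_window(c, s) for c, s in summits]
splits = split_by_chromosome(regions, test_chroms={"chr8", "chr9"}, val_chroms={"chr7"})
leaks = (
    count_overlaps(splits["train"], splits["test"])
    + count_overlaps(splits["train"], splits["val"])
    + count_overlaps(splits["val"], splits["test"])
)
```

Because membership is decided by chromosome name alone, `leaks` is zero by construction; the explicit check is still worth running as a guard against mislabeled or liftover-converted coordinates.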

Condition-Based Holdout for Drug Development

This protocol assesses a model's predictive power in a novel therapeutic context.

- Experimental Design: CLIP-seq data for RNA-binding protein (RBP) of interest is generated under two conditions: Condition A (Vehicle/DMSO) and Condition B (Drug/Compound).

- Data Curation: Process raw data from both conditions through a uniform pipeline (alignment, peak calling, quantification).

- Partitioning:

  - Training Set: Data from Condition A (Vehicle), excluding the validation subset.

  - Validation Set: A held-out subset from Condition A, used for early stopping and hyperparameter tuning.

  - Test Set: 100% of data from Condition B (Drug). This tests the model's ability to predict binding alterations induced by the compound.

- Normalization: Apply global normalization (e.g., using spike-ins or housekeeping RNA interactions) to mitigate technical batch effects between the two conditions before partitioning.
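A minimal sketch of the partitioning step, assuming each example is a dictionary with a `condition` field; the field name, the condition labels, and the 20% validation fraction are illustrative assumptions rather than part of the protocol. The sketch keeps the validation subset disjoint from the training rows so that early stopping is not evaluated on data the model has already seen.

```python
import random

def condition_holdout(records, train_condition, test_condition,
                      val_fraction=0.2, seed=0):
    """Split records so that the test set is an entire unseen condition."""
    source = [r for r in records if r["condition"] == train_condition]
    test = [r for r in records if r["condition"] == test_condition]
    # Shuffle a copy of the training-condition rows with a fixed seed
    # so the train/validation split is reproducible.
    rng = random.Random(seed)
    rng.shuffle(source)
    n_val = max(1, int(round(len(source) * val_fraction))) if source else 0
    return {"train": source[n_val:], "val": source[:n_val], "test": test}

# Toy records (hypothetical peak identifiers).
records = (
    [{"peak_id": f"v{i}", "condition": "vehicle"} for i in range(10)]
    + [{"peak_id": f"d{i}", "condition": "drug"} for i in range(4)]
)
splits = condition_holdout(records, "vehicle", "drug")
```

If peaks from the same gene or replicate are strongly correlated, the random train/validation split inside the vehicle condition could be swapped for a chromosome- or group-based split without changing the test set.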

Visualizations

[Figure: Data Partitioning Workflow for CLIP-seq CNN Training]

[Figure: Condition-Based Holdout Strategy for Drug Response Prediction]

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for CLIP-seq Data Partitioning & Validation

| Item / Reagent | Function in Partitioning Context | Key Consideration |

|---|---|---|

| High-Quality, Replicated CLIP-seq Datasets (e.g., from ENCODE, GEO) | Provides the fundamental biological data for splitting. Ensures robustness when using replicate-holdout strategies. | Prioritize datasets with ≥3 biological replicates and consistent metadata. |

| BEDTools Suite | Critical for manipulating genomic intervals. Used to verify zero overlap between splits, merge replicates, and extract sequences. | Essential for implementing clean chromosome- or region-based holdout. |

| PyBigWig / deeptools | Enables extraction of continuous signal profiles (e.g., binding strength) across partitions for model training and label stratification. | Helps maintain signal distribution consistency across splits. |

| scikit-learn | Provides robust implementations for stratified splitting, k-fold cross-validation, and label preprocessing within defined partitions. | Use GroupShuffleSplit to group peaks by biological replicate or experiment ID to prevent leakage. |

| TensorFlow/PyTorch DataLoader with Custom Samplers | Manages efficient, leak-proof batching of large genomic sequence datasets during CNN training based on predefined partition indices. | Custom samplers prevent accidental shuffling of data between splits during training epochs. |

| Spike-in Control Normalized Data | For condition-based holdout, global normalization using exogenous spike-ins (e.g., SIRVs) corrects batch effects, ensuring splits reflect biology, not technical artifacts. | Crucial for translational studies comparing across drug treatments or cell lines. |

Within the broader thesis on CLIP-seq data preprocessing for Convolutional Neural Network (CNN) training, Step 7 addresses the critical challenge of limited and imbalanced genomic datasets. CLIP-seq (Crosslinking and Immunoprecipitation followed by sequencing) experiments are resource-intensive, often yielding sparse data for rare RNA-binding protein (RBP) motifs or conditions. Data augmentation artificially expands the training set by creating modified versions of existing sequences, improving model generalization, reducing overfitting, and enhancing robustness to experimental noise and biological variation. This guide details technical augmentation strategies specifically tailored for genomic sequence data, such as CLIP-seq peaks, within a machine learning pipeline.

Core Augmentation Techniques for Genomic Sequences

Genomic sequence data, represented as one-hot encoded matrices or k-mer frequency vectors, requires domain-specific augmentations that preserve biological plausibility. The following techniques are most applicable.

Nucleotide-Level Perturbations

These techniques introduce changes at the individual base-pair level, simulating natural variation and sequencing errors.

- Random Substitution (Point Mutation): Randomly select a position within the sequence and replace its nucleotide with one of the other three bases (drawn from A, C, G, and T/U). The substitution rate is a key hyperparameter.

- Random Insertion/Deletion (Indel): Insert a random nucleotide at a random position, or delete a nucleotide. These simulate small indel errors common in sequencing.

- Random Swap: Swap the positions of two randomly selected nucleotides within a short window.
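The substitution and indel perturbations can be sketched as follows. The function names and the default rate are illustrative assumptions; note that an indel changes the sequence length by one, so a fixed-length CNN input would need to be re-cropped or padded afterwards.

```python
import random

ALPHABET = "ACGT"

def random_substitution(seq, rate=0.02, rng=None):
    """Mutate each A/C/G/T base independently with probability `rate`."""
    rng = rng or random.Random()
    out = []
    for base in seq:
        if base in ALPHABET and rng.random() < rate:
            # Choose uniformly among the three bases that differ from the original.
            out.append(rng.choice([b for b in ALPHABET if b != base]))
        else:
            out.append(base)
    return "".join(out)

def random_indel(seq, rng=None):
    """Delete one base or insert one random base at a random position."""
    rng = rng or random.Random()
    if seq and rng.random() < 0.5:
        pos = rng.randrange(len(seq))
        return seq[:pos] + seq[pos + 1:]
    pos = rng.randrange(len(seq) + 1)
    return seq[:pos] + rng.choice(ALPHABET) + seq[pos:]

rng = random.Random(0)
original = "ACGT" * 25
mutated = random_substitution(original, rate=0.05, rng=rng)
shifted = random_indel(original, rng=rng)
```

Passing an explicit `random.Random` instance keeps augmentation reproducible across runs, which helps when comparing ablation results.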

Sequence-Level Transformations

These operations manipulate larger segments of the sequence.

- Random Cropping (Subsequence Sampling): Given a longer sequence (e.g., a 101bp window around a CLIP-seq peak), randomly extract a contiguous subsequence of a fixed, shorter length (e.g., 50bp). This forces the CNN to learn features invariant to exact positional context.

- Random Translation (Shifting): For a fixed-length window, randomly shift the start position upstream or downstream within a defined genomic region, then take the fixed-length window from the new start. This augments positional variability.

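- Reverse Complement: Generate the reverse complement of the input sequence. For double-stranded DNA targets this is a biologically valid transformation, because binding motifs can appear on either strand, and it effectively doubles the dataset. CLIP-seq signal, however, is strand-specific because RBPs bind single-stranded RNA, so the reverse complement of a bound site is not necessarily bound; apply this augmentation only when strand-agnostic learning is actually intended.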
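The three sequence-level transformations can be sketched on plain strings as below. The function names and parameters are hypothetical, and the sketch works on a DNA alphabet; whether reverse complementing suits a given strand-specific CLIP-seq dataset is a decision left to the reader.

```python
import random

_COMPLEMENT = str.maketrans("ACGTNacgtn", "TGCANtgcan")

def reverse_complement(seq):
    """Return the reverse complement of a DNA string."""
    return seq.translate(_COMPLEMENT)[::-1]

def random_crop(seq, length, rng=None):
    """Extract a random contiguous subsequence of the given length."""
    rng = rng or random.Random()
    if length > len(seq):
        raise ValueError("crop length exceeds sequence length")
    start = rng.randrange(len(seq) - length + 1)
    return seq[start:start + length]

def random_shift(region_seq, center, length, max_shift, rng=None):
    """Take a fixed-length window whose center is moved by up to max_shift bases."""
    rng = rng or random.Random()
    if length > len(region_seq):
        raise ValueError("window length exceeds region length")
    shift = rng.randint(-max_shift, max_shift)
    start = center + shift - length // 2
    # Clamp so the window never runs off either end of the region.
    start = min(max(start, 0), len(region_seq) - length)
    return region_seq[start:start + length]

rng = random.Random(0)
region = "".join(rng.choice("ACGT") for _ in range(101))  # toy 101 bp window
crop = random_crop(region, 50, rng=rng)
shifted = random_shift(region, center=50, length=51, max_shift=10, rng=rng)
rc = reverse_complement("AACGT")
```

When coverage channels accompany the sequence, the same start offset (and, for reverse complement, the same reversal) has to be applied to every channel so the tracks stay aligned.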

Signal-Level Augmentation for Coverage Vectors

CLIP-seq data often includes a crosslink coverage signal (density) alongside the primary sequence. This signal can also be augmented.

- Gaussian Noise Addition: Add random Gaussian noise to the coverage values, simulating variability in crosslinking efficiency and read sampling.

- Random Scaling: Randomly scale the coverage signal by a small factor (e.g., 0.9 to 1.1), simulating differences in experimental yield.
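Both signal-level augmentations can be combined in one small NumPy function. The name `augment_coverage`, the default noise level, and the clipping of negative values to zero (on the assumption that coverage is non-negative) are illustrative choices, not prescribed settings.

```python
import numpy as np

def augment_coverage(cov, noise_sd=0.05, scale_range=(0.9, 1.1), rng=None):
    """Apply one random global scale factor plus per-position Gaussian noise."""
    rng = rng if rng is not None else np.random.default_rng()
    cov = np.asarray(cov, dtype=np.float32)
    scale = rng.uniform(*scale_range)                   # simulates yield differences
    noise = rng.normal(0.0, noise_sd, size=cov.shape)   # simulates sampling noise
    noisy = cov * scale + noise
    # Assumption: coverage cannot be negative, so clip at zero.
    return np.clip(noisy, 0.0, None).astype(np.float32)

rng = np.random.default_rng(0)
coverage = np.array([0.0, 1.0, 3.5, 2.0, 0.5], dtype=np.float32)
augmented = augment_coverage(coverage, rng=rng)
```

If the coverage track has been log-transformed or z-scored, the clipping step and the noise scale would need to be adapted to that representation.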

Synthetic Sequence Generation

More advanced techniques use generative models to create novel, realistic sequences.

- k-mer Based Resampling: Use a Markov model or a simpler probabilistic model trained on the background genomic k-mer distribution to generate new sequences that maintain local k-mer statistics.

- GAN-based Generation: Employ a Generative Adversarial Network (GAN) trained on positive CLIP-seq peaks to generate synthetic binding sequences. This is computationally intensive but powerful for highly imbalanced classes.
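As a sketch of the simpler of the two approaches, the code below fits a fixed-order Markov chain to a set of sequences and samples new ones from it. The function names, the order of 2, and the uniform fallback for unseen contexts are assumptions made for this example; a GAN is beyond the scope of a short snippet.

```python
import random
from collections import Counter, defaultdict

def fit_markov(sequences, order=2):
    """Count which base follows each k-mer context of length `order` (order >= 1)."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for i in range(len(seq) - order):
            counts[seq[i:i + order]][seq[i + order]] += 1
    return counts

def sample_sequence(counts, length, order=2, rng=None):
    """Generate a sequence by repeatedly sampling the next base given the last k-mer."""
    rng = rng or random.Random()
    if not counts:
        raise ValueError("model has no observed contexts")
    seq = rng.choice(sorted(counts))  # start from a context seen in training
    while len(seq) < length:
        nxt = counts.get(seq[-order:])
        if not nxt:
            # Unseen context: fall back to a uniform draw (an assumption).
            seq += rng.choice("ACGT")
            continue
        bases, weights = zip(*sorted(nxt.items()))
        seq += rng.choices(bases, weights=weights)[0]
    return seq[:length]

# Toy training sequences (hypothetical).
model = fit_markov(["ACGTACGTACGT", "ACGGACGGACGG"], order=2)
synthetic = sample_sequence(model, length=30, order=2, rng=random.Random(1))
```

Sequences generated from a background model preserve local k-mer composition but carry no binding signal, so they are more naturally used as negatives or as flanking context than as additional positives.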

Table 1: Comparison of Genomic Data Augmentation Techniques

| Technique | Biological Justification | Primary Effect on Model | Key Hyperparameter(s) | Risk/Benefit |

|---|---|---|---|---|

| Random Substitution | Point mutations, sequencing errors. | Robustness to single nucleotide variants. | Substitution rate (e.g., 0.01-0.05). | Low risk if rate is kept low. |

| Random Cropping | Motif core is central, flanking sequence varies. | Positional invariance, focus on core motif. | Cropped output length. | High benefit; critical for CNN. |

| Reverse Complement | Double-stranded nature of DNA; less clear for strand-specific RNA binding. | Doubles data; enforces strand-agnostic learning. | None (deterministic). | High benefit for DNA targets; risk of mislabeling strand-specific RBP sites. |

| Gaussian Noise (Signal) | Experimental noise in read counts. | Robustness to coverage fluctuations. | Noise standard deviation. | Moderate benefit for signal-based models. |

| GAN-based Generation | Captures complex motif & context patterns. | Addresses severe class imbalance. | GAN architecture, training stability. | High potential benefit, high complexity. |

Experimental Protocols for Benchmarking Augmentation

To evaluate the efficacy of augmentation strategies within a CLIP-seq/CNN thesis, a controlled benchmarking experiment is essential.

Protocol: Controlled Augmentation Ablation Study