RNA-Seq Data Analysis Demystified: A Step-by-Step Guide for Biomedical Researchers from Raw Reads to Biological Insights

Abstract

This comprehensive guide provides biomedical researchers, scientists, and drug development professionals with a foundational understanding of RNA-seq analysis. It systematically navigates through core principles, from experimental design and raw data preprocessing to differential expression, pathway analysis, and advanced applications like single-cell RNA-seq. The article addresses common pitfalls, troubleshooting strategies, and best practices for method validation and selection, equipping readers with the knowledge to design robust transcriptomic studies and interpret results for hypothesis generation and biomarker discovery in clinical and translational research.

The RNA-Seq Blueprint: From Experimental Design to Transcriptomic Landscapes

Robust RNA-seq data analysis starts with an understanding of what the technology measures and how the experiment was designed. RNA sequencing (RNA-Seq) is a high-throughput technology that uses next-generation sequencing (NGS) to provide a quantitative snapshot of the transcriptome at a given moment. This section outlines its core principles and the experimental considerations that underpin robust, reproducible data generation for researchers, scientists, and drug development professionals.

What RNA-Seq Measures: Core Outputs

At its core, RNA-Seq measures the presence and quantity of RNA molecules in a biological sample. The primary data outputs are digital counts of sequenced cDNA fragments, which are used to infer RNA abundance.

Table 1: Core Quantitative Outputs of a Standard RNA-Seq Experiment

| Output Metric | Description | Typical Units/Form | Key Interpretation |

|---|---|---|---|

| Raw Read Counts | The total number of sequenced reads per sample before any filtering. | Integer (e.g., 30,000,000) | Indicates sequencing depth; crucial for library complexity assessment. |

| Aligned/Mapped Reads | The subset of reads successfully aligned to a reference genome or transcriptome. | Integer & Percentage (e.g., 28.5M, 95%) | Measure of data quality and sample-reference compatibility. |

| Gene/Transcript Expression Level | The abundance estimate for a genomic feature, derived from read alignments. | Counts (raw), FPKM (Fragments Per Kilobase per Million), TPM (Transcripts Per Million), CPM (Counts Per Million) | Raw counts are the input for differential expression. FPKM and TPM normalize for gene length, enabling within-sample comparison of genes of different lengths. |

| Differential Expression | Statistically significant change in expression between experimental conditions. | Log2 Fold Change (log2FC) and Adjusted p-value (FDR) | Identifies up-regulated (log2FC > 0) and down-regulated (log2FC < 0) genes. |

| Alternative Splicing Events | Detection of differentially used exons or splice junctions. | Percent Spliced In (PSI), Junction Read Counts | Reveals isoform-level regulation beyond whole gene expression. |

| Variant Calling | Identification of single nucleotide variants (SNVs) or insertions/deletions (indels) within expressed regions. | Genotype, Allele Frequency | Used in allele-specific expression or transcriptome mutation analysis. |
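The expression units named in Table 1 can be made concrete with a small worked example. The following is a minimal sketch, assuming a single sample with three hypothetical genes (`geneA`, `geneB`, `geneC`) and hypothetical gene lengths; it uses the standard definitions of CPM, FPKM, and TPM rather than any particular tool's implementation.

```python
def cpm(counts):
    """Counts per million: scale each count by library size."""
    total = sum(counts.values())
    return {g: c * 1e6 / total for g, c in counts.items()}


def fpkm(counts, lengths_bp):
    """Fragments per kilobase per million: scale by library size and gene length."""
    total = sum(counts.values())
    return {g: c * 1e9 / (total * lengths_bp[g]) for g, c in counts.items()}


def tpm(counts, lengths_bp):
    """Transcripts per million: length-normalize first, then rescale to sum to 1e6."""
    rate = {g: c / lengths_bp[g] for g, c in counts.items()}
    denom = sum(rate.values())
    return {g: r * 1e6 / denom for g, r in rate.items()}


# Hypothetical toy data: raw counts and gene lengths in base pairs.
counts = {"geneA": 100, "geneB": 300, "geneC": 600}
lengths_bp = {"geneA": 1000, "geneB": 3000, "geneC": 2000}

for name, values in (("CPM", cpm(counts)),
                     ("FPKM", fpkm(counts, lengths_bp)),
                     ("TPM", tpm(counts, lengths_bp))):
    print(name, {g: round(v, 1) for g, v in values.items()})
```

In this toy sample `geneA` and `geneB` have different raw counts but identical TPM, because `geneB` is three times longer. TPM values always sum to one million within a sample, whereas FPKM values do not.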

Key Experimental Considerations

The biological validity of conclusions drawn from RNA-Seq data is directly contingent on rigorous experimental design and execution.

Experimental Design

- Replication: Biological replicates (samples from different biological units) are non-negotiable for statistical power to detect differential expression. Technical replicates (multiple libraries from the same sample) control for library preparation noise but cannot replace biological replicates.

- Randomization: Processing order of samples should be randomized to avoid batch effects.

- Sample Size/Power: Power analysis should be conducted a priori to determine the number of replicates needed to detect a biologically meaningful fold-change with sufficient statistical power.
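The randomization point above can be illustrated with a short script. This is a minimal sketch, assuming hypothetical sample names and a hypothetical batch size of four libraries per preparation run; it performs simple randomization only and does not enforce balance between conditions within each batch, which a blocked design would.

```python
import random


def randomize_processing_order(sample_ids, batch_size, seed=0):
    """Shuffle samples reproducibly and split them into processing batches."""
    rng = random.Random(seed)      # fixed seed so the layout can be recorded and re-created
    order = list(sample_ids)
    rng.shuffle(order)
    return [order[i:i + batch_size] for i in range(0, len(order), batch_size)]


# Hypothetical experiment: four controls and four treated samples.
samples = [f"ctrl_{i}" for i in range(1, 5)] + [f"treated_{i}" for i in range(1, 5)]
batches = randomize_processing_order(samples, batch_size=4, seed=42)

for number, batch in enumerate(batches, start=1):
    print(f"batch {number}: {batch}")
```

Recording the seed alongside the sample sheet keeps the processing order reproducible.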

Detailed Methodological Protocol: A Standard Bulk RNA-Seq Workflow

Protocol: Poly-A Selection Based mRNA Sequencing

Objective: To profile the protein-coding transcriptome from eukaryotic total RNA.

Materials: See The Scientist's Toolkit below.

Procedure:

RNA Extraction & QC:

- Extract total RNA using a guanidinium thiocyanate-phenol-chloroform method (e.g., TRIzol) or spin-column kits. Treat samples with DNase I to remove genomic DNA.

- Assess RNA integrity using an Agilent Bioanalyzer or TapeStation. An RNA Integrity Number (RIN) > 8.0 is generally recommended for high-quality libraries.

mRNA Enrichment:

- Use oligo(dT) magnetic beads to selectively bind the poly-A tails of messenger RNA (mRNA). Wash away ribosomal RNA (rRNA) and other non-polyadenylated RNA.

cDNA Library Construction:

- Fragmentation: Fragment the purified mRNA enzymatically or via divalent cations at elevated temperature (~94°C).

- First-Strand Synthesis: Reverse transcribe fragmented RNA using random hexamer primers and reverse transcriptase to produce cDNA.

- Second-Strand Synthesis: Synthesize the second cDNA strand using DNA Polymerase I and RNase H, creating double-stranded cDNA (ds-cDNA).

- End Repair & A-Tailing: Blunt the ends of the ds-cDNA fragments and add a single 'A' nucleotide to the 3' ends to facilitate ligation to 'T'-overhang adapters.

- Adapter Ligation: Ligate platform-specific sequencing adapters (containing sample barcodes and, in some kits, unique molecular identifiers/UMIs) to the cDNA fragments.

- Library Amplification: Perform limited-cycle PCR to enrich for adapter-ligated fragments and add full sequencing primer binding sites.

Library QC & Sequencing:

- Purify the final library and quantify using qPCR for accurate molarity.

- Assess library size distribution using a Bioanalyzer.

- Pool equimolar amounts of uniquely barcoded libraries.

- Sequence the pooled library on an NGS platform (e.g., Illumina NovaSeq) using a paired-end strategy (e.g., 2x150 bp).
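Equimolar pooling depends on knowing each library's molarity. qPCR kits report this directly; when only a mass concentration and a mean fragment size are available, a common approximation assumes 660 g/mol per base pair of double-stranded DNA. The sketch below applies that approximation to hypothetical libraries (`lib1`, `lib2`) and is not a substitute for the quantification kit's own calculation.

```python
def library_molarity_nM(conc_ng_per_ul, mean_fragment_bp):
    """Approximate molarity (nM) of a dsDNA library, assuming 660 g/mol per bp."""
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def pooling_volumes_ul(molarities_nM, fmol_per_library):
    """Volume of each library contributing the same molar amount (1 nM = 1 fmol/uL)."""
    return {name: fmol_per_library / nM for name, nM in molarities_nM.items()}


# Hypothetical measurements: (concentration in ng/uL, mean fragment size in bp).
libraries = {"lib1": (2.0, 400), "lib2": (3.5, 450)}
molarities = {name: library_molarity_nM(c, bp) for name, (c, bp) in libraries.items()}
volumes = pooling_volumes_ul(molarities, fmol_per_library=10.0)

for name in libraries:
    print(f"{name}: {molarities[name]:.2f} nM, pool {volumes[name]:.2f} uL")
```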

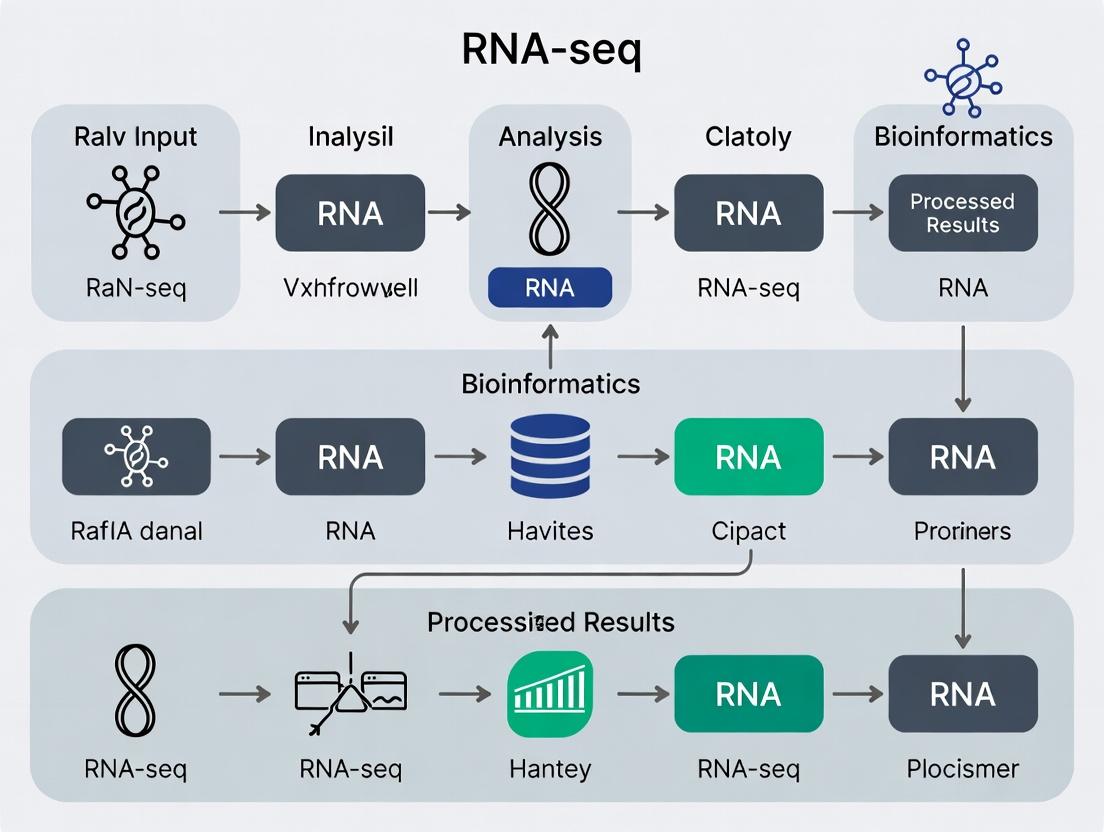

Visualization of Core Workflow and Data Relationships

Figure 1: Bulk RNA-Seq Experimental and Computational Workflow

Figure 2: From Count Data to Biological Interpretation

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for RNA-Seq Library Preparation

| Item | Function & Description | Example Vendor/Kit |

|---|---|---|

| RNA Extraction Kit | Isolates high-integrity total RNA, free of genomic DNA, proteins, and other contaminants. | QIAGEN RNeasy, Thermo Fisher TRIzol, Zymo Research Quick-RNA. |

| RNA Integrity Assessor | Microfluidics-based system for quantitative assessment of RNA degradation (RIN). | Agilent Bioanalyzer/TapeStation, Thermo Fisher Fragment Analyzer. |

| Poly-A Selection Beads | Magnetic beads coated with oligo(dT) to selectively bind and purify polyadenylated mRNA. | NEBNext Poly(A) mRNA Magnetic Isolation Module, Illumina Poly-A Tail Kit. |

| RNA-Seq Library Prep Kit | All-in-one reagent suite for cDNA synthesis, adapter ligation, and library amplification. | Illumina Stranded mRNA Prep, NEBNext Ultra II RNA Library Prep, Takara Bio SMART-Seq. |

| Unique Molecular Identifiers (UMIs) | Short random nucleotide sequences added during reverse transcription to tag individual mRNA molecules, enabling PCR duplicate removal. | Included in kits like Illumina Stranded mRNA UDI or as separate oligos. |

| Size Selection Beads | Magnetic beads (e.g., SPRI) used to select cDNA fragments of a specific size range, controlling library insert size. | Beckman Coulter AMPure XP, homemade SPRI beads. |

| Library Quantification Kit | qPCR-based assay for accurate, specific quantification of amplifiable library fragments for pooling. | Kapa Biosystems Library Quantification Kit, Thermo Fisher Collibri qPCR Kit. |

| High-Throughput Sequencer | Instrument performing massively parallel sequencing of pooled libraries. | Illumina NovaSeq/NextSeq, MGI DNBSEQ-G400, PacBio Sequel IIe (for Iso-Seq). |

This part of the guide details the core computational workflow: the pipeline that transforms raw sequencing data into biological insight and underpins both research and drug development.

The Core RNA-Seq Analysis Workflow

The standard workflow proceeds through distinct, sequential stages.

Key Experimental Protocols & Methodologies

Library Preparation (Wet-Lab)

The process begins with converting RNA to a sequencing-ready library.

- Input: Total RNA or mRNA.

- Fragmentation: RNA is fragmented (chemically or enzymatically) to ~200-500bp.

- cDNA Synthesis: First and second-strand cDNA synthesis are performed using reverse transcriptase and DNA polymerase.

- End Repair & A-tailing: DNA fragments are blunted, and a single 'A' nucleotide is added to 3' ends.

- Adapter Ligation: Platform-specific sequencing adapters are ligated.

- PCR Amplification: The library is amplified with indexed primers for multiplexing.

- QC & Quantification: Library size distribution is checked (e.g., Bioanalyzer), and concentration is quantified (e.g., qPCR).

Primary Data Analysis (Base Calling & Demultiplexing)

This occurs on the sequencer's onboard software.

- Base Calling: Raw signals (images or electrical) are converted to nucleotide sequences (FASTQ files).

- Demultiplexing: Reads are sorted by sample-specific index (barcode) into separate FASTQ files.

- Output: Paired-end (R1, R2) or single-end FASTQ files per sample.

Quantitative Metrics & QC Standards

Critical quantitative thresholds must be met at each stage to ensure data integrity.

Table 1: Key Quality Control Metrics and Benchmarks

| Stage | Metric | Typical Threshold | Purpose & Rationale |

|---|---|---|---|

| Raw Read QC | Total Reads | >20-30M per sample | Ensures statistical power for detection. |

| Raw Read QC | Q30 Score | >70-80% of bases | Indicates high base-call accuracy. |

| Raw Read QC | GC Content | Matches organism norm | Flags contamination or bias. |

| Alignment | Alignment Rate | >70-80% (mRNA-seq) | Measures specificity to reference genome. |

| Alignment | Exonic Rate | >50-60% (total RNA) | Assesses enrichment for intended targets. |

| Gene Level | Detected Genes | >10,000 (human) | Indicates library complexity. |

| Gene Level | % Mitochondrial Reads | <10-20% (cells/tissues) | Flags cellular stress or apoptosis. |
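The benchmarks in the table above lend themselves to an automated check. The following is a minimal sketch that takes the lower bound of each range in the table as the cut-off; the metric key names are hypothetical and would need to be mapped to whatever the QC tools in use actually report.

```python
# Cut-offs use the lenient end of each range in the table; key names are hypothetical.
MINIMUMS = {
    "total_reads": 20_000_000,
    "pct_q30": 70.0,
    "pct_aligned": 70.0,
    "pct_exonic": 50.0,
    "detected_genes": 10_000,
}
MAXIMUMS = {"pct_mitochondrial": 20.0}


def failed_qc_checks(metrics):
    """Return the names of metrics that fall outside the benchmark cut-offs."""
    failures = [key for key, low in MINIMUMS.items() if metrics[key] < low]
    failures += [key for key, high in MAXIMUMS.items() if metrics[key] > high]
    return failures


# Hypothetical per-sample metrics.
good_sample = {"total_reads": 31_500_000, "pct_q30": 92.1, "pct_aligned": 94.0,
               "pct_exonic": 71.3, "detected_genes": 15_800, "pct_mitochondrial": 4.2}
poor_sample = dict(good_sample, pct_aligned=55.0, pct_mitochondrial=35.0)

print("good sample failures:", failed_qc_checks(good_sample))
print("poor sample failures:", failed_qc_checks(poor_sample))
```

The thresholds are typical values rather than hard rules, so a flagged sample is a prompt for inspection, not automatic exclusion.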

Table 2: Common Differential Expression Analysis Tools

| Tool | Algorithm Core | Key Feature | Typical Input |

|---|---|---|---|

| DESeq2 | Negative Binomial GLM with shrinkage | Robust with small numbers of replicates, widely adopted. | Raw count matrix |

| edgeR | Negative Binomial models | Flexible for complex designs, fast. | Raw count matrix |

| limma-voom | Linear modeling with precision weights | Powerful for large sample sizes. | Raw count matrix (voom transforms it to log-CPM with precision weights) |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for RNA-Seq Experiments

| Item | Function in Workflow | Key Considerations |

|---|---|---|

| Poly(A) Selection Beads | Enriches for messenger RNA (mRNA) by binding poly-A tail. | Reduces ribosomal RNA background; not suitable for non-polyadenylated RNA. |

| Ribosomal Depletion Probes | Removes abundant ribosomal RNA (rRNA) from total RNA. | Essential for total RNA-seq, bacterial RNA-seq, or degraded samples (FFPE). |

| RNA Fragmentation Buffer | Chemically breaks RNA into uniform fragments of desired size. | Critical for controlling insert size and achieving even coverage. |

| Reverse Transcriptase | Synthesizes first-strand cDNA from RNA template. | High processivity and fidelity reduce bias and handle complex structures. |

| Strand-Specific Library Prep Kit | Preserves the original orientation of the RNA transcript. | Allows determination of which genomic strand is transcribed. |

| Unique Dual Index (UDI) Adapters | Oligonucleotides containing sample barcodes for multiplexing. | Enables pooling of many samples, reducing cost and batch effects. |

| Size Selection Beads (SPRI) | Magnetic beads that bind DNA by size for clean-up and selection. | Removes adapter dimers and selects the optimal insert size range. |

| High-Fidelity DNA Polymerase | Amplifies the final cDNA library with minimal bias. | Maintains representation and diversity during PCR enrichment. |

Downstream Analysis & Biological Interpretation

Following differential expression, results are interpreted in a biological context.

Protocol for Functional Enrichment Analysis

- Input: A ranked list of genes (by log2 fold change) or a thresholded list of significant DEGs.

- Gene Set Database Selection: Choose relevant databases (e.g., Gene Ontology Biological Process, KEGG, Hallmark gene sets).

- Analysis Method:

  - Over-Representation Analysis (ORA): Tests if genes in a predefined set are overrepresented in your DEG list using a hypergeometric test (e.g., via clusterProfiler).

  - Gene Set Enrichment Analysis (GSEA): Uses all ranked genes to identify enriched sets at the top or bottom without arbitrary significance thresholds.

- Multiple Testing Correction: Apply Benjamini-Hochberg procedure to control False Discovery Rate (FDR). Accept results with FDR < 0.05 or 0.25 (for GSEA).

- Visualization: Generate dot plots, enrichment plots (GSEA), or pathway topology diagrams to interpret results.
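The hypergeometric test behind over-representation analysis can be written out directly. This is a minimal sketch using only the standard library, with hypothetical toy numbers; packages such as clusterProfiler add background handling and multiple-testing correction on top of this core calculation.

```python
from math import comb


def ora_pvalue(universe_size, set_size, deg_count, overlap):
    """One-sided hypergeometric p-value: P(X >= overlap).

    universe_size: number of genes tested (background)
    set_size:      genes in the pathway/gene set that are in the background
    deg_count:     number of differentially expressed genes
    overlap:       DEGs that belong to the gene set
    """
    upper = min(set_size, deg_count)
    total = comb(universe_size, deg_count)
    tail = sum(
        comb(set_size, k) * comb(universe_size - set_size, deg_count - k)
        for k in range(overlap, upper + 1)
    )
    return tail / total


# Hypothetical toy example: 20 background genes, a 5-gene set, 4 DEGs, 2 in the set.
p = ora_pvalue(universe_size=20, set_size=5, deg_count=4, overlap=2)
print(f"ORA p-value: {p:.4f}")
```

The choice of background matters: using only the genes that were actually tested for differential expression, rather than the whole genome, avoids inflating significance.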

A working knowledge of the core file formats is essential for RNA-seq data analysis. These formats are the lingua franca for representing sequencing data, alignments, and genomic annotations, and they form the infrastructure on which all downstream biological interpretation rests. This section decodes these essential formats for researchers, scientists, and drug development professionals.

The Data Lifecycle: From Reads to Interpretation

RNA-seq analysis is a pipeline where each stage is defined by a specific file format.

Diagram Title: RNA-seq Analysis Pipeline with Core File Formats

Decoding the Core Formats

FASTQ: The Raw Sequencing Read

The primary output of next-generation sequencing platforms. Each sequence read is represented by four lines:

- @Read Identifier: Instrument and location data.

- Nucleotide Sequence.

- + (Optionally repeats identifier).

- Quality Scores: Per-base Phred-scaled probability of an incorrect call.

Table 1: FASTQ Line Example & Quality Score Meaning

| Line | Example Content | Purpose |

|---|---|---|

| 1 | @INST:run:lane:tile:x:y#index/1 | Unique read identifier with metadata. |

| 2 | AGTCTAGCATCGATCGATCGATCGATCG | The actual nucleotide sequence. |

| 3 | + | Separator. |

| 4 | BBBFFFFFFFFFFIIIIIIIIIIIIIII | Encoded quality scores (Phred+33). |

| Phred Score | Probability of Incorrect Base Call | Base Call Accuracy |

|---|---|---|

| 10 | 1 in 10 | 90% |

| 20 | 1 in 100 | 99% |

| 30 | 1 in 1000 | 99.9% |

| 40 | 1 in 10,000 | 99.99% |
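The four-line record structure and the Phred+33 encoding described above can be decoded in a few lines. The following is a minimal sketch with a hypothetical four-base read; it assumes Phred+33 encoding and reads that are not wrapped across multiple lines.

```python
def parse_fastq_record(lines):
    """Parse one four-line FASTQ record into (read_id, sequence, phred_scores)."""
    header, seq, separator, qual = (line.rstrip("\n") for line in lines)
    if not header.startswith("@") or not separator.startswith("+"):
        raise ValueError("not a well-formed FASTQ record")
    if len(seq) != len(qual):
        raise ValueError("sequence and quality strings differ in length")
    # Phred+33: the quality score is the ASCII code of the character minus 33.
    return header[1:], seq, [ord(ch) - 33 for ch in qual]


def error_probability(phred):
    """Probability that a base call with this Phred score is wrong."""
    return 10 ** (-phred / 10)


# Hypothetical record: quality characters 'I', '5', '+', '!' encode Q40, Q20, Q10, Q0.
record = ["@read1\n", "ACGT\n", "+\n", "I5+!\n"]
read_id, sequence, scores = parse_fastq_record(record)

for base, q in zip(sequence, scores):
    print(f"{base}: Q{q}, P(error) = {error_probability(q):.4f}")
```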

SAM & BAM: The Alignment Maps

The Sequence Alignment/Map (SAM) format is a human-readable, tab-delimited text format that stores the alignment of reads to a reference genome. The Binary Alignment/Map (BAM) format is its compressed, machine-efficient binary counterpart; once sorted by coordinate, a BAM file can be indexed for rapid random access.

Table 2: Key SAM/BAM Alignment Fields

| Field Number | Column Name | Description | Example/Values |

|---|---|---|---|

| 1 | QNAME | Query (read) name | Read_12345 |

| 2 | FLAG | Bitwise flag indicating alignment properties | 99 (paired, properly paired, mapped, etc.) |

| 3 | RNAME | Reference sequence name | chr1 |

| 4 | POS | 1-based leftmost mapping position | 1000000 |

| 5 | MAPQ | Mapping quality (Phred-scaled) | 60 |

| 6 | CIGAR | String describing alignment match/indel pattern | 50M3I47M |

| 10 | SEQ | Read sequence (as in FASTQ) | AGTCTAGC... |

| 11 | QUAL | Read quality scores (as in FASTQ) | BBBFFFFF... |
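Two of the fields in Table 2, FLAG and CIGAR, are compact encodings that benefit from a worked example. This is a minimal sketch using the bit definitions and operation codes from the SAM specification; the human-readable flag names are this example's own labels.

```python
import re

# Bit values follow the SAM specification; the label strings are illustrative.
FLAG_BITS = {
    0x1: "paired", 0x2: "proper_pair", 0x4: "unmapped", 0x8: "mate_unmapped",
    0x10: "reverse_strand", 0x20: "mate_reverse_strand",
    0x40: "first_in_pair", 0x80: "second_in_pair",
    0x100: "secondary", 0x200: "qc_fail", 0x400: "duplicate", 0x800: "supplementary",
}


def decode_flag(flag):
    """List the properties whose bits are set in a SAM FLAG value."""
    return [name for bit, name in FLAG_BITS.items() if flag & bit]


def cigar_lengths(cigar):
    """Return (read bases consumed, reference bases consumed) for a CIGAR string."""
    ops = re.findall(r"(\d+)([MIDNSHP=X])", cigar)
    query = sum(int(n) for n, op in ops if op in "MIS=X")
    reference = sum(int(n) for n, op in ops if op in "MDN=X")
    return query, reference


print(decode_flag(99))
print(cigar_lengths("50M3I47M"))
```

For the table's examples, flag 99 is 1 + 2 + 32 + 64, and the CIGAR `50M3I47M` describes a 100-base read spanning 97 reference bases, because the three inserted bases consume the read but not the reference.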

Experimental Protocol: Converting SAM to BAM and Indexing

- Alignment: Use an aligner (e.g., STAR, HISAT2) to generate a SAM file. STAR appends `Aligned.out.sam` to the output prefix, so the command below writes `sampleAligned.out.sam`.

STAR --genomeDir /path/to/index --readFilesIn sample.fastq --outFileNamePrefix sample --outSAMtype SAM

- Convert to BAM: Use `samtools view` to compress SAM to BAM.

samtools view -S -b sampleAligned.out.sam > sample.bam

- Sort BAM: Sort by genomic coordinates for downstream analysis.

samtools sort sample.bam -o sample.sorted.bam

- Index BAM: Create a rapid-access index file (`.bai`).

samtools index sample.sorted.bam

GTF & GFF: The Genomic Annotation Guides

Gene Transfer Format (GTF) and General Feature Format (GFF/GFF3) are used to annotate features on DNA sequences (genes, exons, transcripts, etc.). GFF3 is the most recent specification.

Table 3: Comparison of GFF3 and GTF Format Structures

| Aspect | GFF3 (General Feature Format v3) | GTF (Gene Transfer Format) |

|---|---|---|

| Purpose | General-purpose genomic annotation. | Evolved from GFF2; specific to gene annotation. |

| Key Fields | 9 tab-separated: seqid, source, type, start, end, score, strand, phase, attributes. | Same 9 fields as GFF2. |

| Attributes | Flexible, semicolon-separated tag=value pairs. | Semicolon-separated; specific mandated tags (e.g., gene_id, transcript_id). |

| Gene Model | Implicit via hierarchical Parent/ID relationships in attributes. | Explicit via gene_id and transcript_id grouping. |

| Example | chr1 Ensembl exon 1000 1200 . + . ID=exon00001;Parent=transcript01 | chr1 Ensembl exon 1000 1200 . + . gene_id "gene01"; transcript_id "transcript01"; |
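The difference between the two attribute syntaxes in Table 3 becomes clear when both are parsed. The sketch below is a minimal, standard-library parser applied to the table's example records (with tabs restored between the nine columns); it handles only quoted GTF values and does not decode URL-escaped characters in GFF3, both of which a production parser would need to address.

```python
import re


def parse_gtf_attributes(field):
    """Parse GTF attributes of the form: key "value"; key "value";"""
    return dict(re.findall(r'(\S+)\s+"([^"]*)"', field))


def parse_gff3_attributes(field):
    """Parse GFF3 attributes of the form: key=value;key=value"""
    pairs = (item for item in field.strip().strip(";").split(";") if item)
    return dict(pair.split("=", 1) for pair in pairs)


def parse_annotation_line(line, fmt):
    """Split a 9-column annotation line and parse its attribute column."""
    cols = line.rstrip("\n").split("\t")
    if len(cols) != 9:
        raise ValueError("expected 9 tab-separated columns")
    parser = parse_gtf_attributes if fmt == "gtf" else parse_gff3_attributes
    return {"seqid": cols[0], "type": cols[2], "start": int(cols[3]),
            "end": int(cols[4]), "strand": cols[6], "attributes": parser(cols[8])}


gff3_line = "chr1\tEnsembl\texon\t1000\t1200\t.\t+\t.\tID=exon00001;Parent=transcript01"
gtf_line = 'chr1\tEnsembl\texon\t1000\t1200\t.\t+\t.\tgene_id "gene01"; transcript_id "transcript01";'

print(parse_annotation_line(gff3_line, "gff3"))
print(parse_annotation_line(gtf_line, "gtf"))
```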

Diagram Title: Hierarchical Relationship in GFF3/GTF Annotation

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for RNA-seq Data Processing

| Tool / Reagent | Category | Primary Function |

|---|---|---|

| STAR | Aligner Software | Splice-aware alignment of RNA-seq reads to a reference genome. |

| HISAT2 | Aligner Software | Efficient alignment with low memory footprint, supports splicing. |

| SAMtools | Utility Suite | Manipulation, viewing, sorting, and indexing of SAM/BAM files. |

| Picard Tools | Utility Suite | Java-based tools for handling high-throughput sequencing data (BAM metrics, deduplication). |

| featureCounts (Subread) | Quantification Tool | Counts reads mapping to genomic features (e.g., genes) using an annotated GTF file. |

| HTSeq | Quantification Tool | Python framework for processing high-throughput sequencing data, including htseq-count. |

| StringTie | Assembly/Quantification | Assembles transcripts and estimates their abundance from aligned RNA-seq reads. |

| R/Bioconductor | Analysis Environment | Ecosystem for statistical analysis, visualization, and differential expression (e.g., DESeq2, edgeR). |

| Reference Genome FASTA | Data File | The nucleotide sequence of the organism's genome for alignment. |

| Annotation GTF/GFF | Data File | The coordinates of known genes, transcripts, and exons for quantification. |

| Illumina Sequencing Kits | Wet-Lab Reagent | Generate the cDNA libraries for sequencing (e.g., TruSeq Stranded mRNA). |

| RNA Extraction Kits | Wet-Lab Reagent | Isolate high-quality total RNA from tissue/cell samples (e.g., Qiagen RNeasy). |

Integrated Workflow: From BAM to Counts

Experimental Protocol: Generating a Count Matrix using featureCounts

- Input Preparation: You require a sorted BAM file (`sample.sorted.bam`) and a validated annotation file (`annotation.gtf`).

- Run featureCounts: Execute the command to assign reads to gene features.

featureCounts -a annotation.gtf -o gene_counts.txt -p -s 2 sample.sorted.bam

- `-p`: Indicates paired-end reads.

- `-s 2`: Strand specificity (e.g., '2' for reverse-stranded libraries common in stranded RNA-seq).

- Output: The primary output `gene_counts.txt` is a tab-delimited matrix where rows are genes and columns include counts for each sample. This matrix is the direct input for differential expression analysis tools like DESeq2.
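Before the counts reach a differential expression tool, the annotation columns in the featureCounts output usually need to be separated from the count columns. The following is a minimal sketch, assuming the usual layout of a leading `#` comment line followed by the columns Geneid, Chr, Start, End, Strand, Length, and then one count column per BAM file; the example content is hypothetical.

```python
import csv
import io


def read_featurecounts(handle):
    """Return (sample_names, {gene_id: [counts...]}) from featureCounts output."""
    data_lines = (line for line in handle if not line.startswith("#"))
    rows = csv.reader(data_lines, delimiter="\t")
    header = next(rows)
    samples = header[6:]              # columns after Length are per-sample counts
    counts = {row[0]: [int(x) for x in row[6:]] for row in rows if row}
    return samples, counts


# Hypothetical file content standing in for gene_counts.txt.
example = io.StringIO(
    "# Program:featureCounts (hypothetical comment line)\n"
    "Geneid\tChr\tStart\tEnd\tStrand\tLength\tsample.sorted.bam\n"
    "gene01\tchr1\t1000\t1200\t+\t201\t42\n"
    "gene02\tchr1\t5000\t5600\t-\t601\t0\n"
)
samples, counts = read_featurecounts(example)
print(samples)
print(counts)
```

With a real file, `open("gene_counts.txt")` would replace the in-memory example.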

Diagram Title: Read Quantification Logic with BAM and GTF

This section is a technical manual for accessing and leveraging two cornerstone public repositories: the Gene Expression Omnibus (GEO) and the Sequence Read Archive (SRA). For researchers and drug development professionals, these repositories are indispensable sources of high-throughput functional genomics data, enabling secondary analysis, meta-analysis, and hypothesis generation without incurring primary sequencing costs.

The National Center for Biotechnology Information (NCBI) hosts both repositories, but they serve distinct purposes. GEO is a public functional genomics data repository supporting MIAME-compliant data submissions. It stores curated gene expression profiles, non-array-based data like RNA-seq, and epigenetic data. The SRA is the primary archive for high-throughput sequencing raw read data, serving as the substrate for computational analysis.

Table 1: Core Characteristics of GEO and SRA

| Feature | Gene Expression Omnibus (GEO) | Sequence Read Archive (SRA) |

|---|---|---|

| Primary Data Type | Processed data (matrices), sample metadata, curated datasets. | Raw sequencing reads (FASTQ, BAM). |

| Submission Standard | MIAME (Minimum Information About a Microarray Experiment) / MINSEQE. | SRA metadata schemas. |

| Typical Access Point | GEO Datasets / GEO DataSets browser. | SRA Run Selector, direct FTP. |

| Key Identifiers | GSE (Series), GDS (DataSet), GSM (Sample), GPL (Platform). | SRP (Study), SRS (Sample), SRX (Experiment), SRR (Run). |

| Primary Use Case | Retrieving normalized expression matrices for differential expression. | Downloading raw reads for custom alignment and analysis. |

Accessing Data: A Technical Workflow

Querying and Identifying Relevant Studies

Effective navigation begins with precise querying using the NCBI Entrez system. Use field tags like [GSE] or [SRP] and Boolean operators.

Search results must be critically evaluated. For GEO, prioritize series (GSE) with:

- Sufficient sample size and replication.

- Detailed protocols in the `Series` and `Samples` metadata.

- Presence of processed data files (e.g., `*_matrix.txt.gz`).

For SRA, prioritize studies (SRP) with:

- Comprehensive sample attributes.

- Availability of `FASTQ` files for download.

Downloading Data

GEO Download:

- Processed Data: Directly download Series Matrix files via FTP or the `Download family` button on the GEO Series page.

- Raw Data (SRA files): Use the `SRA` accessions (e.g., SRR numbers) linked from the GEO sample (GSM) page.

SRA Download via SRA Toolkit:

The SRA Toolkit (fastq-dump, prefetch, fasterq-dump) is the standard command-line utility.

Table 2: Common SRA Toolkit Commands

| Command | Function | Key Parameters |

|---|---|---|

| `prefetch` | Downloads SRA file to local cache. | -o <output_name.sra> |

| `fasterq-dump` | Faster conversion to FASTQ format. | --split-files (for paired-end), -O <output_dir> |

| `fastq-dump` | Legacy conversion tool. | --gzip, --split-files |

Metadata Acquisition and Curation

Accurate experimental metadata is critical for downstream analysis. Download the SRA Run Selector table for SRA studies or the SOFT formatted family file for GEO. Use this metadata to construct a sample phenotype table essential for differential expression analysis tools like DESeq2 or edgeR.
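Turning a downloaded metadata table into a sample phenotype table is mostly a matter of selecting and renaming columns. The following is a minimal sketch, assuming a comma-separated export with a `Run` column and a hypothetical `treatment` column; real exports vary by study, so the column names and the placeholder accessions below are assumptions to adapt.

```python
import csv
import io


def build_sample_table(handle, run_column, condition_column):
    """Extract run accession and condition from a CSV metadata export."""
    table = []
    for row in csv.DictReader(handle):
        table.append({"sample": row[run_column],
                      "condition": row[condition_column].strip().lower()})
    return table


# Hypothetical metadata export; accessions are placeholders, not real runs.
metadata_csv = io.StringIO(
    "Run,LibraryLayout,treatment\n"
    "SRR0000001,PAIRED,Vehicle\n"
    "SRR0000002,PAIRED,TNF\n"
    "SRR0000003,PAIRED,Vehicle\n"
)
sample_table = build_sample_table(metadata_csv, "Run", "treatment")

for entry in sample_table:
    print(entry["sample"], entry["condition"], sep="\t")
```

Keeping the row order of this table identical to the column order of the count matrix avoids mislabelled samples downstream.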

From Raw Data to Expression Matrix: A Core RNA-seq Protocol

This section details the standard workflow for analyzing RNA-seq data downloaded from SRA.

Experimental Protocol 1: RNA-seq Data Processing Workflow

1. Quality Control (QC) of Raw Reads.

- Tool: FastQC.

- Method: Assess per-base sequence quality, adapter contamination, and GC content.

- Command:

fastqc SRR1234567_1.fastq.gz SRR1234567_2.fastq.gz

- Reagent Solution: Trimmomatic or Cutadapt for adapter trimming and quality filtering.

2. Read Alignment to a Reference Genome.

- Tool: STAR (Spliced Transcripts Alignment to a Reference).

- Method: Generate a genome index once, then align reads.

- Commands:

3. Quantification of Gene/Transcript Abundance.

- Tool: featureCounts (gene-level) or Salmon (transcript-level).

- Method: Assign aligned reads to genomic features.

- Command (featureCounts):

- Output: A count matrix (genes x samples) for differential expression analysis.
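The three steps above can be sketched as command lines assembled in Python, which keeps parameters in one place and makes the exact invocation easy to log. This is a minimal sketch: all file paths and the thread count are hypothetical, the featureCounts flags mirror those used earlier in this guide, and the script only prints the commands rather than running them.

```python
import shlex


def fastqc_cmd(fastqs, outdir):
    """QC of raw reads."""
    return ["fastqc", "--outdir", outdir, *fastqs]


def star_index_cmd(genome_dir, fasta, gtf, threads=8):
    """One-time genome index generation."""
    return ["STAR", "--runMode", "genomeGenerate", "--runThreadN", str(threads),
            "--genomeDir", genome_dir, "--genomeFastaFiles", fasta,
            "--sjdbGTFfile", gtf]


def star_align_cmd(genome_dir, read1, read2, prefix, threads=8):
    """Paired-end alignment of gzipped FASTQ files to a coordinate-sorted BAM."""
    return ["STAR", "--runThreadN", str(threads), "--genomeDir", genome_dir,
            "--readFilesIn", read1, read2, "--readFilesCommand", "zcat",
            "--outSAMtype", "BAM", "SortedByCoordinate",
            "--outFileNamePrefix", prefix]


def featurecounts_cmd(gtf, output, bams):
    """Gene-level counting; -s 2 assumes a reverse-stranded library."""
    return ["featureCounts", "-p", "-s", "2", "-a", gtf, "-o", output, *bams]


# Hypothetical paths for one sample.
r1, r2 = "SRR1234567_1.fastq.gz", "SRR1234567_2.fastq.gz"
commands = [
    fastqc_cmd([r1, r2], "qc"),
    star_index_cmd("star_index", "genome.fa", "annotation.gtf"),
    star_align_cmd("star_index", r1, r2, "SRR1234567_"),
    featurecounts_cmd("annotation.gtf", "gene_counts.txt",
                      ["SRR1234567_Aligned.sortedByCoord.out.bam"]),
]
for cmd in commands:
    print(shlex.join(cmd))
```

Passing each list to `subprocess.run(cmd, check=True)` would execute the step; the strandedness setting in particular should be confirmed against the library kit before counting.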

The Scientist's Toolkit: Essential Research Reagent Solutions for RNA-seq Analysis

| Item / Solution | Function in RNA-seq Workflow |

|---|---|

| SRA Toolkit | Core utility for downloading and converting data from the SRA repository. |

| FastQC | Provides initial quality assessment of raw FASTQ sequence data. |

| Trimmomatic | Removes adapter sequences and low-quality bases from reads. |

| STAR Aligner | Performs accurate, fast spliced alignment of RNA-seq reads to a reference genome. |

| featureCounts | Summarizes aligned reads into a count matrix based on gene annotation. |

| DESeq2 R Package | Statistical framework for differential expression analysis from count matrices. |

| RSeQC | Evaluates RNA-seq data quality post-alignment (e.g., read distribution). |

Title: RNA-seq Data Analysis Core Workflow

Integrating GEO Datasets for Meta-Analysis

Using processed data from GEO requires careful normalization and batch correction. The workflow involves downloading Series Matrix files, loading them into R/Bioconductor, and using packages like limma or sva to harmonize data from different studies before combined analysis.

Title: GEO Data Integration for Meta-Analysis

Best Practices and Ethical Considerations

- Reproducibility: Always record the exact accession IDs (GSE, SRP, SRR) and software versions used.

- Data Provenance: Trace and cite the original study. Adhere to any specific data use agreements.

- Computational Resources: Be aware that SRA data downloads and alignment require significant storage and CPU time; plan for cloud or HPC resources for large studies.

Mastering the navigation of GEO and SRA is a foundational skill in modern RNA-seq research. By following the technical protocols outlined—from targeted querying and efficient data retrieval to executing the core analysis workflow—researchers can robustly leverage vast public data resources to advance scientific discovery and drug development.

In any RNA-seq study, the initial definition of the biological question is paramount. This choice dictates the experimental design, sequencing strategy, computational workflow, and statistical interpretation. Two distinct approaches dominate: hypothesis-driven (confirmatory) and exploratory (discovery-driven) analysis. This section details the principles, protocols, and practical execution of both paradigms.

Paradigm Comparison: Core Principles and Applications

Table 1: Hypothesis-Driven vs. Exploratory RNA-seq Analysis

| Aspect | Hypothesis-Driven Analysis | Exploratory Analysis |

|---|---|---|

| Primary Goal | Confirm or refute a specific, pre-defined biological hypothesis. | Generate novel hypotheses or patterns without strong prior assumptions. |

| Question Form | "Does knockout of gene X alter the expression of pathway Y in condition Z?" | "What are the transcriptomic differences between clinical subtypes of disease A?" |

| Experimental Design | Controlled, often with few conditions (e.g., WT vs. KO). Requires careful power analysis and replication. | Broader, surveying many conditions, time points, or tissues. May have larger sample cohorts. |

| Sequencing Depth | Moderate to high depth per sample to detect specific differential expression. | Can vary; often moderate depth focused on increasing sample number for diversity. |

| Statistical Focus | Rigorous control of Type I error (false positives). Use of adjusted p-values (e.g., FDR). | Dimensionality reduction, clustering, and visualization. Control of false discovery in later stages. |

| Key Tools/Methods | DESeq2, edgeR, limma-voom. Specific contrast testing. | PCA, t-SNE, UMAP, hierarchical clustering. WGCNA, trajectory inference. |

| Outcome | A binary decision on the hypothesis with effect size estimates. | A set of novel patterns, candidate genes, or subtypes for future validation. |

Experimental Protocols

Protocol 1: Hypothesis-Driven RNA-seq Workflow (Testing a Specific Contrast)

- Hypothesis Formulation: State a falsifiable hypothesis. Example: "TNF-α treatment induces a pro-inflammatory gene signature in primary endothelial cells."

- Experimental Design:

- Groups: Vehicle control vs. TNF-α (10 ng/mL, 6-hour treatment). n=5 biologically independent replicates per group.

- Power Analysis: Using pilot data or public data, calculate sample size needed to detect a 2-fold change with 80% power at FDR < 0.1 (e.g., using the `PROPER` R package).

- Library Preparation & Sequencing:

- Extract total RNA (≥ 100 ng) using a silica-membrane column kit. Assess integrity (RIN > 8.5, Bioanalyzer).

- Perform poly-A selection and construct stranded cDNA libraries (e.g., Illumina TruSeq Stranded mRNA).

- Sequence on an Illumina platform to a depth of 30-40 million 150bp paired-end reads per sample.

- Computational Analysis:

- Alignment: Map reads to the reference genome (e.g., GRCh38) using a splice-aware aligner (STAR or HISAT2).

- Quantification: Generate gene-level read counts using featureCounts or HTSeq.

- Differential Expression: Import count matrix into DESeq2. Model: `~ treatment`. Perform Wald test on the "TNFvsVehicle" contrast. Apply independent filtering and FDR (Benjamini-Hochberg) correction.

- Validation: Select top differentially expressed genes (DEGs) for confirmation by RT-qPCR.
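The Benjamini-Hochberg correction named in the differential expression step is simple enough to write out. This is a minimal sketch of the standard step-up procedure applied to hypothetical p-values; DESeq2 performs this internally (together with independent filtering), so the code illustrates the calculation rather than replacing the package.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the same order as the input."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])   # indices by ascending p
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, pvalues[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


# Hypothetical raw p-values for four genes.
raw = [0.01, 0.04, 0.03, 0.005]
adjusted = benjamini_hochberg(raw)

for p, q in zip(raw, adjusted):
    print(f"p = {p:.3f} -> adjusted = {q:.3f}")
```

A gene is reported as significant when its adjusted p-value falls below the chosen FDR threshold, for example 0.1 in the power calculation above.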

Protocol 2: Exploratory RNA-seq Workflow (Atlas or Cohort Study)

- Question Formulation: Define the scope of discovery. Example: "Characterize the transcriptional landscape across 10 primary human tissues."

- Experimental Design:

- Cohort: 3 donors, 10 tissues per donor (total n=30 samples). Minimize batch effects by randomizing library prep.

- Library Preparation & Sequencing:

- Standardized RNA extraction and library prep as in Protocol 1 across all samples.

- Sequence to a moderate depth of 20-25 million reads per sample.

- Computational Analysis:

- Alignment & Quantification: As in Protocol 1.

- Normalization: Use variance-stabilizing transformation (DESeq2) or log-CPM (edgeR) for downstream analyses.

- Exploratory Steps:

- Quality Control: Perform multi-dimensional scaling (MDS) or PCA to identify major sources of variation and outliers.

- Unsupervised Clustering: Apply hierarchical clustering to genes and samples using Pearson correlation distance.

- Dimensionality Reduction: Visualize sample relationships using UMAP.

- Co-expression Analysis: Use WGCNA to identify modules of co-expressed genes correlated with tissue types.
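The Pearson correlation distance used for unsupervised clustering can be computed from normalized expression profiles as one minus the correlation coefficient. The following is a minimal standard-library sketch with hypothetical samples; the `log_cpm` helper uses a simple pseudocount and is not edgeR's exact formula, and profiles with zero variance would need separate handling.

```python
from math import log2, sqrt


def log_cpm(counts, pseudocount=1.0):
    """Simple log2 counts-per-million with a pseudocount (illustrative only)."""
    total = sum(counts)
    return [log2((c + pseudocount) * 1e6 / total) for c in counts]


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def correlation_distance_matrix(profiles):
    """Pairwise distance 1 - r between named profiles."""
    names = list(profiles)
    return {(a, b): 1.0 - pearson(profiles[a], profiles[b])
            for a in names for b in names}


# Hypothetical raw counts for four genes in three samples.
raw_counts = {"liver_1": [900, 50, 30, 20],
              "liver_2": [1800, 110, 55, 35],
              "brain_1": [40, 700, 200, 60]}
profiles = {name: log_cpm(c) for name, c in raw_counts.items()}
distances = correlation_distance_matrix(profiles)

print(f"liver_1 vs liver_2: {distances[('liver_1', 'liver_2')]:.3f}")
print(f"liver_1 vs brain_1: {distances[('liver_1', 'brain_1')]:.3f}")
```

The resulting matrix is what a hierarchical clustering routine would take as input; distances range from 0 (perfectly correlated) to 2 (perfectly anti-correlated).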

Visualization of Workflows and Relationships

Title: Hypothesis-Driven RNA-seq Analysis Workflow

Title: Exploratory RNA-seq Analysis Workflow

Title: Decision Tree for Selecting Analysis Paradigm

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for RNA-seq Studies

| Item | Function | Example Product/Catalog |

|---|---|---|

| RNA Stabilization Reagent | Preserves RNA integrity immediately post-collection, inhibiting RNases. Critical for in vivo or clinical samples. | RNAlater Stabilization Solution, TRIzol Reagent. |

| Total RNA Isolation Kit | Purifies high-quality, DNA-free total RNA from cells/tissues. Silica-membrane columns ensure consistency. | QIAGEN RNeasy Mini Kit, Zymo Research Quick-RNA MiniPrep. |

| RNA Integrity Number (RIN) Assay | Microfluidic capillary electrophoresis to quantitatively assess RNA degradation. Essential QC step. | Agilent RNA 6000 Nano Kit (Bioanalyzer). |

| Poly-A mRNA Selection Beads | Enriches for polyadenylated mRNA, depleting ribosomal RNA. Standard for eukaryotic mRNA-seq. | NEBNext Poly(A) mRNA Magnetic Isolation Module, Dynabeads mRNA DIRECT Purification Kit. |

| Stranded cDNA Library Prep Kit | Converts RNA to a sequencing-ready, strand-preserving cDNA library with dual-index adapters. | Illumina TruSeq Stranded mRNA, NEBNext Ultra II Directional RNA Library Prep Kit. |

| Unique Dual Indexes (UDIs) | Give each sample a unique i7/i5 index pair so that index-hopped reads can be identified and discarded, reducing cross-talk between multiplexed samples. | IDT for Illumina RNA UD Indexes. |

| RT-qPCR Master Mix & Assays | For independent validation of differentially expressed genes identified by RNA-seq. | TaqMan Gene Expression Assays, SYBR Green Master Mix. |

The RNA-Seq Pipeline in Action: A Practical Guide from QC to Pathway Analysis

Within the thesis on Basic principles of RNA-seq data analysis research, Raw Data Quality Control (QC) stands as the critical first analytical step. It is the process of evaluating the quality of the raw sequencing reads generated by platforms such as Illumina, ensuring that any downstream analysis—alignment, quantification, and differential expression—is built upon a reliable foundation. This guide details the methodologies for performing this QC assessment using the industry-standard tools FastQC and MultiQC.

Core Tools and Their Functions

FastQC is a Java-based tool that provides a modular set of analyses on raw sequencing data in FASTQ format. It generates an HTML report with graphical summaries of various quality metrics. MultiQC aggregates results from multiple FastQC runs (and many other bioinformatics tools) into a single, interactive report, enabling comparative analysis across all samples in a project.

Experimental Protocol: From Sequencer to QC Report

The following protocol is essential for initiating any RNA-seq study.

Protocol: Comprehensive Pre-Alignment Quality Assessment

1. Data Acquisition & Preparation:

- Input: Compressed FASTQ files (*.fastq.gz) from the sequencing facility.

- Organization: Create a structured project directory. Place raw data in a ./raw_data/ folder.

- Environment Setup: Ensure a conda environment or module system provides access to FastQC and MultiQC.

2. Running FastQC on Individual Samples:

- Execute FastQC on all files. Using a shell loop for efficiency is standard practice.

- Parameters: --outdir specifies the output directory. --threads sets how many files FastQC processes in parallel.

- Output: For each FASTQ file, FastQC produces an HTML report (sample_fastqc.html) and a ZIP file containing the raw data for the plots.
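
The FastQC call described in this step can be sketched as a Python argument list. This is an illustration rather than the original command: the file names and the qc/fastqc output directory are assumptions, while --outdir and --threads are the FastQC options named above.

```python
import shlex

def fastqc_command(fastq_files, outdir="qc/fastqc", threads=4):
    """Build a FastQC invocation as an argument list (suitable for subprocess.run)."""
    return ["fastqc", "--outdir", outdir, "--threads", str(threads), *fastq_files]

# Hypothetical input files; in practice these could come from
# sorted(pathlib.Path("raw_data").glob("*.fastq.gz")).
fastq_files = [
    "raw_data/sampleA_R1.fastq.gz",
    "raw_data/sampleA_R2.fastq.gz",
]
cmd = fastqc_command(fastq_files)
print(shlex.join(cmd))
# To execute: create the output directory first (FastQC expects it to exist),
# then call subprocess.run(cmd, check=True).
```

Passing all files in one call lets FastQC spread them across its worker threads, so an explicit loop is optional.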

3. Aggregating Results with MultiQC:

- Run MultiQC in the directory containing the FastQC output.

- Parameters: -o defines the output directory. --filename sets the name of the final report.

- Output: A single HTML report (project_multiqc_report.html) summarizing all samples.
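
A matching MultiQC call might look like the following sketch. The directory names are assumptions, and -o and --filename are the options described in this step.

```python
import shlex

def multiqc_command(input_dir="qc/fastqc", outdir="qc/multiqc",
                    filename="project_multiqc_report.html"):
    """Build a MultiQC invocation that scans input_dir for FastQC results."""
    return ["multiqc", input_dir, "-o", outdir, "--filename", filename]

multiqc_cmd = multiqc_command()
print(shlex.join(multiqc_cmd))
# To execute: subprocess.run(multiqc_cmd, check=True)
```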

4. Interpretative Analysis:

- Systematically review the MultiQC report, focusing on the key modules summarized in Table 1 below.
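
For larger projects, the review can be pre-screened programmatically. Each FastQC ZIP archive contains a summary.txt file with one tab-separated line per module (status, module name, file name). The sketch below lists the modules that raised a warning or failure. The example content is invented, and reading the file out of the ZIP archive is left out.

```python
def flagged_modules(summary_text, levels=("WARN", "FAIL")):
    """Return (file name, module, status) for every module whose status is in levels."""
    flagged = []
    for line in summary_text.strip().splitlines():
        status, module, filename = line.split("\t")
        if status in levels:
            flagged.append((filename, module, status))
    return flagged

# Hypothetical summary.txt content for one sample.
rows = [
    ("PASS", "Basic Statistics", "sampleA_R1.fastq.gz"),
    ("PASS", "Per base sequence quality", "sampleA_R1.fastq.gz"),
    ("WARN", "Per base sequence content", "sampleA_R1.fastq.gz"),
    ("FAIL", "Adapter Content", "sampleA_R1.fastq.gz"),
]
summary_text = "\n".join("\t".join(row) for row in rows)

flags = flagged_modules(summary_text)
for filename, module, status in flags:
    print(f"{filename}: {module} -> {status}")
```

A warning is a prompt to look at the plot, not an automatic rejection. For example, RNA-seq libraries routinely trigger the per base sequence content warning at the first few positions.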

Key QC Metrics: Interpretation and Quantitative Thresholds

The table below summarizes the primary metrics assessed by FastQC, their ideal results, and potential causes for flags.

Table 1: Core FastQC Module Interpretation Guide for RNA-seq Data

| Metric Module | Ideal Profile for RNA-seq | Warning/Flag Cause | Potential Biological/Technical Issue |

|---|---|---|---|

| Per Base Sequence Quality | Quality scores (Phred) > 28 across all bases. | Qualities dropping below 20, especially at read ends. | Degraded RNA, adapter contamination, or sequencer cycle errors. |

| Per Sequence Quality Scores | Tight distribution with a high median (e.g., >30). | Broad distribution or low median. | Sample-specific issues or mixed-quality runs. |

| Per Base Sequence Content | Relative stability of A/T and G/C proportions after the first ~10 bases. | Large deviations from equality after position ~10-12. | Overrepresented sequences, adapter contamination, or biased fragmentation. |

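| Adapter Content | No adapters detected, or very low (<0.1%). | Adapter sequence found in a rising fraction of reads toward the 3' end (FastQC warns above 5% of reads). | Inserts shorter than the read length, so reads run through into the adapter. Requires trimming before alignment. |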

| Overrepresented Sequences | No sequences make up >0.1% of the total. | Any sequence exceeds 0.1% threshold. | PCR duplication, adapter contamination, or ribosomal RNA (rRNA) carryover. |

| Per Tile Sequence Quality | Uniform blue color indicating consistent quality across all tiles. | Warm-colored (yellow to red) tiles. | Defective flow-cell tile or bubble during sequencing run. |
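
The Phred thresholds in the table map directly to base-call error probabilities through Q = -10 log10(p). The short sketch below converts in both directions and decodes a FASTQ quality string, assuming the Phred+33 ASCII encoding used by current Illumina output.

```python
import math

def phred_to_error_prob(q):
    """Probability that a base call with Phred score q is wrong."""
    return 10 ** (-q / 10)

def error_prob_to_phred(p):
    """Phred score for a base-call error probability p."""
    return -10 * math.log10(p)

def decode_phred33(quality_string):
    """Convert a FASTQ quality string (Phred+33 encoding) to integer scores."""
    return [ord(ch) - 33 for ch in quality_string]

for q in (20, 28, 30):
    print(f"Q{q}: error probability {phred_to_error_prob(q):.4f}")

scores = decode_phred33("II5#")
print(scores)
```

A score of 20 corresponds to one expected error in 100 base calls and a score of 30 to one in 1,000, which is why values below 20 at read ends are treated as a flag.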

Visualizing the QC Workflow

Diagram Title: RNA-seq Raw Data QC and Decision Workflow

The Scientist's Toolkit: Essential QC Reagents & Solutions

Table 2: Key Research Reagent Solutions for RNA-seq Library QC

| Item | Function in QC Context | Notes |

|---|---|---|

| Bioanalyzer / TapeStation | Assesses RNA integrity (RIN/RQN) and final library fragment size distribution. Critical pre-sequencing QC. | Uses microfluidics/capillary electrophoresis. Agilent 2100 Bioanalyzer or equivalent. |

| Qubit Fluorometer & dsDNA HS Assay | Precisely quantifies the concentration of double-stranded DNA libraries. More accurate for sequencing loading than spectrophotometry. | Uses fluorescent dyes specific to dsDNA, avoiding RNA/carbohydrate interference. |

| SPRIselect Beads | Used for post-library cleanup and size selection (e.g., removing primer dimers). Affects the fragment-size profile of the final library and the residual adapter-dimer signal seen in FastQC. | Beckman Coulter AMPure XP or similar solid-phase reversible immobilization (SPRI) beads. |

| Universal Adapters (Illumina) | Oligonucleotide sequences ligated to fragments for cluster generation and sequencing. Their over-representation is a key metric in FastQC's "Adapter Content" module. | Indexed adapters enable sample multiplexing. |

| Low-Input / Ultra-Low Input RNA Library Kits | Enable library prep from minute amounts of starting RNA (e.g., single-cell or laser-captured samples). QC is especially crucial here due to increased technical noise. | Examples include SMART-Seq and NEBNext Single Cell/Low Input kits. |

| ERCC RNA Spike-In Mix | A set of synthetic RNA controls at known concentrations. Used to evaluate technical sensitivity, dynamic range, and quantification accuracy of the entire workflow. | Spike-in analysis is a separate, powerful QC step beyond FastQC. |

Rigorous assessment of raw reads with FastQC and MultiQC is a non-negotiable prerequisite in RNA-seq data analysis. It directly informs data cleaning steps (e.g., adapter trimming, quality filtering) and provides early warnings for potential technical artifacts that could confound biological interpretation. Mastery of this initial QC phase, as framed within the broader thesis on RNA-seq fundamentals, ensures the integrity and reliability of all subsequent analytical conclusions in research and drug development contexts.

This technical guide addresses a core module of the broader thesis on Basic principles of RNA-seq data analysis research. Following library preparation and sequencing, the accurate alignment of reads to a reference genome and the precise quantification of transcript abundance are foundational steps. This document provides an in-depth comparison of three seminal tools—STAR, HISAT2, and Salmon—detailing their strategies, protocols, and appropriate use cases to inform researchers, scientists, and drug development professionals.

Strategic Philosophies

- STAR (Spliced Transcripts Alignment to a Reference): Employs a sequential, maximum mappable seed search followed by clustering and stitching. It is designed for high sensitivity in detecting canonical and non-canonical splice junctions, making it ideal for de novo splice junction discovery and rapid processing of large datasets.

- HISAT2 (Hierarchical Indexing for Spliced Alignment of Transcripts 2): Uses a hierarchical graph FM-index (GFM) representing a global genome graph and tens of thousands of small local graphs. This strategy balances speed and memory efficiency, excelling in mapping reads across polymorphic or highly similar genomic regions.

- Salmon: Quantifies reads directly against a transcriptome rather than aligning them to the genome, and offers two mapping strategies. In its alignment-free mode, it performs lightweight quasi-mapping to the transcriptome, bypassing traditional alignment for extreme speed. In its selective alignment mode, it scores each candidate mapping with a lightweight alignment step, improving accuracy without the computational cost of traditional aligners.

Quantitative Performance Comparison

Table 1: Comparative Tool Performance Metrics (Representative Data)

| Feature | STAR | HISAT2 | Salmon (Alignment-Free) |

|---|---|---|---|

| Primary Method | Seed-and-Extend Aligner | Hierarchical Graph FM-index | Quasi-mapping + EM Algorithm |

| Speed (CPU hrs) | ~15-30 (for 30M paired-end) | ~10-20 (for 30M paired-end) | ~0.5-1 (for 30M paired-end) |

| RAM Usage | High (~30-40 GB) | Moderate (~8-12 GB) | Low (~4-8 GB) |

| Alignment Rate | High | High | Not Applicable (maps to transcriptome) |

| Splice Awareness | Excellent | Excellent | Requires annotated transcriptome |

| Dependence on Annotation | Beneficial but not required | Beneficial but not required | Required |

| Ideal Use Case | Novel junction discovery, large-scale studies | Polymorphic genomes, standard splicing analysis | Rapid quantification, large-scale meta-analyses |

Experimental Protocols

Protocol A: Standard RNA-seq Alignment Workflow with STAR & FeatureCounts

1. Genome Index Generation:

2. Read Alignment:

3. Transcript Quantification (via FeatureCounts):
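
The three steps of Protocol A can be sketched as argument lists built in Python. This is an illustration under stated assumptions, not the original commands: all paths, the thread count, the 101 bp read length, and the reverse-stranded setting are placeholders, and only widely documented STAR and featureCounts options are used.

```python
import shlex

THREADS = "8"  # assumed thread count

def star_index_cmd(genome_fasta, gtf, index_dir, read_length=101):
    """Step 1: build the STAR genome index."""
    # --sjdbOverhang is conventionally set to read length minus one.
    return ["STAR", "--runMode", "genomeGenerate", "--runThreadN", THREADS,
            "--genomeDir", index_dir, "--genomeFastaFiles", genome_fasta,
            "--sjdbGTFfile", gtf, "--sjdbOverhang", str(read_length - 1)]

def star_align_cmd(index_dir, r1, r2, out_prefix):
    """Step 2: align gzipped paired-end reads and write a coordinate-sorted BAM."""
    return ["STAR", "--runThreadN", THREADS, "--genomeDir", index_dir,
            "--readFilesIn", r1, r2, "--readFilesCommand", "zcat",
            "--outSAMtype", "BAM", "SortedByCoordinate",
            "--outFileNamePrefix", out_prefix]

def featurecounts_cmd(gtf, bam_files, out_file, strand=2):
    """Step 3: count reads per gene with featureCounts."""
    # -p: paired-end input; -s: 0 unstranded, 1 stranded, 2 reversely stranded.
    # Recent Subread releases may also need --countReadPairs to count fragments.
    return ["featureCounts", "-T", THREADS, "-p", "-s", str(strand),
            "-t", "exon", "-g", "gene_id", "-a", gtf, "-o", out_file, *bam_files]

# Hypothetical project layout.
prefix = "aligned/sampleA_"
bam = prefix + "Aligned.sortedByCoord.out.bam"  # STAR's output name for sorted BAMs
steps = [
    star_index_cmd("ref/genome.fa", "ref/annotation.gtf", "ref/star_index"),
    star_align_cmd("ref/star_index", "raw_data/sampleA_R1.fastq.gz",
                   "raw_data/sampleA_R2.fastq.gz", prefix),
    featurecounts_cmd("ref/annotation.gtf", [bam], "counts/counts.txt"),
]
for step in steps:
    print(shlex.join(step))
```

Each list can be passed to subprocess.run(step, check=True) once the tools are installed and the output directories exist.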

Protocol B: Alignment and Quantification with HISAT2 and StringTie2

1. Index Generation (if not using pre-built):

2. Read Alignment:

3. Convert, Sort, and Assemble/Quantify:
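
A comparable sketch for Protocol B chains HISAT2, samtools, and StringTie. The paths and thread count are assumptions. The --dta flag asks HISAT2 for alignments suited to transcript assemblers, and -e restricts StringTie to the transcripts in the supplied annotation.

```python
import shlex

def hisat2_build_cmd(genome_fasta, index_base, threads=8):
    """Step 1: build the HISAT2 index (skip when using a pre-built index)."""
    return ["hisat2-build", "-p", str(threads), genome_fasta, index_base]

def hisat2_align_cmd(index_base, r1, r2, sam_out, threads=8):
    """Step 2: spliced alignment of paired-end reads to SAM."""
    return ["hisat2", "-p", str(threads), "--dta", "-x", index_base,
            "-1", r1, "-2", r2, "-S", sam_out]

def samtools_sort_cmd(sam_in, bam_out, threads=8):
    """Step 3a: convert SAM to a coordinate-sorted BAM."""
    return ["samtools", "sort", "-@", str(threads), "-o", bam_out, sam_in]

def stringtie_cmd(bam_in, gtf, gtf_out, threads=8):
    """Step 3b: quantify annotated transcripts with StringTie."""
    return ["stringtie", bam_in, "-p", str(threads), "-G", gtf, "-e", "-o", gtf_out]

# Hypothetical project layout.
pipeline = [
    hisat2_build_cmd("ref/genome.fa", "ref/hisat2_index/genome"),
    hisat2_align_cmd("ref/hisat2_index/genome", "raw_data/sampleA_R1.fastq.gz",
                     "raw_data/sampleA_R2.fastq.gz", "aligned/sampleA.sam"),
    samtools_sort_cmd("aligned/sampleA.sam", "aligned/sampleA.sorted.bam"),
    stringtie_cmd("aligned/sampleA.sorted.bam", "ref/annotation.gtf", "quant/sampleA.gtf"),
]
for step in pipeline:
    print(shlex.join(step))
```

Dropping -e lets StringTie assemble novel transcripts in addition to quantifying annotated ones.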

Protocol C: Direct Quantification with Salmon

1. Transcriptome Index Creation:

2. Quantification (Alignment-Free Mode):

3. Quantification (Selective Alignment Mode):
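
Protocol C can be sketched in the same way. The paths, thread count, and k-mer size of 31 are assumptions. The --validateMappings flag requests selective alignment; to my understanding, recent Salmon releases enable it by default, so what happens without the flag depends on the installed version.

```python
import shlex

def salmon_index_cmd(transcripts_fasta, index_dir, kmer=31, threads=8):
    """Step 1: build a Salmon index from transcript sequences."""
    return ["salmon", "index", "-t", transcripts_fasta, "-i", index_dir,
            "-k", str(kmer), "-p", str(threads)]

def salmon_quant_cmd(index_dir, r1, r2, out_dir, selective=True, threads=8):
    """Steps 2 and 3: quantify paired-end reads; -l A auto-detects the library type."""
    cmd = ["salmon", "quant", "-i", index_dir, "-l", "A",
           "-1", r1, "-2", r2, "-p", str(threads), "-o", out_dir]
    if selective:
        cmd.append("--validateMappings")  # request selective alignment explicitly
    return cmd

# Hypothetical project layout.
index_cmd = salmon_index_cmd("ref/transcripts.fa", "ref/salmon_index")
quant_cmd = salmon_quant_cmd("ref/salmon_index", "raw_data/sampleA_R1.fastq.gz",
                             "raw_data/sampleA_R2.fastq.gz", "quant/sampleA")
print(shlex.join(index_cmd))
print(shlex.join(quant_cmd))
```

The output directory then holds quant.sf, the per-transcript table that downstream tools such as tximport read.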

Mandatory Visualizations

Title: STAR Two-Pass Alignment and Quantification Workflow

Title: HISAT2 Hierarchical Indexing and Assembly Workflow

Title: Salmon Quasi-mapping and EM Quantification Strategy

Title: Decision Logic for Selecting Alignment/Quantification Tool

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for RNA-seq Alignment & Quantification

| Item | Function in Experiment | Example/Note |

|---|---|---|

| High-Quality Total RNA | Starting biological material. Integrity (RIN > 8) is critical for accurate splicing analysis. | Isolated via column-based kits (e.g., miRNeasy) or TRIzol extraction. |

| Strand-Specific RNA-seq Library Prep Kit | Creates cDNA libraries preserving the original directionality of transcripts. | Illumina TruSeq Stranded mRNA, NEBNext Ultra II Directional RNA. |

| Reference Genome FASTA | The DNA sequence against which reads are aligned. | Human: GRCh38.p14 from GENCODE/UCSC. |

| Annotation File (GTF/GFF3) | Provides coordinates of known genes, transcripts, and exons for alignment guidance and quantification. | GENCODE or Ensembl annotations. |

| Computational Cluster/Server | High-performance computing environment required for memory-intensive alignment tasks. | Minimum 16-32 cores, 64+ GB RAM for mammalian genomes. |

| STAR Aligner Software | Performs fast, sensitive spliced alignment. | https://github.com/alexdobin/STAR |

| HISAT2 Aligner Software | Provides memory-efficient alignment using a graph-based index. | https://daehwankimlab.github.io/hisat2/ |

| Salmon Quantifier Software | Enables ultra-fast transcript-level quantification. | https://github.com/COMBINE-lab/salmon |

| SAM/BAM Tools (samtools) | Utilities for processing and viewing alignment files. | http://www.htslib.org/ |

| Quantification Aggregator (tximport) | Summarizes transcript-level estimates (from Salmon) to gene-level for DEG analysis in R/Bioconductor. | Critical for downstream analysis with tools like DESeq2. |

This whitepaper details a fundamental module within the broader thesis on Basic Principles of RNA-seq Data Analysis Research. The generation of a count matrix from aligned sequencing reads is a critical, quantifiable step that transforms raw genomic data into a structured numerical table suitable for statistical analysis and biological interpretation. This process directly underpins downstream analyses like differential expression, which informs research in functional genomics, biomarker discovery, and therapeutic target identification in drug development.

Core Workflow and Quantitative Metrics

The standard workflow involves processing aligned reads (in BAM/SAM format) to assign them to genomic features (primarily genes) and aggregating these assignments into a counts-per-feature table.

Table 1: Key Quantitative Metrics in Count Matrix Generation

| Metric | Typical Range/Value | Impact on Final Matrix |

|---|---|---|

| Total Reads per Sample | 20-50 million (bulk RNA-seq) | Determines library depth and statistical power. |

| Alignment Rate | >70-90% (species-dependent) | Low rates indicate poor sample or reference quality. |

| Exonic Mapping Rate | >50-70% | Key indicator of RNA enrichment efficacy. |

| Ambiguous Read Fraction | 5-20% (varies with method) | Reads mapping to multiple genes; handled by counting strategy. |

| Duplicate Read Rate | 10-50% (protocol-dependent) | Influenced by PCR amplification; affects variance estimation. |

| Final Genes Detected | 10,000-20,000 (human) | Genes with non-zero counts; depends on sensitivity. |

Detailed Experimental Protocols for Key Steps

Protocol: Read Assignment with FeatureCounts

Objective: Assign aligned reads to gene features using featureCounts (from the Subread package).

- Input: Coordinate-sorted BAM files, a genome annotation file in GTF format.

- Strandedness Specification: Determine library protocol (e.g., unstranded, stranded reverse). Verify using infer_experiment.py from RSeQC. Command: infer_experiment.py -r <bed_file> -i <sample.bam>

- Run featureCounts: Use parameters tailored to the experiment.

Base Command:

- Output: A count matrix file (counts.txt) and a summary file with assignment statistics.
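
Two parts of this protocol lend themselves to a short sketch: translating the infer_experiment.py result into the featureCounts -s value, and reading the resulting table into a count matrix. The 0.8 decision threshold, gene names, and counts are assumptions made for illustration.

```python
def featurecounts_strand_flag(frac_forward, frac_reverse, threshold=0.8):
    """Map infer_experiment.py fractions to the featureCounts -s value.

    frac_forward: fraction of reads explained by "1++,1--,2+-,2-+"
    frac_reverse: fraction of reads explained by "1+-,1-+,2++,2--"
    Returns 1 (stranded), 2 (reversely stranded), or 0 (unstranded).
    """
    if frac_forward >= threshold:
        return 1
    if frac_reverse >= threshold:
        return 2
    return 0

def parse_featurecounts(text):
    """Parse featureCounts output into (sample names, {gene: [counts]})."""
    samples, counts = [], {}
    for line in text.splitlines():
        if not line or line.startswith("#"):
            continue  # skip blanks and the leading comment line
        fields = line.split("\t")
        if fields[0] == "Geneid":
            samples = fields[6:]  # columns 1-6 are annotation, the rest are samples
            continue
        counts[fields[0]] = [int(v) for v in fields[6:]]
    return samples, counts

# Hypothetical featureCounts output for two samples.
example = "\n".join([
    "# Program:featureCounts; Command:...",
    "\t".join(["Geneid", "Chr", "Start", "End", "Strand", "Length", "sampleA.bam", "sampleB.bam"]),
    "\t".join(["GENE1", "chr1", "100", "500", "+", "401", "120", "98"]),
    "\t".join(["GENE2", "chr2", "900", "1500", "-", "601", "0", "7"]),
])
samples, counts = parse_featurecounts(example)
strand = featurecounts_strand_flag(frac_forward=0.02, frac_reverse=0.95)
print(samples, counts, strand)
```

A library with most reads in the second category, as is typical of dUTP-based stranded kits, maps to -s 2. Fractions near 0.5 each indicate an unstranded library.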

Protocol: Handling Ambiguity with Pseudoalignment (Kallisto/Salmon)

Objective: Generate count estimates directly from raw reads using lightweight pseudoalignment, suitable for transcript-level analysis.

- Input: Raw FASTQ files, a transcriptome index.

- Index Building: Create a de Bruijn graph index from transcript sequences.

Command for Kallisto: kallisto index -i <transcriptome.idx> <transcriptome.fasta>

- Quantification: Run the pseudoalignment and expectation-maximization algorithm.

Command for Salmon:

- Aggregation: Use tximport (R/Bioconductor) to summarize transcript abundances to the gene level, generating a gene-level count matrix.
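
The aggregation step amounts to summing transcript-level estimates within each gene. The sketch below illustrates that idea on the contents of a Salmon quant.sf file. It is a conceptual stand-in rather than a replacement for tximport, which also handles transcript-length offsets, and the transcript and gene identifiers are invented.

```python
from collections import defaultdict

def parse_quant_sf(text):
    """Return {transcript_id: NumReads} from the contents of a Salmon quant.sf file."""
    lines = text.strip().splitlines()
    header = lines[0].split("\t")
    name_col, reads_col = header.index("Name"), header.index("NumReads")
    tx_counts = {}
    for line in lines[1:]:
        fields = line.split("\t")
        tx_counts[fields[name_col]] = float(fields[reads_col])
    return tx_counts

def summarize_to_gene(tx_counts, tx2gene):
    """Sum estimated transcript counts within each gene."""
    gene_counts = defaultdict(float)
    for tx, n_reads in tx_counts.items():
        gene_counts[tx2gene[tx]] += n_reads
    return dict(gene_counts)

# Hypothetical quant.sf content and transcript-to-gene map.
quant_sf = "\n".join([
    "\t".join(["Name", "Length", "EffectiveLength", "TPM", "NumReads"]),
    "\t".join(["TX1.1", "1500", "1320.0", "40.1", "120.5"]),
    "\t".join(["TX1.2", "900", "720.0", "18.3", "30.0"]),
    "\t".join(["TX2.1", "2100", "1920.0", "17.2", "75.25"]),
])
tx2gene = {"TX1.1": "GENE1", "TX1.2": "GENE1", "TX2.1": "GENE2"}

gene_counts = summarize_to_gene(parse_quant_sf(quant_sf), tx2gene)
print(gene_counts)
```

Estimated counts are fractional because ambiguous reads are split probabilistically across transcripts. Tools that expect integers typically round them at import.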

Signaling Pathways and Workflow Visualization

Title: RNA-seq Quantification Pathways to Count Matrix

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Tools and Materials for Count Matrix Generation

| Item | Function | Example Product/Software |

|---|---|---|

| Stranded RNA Library Prep Kit | Converts RNA to a strand-specific, sequencing-ready library. | Illumina TruSeq Stranded mRNA, NEBNext Ultra II. |

| Alignment Software | Maps sequencing reads to a reference genome. | STAR, HISAT2, Subread aligner. |

| Genome Annotation File | Defines genomic feature coordinates. | GENCODE, Ensembl, or RefSeq GTF/GFF3 files. |

| Quantification Software | Counts reads per feature or estimates abundance. | featureCounts, HTSeq-count, Kallisto, Salmon. |

| High-Performance Computing (HPC) | Provides computational resources for processing large BAM/FASTQ files. | Local cluster or cloud (AWS, Google Cloud). |

| Quality Control Suite | Assesses alignment and count data quality. | RSeQC, Qualimap, MultiQC. |

| R/Bioconductor Packages | For matrix manipulation and downstream analysis. | tximport, DESeq2, edgeR, SummarizedExperiment. |

| Reference Genome Index | Pre-built index for fast alignment/pseudoalignment. | Generated by STAR, Kallisto index, Salmon index. |

Within the broader thesis on the basic principles of RNA-seq data analysis research, a fundamental objective is the identification of genes with statistically significant differences in expression between experimental conditions. Three core, count-based statistical models have become standard: DESeq2, edgeR, and limma-voom. This guide provides an in-depth technical comparison of their methodologies, applications, and performance.

Core Statistical Models and Quantitative Comparison

DESeq2 and edgeR model RNA-seq counts within a generalized linear model (GLM) framework, assuming a negative binomial (NB) distribution to account for biological variability (dispersion) beyond Poisson sampling error. limma-voom instead fits linear models to log-CPM values, using precision weights derived from the mean-variance trend. Key distinctions lie in how each package estimates dispersion (or variance) and fits its models.
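
The role of the dispersion parameter can be made concrete with the negative binomial mean-variance relationship, variance = mean + dispersion × mean². The sketch below compares it with the Poisson case, where variance equals the mean. The values chosen for the mean and dispersion are arbitrary illustrations.

```python
import math

def nb_variance(mean, dispersion):
    """Variance of a negative binomial count with the given mean and dispersion."""
    return mean + dispersion * mean ** 2

mean_count = 100.0
dispersion = 0.1  # illustrative value

poisson_var = nb_variance(mean_count, 0.0)    # dispersion 0 reduces to Poisson
nb_var = nb_variance(mean_count, dispersion)
bcv = math.sqrt(dispersion)                   # biological coefficient of variation

print(f"Poisson variance: {poisson_var:.0f}")
print(f"NB variance:      {nb_var:.0f}")
print(f"BCV:              {bcv:.3f}")
```

For highly expressed genes the quadratic term dominates, so the square root of the dispersion approximates the coefficient of variation between biological replicates.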

Table 1: Core Algorithmic Comparison of DESeq2, edgeR, and limma-voom

| Feature | DESeq2 | edgeR | limma-voom |

|---|---|---|---|

| Primary Distribution | Negative Binomial | Negative Binomial | Gaussian (after transformation) |

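| Dispersion Estimation | Empirical Bayes shrinkage towards a trended mean, using a prior distribution. | Empirical Bayes shrinkage of gene-wise dispersions towards a common or trended value via Cox-Reid (CR) adjusted profile likelihood; the QL framework additionally moderates gene-wise quasi-likelihood dispersions. | Calculated from mean-variance trend of log-CPMs; incorporated into weights. |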

| Normalization | Median-of-ratios (size factors). | Trimmed Mean of M-values (TMM) or relative log expression (RLE). | Uses normalized log-CPMs (often with TMM). |

| Model Fitting | GLM with iterative dispersion estimation. | GLM with quasi-likelihood (QL) or likelihood ratio test (LRT). | Linear modeling of precision-weighted log-CPMs. |

| Key Strength | Robustness with small sample sizes, stringent control of false positives. | Flexibility with complex designs; QL F-test for reliable error control. | Leverages mature linear modeling infrastructure; excellent for complex designs. |

| Typical Use Case | Standard comparisons, small n, high sensitivity required. | Complex experiments with multiple factors, bulk or single-cell. | Large-scale experiments with many factors or batch effects. |
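
The median-of-ratios normalization listed for DESeq2 can be sketched in a few lines of pure Python. Each sample's size factor is the median, across genes, of its count divided by that gene's geometric mean over all samples, with genes containing a zero count left out. The toy matrix is invented, and the second sample is sequenced twice as deeply as the first.

```python
import math
from statistics import median

def size_factors(count_matrix):
    """Median-of-ratios size factors; count_matrix is a list of gene rows (one count per sample)."""
    n_samples = len(count_matrix[0])
    # Only genes with a non-zero count in every sample have a usable geometric mean.
    usable = [row for row in count_matrix if all(c > 0 for c in row)]
    log_geo_means = [sum(math.log(c) for c in row) / n_samples for row in usable]
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(row[j]) - lg for row, lg in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors

# Hypothetical counts: rows are genes, columns are two samples.
toy_counts = [
    [100, 200],
    [50, 100],
    [10, 20],
    [0, 5],     # dropped: contains a zero
    [30, 600],  # strongly differentially expressed gene
]
sf = size_factors(toy_counts)
normalized = [[c / f for c, f in zip(row, sf)] for row in toy_counts]
print([round(f, 4) for f in sf])
```

Because the median is used, the one strongly changed gene does not distort the factors, and the twofold depth difference between the samples is recovered.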

Table 2: Typical Performance Metrics from Benchmarking Studies

| Metric | DESeq2 | edgeR (QL) | limma-voom | Notes |

|---|---|---|---|---|

| False Discovery Rate (FDR) Control | Generally conservative | Good control with QL | Good control | All three are reliable when assumptions are met. |

| Sensitivity | High | Very High | High | edgeR often recovers most true positives; DESeq2 may be slightly more conservative. |

| Computation Speed | Moderate | Fast | Very Fast | limma-voom benefits from linear model speed. |

| Optimal Sample Size | n ≥ 3-5 per group | n ≥ 2 per group | n ≥ 3-5 per group | All can handle small n, but stability improves with larger n. |

Detailed Experimental Protocols

A standard differential expression (DE) analysis workflow, applicable to all three tools, is outlined below.

Protocol 1: Core RNA-seq DE Analysis Workflow

- Read Counting: Align reads (e.g., using STAR, HISAT2) to a reference genome and generate gene-level count matrices using tools like featureCounts or HTSeq.

- Quality Control: Assess sample-level metrics (library size, distribution of counts, % of ribosomal RNA) and multivariate analysis (PCA, MDS) to identify outliers and batch effects.

- Filtering: Remove lowly expressed genes (e.g., keep only genes with at least 10 counts in at least n samples, where n is the size of the smallest group) to improve power and reduce multiple testing burden.

- Normalization & Modeling:

- DESeq2: Create a DESeqDataSet object, estimate size factors, estimate gene-wise dispersions, shrink dispersions using empirical Bayes, and fit a negative binomial GLM.

- edgeR: Create a DGEList object, calculate normalization factors (calcNormFactors), estimate dispersion (estimateDisp), and fit a GLM (glmQLFit for QL F-test).

- limma-voom: Create a DGEList and calculate normalization factors as in edgeR. Convert counts to log-CPMs, estimate mean-variance relationship, compute observational-level weights. Use lmFit and eBayes on the weighted data.

- Hypothesis Testing: Perform statistical tests (Wald test in DESeq2, QL F-test in edgeR, empirical Bayes moderated t-test in limma-voom) for contrasts of interest.

- Result Interpretation: Apply a multiple testing correction (Benjamini-Hochberg) to control FDR. Genes with an adjusted p-value (FDR) < 0.05 and |log2 fold change| > 1 are commonly considered significant. Conduct downstream enrichment analysis (GO, KEGG).
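
The Benjamini-Hochberg adjustment and the significance thresholds from the last step can be illustrated with a small, self-contained sketch. The gene names, p-values, and fold changes are invented, and in practice the DE packages report adjusted p-values directly.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])  # indices by ascending p-value
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical DE results: gene -> (log2 fold change, raw p-value).
results = {
    "GENE_A": (2.3, 0.01),
    "GENE_B": (-0.4, 0.04),
    "GENE_C": (1.5, 0.03),
    "GENE_D": (-3.1, 0.005),
}
genes = list(results)
padj = benjamini_hochberg([results[g][1] for g in genes])

significant = [
    g for g, q in zip(genes, padj)
    if q < 0.05 and abs(results[g][0]) > 1
]
print(dict(zip(genes, padj)))
print(significant)
```

GENE_B passes the FDR threshold but is excluded by the fold-change filter, which shows how the two criteria act independently.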

Visualization of Workflows and Relationships

Differential Gene Expression Analysis Core Workflow

Conceptual Relationship Between Core DGE Models

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools & Resources for DGE Analysis

| Item | Function in DGE Analysis |

|---|---|

| STAR Aligner | Spliced-aware alignment of RNA-seq reads to a reference genome, producing files for downstream quantification. |

| featureCounts / HTSeq | Summarizes aligned reads into a count matrix per gene (or exon), assigning reads to genomic features. |

| DESeq2 R Package | Implements the DESeq2 model for differential analysis, providing robust normalization and statistical testing. |

| edgeR R Package | Implements the edgeR model, offering high flexibility for complex experimental designs via GLMs. |

| limma + voom R Packages | Provides the linear modeling framework and the voom transformation for handling RNA-seq count data. |

| Reference Genome & Annotation (GTF/GFF) | The genomic sequence and gene structure definitions required for alignment and feature quantification (e.g., GRCh38, GRCm39). |

| R/Bioconductor Environment | The essential open-source software platform for statistical computing and genomic analysis. |

| High-Performance Computing (HPC) Cluster or Cloud Instance | Necessary for processing large-scale RNA-seq data, particularly for alignment and memory-intensive steps. |

This document constitutes a core chapter in a broader thesis on Basic principles of RNA-seq data analysis research. Following the quantification of gene expression and the identification of differentially expressed genes (DEGs), the critical next step is biological interpretation. Downstream functional analysis translates gene lists into mechanistic insights, hypothesizing the biological processes, pathways, and molecular functions perturbed in the experimental condition. This guide details three cornerstone methodologies: Gene Ontology (GO) enrichment analysis, Kyoto Encyclopedia of Genes and Genomes (KEGG) pathway analysis, and Gene Set Enrichment Analysis (GSEA).

Core Methodologies & Quantitative Frameworks

Gene Ontology (GO) Enrichment Analysis

GO provides a controlled, structured vocabulary (ontologies) to describe gene attributes across three domains:

- Biological Process (BP): Broad biological objectives (e.g., "inflammatory response").

- Molecular Function (MF): Molecular-scale activities (e.g., "kinase activity").

- Cellular Component (CC): Locations within the cell (e.g., "nuclear membrane").

Statistical Foundation: Enrichment analysis typically uses a hypergeometric test or Fisher's exact test to determine if DEGs are over-represented in specific GO terms compared to a background gene set (e.g., all genes measured).

Key Metric: The False Discovery Rate (FDR) or adjusted p-value corrects for multiple testing. An FDR < 0.05 is commonly used as a significance threshold.
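
The over-representation test reduces to a hypergeometric tail probability: given N background genes, K of which carry a term, what is the chance that a list of n DEGs contains at least k annotated genes? A minimal sketch using only the standard library, with invented numbers:

```python
from math import comb

def ora_pvalue(k, n, K, N):
    """One-sided hypergeometric p-value P(X >= k).

    N: background genes, K: background genes annotated to the term,
    n: DEGs, k: DEGs annotated to the term.
    """
    upper = min(K, n)
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return tail / comb(N, n)

# Hypothetical example: 10,000 tested genes, 100 in the term, 200 DEGs, 8 overlapping.
N, K, n, k = 10_000, 100, 200, 8
expected_overlap = n * K / N  # overlap expected by chance
p_value = ora_pvalue(k, n, K, N)
print(f"expected overlap: {expected_overlap:.1f}, observed: {k}, p = {p_value:.2e}")
```

This one-sided test is equivalent to Fisher's exact test for enrichment. The resulting p-values across all tested terms are then corrected for multiple testing.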

KEGG Pathway Analysis

KEGG is a database resource integrating genomic, chemical, and systemic functional information. Pathway analysis maps DEGs onto manually curated reference pathways (e.g., MAPK signaling, Glycolysis) to infer activated or suppressed biological systems.

Statistical Foundation: Similar to GO, over-representation analysis (ORA) is used. Advanced methods like Pathway Topology Analysis incorporate gene position and interactions within the pathway.

Gene Set Enrichment Analysis (GSEA)

GSEA differs fundamentally from ORA methods. It evaluates genome-wide expression data (all genes, not just DEGs) against a priori defined gene sets (e.g., from GO, KEGG, or MSigDB). It identifies subtle, concordant changes that may be missed by DEG cut-offs.

Core Algorithm:

- Rank all genes by a correlation metric (e.g., signal-to-noise ratio) between expression and phenotype.

- Calculate an Enrichment Score (ES) by walking down the ranked list, increasing the score when a gene is in the set, decreasing it otherwise.

- The maximal deviation from zero is the final ES.

- Significance is assessed by permutation testing (phenotype or gene set).

- The Normalized Enrichment Score (NES) accounts for gene set size and correlations.

Key Advantage: GSEA can detect modest but coordinated expression changes in biologically related genes.
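
The running-sum statistic at the heart of the algorithm above can be sketched as follows. This is a simplified illustration of the weighted enrichment score only, with no permutation testing or normalization, and the genes and scores are invented.

```python
def enrichment_score(ranked_genes, scores, gene_set, weight=1.0):
    """Weighted running-sum enrichment score for a ranked gene list.

    ranked_genes and scores must be sorted from most positive to most negative score.
    """
    hits = [g in gene_set for g in ranked_genes]
    n_miss = len(ranked_genes) - sum(hits)
    hit_total = sum(abs(s) ** weight for s, h in zip(scores, hits) if h)
    running, best = 0.0, 0.0
    for s, h in zip(scores, hits):
        # Step up (scaled by the gene's score) on a hit, step down uniformly on a miss.
        running += abs(s) ** weight / hit_total if h else -1.0 / n_miss
        if abs(running) > abs(best):
            best = running  # keep the maximal deviation from zero
    return best

# Hypothetical ranked list.
ranked_genes = ["g1", "g2", "g3", "g4", "g5", "g6"]
scores = [3.0, 2.0, 1.0, -1.0, -2.0, -3.0]

es_top = enrichment_score(ranked_genes, scores, {"g1", "g2"})
es_bottom = enrichment_score(ranked_genes, scores, {"g5", "g6"})
print(es_top, es_bottom)
```

A gene set concentrated at the top of the list yields a positive score and one concentrated at the bottom a negative score. Permutations then establish how extreme each score is.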

Table 1: Comparison of Core Functional Analysis Methods

| Feature | GO Enrichment / KEGG ORA | GSEA |

|---|---|---|

| Input Requirement | A list of significant DEGs (threshold-based). | The entire, ranked genome-wide expression dataset. |

| Underlying Question | Are my DEGs over-represented in a specific functional set? | Are genes in a pre-defined set coordinately up/down-regulated, without stringent DEG cut-off? |

| Statistical Test | Typically Hypergeometric / Fisher's Exact Test. | Kolmogorov-Smirnov-like running sum statistic. |

| Primary Output | Enriched terms/pathways with p-value/FDR. | Enriched gene sets with NES, p-value, and FDR. |

| Sensitivity | May miss subtle, coordinated changes across many genes. | Designed to capture broader, subtle shifts in expression. |

| Leading Edge | Not provided. | Identifies the subset of genes contributing most to the enrichment signal. |

Detailed Experimental Protocols

Protocol 4.1: Standard Over-Representation Analysis (GO/KEGG)

- DEG Identification: Generate a list of gene identifiers (e.g., Entrez IDs) for significantly up- and down-regulated genes from RNA-seq analysis (e.g., DESeq2, edgeR). Common threshold: |log2FC| > 1 & adjusted p-value < 0.05.

- Background Definition: Define a suitable background list (e.g., all genes expressed and tested in the experiment).

- Tool Execution: Use R packages (clusterProfiler, topGO) or web tools (DAVID, g:Profiler).

- R (clusterProfiler) Example:

- Result Interpretation: Filter and sort results by Count (number of DEGs in term) and adjusted p-value. Visualize via dotplot or barplot.

Protocol 4.2: Gene Set Enrichment Analysis (GSEA)

- Data Preparation: Create a ranked gene list. The ranking metric is often -log10(p-value) multiplied by the sign of the log2 fold change.

- Gene Set Selection: Download or select relevant gene sets (e.g., "c2.cp.kegg.v7.5.1.symbols.gmt" from MSigDB).

- Tool Execution: Use GSEA software (Broad Institute) or R packages (fgsea, clusterProfiler for GSEA).

- R (fgsea) Example:

- Result Interpretation: Identify significant gene sets (FDR < 0.25 is a common, lenient threshold per Broad's recommendation). Analyze the enrichment plot, which visualizes the ES, ranked list, and leading edge.
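
The ranking metric from the data preparation step, -log10(p-value) carrying the sign of the log2 fold change, can be computed as in the sketch below. The gene names and statistics are invented, and the small floor on the p-value is an added assumption to avoid taking the logarithm of zero.

```python
import math

def rank_metric(log2fc, pvalue, floor=1e-300):
    """Signed significance: sign(log2FC) * -log10(p-value)."""
    sign = (log2fc > 0) - (log2fc < 0)
    return sign * -math.log10(max(pvalue, floor))

# Hypothetical DE statistics: gene -> (log2 fold change, p-value).
de_stats = {
    "GENE_A": (2.0, 1e-8),
    "GENE_B": (-1.5, 1e-5),
    "GENE_C": (0.3, 0.2),
    "GENE_D": (-0.1, 0.9),
}
metric = {g: rank_metric(lfc, p) for g, (lfc, p) in de_stats.items()}
ranked = sorted(metric, key=metric.get, reverse=True)
print(ranked)
```

Strongly up-regulated genes end up at the top of the list and strongly down-regulated genes at the bottom, which is the ordering pre-ranked GSEA tools expect.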

Visualizations

Diagram 1: Workflow of Functional Analysis Methods

Diagram 2: Example KEGG Pathway with DEG Overlay

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Functional Analysis

| Item / Resource | Category | Primary Function & Explanation |

|---|---|---|

| clusterProfiler (R/Bioconductor) | Software Package | Integrative tool for ORA and GSEA of GO terms and KEGG pathways. Streamlines statistical analysis and visualization. |

| fgsea (R package) | Software Package | Fast, efficient algorithm for pre-ranked GSEA, allowing rapid testing of many gene sets. |

| MSigDB (Molecular Signatures Database) | Gene Set Collection | Curated collection of >30,000 gene sets for GSEA, including Hallmark, KEGG, and Reactome pathways. |

| DAVID / g:Profiler | Web Service | User-friendly web servers for performing GO and pathway ORA without programming. |

| GSEA Software (Broad Institute) | Standalone Software | Original, powerful Java-based desktop application for GSEA with extensive visualization and reporting. |

| OrgDb Packages (e.g., org.Hs.eg.db) | Annotation Database | Species-specific R packages providing gene identifier mappings and GO annotations. Essential for linking gene IDs to functional data. |

| KEGG REST API / KEGG.db | Pathway Database Access | Provides programmatic or local access to current KEGG pathway maps and gene-pathway associations. |

| ggplot2 / enrichplot (R) | Visualization Package | Critical for creating publication-quality plots (dotplots, barplots, enrichment plots, cnetplots) of results. |

This whitepaper details advanced applications of RNA-sequencing, building upon the basic principles of bulk RNA-seq data analysis. While bulk RNA-seq provides an average gene expression profile for a tissue sample, it obscures cellular heterogeneity and spatial organization. Single-cell and spatial transcriptomics are transformative extensions that resolve these limitations, enabling the discovery of novel cell types, developmental trajectories, and tissue microenvironments critical for both fundamental biology and targeted drug development.

Core Technologies & Quantitative Comparisons

Table 1: Comparison of Key Transcriptomic Technologies

| Feature | Bulk RNA-seq | Single-Cell RNA-seq (scRNA-seq) | Spatial Transcriptomics (10x Visium) |

|---|---|---|---|

| Resolution | Tissue-average (millions of cells) | Single-cell | Near-single-cell / Multi-cell (55μm spots) |

| Primary Output | Aggregate gene expression matrix | Cell-by-gene matrix | Spot-by-gene matrix with spatial coordinates |

| Key Metric | Reads per gene per sample | Unique Molecular Identifiers (UMIs) per cell | UMIs per spot |

| Typical Cells/Spots | 1 sample = 1 data point | 1,000 - 10,000+ cells per run | ~5,000 spots per tissue section |

| Spatial Context | Lost | Lost | Preserved |

| Main Application | Differential expression between conditions | Cell type discovery, heterogeneity, trajectories | Tissue architecture, spatially-resolved expression |

Table 2: Current Performance Metrics (2023-2024)

| Platform/Assay | Median Genes/Cell (3' scRNA-seq) | Median Reads/Cell | Recommended Cells/Lane | Spatial Spot Resolution |

|---|---|---|---|---|

| 10x Genomics Chromium X | 2,000 - 4,000 | 50,000 | 10,000 - 20,000 | 55μm (Visium) |

| Parse Biosciences Evercode | 3,000 - 6,000 | 50,000+ | Up to 1 million+ (pooled) | N/A |

| NanoString CosMx SMI | N/A | N/A | N/A | ~0.5-1μm (subcellular) |

| Vizgen MERSCOPE | N/A | N/A | N/A | ~0.5-1μm (subcellular) |

Detailed Experimental Protocols

Protocol: 10x Genomics Single-Cell 3' RNA-seq (v3.1/v3.1 Dual Index)

Objective: To generate single-cell gene expression profiles from a fresh or frozen cell suspension.

Key Reagents: Chromium Next GEM Chip G, Single Cell 3' Gel Beads, Partitioning Oil.

- Cell Preparation: Generate a high-viability (>90%) single-cell suspension. Adjust concentration to 700-1,200 cells/μL in PBS + 0.04% BSA.

- Master Mix Preparation: Combine the reverse transcription (RT) Master Mix with the cell suspension. The Gel Beads, which carry barcoded oligonucleotides (cell barcode, UMI, poly-dT), are loaded separately onto the chip.

- Partitioning & Barcoding: Load the master mix onto a Chromium Chip. Microfluidic partitioning co-encapsulates a single cell, a single Gel Bead, and RT reagents into a droplet in oil (GEM). Within each GEM, the Gel Bead dissolves, and poly-dT primers bind mRNA for reverse transcription. This step labels all cDNA from a single cell with a unique cell barcode and each transcript with a unique molecular identifier (UMI).

- Post GEM-RT: Break droplets, pool barcoded cDNA. Perform cleanup with Silane magnetic beads.

- Library Construction: Amplify cDNA via PCR, followed by enzymatic fragmentation, end-repair, A-tailing, and adapter ligation. A final index PCR adds sample indices for multiplexing.

- Sequencing: Libraries are sequenced on an Illumina platform (e.g., NovaSeq 6000). Standard read configuration: Read 1 (28 cycles: cell barcode + UMI), i7 index (10 cycles), i5 index (10 cycles), Read 2 (90 cycles: transcript).
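The Read 1 layout described in the sequencing step can be illustrated with a minimal sketch. It assumes the 28 cycles split into a 16-nt cell barcode followed by a 12-nt UMI (the usual v3.1 layout); the function name `split_read1` and the example sequence are hypothetical, and production pipelines such as Cell Ranger also perform barcode whitelist correction, which is omitted here.

```python
from typing import NamedTuple

# Assumed layout of Read 1 for 3' v3.1 chemistry: 16-nt cell barcode + 12-nt UMI.
CELL_BARCODE_LEN = 16
UMI_LEN = 12


class Read1Fields(NamedTuple):
    cell_barcode: str
    umi: str


def split_read1(seq: str) -> Read1Fields:
    """Split a Read 1 sequence into its cell barcode and UMI."""
    expected = CELL_BARCODE_LEN + UMI_LEN
    if len(seq) < expected:
        raise ValueError(f"Read 1 is {len(seq)} nt; expected at least {expected}")
    return Read1Fields(
        cell_barcode=seq[:CELL_BARCODE_LEN],
        umi=seq[CELL_BARCODE_LEN:expected],
    )


# Made-up 28-nt read for illustration only.
read1 = "AAACCCAAGAAACACT" + "GGTTCCAATTGG"
fields = split_read1(read1)
print(fields.cell_barcode, fields.umi)
```

Read 2 carries the transcript sequence, so the barcode and UMI from Read 1 are what tie each aligned Read 2 back to a cell and an original molecule.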

Protocol: 10x Genomics Visium Spatial Gene Expression

Objective: To generate spatially-resolved, whole-transcriptome data from an intact tissue section. Key Reagents: Visium Spatial Tissue Optimization Slide & Kit, Visium Spatial Gene Expression Slide & Kit.

- Tissue Optimization (TO): Prior to the main experiment, perform a fluorescent imaging-based optimization on a consecutive section to determine the optimal permeabilization time (e.g., 12, 18, 24 minutes) for releasing RNA from the fixed tissue.

- Tissue Preparation: Fresh-frozen tissue is cryosectioned at 10μm thickness and placed onto the Visium Gene Expression Slide. Each slide contains four capture areas, each with ~5,000 barcoded spots in a known spatial layout. Each spot contains millions of oligonucleotides with a unique spatial barcode, a UMI, and a poly-dT sequence.

- Fixation & Staining: Tissue is fixed, stained with H&E, and imaged for morphological context.

- Permeabilization & Capture: Tissue is permeabilized (using the optimized time from TO) to release mRNA, which diffuses and binds to the spatially-barcoded oligonucleotides on the adjacent spots.

- On-Slide Reverse Transcription: Reverse transcription occurs on the slide, creating spatially-barcoded cDNA.

- Library Construction & Sequencing: cDNA is harvested, amplified, and processed into an Illumina-compatible sequencing library. Sequencing follows a similar structure to scRNA-seq, with reads mapping back to the spatial coordinates via the spot-specific barcodes.
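How reads map back to spatial coordinates via spot-specific barcodes can be sketched as follows. This is a toy illustration, not the Space Ranger implementation: the barcode-to-coordinate table `SPOT_COORDS`, the shortened 4-nt barcodes, and the function `count_umis_per_spot` are all hypothetical.

```python
from collections import defaultdict

# Hypothetical lookup from spatial barcode to (row, col) on the capture area.
# Real slides ship with a full barcode whitelist; 4-nt barcodes are used for brevity.
SPOT_COORDS = {
    "AAAC": (0, 0),
    "CCGT": (0, 2),
}


def count_umis_per_spot(reads):
    """Count unique UMIs per (spot coordinate, gene).

    `reads` is an iterable of (spatial_barcode, umi, gene) tuples.
    Reads whose barcode is not in the lookup table are discarded.
    """
    seen = defaultdict(set)
    for barcode, umi, gene in reads:
        coords = SPOT_COORDS.get(barcode)
        if coords is None:
            continue  # barcode does not correspond to a known spot
        seen[(coords, gene)].add(umi)
    return {key: len(umis) for key, umis in seen.items()}


example_reads = [
    ("AAAC", "U1", "GeneA"),
    ("AAAC", "U1", "GeneA"),  # same UMI again: a PCR duplicate, counted once
    ("AAAC", "U2", "GeneA"),
    ("CCGT", "U1", "GeneB"),
    ("TTTT", "U9", "GeneA"),  # unknown barcode, dropped
]
spot_counts = count_umis_per_spot(example_reads)
print(spot_counts)
```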

Visualizations

Diagram Title: Workflow for Single-Cell RNA Sequencing

Diagram Title: Workflow for Visium Spatial Transcriptomics

Diagram Title: Core Bioinformatic Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for scRNA-seq & Spatial Transcriptomics

| Item | Function & Explanation |

|---|---|

| Chromium Chip & Reagents (10x) | Microfluidic consumables for deterministic partitioning of cells into nanoliter-scale droplets (GEMs) for barcoding. |

| Visium Spatial Gene Expression Slide | Glass slide with patterned capture areas containing spatially-barcoded oligos. The core substrate for spatial transcriptomics. |

| Single Cell 3' Gel Beads | Polymer beads containing millions of copies of a barcoded oligonucleotide (Cell Barcode + UMI + Poly-dT) for labeling cellular mRNA. |

| Partitioning Oil | Creates a stable emulsion, isolating individual GEMs to prevent barcode mixing between cells. |

| DTT (Dithiothreitol) | Reducing agent used in tissue permeabilization for Visium to break disulfide bonds, enhancing RNA accessibility. |

| SSC Buffer (Saline-Sodium Citrate) | Used in Visium wash steps; ionic strength affects hybridization stringency and background. |

| Silane Magnetic Beads | Workhorse for post-RT cleanup, size selection, and library purification by binding nucleic acids. |

| SPRIselect Beads | Size-selective magnetic beads for precise fragment selection during library construction. |

| SMP (Sample Multiplexing) Oligos | For cell hashing or multiplexing samples in a single run, reducing costs and batch effects. |

| Viability Dye (e.g., DAPI, PI) | Critical for assessing cell suspension health pre-loading; dead cells increase background noise. |

Debugging Your RNA-Seq Analysis: Solving Common Issues and Optimizing Results

Diagnosing and Remedying Poor Sequencing Quality and Low-Complexity Libraries

Within the broader thesis on the basic principles of RNA-seq data analysis, the integrity of the sequencing library is paramount. Poor sequencing quality and low-complexity libraries are critical failure points that can invalidate downstream differential expression and pathway analysis. This technical guide details systematic diagnostic approaches and robust experimental remedies, ensuring data reliability for research and drug development.

Diagnosing Sequencing Quality Issues

Key Quality Metrics and Interpretation

Sequencing quality is quantifiable through several key metrics derived from the raw base call files (BCL or FASTQ). Systematic monitoring of these metrics is the first line of defense.

Table 1: Core Sequencing Quality Metrics and Thresholds

| Metric | Description | Optimal Range | Threshold for Concern |

|---|---|---|---|

| Q-score (Phred Score) | Log-scaled estimate of the probability P of an incorrect base call (Q = -10 log10 P). Q30 = 99.9% accuracy. | ≥ Q30 for >80% of bases. | < Q30 for >20% of bases. |

| % Bases ≥ Q30 | Percentage of bases with a Phred score of 30 or higher. | > 80% | < 75% |

| Total Reads | Total number of sequenced reads. | Project-dependent. | Significant deviation from expected yield. |

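| Cluster Density | Number of clusters per mm² on the flow cell. | Optimal range varies by instrument and chemistry (e.g., roughly 170-220 K/mm² on NextSeq 500/550; patterned flow cells such as NovaSeq report % occupancy instead). | Too high: overlapping clusters and reduced % PF; Too low: poor yield. |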

| % PF (Pass Filter) | Percentage of clusters passing internal chastity/purity filters. | > 80% | < 70% |

| Phasing/Prephasing | Loss of sync within clusters during sequencing-by-synthesis. | < 0.25% per cycle | > 0.5% per cycle |

| Average Read Length | Mean length of sequenced reads. | As per library prep design. | Shorter than expected. |
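The Q-score and % Bases ≥ Q30 rows in Table 1 follow directly from the Phred definition, Q = -10 log10(P). The sketch below is a minimal illustration that assumes Phred+33 quality encoding (the standard for current Illumina FASTQ files); the function names are hypothetical.

```python
def phred_to_error_prob(q: float) -> float:
    """Convert a Phred quality score to the probability of a wrong base call."""
    return 10 ** (-q / 10)


def fraction_q30(quality_string: str, offset: int = 33, threshold: int = 30) -> float:
    """Fraction of bases in one FASTQ quality string at or above the threshold.

    Assumes Phred+33 ASCII encoding unless another offset is given.
    """
    if not quality_string:
        raise ValueError("empty quality string")
    scores = [ord(ch) - offset for ch in quality_string]
    return sum(score >= threshold for score in scores) / len(scores)


# Q30 corresponds to a 1-in-1000 chance of error, i.e. 99.9% accuracy.
print(phred_to_error_prob(30))

# 'I' encodes Q40 and '#' encodes Q2 under Phred+33.
print(fraction_q30("IIII####"))
```

In practice this fraction is computed over all reads in a run rather than a single quality string, but the per-base logic is the same.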

Visual Diagnostics with FastQC and MultiQC

Experimental Protocol: Initial Quality Assessment

- Tool: FastQC (v0.12.0+) for individual samples; MultiQC (v1.15+) for aggregate reporting.

- Input: Unaligned FASTQ files (raw data).

- Command (FastQC does not create the output directory, so make it first):

mkdir -p ./qc_results/

fastqc sample_R1.fastq.gz sample_R2.fastq.gz -o ./qc_results/

- Aggregation:

multiqc ./qc_results/ -o ./multiqc_report/

- Critical Modules to Inspect:

- Per Base Sequence Quality: Look for drops in quality at read starts/ends.

- Per Sequence Quality Scores: Should be a single, sharp peak.

- Sequence Duplication Levels: High duplication (>50%) can indicate low complexity, although highly expressed transcripts naturally inflate this metric in RNA-seq.

- Overrepresented Sequences: Identifies adapter contamination or PCR artifacts.

- Adapter Content: Quantifies the proportion of adapter sequence in reads.
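Checking the critical modules listed above can be partly automated. The sketch below assumes the tab-separated `summary.txt` that FastQC writes inside each report archive, with one `STATUS<TAB>module<TAB>filename` line per module; the function name `flag_modules` and the example text are illustrative, not FastQC output from a real run.

```python
# Modules singled out in the text, compared case-insensitively.
WATCHED_MODULES = {
    "per base sequence quality",
    "per sequence quality scores",
    "sequence duplication levels",
    "overrepresented sequences",
    "adapter content",
}


def flag_modules(summary_text: str) -> dict:
    """Return {module: status} for watched modules that did not PASS."""
    flagged = {}
    for line in summary_text.splitlines():
        parts = line.split("\t")
        if len(parts) < 2:
            continue  # skip blank or malformed lines
        status, module = parts[0], parts[1]
        if module.lower() in WATCHED_MODULES and status != "PASS":
            flagged[module] = status
    return flagged


# Illustrative summary content (made up for this example).
SUMMARY_EXAMPLE = (
    "PASS\tBasic Statistics\tsample_R1.fastq.gz\n"
    "FAIL\tPer base sequence quality\tsample_R1.fastq.gz\n"
    "WARN\tSequence Duplication Levels\tsample_R1.fastq.gz\n"
    "PASS\tAdapter Content\tsample_R1.fastq.gz\n"
)
flagged = flag_modules(SUMMARY_EXAMPLE)
print(flagged)
```

FastQC's WARN and FAIL thresholds were designed with genomic DNA in mind, so a flagged module in RNA-seq data is a prompt to inspect the plot rather than an automatic failure.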

Diagram Title: RNA-seq Initial Quality Control Workflow

Diagnosing and Remedying Low-Complexity Libraries

Low-complexity libraries, characterized by high levels of PCR duplication and limited unique molecular diversity, stem from insufficient starting material, poor RNA quality, or suboptimal PCR amplification.

Diagnostic Criteria

- High Duplication Rate: >50-60% of reads are marked as duplicates by tools like Picard MarkDuplicates.

- Skewed Gene Body Coverage: 3' bias in coverage plots due to RNA degradation.

- Low Number of Detected Genes: Significantly fewer genes detected compared to similar studies.
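Two of these criteria reduce to simple arithmetic. The sketch below is a deliberately simplified illustration: it treats reads with identical (chromosome, position, strand) as duplicates, whereas Picard MarkDuplicates also considers mate positions and orientation. The function names and example data are hypothetical.

```python
from collections import Counter


def duplication_rate(alignments) -> float:
    """Fraction of reads that are extra copies of an already-seen alignment.

    `alignments` is an iterable of (chrom, pos, strand) tuples.
    """
    counts = Counter(alignments)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    duplicates = total - len(counts)  # every copy beyond the first
    return duplicates / total


def detected_genes(gene_counts: dict, min_count: int = 1) -> int:
    """Number of genes with at least `min_count` assigned reads."""
    return sum(1 for count in gene_counts.values() if count >= min_count)


example_alignments = [
    ("chr1", 100, "+"),
    ("chr1", 100, "+"),
    ("chr1", 100, "+"),
    ("chr2", 500, "-"),
    ("chr3", 42, "+"),
]
print(duplication_rate(example_alignments))  # 2 of 5 reads are extra copies
print(detected_genes({"GeneA": 12, "GeneB": 0, "GeneC": 3}))
```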

Experimental Remedies and Protocols

A. Improving Input RNA Quality

- Protocol: RNA Integrity Number (RIN) Assessment

- Tool: Agilent Bioanalyzer or TapeStation.

- Reagent: RNA Integrity Kit (e.g., Agilent RNA 6000 Nano Kit).

- Procedure: Load 1 µL of total RNA. Electrophoretic separation generates an electropherogram.

- Analysis: RIN > 8.0 is ideal for standard mRNA-seq. RIN 7-8 may be acceptable with specialized kits. For RIN < 7, consider ribo-depletion and use of UMIs.

B. Utilizing Unique Molecular Identifiers (UMIs)

UMIs are short, random barcodes attached to each original molecule before PCR amplification, allowing bioinformatic distinction between PCR duplicates and genuinely independent molecules that happen to share the same sequence.

- Protocol: UMI-Based Library Preparation (Example)

- Fragmentation & Reverse Transcription: Perform first-strand synthesis using a primer containing a cell barcode and a random UMI (e.g., 10bp N sequence).

- Second Strand Synthesis & Library Construction: Proceed with standard library prep (end repair, A-tailing, adapter ligation).

- PCR Amplification: Use limited PCR cycles (8-12).

- Bioinformatic Processing: Use tools like UMI-tools or fgbio to extract UMIs, correct errors, and deduplicate reads based on genomic coordinates and UMI identity.
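The deduplication logic in the final step can be sketched in a few lines. This is a simplified greedy variant written for illustration: reads at the same position are grouped, and a UMI within one mismatch of a more abundant UMI is treated as a sequencing or PCR error of it. UMI-tools' default "directional" method uses a more careful count-aware network, so this sketch should not be read as its algorithm. All names and data are hypothetical.

```python
from collections import Counter, defaultdict


def hamming(a: str, b: str) -> int:
    """Number of mismatching positions between two equal-length strings."""
    return sum(x != y for x, y in zip(a, b))


def count_molecules(reads, max_mismatch: int = 1) -> dict:
    """Estimate unique molecules per position from (chrom, pos, umi) reads."""
    by_position = defaultdict(Counter)
    for chrom, pos, umi in reads:
        by_position[(chrom, pos)][umi] += 1

    molecules = {}
    for position, umi_counts in by_position.items():
        kept = []
        # Visit UMIs from most to least abundant (ties broken alphabetically).
        ordered = sorted(umi_counts.items(), key=lambda kv: (-kv[1], kv[0]))
        for umi, _count in ordered:
            # A UMI close to an already-kept one is assumed to be an error copy.
            if not any(hamming(umi, k) <= max_mismatch for k in kept):
                kept.append(umi)
        molecules[position] = len(kept)
    return molecules


example_reads = [
    ("chr1", 100, "AACG"),
    ("chr1", 100, "AACG"),
    ("chr1", 100, "AACG"),
    ("chr1", 100, "AACT"),  # one mismatch from AACG: merged into it
    ("chr1", 100, "GGTA"),  # distinct molecule at the same position
    ("chr1", 250, "AACG"),  # same UMI, different position: separate molecule
]
molecule_counts = count_molecules(example_reads)
print(molecule_counts)
```

Without UMIs, the five reads at chr1:100 would collapse to a single molecule under coordinate-only deduplication; with UMIs, two molecules are recovered.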

Diagram Title: UMI Integration for Library Complexity Rescue

C. Optimizing PCR Amplification

- Protocol: PCR Cycle Titration

- Setup: Aliquot identical library prep reactions post-adapter ligation.

- Amplification: Amplify aliquots with 8, 10, 12, and 14 cycles of PCR.

- Assessment: Run products on a Bioanalyzer. Select the lowest cycle number that yields a sufficient library concentration (e.g., >15 nM). Excessive cycling (>15 cycles) dramatically increases duplicate rates.
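The assessment step amounts to a unit conversion followed by a threshold check. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA to convert ng/µL to nM, then picks the lowest cycle number above the 15 nM example threshold. The function names and the titration values are made up for illustration.

```python
def ng_per_ul_to_nM(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA library concentration from ng/uL to nM.

    Uses the approximation of 660 g/mol per base pair.
    """
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def pick_cycles(titration_nM: dict, min_nM: float = 15.0):
    """Return the lowest PCR cycle number whose yield exceeds `min_nM`.

    `titration_nM` maps cycle number -> measured concentration in nM.
    Returns None if no aliquot reaches the threshold.
    """
    passing = [cycles for cycles, conc in titration_nM.items() if conc > min_nM]
    return min(passing) if passing else None


# A 4 ng/uL library with a 400 bp mean fragment size is about 15.2 nM.
print(round(ng_per_ul_to_nM(4.0, 400), 2))

# Hypothetical titration results (cycles -> nM).
titration = {8: 4.0, 10: 11.5, 12: 22.0, 14: 60.0}
best_cycles = pick_cycles(titration)
print(best_cycles)
```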

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Quality RNA-seq Library Preparation

| Reagent / Kit | Primary Function | Key Consideration for Quality/Complexity |

|---|---|---|

| RNase Inhibitors (e.g., Recombinant RNasin) | Inhibits RNase activity during RNA isolation and handling. | Critical for preserving full-length transcripts and preventing 3' bias. |

| Magnetic Bead-based Cleanup Systems (SPRIselect) | Size selection and purification of cDNA/library fragments. | Prefer over column-based methods for better recovery and size precision. |

| Ribo-depletion Kits (e.g., Illumina Ribo-Zero Plus) | Removes ribosomal RNA from total RNA. | Essential for degraded samples (low RIN) to retain informative reads. |

| Strand-Specific Library Prep Kits (e.g., Illumina TruSeq Stranded mRNA) | Preserves strand-of-origin information during cDNA synthesis. | Reduces ambiguity in alignment, improving the accuracy of gene quantification. |

| UMI Adapter Kits (e.g., IDT for Illumina UMI Adapters) | Provides unique molecular identifiers during adapter ligation. | The definitive solution for accurate quantification and PCR duplicate removal. |

| High-Fidelity PCR Master Mix (e.g., KAPA HiFi, NEBNext Ultra II Q5) | Amplifies library with high fidelity and minimal bias. | Reduces PCR errors and over-amplification artifacts. |

| qPCR Library Quantification Kit (e.g., KAPA SYBR Fast) | Accurate, sensitive quantification of amplifiable library molecules. | Prevents over- or under-loading of the sequencer flow cell. |

Integrated Remediation Workflow

A systematic approach is required when quality issues are identified.

Table 3: Decision Matrix for Common RNA-seq Issues

| Symptom (From FastQC/MultiQC) | Potential Cause | Diagnostic Follow-up | Remedial Action |

|---|---|---|---|

| Poor per-base quality at read ends | Signal decay on sequencer. | Check phasing/prephasing metrics from sequencing run. | Trim reads with Trimmomatic or Cutadapt. |

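| High adapter content | Short inserts that let reads run into the adapter, or adapter dimers, from insufficient size selection. | Review Bioanalyzer trace post-library prep. | Trim adapters with Cutadapt or Trimmomatic; re-run size selection with more stringent bead ratios. |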

| High sequence duplication | Low input RNA, over-amplification. | Check input RIN and PCR cycle count. | Re-prep with more input (if available), use UMIs, reduce PCR cycles. |

| Low number of detected genes | Poor RNA quality, inefficient capture. | Check RIN and ribosomal RNA ratio (Bioanalyzer). | Use ribo-depletion kit, consider SMARTer or other low-input protocols. |

| Skewed gene body coverage (3' bias) | Partially degraded RNA. | Confirm low RIN value. | Use a protocol designed for degraded RNA (e.g., stranded with ribo-depletion and random priming). |
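Part of the decision matrix can be encoded as a small triage helper. The sketch below covers three rows of Table 3 and is only an illustration: the duplication (>50%) and RIN (<7) cutoffs come from earlier in this section, while the 5% adapter cutoff is an assumption chosen for the example. The function name `triage` is hypothetical.

```python
def triage(duplication=None, adapter_fraction=None, rin=None,
           dup_threshold=0.50, adapter_threshold=0.05, rin_threshold=7.0):
    """Map basic QC metrics to suggested follow-up actions from Table 3.

    Any metric left as None is skipped. The adapter threshold is an
    assumed value for illustration, not a fixed standard.
    """
    actions = []
    if duplication is not None and duplication > dup_threshold:
        actions.append(
            "High duplication: check input RIN and PCR cycle count; "
            "re-prep with more input, use UMIs, reduce PCR cycles."
        )
    if adapter_fraction is not None and adapter_fraction > adapter_threshold:
        actions.append(
            "High adapter content: review Bioanalyzer trace; "
            "re-run size selection with more stringent bead ratios."
        )
    if rin is not None and rin < rin_threshold:
        actions.append(
            "Low RIN: expect 3' bias; use a protocol designed for degraded RNA "
            "(ribo-depletion with random priming)."
        )
    return actions


for suggestion in triage(duplication=0.62, adapter_fraction=0.01, rin=6.2):
    print(suggestion)
```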

Diagram Title: Integrated Diagnostic and Remediation Pathway

Robust RNA-seq analysis is built upon the foundation of high-quality, complex sequencing libraries. By rigorously applying the diagnostic metrics and experimental protocols outlined herein—from initial QC with FastQC to the strategic implementation of UMIs and PCR optimization—researchers can proactively identify and correct common pitfalls. This systematic approach ensures the generation of biologically meaningful data, fulfilling a core tenet of the basic principles of RNA-seq data analysis and enabling reliable discovery in research and drug development.

Within the foundational principles of RNA-seq data analysis research, a critical step is the accurate alignment of sequencing reads to a reference genome. Low alignment rates present a significant bottleneck, undermining downstream quantification and interpretation. This technical guide dissects the principal causes rooted in reference genome issues and provides actionable, evidence-based solutions.

Core Causes of Low Alignment Rates