The Complete Guide to Bulk RNA Sequencing: From Foundational Principles to Clinical Applications

Abstract

This comprehensive guide details the end-to-end workflow of bulk RNA sequencing, a powerful and cost-effective method for profiling average gene expression across cell populations. Tailored for researchers and drug development professionals, it covers foundational concepts, best-practice methodologies from library prep to data analysis, and critical troubleshooting for experimental design. The article further explores advanced applications like computational deconvolution and integrated multi-omic assays, providing a validated framework for leveraging bulk RNA-seq in both basic research and clinical translation, ultimately enabling robust biomarker discovery and therapeutic development.

Bulk RNA-Seq Fundamentals: Unraveling Transcriptomic Averages

Bulk RNA Sequencing (RNA-seq) is a powerful genomic technique designed to measure the average gene expression levels across populations of cells. When applied to complex tissues, it provides a global transcriptomic profile, capturing the collective messenger RNA (mRNA) content from the heterogeneous cell types present in a sample. This technical guide details the core principles, standard workflows, and analytical frameworks that define bulk RNA-seq, positioning it as an indispensable tool for researchers and drug development professionals investigating biological systems, disease mechanisms, and therapeutic responses.

Bulk RNA-seq works by extracting and sequencing the RNA from a sample comprising thousands to millions of cells. The resulting data represent a population average of transcriptional activity, which makes the method well suited to comparing gene expression between conditions, such as diseased versus healthy tissue or treated versus untreated samples [1] [2]. The fundamental unit of measurement is the "read," a short sequence of cDNA derived from an RNA molecule. By aligning millions of these reads to a reference genome and counting how many originate from each gene, researchers can quantify the relative abundance of thousands of genes simultaneously [3] [2].

A key distinction lies between bulk RNA-seq and its modern counterpart, single-cell RNA-seq (scRNA-seq). While scRNA-seq reveals heterogeneity within a tissue by profiling individual cells, bulk RNA-seq provides a consolidated, quantitative overview of the transcriptome. This makes it ideally suited for studies where the primary goal is to identify overall expression differences between conditions, rather than to deconstruct cellular composition [4]. Its robustness, cost-effectiveness for replicated experiments, and well-established analytical pipelines ensure its continued centrality in biological and translational research [5].

Experimental Workflow and Pipeline Standards

The journey from a biological sample to interpretable gene expression data involves a series of standardized steps, encompassing wet-lab procedures and a defined computational pipeline.

From Sample to Sequence

The experimental protocol begins with the collection of tissue or cells. RNA is then isolated, typically enriching for polyadenylated mRNA or depleting ribosomal RNA (rRNA). The purified RNA is converted into a sequencing library, a process that involves fragmenting the RNA, reverse-transcribing it into complementary DNA (cDNA), attaching adapter sequences, and amplifying the library for sequencing on a high-throughput platform [2]. A notable advancement is the development of early barcoding protocols like Prime-seq, which incorporate sample-specific barcodes during the cDNA synthesis step. This allows for the pooling of samples early in the workflow, dramatically reducing library preparation costs and hands-on time while maintaining data quality comparable to standard methods like TruSeq [5].

Uniform Processing Pipeline

Major consortia like the Encyclopedia of DNA Elements (ENCODE) have established uniform processing pipelines to ensure reproducibility and data quality. The ENCODE pipeline for bulk RNA-seq is designed to handle both paired-end and single-end reads from strand-specific or non-strand-specific libraries [1] [6]. The core steps are as follows:

- Alignment: Reads are mapped to a reference genome using a splice-aware aligner, most commonly STAR [1] [3].

- Quantification: The abundance of genes and transcripts is estimated from the aligned reads. The ENCODE pipeline has evolved in its quantification tool of choice. The earlier version used RSEM (RNA-Seq by Expectation Maximization) for gene and transcript quantification [1], while the more recent ENCODE4 pipeline uses kallisto for transcript quantification and RSEM to generate gene-level quantifications [6]. This highlights a community shift towards fast, alignment-free quantification methods.

- Signal Track Generation: Normalized signal files (bigWig format) are generated for visualization in genome browsers.

- Quality Metrics: The pipeline produces key quality metrics, including Spearman correlation between replicates to assess reproducibility [1] [6].

Table 1: Key Inputs and Outputs of the ENCODE Bulk RNA-seq Pipeline

| Category | Item | Format | Description |

|---|---|---|---|

| Inputs | Raw Sequencing Data | FASTQ | Gzipped files containing the sequence reads and quality scores. |

| | Genome Reference | FASTA/Indices | Reference genome sequence and pre-built aligner indices (e.g., for STAR). |

| | Gene Annotation | GTF/GFF | File specifying the coordinates of genes and transcripts. |

| | Spike-in Controls | FASTA | Sequences of exogenous RNA controls (e.g., ERCC spike-ins) for normalization. |

| Outputs | Alignments | BAM | Binary files storing the location of each read in the genome. |

| | Gene Quantifications | TSV | Tab-separated file with counts (e.g., expected_count), TPM, and FPKM for each gene. |

| | Transcript Quantifications | TSV | Similar to gene quantifications, but for transcript isoforms (use with caution). |

| | Normalized Signal | bigWig | Files for visualizing expression signal across the genome. |
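The TPM and FPKM columns in the gene quantification output are both length-normalized views of the same counts. The sketch below shows the arithmetic under simplifying assumptions: it uses plain gene lengths rather than the effective lengths that quantifiers such as RSEM estimate, and the gene names, counts, and lengths are made up for illustration.

```python
def fpkm(counts, lengths_bp):
    """Fragments per kilobase of transcript per million mapped fragments."""
    total = sum(counts.values())
    # count / (length in kb) / (total fragments in millions)
    return {g: counts[g] * 1e9 / (lengths_bp[g] * total) for g in counts}


def tpm(counts, lengths_bp):
    """Transcripts per million: length-normalize first, then rescale to 1e6."""
    rates = {g: counts[g] / lengths_bp[g] for g in counts}
    scale = sum(rates.values())
    return {g: rate * 1e6 / scale for g, rate in rates.items()}


# Hypothetical example values, not taken from any real dataset.
example_counts = {"geneA": 100, "geneB": 300, "geneC": 600}
example_lengths = {"geneA": 1000, "geneB": 3000, "geneC": 2000}

example_tpm = tpm(example_counts, example_lengths)
example_fpkm = fpkm(example_counts, example_lengths)
```

In this toy case geneA and geneB receive the same TPM because geneB has three times the reads but is also three times as long. TPM values always sum to one million within a sample, which is not true of FPKM.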

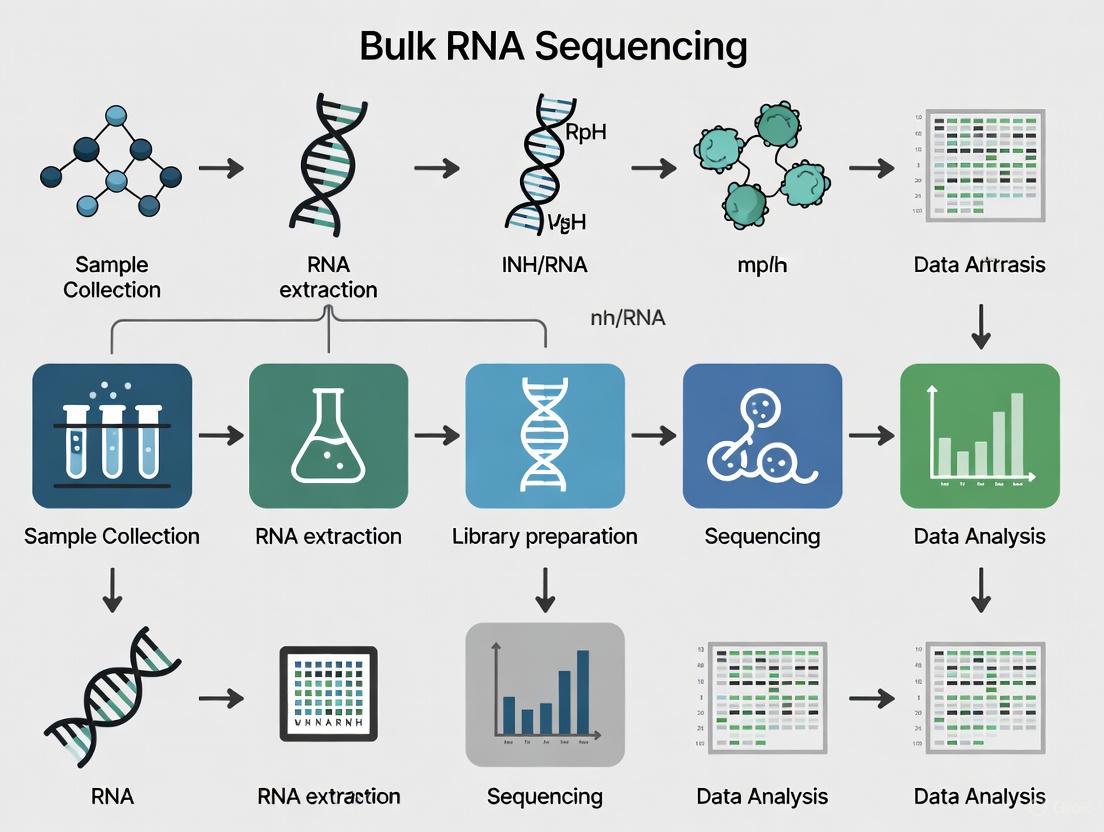

The following diagram illustrates the sequential steps of a standard bulk RNA-seq analysis workflow, from raw data to biological insight:

Essential Computational Analysis

From Alignment to Count Matrix

The primary goal of the initial computational steps is to generate a count matrix—a table where rows represent genes, columns represent samples, and the values are the number of reads assigned to each gene in each sample [7] [2]. Two primary approaches exist:

- Alignment-Based Quantification: This method first uses splice-aware aligners like STAR to map reads to the genome. The resulting BAM file is then used by tools like HTSeq-count or RSEM to assign reads to genes and generate counts, accounting for ambiguities in read mapping [8] [3].

- Pseudoalignment: Tools like Salmon and kallisto bypass full alignment. They use a reference transcriptome to rapidly determine the transcript of origin for each read probabilistically, which is much faster and is increasingly considered a best practice [7] [6].

A recommended hybrid approach, implemented in automated workflows like the nf-core RNA-seq pipeline, uses STAR for alignment and quality control (QC) metrics, then leverages Salmon in its alignment-based mode to perform accurate quantification from the BAM files, combining the strengths of both methods [7].
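To make the count matrix concrete, here is a minimal sketch that merges per-sample gene counts into the genes-by-samples layout described above. The function name and the input structure (a dictionary of per-sample dictionaries) are assumptions for illustration; real pipelines read these values from quantifier output files.

```python
def build_count_matrix(per_sample_counts):
    """Merge per-sample {gene: count} dicts into a genes x samples matrix.

    Returns (genes, samples, matrix), where matrix[i][j] is the count of
    genes[i] in samples[j]. Genes missing from a sample are filled with 0.
    """
    samples = sorted(per_sample_counts)
    genes = sorted({g for counts in per_sample_counts.values() for g in counts})
    matrix = [
        [per_sample_counts[s].get(g, 0) for s in samples]
        for g in genes
    ]
    return genes, samples, matrix


# Hypothetical counts for two samples.
genes, samples, matrix = build_count_matrix({
    "treated_1": {"geneA": 12, "geneB": 0, "geneC": 40},
    "control_1": {"geneA": 10, "geneC": 55},
})
```

Sorting both axes keeps the layout deterministic, which helps when the matrix is later paired with a sample metadata table.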

Differential Gene Expression Analysis

Once a count matrix is obtained, statistical testing identifies differentially expressed genes (DEGs). The DESeq2 package in R is a widely used and powerful tool for this purpose [8] [3]. Its analysis process incorporates several critical steps:

- Normalization: DESeq2 uses a median-of-ratios method to correct for differences in sequencing depth and RNA composition between samples [8].

- Modeling: It models the count data using a negative binomial distribution to account for over-dispersion common in sequencing data.

- Hypothesis Testing: By default, DESeq2 uses the Wald test to assess the significance of the difference in expression between groups. The resulting p-values are then adjusted for multiple testing using the Benjamini-Hochberg procedure to control the False Discovery Rate (FDR) [8].

- Effect Size Estimation: To prevent inflation of fold-changes from lowly expressed genes, shrinkage estimators like apeglm are applied to the log2 fold-change values, providing more robust and biologically meaningful estimates [8].
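Two of the steps above are simple enough to sketch directly: median-of-ratios size factors and the Benjamini-Hochberg adjustment. The code below is an illustrative re-implementation of the general techniques in plain Python, not DESeq2's own code; the function names are hypothetical, and DESeq2 layers further steps (dispersion estimation, independent filtering) on top.

```python
import math
from statistics import median


def size_factors(matrix):
    """Median-of-ratios size factors for a genes x samples count matrix.

    Only genes with a nonzero count in every sample are used, since the
    geometric mean is zero (and the log undefined) otherwise.
    """
    usable = [row for row in matrix if all(c > 0 for c in row)]
    log_geo_means = [sum(math.log(c) for c in row) / len(row) for row in usable]
    factors = []
    for j in range(len(matrix[0])):
        log_ratios = [
            math.log(row[j]) - lgm for row, lgm in zip(usable, log_geo_means)
        ]
        factors.append(math.exp(median(log_ratios)))
    return factors


def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the same order as the input."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Toy matrix: the second sample was sequenced exactly twice as deeply.
toy_factors = size_factors([[10, 20], [100, 200], [5, 10], [0, 3]])
toy_padj = benjamini_hochberg([0.01, 0.04, 0.03, 0.005])
```

Dividing each sample's counts by its size factor puts the samples on a common scale. In the toy matrix the second factor is twice the first, reflecting the doubled depth.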

Table 2: Standard Bulk RNA-seq Analysis Tools and Their Functions

| Tool Name | Primary Function | Key Features |

|---|---|---|

| STAR | Read Alignment | Splice-aware, fast, accurate. Generates BAM files for further QC. |

| Salmon/kallisto | Quantification | Fast, alignment-free "pseudoalignment". Can use transcriptome or alignments. |

| DESeq2 | Differential Expression | Uses negative binomial model. Provides FDR-adjusted p-values and shrunken LFC. |

| limma | Differential Expression | Linear modeling framework; can be adapted for RNA-seq count data with voom. |

| HTSeq-count | Quantification | Generates count matrices from aligned BAM files based on a GTF annotation. |

| nf-core/rnaseq | Workflow Management | Automated, reproducible pipeline that integrates multiple tools (STAR, Salmon, etc.). |

Experimental Design and Quality Control

Foundational Design Principles

Robust experimental design is paramount for generating meaningful bulk RNA-seq data. Key considerations include:

- Replication: Biological replicates (samples derived from different biological units) are essential for capturing natural variation and enabling statistical inference. The ENCODE standards mandate a minimum of two biological replicates, though more are always beneficial. Technical replicates (repeated measurements of the same biological sample) are less critical with modern, stable protocols [1] [2].

- Batch Effects: Uncontrolled technical variation (e.g., from different library preparation days or sequencing runs) can confound results. To mitigate this, samples from different experimental groups should be randomly distributed across processing batches [2].

- Sequencing Depth: The number of reads per sample directly impacts the power to detect expressed genes, especially those with low abundance. ENCODE standards recommend a minimum of 30 million aligned reads per replicate for standard bulk RNA-seq experiments [1] [6].

- Spike-in Controls: Using exogenous RNA controls, such as the ERCC spike-in mix, provides an external standard for monitoring technical performance and aiding in normalization [1] [6].
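The batch-effect advice above can be turned into a small randomization routine. The sketch below shuffles samples within each experimental group and then deals them across batches in turn, so no batch is dominated by one group. The function name, group labels, and sample IDs are hypothetical, and this is only one of several reasonable balancing schemes.

```python
import random


def assign_batches(samples_by_group, n_batches, seed=0):
    """Randomly assign samples to batches while balancing groups.

    Samples are shuffled within each group, then dealt round-robin so that
    each group's per-batch counts differ by at most one.
    """
    rng = random.Random(seed)  # fixed seed keeps the layout reproducible
    assignment = {}
    slot = 0
    for group in sorted(samples_by_group):
        members = list(samples_by_group[group])
        rng.shuffle(members)
        for sample in members:
            assignment[sample] = slot % n_batches
            slot += 1
    return assignment


design = {
    "control": ["c1", "c2", "c3", "c4"],
    "treated": ["t1", "t2", "t3", "t4"],
}
batches = assign_batches(design, n_batches=2, seed=42)
```

Recording the seed alongside the sample sheet makes the assignment auditable later, which is useful when batch is included as a covariate in the analysis.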

Quality Assessment

Rigorous quality control is performed at multiple stages:

- Pre-alignment QC: Tools like FastQC assess raw read quality, per-base sequence content, and adapter contamination. Trimming tools like Trimmomatic are used to remove low-quality sequences and adapters [8].

- Post-alignment QC: The alignment rate, the distribution of reads across genomic features (exons, introns, intergenic regions), and the coverage uniformity are assessed.

- Replicate Concordance: A high Spearman correlation (e.g., >0.9 for isogenic replicates) between the gene-level quantifications of replicates is a key indicator of a successful experiment [1].

- Principal Component Analysis (PCA): This is a critical visualization to check for the separation of experimental groups and to identify potential outliers or batch effects before differential expression testing [8] [2].
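The replicate concordance check can be sketched without external libraries. The code below computes a Spearman correlation as the Pearson correlation of average ranks; the function names are illustrative, and in practice a statistics library would normally be used instead.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values share the mean of their rank positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def spearman(x, y):
    """Spearman correlation = Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical gene-level values for two replicates.
rep1 = [5.0, 120.0, 33.0, 0.5, 18.0]
rep2 = [7.0, 110.0, 40.0, 0.2, 15.0]
concordance = spearman(rep1, rep2)
```

Because it works on ranks, the measure is insensitive to the large dynamic range of expression values, which is one reason it suits replicate comparisons.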

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Bulk RNA-seq Experiments

| Item | Function | Example/Note |

|---|---|---|

| RNA Isolation Kit | Purifies intact total RNA from cells or tissue. | PicoPure RNA isolation kit; critical for obtaining high RNA Integrity Number (RIN). |

| Poly(A) Selection or rRNA Depletion Kit | Enriches for messenger RNA (mRNA) from total RNA. | NEBNext Poly(A) mRNA Magnetic Isolation Module; reduces ribosomal RNA reads. |

| Library Prep Kit | Converts mRNA into a sequencer-compatible cDNA library. | NEBNext Ultra DNA Library Prep Kit; used in standard protocols. |

| Early Barcoding Primers | Adds sample-specific barcodes during cDNA synthesis. | Used in Prime-seq protocol; drastically reduces library preparation costs [5]. |

| ERCC Spike-in Control Mix | Exogenous RNA added to samples before library prep. | Ambion ERCC Mix 1; used for normalization and technical quality assessment [1]. |

| Unique Molecular Identifiers (UMIs) | Short random nucleotide sequences added to each molecule. | Allows precise counting of original mRNA molecules by correcting for PCR duplication bias [5]. |

Bulk RNA-seq remains a foundational technology in modern molecular biology and translational research. Its power to quantitatively profile the average transcriptome of a tissue or cell population provides an efficient and robust means of identifying global gene expression changes driven by development, disease, or therapeutic intervention. The maturity of the field, characterized by well-defined experimental standards, rigorous QC metrics, and sophisticated statistical models for analysis, ensures the reliability and interpretability of the data generated. As protocols like Prime-seq continue to reduce costs and increase throughput, bulk RNA-seq will maintain its vital role in the scientist's toolkit, often serving as a complementary and cost-effective partner to single-cell technologies in the comprehensive dissection of biological systems.

Bulk RNA sequencing (bulk RNA-seq) remains a cornerstone method in transcriptomics, providing a quantitative snapshot of the average gene expression profile across a population of cells [9]. This technical guide details the core components of the bulk RNA-seq workflow, from initial sample collection to the final sequencing run. The process transforms biological material into digital gene expression data, enabling researchers to identify differentially expressed genes between conditions, such as healthy and diseased states, and to uncover broader expression trends [9] [10]. Framed within the broader context of transcriptomics research, this workflow balances depth, affordability, and scalability, making it a powerful tool for researchers and drug development professionals investigating homogeneous tissues or large sample cohorts [9] [5].

The Bulk RNA-Seq Workflow: A Step-by-Step Guide

The journey from a biological sample to a sequenced library involves a series of critical, interconnected steps. Each stage must be meticulously planned and executed to ensure the generation of high-quality, reliable data. The following diagram provides a high-level overview of the entire process.

Sample Collection and RNA Extraction

The workflow begins with the collection of biological material, such as tissues, cells, or blood [9]. A critical first step is cell lysis, which involves breaking open cells to release their intracellular contents, including RNA. This is achieved through mechanical methods (e.g., bead beating, homogenization), chemical lysis (using detergents), or enzymatic digestion, often in combination [9].

Following lysis, total RNA isolation is performed. Traditional methods use phenol-chloroform-based reagents like TRIzol or silica column-based purification kits to separate RNA from DNA and proteins [9]. The goal is to obtain high-quality, intact total RNA, which includes messenger RNA (mRNA), ribosomal RNA (rRNA), and various non-coding RNAs. Preserving RNA integrity by minimizing RNase activity is paramount throughout this process [9]. Innovative platforms, such as Singleron's AccuraCode, can streamline this process by using cell barcoding technology to directly label and capture RNAs from lysed cells, eliminating the need for traditional RNA extraction [9].

RNA Quality Control and mRNA Selection

Before proceeding, the quality and quantity of the isolated RNA must be rigorously assessed [9]. RNA Quality Control (QC) typically involves spectrophotometry (NanoDrop) to estimate concentration and purity, or fluorometric assays (Qubit) for more specific quantification. More importantly, capillary electrophoresis systems like the Agilent Bioanalyzer provide an RNA Integrity Number (RIN), where a value greater than 7 typically indicates high-quality RNA suitable for sequencing [9]. Poor RNA quality at this stage can lead to biased or unreliable results.

The next step is to enrich for transcripts of interest, most commonly messenger RNA (mRNA). Two primary strategies are employed, each with distinct advantages [9]:

- Poly(A) Selection: This method uses oligo(dT) probes, typically attached to beads, to bind specifically to the polyadenylated tails of mRNAs. It effectively enriches for protein-coding transcripts and is best suited for high-quality RNA samples [9].

- Ribosomal RNA (rRNA) Depletion: This method removes abundant ribosomal RNA species through hybridization-based capture. It is advantageous for analyzing degraded samples (e.g., from FFPE tissues) and for detecting non-polyadenylated transcripts like some long non-coding RNAs (lncRNAs) [9].

Library Preparation and Sequencing

RNA Fragmentation is performed to break the RNA into smaller, manageable fragments of around 200 base pairs, which facilitates efficient downstream sequencing [9]. This can be done enzymatically or chemically.

These RNA fragments are then reverse transcribed into complementary DNA (cDNA) using reverse transcriptase, often with random hexamer or oligo(dT) primers [9]. This step converts the unstable RNA molecules into stable DNA templates.

Finally, cDNA Library Construction involves several steps to make the fragments ready for sequencing [9]:

- End repair and A-tailing: The ends of cDNA fragments are blunted and adenylated.

- Adapter ligation: Short, double-stranded DNA adapters containing platform-specific sequences and sample-specific barcodes (indexes) are ligated to the cDNA fragments.

- PCR amplification: The adapter-ligated cDNA is amplified to increase the material for sequencing.

Protocols like Prime-seq have been developed to enhance cost-efficiency. Prime-seq uses early barcoding and Unique Molecular Identifiers (UMIs) during cDNA generation, allowing samples to be pooled for all subsequent steps, reducing reagent costs and hands-on time [5]. The final prepared libraries are quantified and quality-checked before being loaded onto high-throughput sequencers, such as Illumina's NovaSeq or NextSeq, for sequencing [9].
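The idea behind early barcoding with UMIs can be illustrated with a short counting routine: reads are grouped by sample barcode and gene, and each distinct UMI is counted once so that PCR duplicates collapse. This is a simplified sketch, not the Prime-seq processing pipeline; it assumes reads have already been assigned to genes and ignores sequencing errors in the UMI. All names and sequences are hypothetical.

```python
from collections import defaultdict


def umi_counts(records):
    """Count distinct UMIs per (sample barcode, gene).

    records: iterable of (sample_barcode, gene, umi) tuples, one per read.
    Reads sharing barcode, gene, and UMI are treated as PCR duplicates.
    """
    seen = defaultdict(set)
    for barcode, gene, umi in records:
        seen[(barcode, gene)].add(umi)
    return {key: len(umis) for key, umis in seen.items()}


# Hypothetical pooled reads from two early-barcoded samples.
pooled_reads = [
    ("S1", "GAPDH", "AAAC"),
    ("S1", "GAPDH", "AAAC"),  # duplicate of the read above
    ("S1", "GAPDH", "GGTT"),
    ("S2", "GAPDH", "AAAC"),  # same UMI, different sample: counted separately
]
molecule_counts = umi_counts(pooled_reads)
```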

Key Experimental Considerations and Best Practices

Experimental Design and Replication

A carefully considered experimental design is the most crucial aspect of a successful RNA-seq study [10]. Key considerations include:

- Defining the Hypothesis: The study should begin with a clear hypothesis and aim, which will guide all subsequent decisions, from the model system to the sequencing depth [10].

- Biological Replicates: These are independent biological samples within the same experimental group (e.g., different animals, or cell cultures). They are essential for accounting for natural biological variation and ensuring findings are generalizable [10]. At least 3 biological replicates per condition are typically recommended, though 4-8 is ideal for most experiments to ensure robust statistical power [10].

- Technical Replicates: These involve processing the same biological sample multiple times to assess technical variation introduced by the workflow. While biological replicates are more critical, technical replicates can help monitor technical noise [10].

- Batch Effects: Systematic non-biological variations can arise when samples are processed in different groups or at different times. The experimental layout should be planned to minimize and enable correction for these batch effects during analysis [10].

- Pilot Studies: Running a small-scale pilot study is an excellent way to validate workflows, assess variability, and determine the optimal sample size for the main experiment [10].

Sequencing Depth and Quality Control Standards

Adhering to established sequencing standards is vital for generating publication-quality data. The table below summarizes key quantitative metrics from authoritative sources like the ENCODE consortium.

Table 1: Key Quantitative Standards for Bulk RNA-Seq Experiments

| Parameter | Recommended Standard | Notes and Context |

|---|---|---|

| Aligned Reads per Replicate | 20-30 million | Older projects aimed for 20M; ENCODE standards recommend 30M aligned reads [6]. |

| Replicate Concordance | Spearman correlation >0.9 (isogenic) / >0.8 (anisogenic) | Measure of reproducibility between biological replicates [6]. |

| Read Length | Minimum 50 base pairs | Defined by the ENCODE Uniform Processing Pipeline [6]. |

| Library Insert Size | Average >200 base pairs | Defines a bulk RNA-seq experiment per ENCODE standards [6]. |

| RNA Integrity Number (RIN) | >7 | Indicates high-quality, intact RNA suitable for sequencing [9]. |
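The thresholds in the table lend themselves to an automated check. The sketch below encodes them as a small configuration and reports which metrics a sample fails. The dictionary keys and the function name are hypothetical, and the aligned-read threshold uses the 30 million figure rather than the older 20 million target.

```python
# Thresholds taken from the table above; key names are illustrative only.
STANDARDS = {
    "min_aligned_reads": 30_000_000,
    "min_read_length": 50,
    "min_insert_size": 200,
    "min_rin": 7.0,
    "min_spearman": {"isogenic": 0.9, "anisogenic": 0.8},
}


def qc_failures(sample, replicate_type="isogenic"):
    """Return the names of the metrics that miss the table's thresholds."""
    failures = []
    if sample["aligned_reads"] < STANDARDS["min_aligned_reads"]:
        failures.append("aligned_reads")
    if sample["read_length"] < STANDARDS["min_read_length"]:
        failures.append("read_length")
    # The remaining standards are stated as strict "greater than" limits.
    if sample["mean_insert_size"] <= STANDARDS["min_insert_size"]:
        failures.append("mean_insert_size")
    if sample["rin"] <= STANDARDS["min_rin"]:
        failures.append("rin")
    if sample["replicate_spearman"] <= STANDARDS["min_spearman"][replicate_type]:
        failures.append("replicate_spearman")
    return failures


# Hypothetical sample metrics.
good_sample = {
    "aligned_reads": 34_500_000, "read_length": 100,
    "mean_insert_size": 260, "rin": 8.6, "replicate_spearman": 0.95,
}
poor_sample = dict(good_sample, aligned_reads=12_000_000, rin=5.9)
```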

The Scientist's Toolkit: Essential Research Reagents

The wet-lab workflow relies on a suite of specific reagents and materials to ensure successful library preparation.

Table 2: Key Research Reagent Solutions in Bulk RNA-Seq

| Reagent / Material | Function | Application Notes |

|---|---|---|

| TRIzol / Column Kits | For total RNA isolation from lysed cells; separates RNA from DNA and proteins. | Phenol-chloroform-based (TRIzol) or silica-membrane based (kits). Critical for obtaining high-quality input material [9]. |

| DNase I | Enzyme that degrades genomic DNA to prevent contamination in RNA samples. | Essential for accurate quantification, as gDNA can be a source of background noise [5]. |

| Oligo(dT) Beads | For poly(A) selection; binds to polyadenylated tails of mRNAs to enrich for coding transcripts. | Best for high-quality RNA. Removes majority of rRNA and other non-coding RNAs [9]. |

| rRNA Depletion Probes | For ribosomal RNA depletion; uses probes to hybridize and remove abundant rRNA. | Used for degraded samples (FFPE) or to study non-polyadenylated RNAs [9]. |

| Reverse Transcriptase | Enzyme that synthesizes complementary DNA (cDNA) from an RNA template. | High-fidelity enzymes are crucial for preserving transcript diversity and minimizing bias [9]. |

| Platform-Specific Adapters | Short, double-stranded DNA containing sequences for binding to the flow cell and sample barcodes (indexes). | Allows for multiplexing—pooling multiple samples in a single sequencing lane [9]. |

| ERCC Spike-In Controls | Synthetic RNA controls added at known concentrations to the sample. | Serves as an internal standard for assessing technical performance, sensitivity, and quantification accuracy [6] [10]. |

The bulk RNA-seq workflow is a multi-stage process that transforms biological samples into quantitative gene expression data. Its core components—sample preparation, RNA extraction, quality control, library preparation, and sequencing—must be meticulously executed. Best practices, including robust experimental design with adequate biological replication and adherence to established quality standards, are non-negotiable for generating biologically meaningful and reliable results. As the field advances, the development of more efficient protocols like Prime-seq promises to further increase the accessibility and scalability of this powerful technology [5]. When properly planned and executed, bulk RNA-seq remains an indispensable tool for researchers and drug development professionals exploring the transcriptome.

A critical challenge in the bulk RNA-seq workflow is accurately quantifying gene expression from raw sequencing data. This process is inherently statistical, as it must account for two distinct but interconnected levels of uncertainty. The first level involves determining the transcript of origin for each sequenced read, a task complicated by paralogous genes and alternatively spliced transcripts. The second level concerns converting these often-ambiguous read assignments into a reliable count matrix for downstream differential expression analysis. Managing both uncertainties is fundamental to ensuring that biological interpretations rest on accurate and robust data, which matters particularly to researchers and drug development professionals who rely on RNA-seq for biomarker discovery and therapeutic target identification [7] [11].

This technical guide explores the methodologies and computational tools designed to address these challenges, providing a detailed overview of best practices within a modern bulk RNA-seq research framework.

Level 1: Read Assignment Uncertainty

The initial step in RNA-seq analysis involves assigning millions of short sequencing reads to their correct transcripts of origin. This is not a trivial task, as many reads may map equally well to multiple genes or isoforms due to sequence similarity, such as in gene families or regions shared by alternative transcripts [7].

Core Challenges and Solutions

Early bioinformatics approaches often simply discarded multi-mapping reads, leading to significant loss of information and systematic underestimation of gene expression, particularly for genes with low sequence uniqueness [12]. Modern methods have developed sophisticated strategies to handle this ambiguity:

- Probabilistic Assignment: Instead of discarding multi-mapping reads, tools like Salmon and kallisto use probabilistic models to distribute reads across all potential transcripts of origin in proportion to the likelihood of assignment [7]. This pseudo-alignment approach is computationally efficient and avoids the biases introduced by discarding reads.

- Alignment-Based Resolution: An alternative method involves first using splice-aware aligners like STAR to map reads to a genome. The resulting alignments are then processed by tools like RSEM (RNA-Seq by Expectation Maximization), which employs an expectation-maximization algorithm to resolve multi-mapping reads and estimate transcript abundances [7].

The following table summarizes the primary approaches to managing read assignment uncertainty:

Table 1: Computational Strategies for Read Assignment Uncertainty

| Method Type | Example Tools | Core Principle | Key Advantage |

|---|---|---|---|

| Pseudo-alignment | Salmon, kallisto [7] | Probabilistic assignment of reads to transcripts without full base-by-base alignment. | Speed and efficiency; direct quantification from FASTQ. |

| Alignment-Based | STAR (alignment) + RSEM (quantification) [7] | Initial genome/transcriptome alignment followed by statistical resolution of multi-mappers. | Generates alignment files (BAM) useful for quality control and visualization. |

| Integrated Workflow | nf-core/rnaseq (STAR + Salmon) [7] | Combines alignment for QC with Salmon for accurate quantification. | Provides comprehensive QC metrics alongside high-quality expression estimates. |
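The expectation-maximization idea behind resolving multi-mapping reads can be shown on a toy example. In the sketch below each read lists the transcripts it is compatible with, and the algorithm alternates between splitting reads according to current abundance estimates and re-estimating abundances from those splits. It is a deliberately stripped-down illustration: it ignores transcript length, fragment-level likelihoods, and bias models, all of which real tools such as RSEM or Salmon account for.

```python
def em_abundance(read_compat, n_iter=200):
    """Estimate expected read counts per transcript with a basic EM loop.

    read_compat: list of tuples, each naming the transcripts one read
    could have come from. Returns {transcript: expected_count}.
    """
    transcripts = sorted({t for compat in read_compat for t in compat})
    theta = {t: 1.0 / len(transcripts) for t in transcripts}
    expected = dict.fromkeys(transcripts, 0.0)
    for _ in range(n_iter):
        # E-step: split each read in proportion to current abundances.
        expected = dict.fromkeys(transcripts, 0.0)
        for compat in read_compat:
            denom = sum(theta[t] for t in compat)
            for t in compat:
                expected[t] += theta[t] / denom
        # M-step: abundances become the normalized expected counts.
        total = sum(expected.values())
        theta = {t: expected[t] / total for t in transcripts}
    return expected


# Toy data: 8 reads unique to txA, 2 unique to txB, 10 compatible with both.
toy_reads = [("txA",)] * 8 + [("txB",)] * 2 + [("txA", "txB")] * 10
toy_expected = em_abundance(toy_reads)
```

Here the unique reads suggest a 4:1 ratio, so the ten ambiguous reads end up split 8:2, giving expected counts of 16 and 4. Discarding the ambiguous reads instead would have thrown away half the data.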

Level 2: Count Estimation Uncertainty

Once reads are assigned, the next level of uncertainty involves converting these assignments into a final count matrix. This step must account for the confidence (or lack thereof) in the assignments themselves.

Quantifying Inferential Uncertainty

The uncertainty in read assignment propagates to the final expression estimates. Advanced quantification pipelines can now quantify this inferential uncertainty:

- Inferential Replicates: Tools like alevin (for single-cell data) and Salmon can generate "inferential replicates" through bootstrapping or Gibbs sampling. These replicates reflect how quantification estimates might vary due to the randomness of read sampling and ambiguity in assignment [12].

- Compression Techniques: Storing and processing a full set of inferential replicates can be computationally prohibitive. Research shows that storing only the mean and variance of these replicates is sufficient to capture gene-level uncertainty, drastically reducing storage and memory requirements without sacrificing information [12]. These compressed parameters can later be used to generate "pseudo-inferential" replicates for downstream statistical analysis.
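The two ideas above, inferential replicates and their compression to a mean and variance, can be sketched as follows. Reads are resampled with replacement, each resample is re-quantified, and the replicates are then summarized per transcript. The quantifier here simply splits ambiguous reads evenly, which is an assumption made to keep the example short and is not how Salmon or alevin quantify; function names and data are hypothetical.

```python
import random
from statistics import mean, variance


def naive_quant(reads, transcripts):
    """Stand-in quantifier: split each read evenly among compatible transcripts."""
    counts = dict.fromkeys(transcripts, 0.0)
    for compat in reads:
        for t in compat:
            counts[t] += 1.0 / len(compat)
    return counts


def bootstrap_replicates(reads, n_reps=30, seed=0):
    """Re-quantify n_reps bootstrap resamples of the reads."""
    rng = random.Random(seed)
    transcripts = sorted({t for compat in reads for t in compat})
    replicates = []
    for _ in range(n_reps):
        resampled = rng.choices(reads, k=len(reads))
        replicates.append(naive_quant(resampled, transcripts))
    return replicates


def compress(replicates):
    """Keep only (mean, variance) per transcript instead of every replicate."""
    return {
        t: (mean([r[t] for r in replicates]), variance([r[t] for r in replicates]))
        for t in replicates[0]
    }


# Toy reads: some unique to one transcript, some ambiguous between two.
boot_reads = [("txA",)] * 8 + [("txB",)] * 2 + [("txA", "txB")] * 10
boot_reps = bootstrap_replicates(boot_reads, n_reps=20, seed=1)
boot_summary = compress(boot_reps)
```

The compressed summary holds two numbers per transcript regardless of how many replicates were drawn, which is the source of the storage savings described above.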

Impact on Downstream Analysis

Incorporating quantification uncertainty into differential expression testing has been shown to improve the reliability of results. For example, when statistical frameworks like Swish or tradeSeq are modified to account for this uncertainty, they demonstrate a significant reduction in false positive rates, particularly for genes with high levels of multi-mapping reads [12].

Table 2: Addressing Count Estimation Uncertainty in Downstream Analysis

| Concept | Description | Benefit in Downstream Analysis |

|---|---|---|

| Inferential Replicates | Multiple estimations of expression from the same sample, representing quantification uncertainty [12]. | Provides a measure of confidence for each gene's expression level. |

| Uncertainty-Aware Differential Expression | Statistical methods (e.g., extended Swish, tradeSeq) that incorporate inferential uncertainty [12]. | Reduces false positives by more than a third for genes with high quantification uncertainty [12]. |

| Compression | Storing only the mean and variance of inferential replicates [12]. | Reduces disk storage to as low as 9% of original requirements, making uncertainty propagation feasible for large studies [12]. |

Integrated Experimental and Analytical Workflow

A robust bulk RNA-seq study design integrates solutions for both levels of uncertainty from the start. The following diagram illustrates a recommended workflow that combines experimental best practices with computational methods to manage uncertainty effectively.

Detailed Methodology for an Uncertainty-Aware Workflow

The nf-core/rnaseq workflow provides a robust, reproducible pipeline that implements best practices for addressing both levels of uncertainty [7].

Input Data and Preparation:

- Input: Paired-end FASTQ files from all biological replicates. Paired-end reads are strongly recommended over single-end for more robust expression estimates [7].

- Genome Reference: A genome FASTA file and a corresponding GTF/GFF annotation file for the organism.
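The paired-end inputs are usually described to the pipeline in a sample sheet. The sketch below generates one in CSV form. The column names (`sample`, `fastq_1`, `fastq_2`, `strandedness`) and the `auto` value reflect my understanding of the nf-core/rnaseq sample sheet and should be checked against the documentation for the pipeline version in use; the file names are hypothetical.

```python
import csv
import io


def samplesheet_csv(samples, strandedness="auto"):
    """Render a sample sheet as CSV text.

    samples: {sample_name: (read1_path, read2_path)} for paired-end data.
    """
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["sample", "fastq_1", "fastq_2", "strandedness"])
    for name, (read1, read2) in sorted(samples.items()):
        writer.writerow([name, read1, read2, strandedness])
    return buffer.getvalue()


# Hypothetical FASTQ file names for two biological replicates.
sheet_text = samplesheet_csv({
    "ctrl_rep1": ("ctrl_rep1_R1.fastq.gz", "ctrl_rep1_R2.fastq.gz"),
    "ctrl_rep2": ("ctrl_rep2_R1.fastq.gz", "ctrl_rep2_R2.fastq.gz"),
})
```

Generating the sheet from a single dictionary keeps sample names and file paths in one place, which reduces the risk of mismatched read pairs.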

Core Analysis Steps:

- Spliced Alignment with STAR: The FASTQ files are aligned to the reference genome using the splice-aware aligner STAR. This step produces BAM files, which are crucial for generating detailed quality control (QC) metrics for each sample [7].

- Alignment-Based Quantification with Salmon: The genomic alignments from STAR are projected onto the transcriptome and used as input for Salmon running in alignment-based mode. Salmon's statistical model addresses both levels of uncertainty: it probabilistically resolves read assignment ambiguity (Level 1) and turns those assignments into transcript-level abundance estimates (Level 2). For libraries that carry UMIs, deduplication before quantification can further improve count estimation [7].

- Generation of Count Matrices: The nf-core workflow automatically aggregates the sample-level quantification from Salmon into a gene-level count matrix, which is the primary input for differential expression tools like DESeq2 or limma-voom [13] [7].
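The aggregation in the last step amounts to summing transcript-level estimates over the transcripts of each gene. The sketch below shows only that summation, under the assumption that a transcript-to-gene mapping is available; dedicated import tools additionally handle length offsets and metadata. The identifiers are hypothetical.

```python
from collections import defaultdict


def summarize_to_gene(transcript_counts, tx2gene):
    """Sum transcript-level estimated counts into gene-level counts."""
    gene_counts = defaultdict(float)
    for transcript, count in transcript_counts.items():
        gene_counts[tx2gene[transcript]] += count
    return dict(gene_counts)


# Hypothetical transcript estimates and transcript-to-gene mapping.
tx_estimates = {"tx1.1": 120.5, "tx1.2": 30.0, "tx2.1": 8.25}
tx_to_gene = {"tx1.1": "gene1", "tx1.2": "gene1", "tx2.1": "gene2"}
gene_level = summarize_to_gene(tx_estimates, tx_to_gene)
```

Gene-level sums are less affected by ambiguity between isoforms of the same gene, since reads that cannot be placed on a specific isoform still count toward the same gene.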

Uncertainty Propagation (Optional):

- For advanced analyses, run Salmon with flags that generate inferential replicates. These can be incorporated into specialized differential expression frameworks like the fishpond package in R to account for quantification uncertainty in statistical testing [12].
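As a rough illustration of the optional step, the sketch below assembles a Salmon command line that requests bootstrap-based inferential replicates. It only builds the argument list and does not run anything. The `--numBootstraps` option (with `--numGibbsSamples` as the Gibbs-sampling alternative) reflects my understanding of the Salmon CLI and should be confirmed with `salmon quant --help`; the index, read, and output paths are hypothetical, and the example uses Salmon's FASTQ-based mode rather than the alignment-based mode described above.

```python
def salmon_quant_command(index_dir, read1, read2, out_dir, n_bootstraps=30):
    """Build (but do not execute) a salmon quant command with bootstraps."""
    return [
        "salmon", "quant",
        "-i", index_dir,          # pre-built transcriptome index
        "-l", "A",                # let Salmon infer the library type
        "-1", read1,
        "-2", read2,
        "-o", out_dir,
        "--numBootstraps", str(n_bootstraps),
    ]


# Hypothetical paths for one sample.
command = salmon_quant_command(
    "salmon_index", "ctrl_rep1_R1.fastq.gz", "ctrl_rep1_R2.fastq.gz",
    "quant/ctrl_rep1",
)
```

The resulting list can be passed to `subprocess.run(command, check=True)` once the paths point at real files.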

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions and Computational Tools

| Item / Tool Name | Function in the Workflow |

|---|---|

| STAR | Splice-aware aligner for mapping RNA-seq reads to a reference genome; generates alignment files for QC [7]. |

| Salmon | Rapid quantification tool that uses pseudoalignment or alignment to estimate transcript abundance while modeling read assignment uncertainty [7]. |

| nf-core/rnaseq | A comprehensive, community-maintained Nextflow pipeline that automates the entire workflow from FASTQ to count matrix, integrating STAR and Salmon [7]. |

| alevin | A droplet-based scRNA-seq quantification pipeline that extends Salmon's model to single-cell data and can assess quantification uncertainty via bootstrapping [12]. |

| fishpond (R package) | A Bioconductor package containing the Swish method for differential expression analysis that can incorporate inferential replicates to account for quantification uncertainty [12]. |

| DESeq2 / limma | Established R/Bioconductor packages for differential expression analysis of bulk RNA-seq count matrices [13] [7]. |

| Unique Molecular Identifiers (UMIs) | Short random sequences used during library prep to tag individual mRNA molecules, allowing for accurate counting and removal of PCR duplicates [5]. |

Successfully addressing the dual challenges of read assignment and count estimation uncertainty is not merely a computational exercise but a foundational requirement for generating biologically meaningful results from bulk RNA-seq data. By adopting integrated workflows that leverage probabilistic quantification tools like Salmon, utilizing alignment-based QC, and—for critical applications—propagating inferential uncertainty into statistical testing, researchers can significantly enhance the robustness and reliability of their findings. As the field continues to evolve, with new methods like Prime-seq offering more cost-efficient profiling, the principles of rigorously accounting for technical uncertainty will remain central to advancing transcriptomics research and its applications in drug development and personalized medicine [12] [7] [5].

In the context of broader thesis research on the bulk RNA sequencing workflow, understanding its fundamental trade-offs with single-cell RNA sequencing (scRNA-seq) is paramount for researchers and drug development professionals. The core distinction lies in the resolution trade-off: bulk RNA-seq provides a population-averaged gene expression profile from a tissue or cell population, while scRNA-seq delivers gene expression data at the individual cell level [14] [15]. This difference in resolution creates a cascade of technical and analytical consequences that dictate their appropriate application in research and development pipelines. Bulk RNA sequencing (bulk RNA-seq) is a next-generation sequencing (NGS)-based method that measures the whole transcriptome across a population of thousands to millions of cells, yielding an average expression level for each gene across all cells in the sample [14]. In contrast, single-cell RNA sequencing profiles the whole transcriptome of each individual cell within a sample, enabling the resolution of cellular heterogeneity [14] [15]. This foundational difference drives all subsequent decisions regarding experimental design, cost, computational analysis, and biological interpretation.

Technical Foundations and Workflow Comparisons

Core Methodological Differences

The experimental workflows for bulk and single-cell RNA-seq diverge significantly from the initial sample preparation stage, reflecting their distinct objectives and resolution targets.

Bulk RNA-seq Workflow: The process begins with RNA extraction directly from the entire biological sample (e.g., tissue, cell culture). The extracted RNA (either total RNA or enriched mRNA) is then converted to cDNA and processed into a sequencing library that represents the pooled genetic material of all cells [14] [16]. This workflow outputs a single expression profile per sample, where each data point represents the average expression level of a gene across the entire cell population.

Single-Cell RNA-seq Workflow: The initial, critical step involves creating a viable single-cell suspension from the sample through enzymatic or mechanical dissociation [14] [15]. Following quality control to ensure cell viability and absence of clumps, individual cells are partitioned into micro-reaction vessels. In the 10X Genomics platform, this is achieved through a microfluidics system that creates Gel Beads-in-emulsion (GEMs), where each GEM contains a single cell, a gel bead with cell-barcoded oligos, and reverse transcription reagents [14] [15]. Cell lysis occurs within each GEM, allowing captured mRNA to be barcoded with a cell-specific barcode and unique molecular identifier (UMI). This barcoding enables the pooling of all material for sequencing while maintaining the ability to trace transcripts back to their cell of origin during computational analysis [14].
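The barcoding logic described above can be sketched in a few lines: reads are grouped by cell barcode and gene, and distinct UMIs are counted so that PCR duplicates collapse to a single molecule. The barcode and UMI lengths (16 and 12 bases) are assumptions that depend on the chemistry in use, and real pipelines additionally correct sequencing errors in barcodes and UMIs, which this exact-match sketch does not attempt.

```python
from collections import defaultdict

CELL_BARCODE_LENGTH = 16  # assumption: depends on the kit chemistry
UMI_LENGTH = 12           # assumption: depends on the kit chemistry


def split_barcode_read(sequence):
    """Split the barcode read into (cell_barcode, umi)."""
    cell_barcode = sequence[:CELL_BARCODE_LENGTH]
    umi = sequence[CELL_BARCODE_LENGTH:CELL_BARCODE_LENGTH + UMI_LENGTH]
    return cell_barcode, umi


def count_molecules(tagged_reads):
    """Count distinct UMIs per (cell_barcode, gene_id).

    tagged_reads: iterable of (barcode_read_sequence, gene_id) pairs, where the
    gene assignment is assumed to come from aligning the paired cDNA read.
    """
    umis_seen = defaultdict(set)
    for sequence, gene_id in tagged_reads:
        cell_barcode, umi = split_barcode_read(sequence)
        umis_seen[(cell_barcode, gene_id)].add(umi)
    return {key: len(umis) for key, umis in umis_seen.items()}


# Hypothetical barcodes and UMIs.
cell_a, cell_b = "A" * 16, "C" * 16
umi_1, umi_2 = "G" * 12, "T" * 12

reads = [
    (cell_a + umi_1, "geneX"),
    (cell_a + umi_1, "geneX"),  # PCR duplicate: same cell, gene, and UMI
    (cell_a + umi_2, "geneX"),
    (cell_b + umi_1, "geneX"),
]
counts = count_molecules(reads)
```

Four reads collapse to three molecules here, two in the first cell and one in the second.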

Visualizing Core Workflow Divergence

The following diagram illustrates the fundamental procedural differences between the two sequencing approaches, highlighting the critical branching points that define their respective resolutions.

Quantitative Comparison: Performance Metrics and Applications

The choice between bulk and single-cell RNA-seq involves navigating a complex landscape of technical capabilities, performance trade-offs, and practical constraints. The following table synthesizes key comparative metrics essential for informed experimental design.

| Feature | Bulk RNA-Seq | Single-Cell RNA-Seq |

|---|---|---|

| Resolution | Population average [14] [17] | Individual cell level [14] [17] |

| Cost per Sample | Lower (~$300/sample) [17] | Higher (~$500-$2000/sample) [17] |

| Cell Heterogeneity Detection | Limited; masks differences [14] [17] | High; reveals subpopulations [14] [17] |

| Rare Cell Type Detection | Not possible; signals diluted [17] | Possible; can identify rare populations [17] |

| Gene Detection Sensitivity | Higher per sample (detects more genes) [17] | Lower per cell (sparse data, dropouts) [17] [18] |

| Data Complexity | Lower; simpler analysis [14] [17] | Higher; specialized tools required [14] [17] |

| Sample Input Requirement | Higher amount of tissue/RNA [17] | Lower; works with few cells [17] |

| Isoform/Splicing Analysis | More comprehensive [17] [15] | Limited with standard assays [17] |

| Primary Applications | Differential expression, biomarker discovery, pathway analysis [14] [15] | Cell atlas creation, tumor heterogeneity, rare cell discovery, developmental tracing [14] [17] [15] |

Analytical Pipelines and Computational Considerations

Bulk RNA-Seq Data Processing

The computational analysis of bulk RNA-seq data follows an established pathway with robust, standardized tools. The primary goal is to transform raw sequencing reads (FASTQ files) into a gene count matrix for differential expression testing [7] [16].

- Quality Control and Trimming: Initial QC assesses raw read quality using tools like FastQC, followed by trimming of adapters and low-quality bases with tools such as Trimmomatic [16].

- Read Alignment: Quality-controlled reads are aligned to a reference genome using spliced aligners like STAR or TopHat2 that account for exon-intron junctions [7] [16]. Alternatively, pseudoalignment tools like Salmon or kallisto can be used for faster quantification without generating base-level alignments [7].

- Expression Quantification: Aligned reads are assigned to genomic features (genes, transcripts) using count-based tools like featureCounts or HTSeq, or estimation-based methods like RSEM and Salmon [7] [16]. The nf-core/rnaseq workflow provides a comprehensive, standardized pipeline that automates these steps from FASTQ to count matrix [7].

- Differential Expression Analysis: The final count matrix is analyzed in R/Bioconductor using packages like limma, DESeq2, or edgeR to identify genes differentially expressed between conditions [7] [16].
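As an illustration of the first step in the list above, the following sketch parses FASTQ records, decodes base qualities, and trims low-quality bases from the 3' end. It assumes the common Phred+33 encoding and uses a simple end-trimming rule with a hypothetical threshold of 20; dedicated trimmers apply more elaborate strategies (such as sliding windows and adapter matching), so this is a conceptual aid rather than a replacement for them.

```python
import io


def read_fastq(handle):
    """Yield (read_id, sequence, quality) from a four-line-per-record FASTQ."""
    while True:
        header = handle.readline().strip()
        if not header:
            return
        sequence = handle.readline().strip()
        handle.readline()  # '+' separator line, ignored
        quality = handle.readline().strip()
        yield header[1:], sequence, quality


def phred_scores(quality, offset=33):
    """Decode a quality string into integer Phred scores."""
    return [ord(character) - offset for character in quality]


def trim_3prime(sequence, quality, min_quality=20):
    """Remove trailing bases whose Phred score is below min_quality."""
    scores = phred_scores(quality)
    end = len(scores)
    while end > 0 and scores[end - 1] < min_quality:
        end -= 1
    return sequence[:end], quality[:end]


# Hypothetical record: six high-quality bases ('I' = Q40), two poor ones ('#' = Q2).
EXAMPLE_FASTQ = "@read1\nACGTACGT\n+\nIIIIII##\n"

records = list(read_fastq(io.StringIO(EXAMPLE_FASTQ)))
read_id, sequence, quality = records[0]
mean_quality = sum(phred_scores(quality)) / len(quality)
trimmed_sequence, trimmed_quality = trim_3prime(sequence, quality)
```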

Single-Cell RNA-Seq Data Processing

The analysis of scRNA-seq data is more complex due to its high dimensionality, technical noise, and sparsity. A typical pipeline involves:

- Raw Data Processing and Demultiplexing: Tools like Cell Ranger (10X Genomics) process raw BCL files, perform demultiplexing using the cell barcodes and UMIs, and align reads to generate a feature-barcode matrix [14] [15].

- Quality Control and Filtering: Cells are filtered based on metrics like number of detected genes, total UMI counts, and mitochondrial RNA percentage to remove low-quality cells and doublets [16].

- Normalization and Scaling: Technical variations in sequencing depth are corrected, and counts are scaled to facilitate comparisons between cells.

- Feature Selection and Dimensionality Reduction: Highly variable genes are selected, and dimensionality reduction techniques like PCA are applied.

- Clustering and Visualization: Cells are grouped into clusters based on gene expression similarity using graph-based or k-means algorithms, and visualized in 2D using t-SNE or UMAP [16].

- Cell Type Annotation and Marker Identification: Clusters are annotated to cell types by comparing expression of known marker genes, and differential expression analysis identifies marker genes for each cluster.
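The quality control and filtering step in the pipeline above can be sketched as a simple per-cell predicate. All thresholds below are hypothetical defaults chosen for illustration; appropriate values depend on tissue, protocol, and sequencing depth, and the upper bound on detected genes is only a crude proxy for doublets.

```python
def passes_qc(cell, min_genes=200, max_genes=6000, min_umis=500, max_mito_fraction=0.2):
    """Return True if a cell passes basic quality thresholds (all hypothetical)."""
    total = cell["total_umis"]
    mito_fraction = cell["mito_umis"] / total if total else 1.0
    return (
        min_genes <= cell["genes_detected"] <= max_genes
        and total >= min_umis
        and mito_fraction <= max_mito_fraction
    )


# Hypothetical per-cell summary metrics.
cells = [
    {"barcode": "cell_1", "genes_detected": 2500, "total_umis": 8000, "mito_umis": 400},
    {"barcode": "cell_2", "genes_detected": 150, "total_umis": 300, "mito_umis": 30},      # low complexity
    {"barcode": "cell_3", "genes_detected": 1800, "total_umis": 5000, "mito_umis": 2000},  # 40% mitochondrial
    {"barcode": "cell_4", "genes_detected": 9000, "total_umis": 60000, "mito_umis": 1500}, # possible doublet
]

kept = [cell["barcode"] for cell in cells if passes_qc(cell)]
```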

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful execution of transcriptomic studies requires careful selection of reagents, platforms, and analytical tools. The following table catalogues key solutions referenced in the literature.

| Category | Item/Reagent | Function in Workflow |

|---|---|---|

| Library Prep | Poly-dT Oligos | mRNA enrichment by binding poly-A tail [19] |

| Library Prep | rRNA Depletion Kits | Remove ribosomal RNA to enrich for mRNA and other RNA species [19] [15] |

| Library Prep | Template Switching Oligos | Used in Smart-seq2 protocol for full-length cDNA amplification [16] |

| Single-Cell Partitioning | 10X Genomics Chromium Controller/X | Microfluidics instrument for partitioning cells into GEMs [14] [15] |

| Single-Cell Partitioning | Gel Beads | Contain barcoded oligos (cell barcode, UMI, dT primer) for mRNA capture in GEMs [14] [15] |

| Sequencing & Analysis | Illumina NGS Platforms | High-throughput sequencing of prepared libraries [14] |

| Sequencing & Analysis | nf-core/rnaseq Pipeline | Standardized Nextflow workflow for bulk RNA-seq analysis (QC, alignment, quantification) [7] |

| Sequencing & Analysis | Cell Ranger Suite | 10X Genomics software for demultiplexing, alignment, and counting of scRNA-seq data [14] |

| Bioinformatics | STAR | Spliced aligner for accurate mapping of RNA-seq reads to genome [7] [16] |

| Bioinformatics | Salmon/kallisto | Tools for fast transcript-level quantification via pseudoalignment [7] |

| Bioinformatics | limma/DESeq2/edgeR | R/Bioconductor packages for differential expression analysis of bulk data [7] [16] |

| Bioinformatics | Seurat/Scanpy | Comprehensive toolkits for downstream analysis of scRNA-seq data [16] |

Integrated and Emerging Approaches

Hybrid and Complementary Applications

Rather than being mutually exclusive, bulk and single-cell RNA-seq are increasingly used as complementary approaches. A powerful strategy uses scRNA-seq to deconvolve bulk RNA-seq data, inferring cell type-specific (CTS) expression from bulk tissue samples [20]. Methods like EPIC-unmix, bMIND, and CIBERSORTx leverage single-cell reference atlases to estimate both cell type proportions and CTS expression profiles from bulk data, enabling CTS analysis for large cohorts where single-cell profiling is cost-prohibitive [20]. This is particularly valuable for studying diseases like Alzheimer's, where bulk RNA-seq from brain tissue can be deconvolved to identify CTS differentially expressed genes and expression quantitative trait loci (eQTLs) [20].
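The core intuition behind reference-based deconvolution is that a bulk profile can be approximated as a weighted mixture of cell type signatures. The methods named above use considerably more elaborate statistical models; the sketch below swaps in plain non-negative least squares, solved with multiplicative updates, purely to illustrate how proportions can be recovered. The signature matrix, gene count, and proportions are hypothetical.

```python
def estimate_proportions(signature, bulk, iterations=500):
    """Estimate non-negative mixing weights so that signature @ weights ~ bulk.

    signature[g][c]: reference expression of gene g in cell type c (non-negative).
    bulk[g]: bulk expression of gene g.
    Weights are rescaled to sum to 1 before being returned.
    """
    n_genes, n_types = len(signature), len(signature[0])
    weights = [1.0 / n_types] * n_types
    for _ in range(iterations):
        predicted = [
            sum(signature[g][c] * weights[c] for c in range(n_types))
            for g in range(n_genes)
        ]
        for c in range(n_types):
            numerator = sum(signature[g][c] * bulk[g] for g in range(n_genes))
            denominator = sum(signature[g][c] * predicted[g] for g in range(n_genes))
            if denominator > 0:
                weights[c] *= numerator / denominator  # stays non-negative
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical signatures for two cell types across four genes.
signature = [
    [10.0, 1.0],
    [1.0, 10.0],
    [5.0, 5.0],
    [8.0, 2.0],
]
true_proportions = [0.7, 0.3]
bulk = [sum(row[c] * true_proportions[c] for c in range(2)) for row in signature]

estimated = estimate_proportions(signature, bulk)
```

On this noise-free toy mixture the estimate converges to the true proportions; with real data, noise and mismatch between reference and bulk make the problem substantially harder.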

Beyond Whole Transcriptome: Targeted scRNA-seq

For applications requiring high sensitivity for specific genes, targeted single-cell gene expression profiling provides an alternative to whole transcriptome approaches. By focusing sequencing resources on a predefined panel of genes (dozens to hundreds), targeted methods achieve superior sensitivity, reduce gene dropout, and lower costs per sample [18]. This makes them ideal for validating discoveries from initial whole transcriptome studies, interrogating specific pathways, and developing robust assays for clinical translation in drug development [18].

The choice between bulk and single-cell RNA-seq is not a matter of technological superiority but of strategic alignment with research objectives. Bulk RNA-seq remains the workhorse for hypothesis-driven studies comparing defined conditions, where the goal is to identify population-level transcriptional changes, discover biomarkers, or conduct large-scale cohort studies cost-effectively. Its strengths lie in its robust, standardized workflows, lower cost, and high gene detection sensitivity. Conversely, single-cell RNA-seq is a discovery-oriented tool that excels at unraveling cellular heterogeneity, identifying novel cell types and states, and reconstructing dynamic processes like development and disease progression. Its power comes from its unparalleled resolution, albeit at a higher cost and computational complexity. For researchers and drug development professionals, a pragmatic approach often involves using scRNA-seq for initial discovery and atlas-building, followed by bulk RNA-seq or targeted scRNA-seq for validation and scaling across larger cohorts. Furthermore, computational deconvolution methods now allow for the integration of both data types, maximizing biological insights while navigating practical constraints. Understanding this resolution trade-off is fundamental to designing efficient and informative transcriptomic studies within the broader context of genomic research.

Bulk RNA sequencing (RNA-Seq) is a foundational molecular biology technique that measures the average gene expression profile across a population of cells within a sample [19]. This powerful, large-scale method provides a holistic view of the transcriptome, enabling researchers to make quantitative comparisons between different biological conditions—such as healthy versus diseased tissue or treated versus control samples [19] [14]. By converting RNA molecules into complementary DNA (cDNA) and sequencing them using next-generation sequencing platforms, bulk RNA-Seq captures a global snapshot of transcriptional activity [19]. While newer single-cell technologies resolve cellular heterogeneity, bulk RNA-Seq remains a cornerstone for hypothesis-driven research, particularly in contexts where population-level averages are biologically meaningful or when practical constraints like budget and sample availability prevail [14]. This technical guide explores the primary applications of bulk RNA-Seq, with a focused examination of differential expression analysis and its extensions into broader research domains, framed within the complete workflow of a bulk RNA-Seq study.

The Core Workflow of a Bulk RNA-Seq Experiment

A typical bulk RNA-Seq study follows a multi-stage process, from sample preparation to biological interpretation. The workflow diagram below illustrates the key stages and their interconnections.

From Raw Data to Count Matrix

The initial phase transforms raw sequencing data into a structured gene expression count matrix. This process begins with quality control checks on raw FASTQ files using tools like FastQC, followed by read trimming to remove adapter sequences and low-quality bases [8]. The cleaned reads are then aligned to a reference genome using splice-aware aligners such as STAR [7]. Finally, expression quantification assigns reads to genomic features, generating a count matrix where rows represent genes, columns represent samples, and values indicate the number of reads assigned to each gene [7] [8]. Count-based tools like HTSeq-count tally the reads that can be assigned to a single gene, whereas alignment-free tools like Salmon handle uncertainty in read assignment through statistical models and report estimated counts [7].

Key Research Reagent Solutions

The following table details essential reagents and materials required for a successful bulk RNA-Seq experiment.

Table 1: Key Research Reagent Solutions for Bulk RNA-Seq

| Item | Function | Examples & Notes |

|---|---|---|

| RNA Extraction Kits | Isolate high-quality RNA from source material (cells, tissues). | PicoPure RNA isolation kit [2]. Consider compatibility with sample type (e.g., FFPE, blood). |

| rRNA Depletion Kits | Remove abundant ribosomal RNA (rRNA) to enrich for mRNA and other RNA types. | Critical for total RNA sequencing. Increases informational yield [19]. |

| Poly(A) Selection Kits | Enrich for messenger RNA (mRNA) by capturing polyadenylated tails. | NEBNext Poly(A) mRNA Magnetic Isolation Module [2]. Standard for mRNA-seq. |

| Library Prep Kits | Convert RNA into a sequencing-ready library; includes cDNA synthesis, adapter ligation, and indexing. | NEBNext Ultra DNA Library Prep Kit [2]. 3'-Seq kits (e.g., QuantSeq) ideal for high-throughput screens [10]. |

| Spike-in Controls | Add known quantities of exogenous RNA to monitor technical performance and aid normalization. | SIRVs; assess dynamic range, sensitivity, and quantification accuracy [10]. |

| Strandedness Reagents | Preserve the original orientation of RNA transcripts during library construction. | Specified in the library kit (e.g., "forward", "reverse", "unstranded") [7]. |

Primary Application: Differential Expression Analysis

Differential expression (DE) analysis is the most prominent application of bulk RNA-Seq, statistically identifying genes whose expression levels change significantly between predefined experimental groups [7] [8].

Statistical Foundations and Methodologies

DE analysis operates on the raw count matrix, whose values are typically modeled with a negative binomial distribution [8]. The core task involves fitting a statistical model to test the null hypothesis that a gene's expression does not differ between conditions (e.g., treated vs. control). Several established tools and packages are available, with DESeq2 and limma being widely adopted for their robustness and accuracy [7] [8].

- DESeq2 employs a negative binomial generalized linear model. It internally estimates size factors to account for differences in sequencing depth, along with a dispersion parameter for each gene [8]. It then uses the Wald test or a likelihood ratio test to compute p-values for differential expression.

- limma utilizes a linear modeling framework, often with precision weights for count data ("voom" transformation), to assess differential expression [7].
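To show what size factors correct for, here is a minimal sketch of the median-of-ratios idea: each sample's counts are compared with a per-gene geometric mean across samples, and the median ratio is taken as that sample's size factor. This is a simplified pure-Python illustration with hypothetical counts, not the package's own code.

```python
import math
from statistics import median


def size_factors(count_matrix):
    """Median-of-ratios size factors.

    count_matrix[g][s]: raw count of gene g in sample s.
    Genes with a zero count in any sample are skipped because their
    geometric mean is zero and the ratio is undefined.
    """
    n_samples = len(count_matrix[0])
    log_ratios = [[] for _ in range(n_samples)]
    for gene_counts in count_matrix:
        if any(count == 0 for count in gene_counts):
            continue
        log_geometric_mean = sum(math.log(c) for c in gene_counts) / n_samples
        for s, count in enumerate(gene_counts):
            log_ratios[s].append(math.log(count) - log_geometric_mean)
    return [math.exp(median(ratios)) for ratios in log_ratios]


# Hypothetical counts: the second sample was sequenced twice as deeply.
counts = [
    [10, 20],
    [100, 200],
    [50, 100],
    [0, 3],  # contains a zero, so it is excluded from the estimate
]
factors = size_factors(counts)
normalized = [[c / f for c, f in zip(row, factors)] for row in counts]
```

Dividing each column by its size factor places the two samples on a common scale, so the first three genes end up with equal normalized counts in both samples.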

A critical step in DE analysis is multiple testing correction. Due to the simultaneous testing of thousands of genes, the False Discovery Rate (FDR) correction (e.g., Benjamini-Hochberg procedure) is standard to control the expected proportion of false positives among significant results [8]. The outcome is a list of differentially expressed genes (DEGs) with statistics including log2 fold-change, p-value, and adjusted p-value (q-value).
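The Benjamini-Hochberg adjustment mentioned above is short enough to write out. The sketch below sorts the p-values, scales each by the number of tests divided by its rank, and enforces monotonicity from the largest p-value downward; the input p-values are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    n = len(p_values)
    order = sorted(range(n), key=lambda i: p_values[i])
    adjusted = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value to the smallest so that each adjusted
    # value never exceeds the adjusted value of a larger p-value.
    for rank in range(n, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * n / rank)
        adjusted[index] = running_min
    return adjusted


# Hypothetical nominal p-values for five genes.
p_values = [0.001, 0.008, 0.039, 0.041, 0.20]
adjusted = benjamini_hochberg(p_values)
significant = [i for i, q in enumerate(adjusted) if q < 0.05]
```

In this example the third and fourth genes have nominal p-values below 0.05 but adjusted values just above it, which shows how the correction trims borderline calls.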

Outputs and Interpretation of DE Analysis

The results of a DE analysis are typically presented in a comprehensive table and visualized to facilitate biological interpretation.

Table 2: Typical Outputs from Differential Expression Analysis (e.g., DESeq2)

| Output Column | Description | Biological Interpretation |

|---|---|---|

| baseMean | The mean of normalized counts for the gene across all samples. | Provides an estimate of the gene's overall expression level. |

| log2FoldChange | The log2-transformed ratio of expression between the two groups. | A value of 1 indicates a 2-fold upregulation; -1 indicates a 2-fold downregulation. |

| lfcSE | The standard error of the log2 fold-change estimate. | Measures the precision of the effect size estimate. |

| stat | The test statistic (e.g., from the Wald test). | Used to compute the p-value. |

| pvalue | The nominal p-value from the test of differential expression. | The probability of observing a test statistic at least as extreme as the one obtained if the null hypothesis (no change) is true. |

| padj | The p-value adjusted for multiple testing (e.g., FDR). | A padj < 0.05 is commonly used to define statistically significant DEGs. |

| svalue | An optional value indicating confidence in the sign (direction) of the log2FoldChange. | Provides an additional measure of confidence in the result [8]. |
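The columns in Table 2 are usually combined into a simple decision rule. The sketch below, using hypothetical gene names and cutoffs, converts log2 fold-changes back to fold-changes and labels genes by direction; a missing adjusted p-value (represented here as `None`) is treated as not significant, which is an assumption of this sketch.

```python
def fold_change(log2_fold_change):
    """Convert a log2 fold-change to a linear fold-change."""
    return 2.0 ** log2_fold_change


def classify_gene(log2_fold_change, padj, padj_cutoff=0.05, lfc_cutoff=1.0):
    """Label a gene from its effect size and adjusted p-value (cutoffs hypothetical)."""
    if padj is None or padj >= padj_cutoff:
        return "not significant"
    if log2_fold_change >= lfc_cutoff:
        return "up"
    if log2_fold_change <= -lfc_cutoff:
        return "down"
    return "significant, small effect"


# Hypothetical results rows: (gene, log2FoldChange, padj).
results = [
    ("geneA", 1.5, 0.001),
    ("geneB", -2.0, 0.01),
    ("geneC", 0.3, 0.002),
    ("geneD", 3.0, 0.4),
    ("geneE", 1.2, None),
]
labels = {gene: classify_gene(lfc, padj) for gene, lfc, padj in results}
```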

To illustrate the decision-making process in DE analysis, the following logic flow diagram outlines the key steps from raw data to a validated gene list.

Applications Beyond Differential Expression

While differential expression is a central pillar, the utility of bulk RNA-Seq extends to several other critical areas of research.

Transcriptome Characterization and Isoform Analysis

Bulk RNA-Seq is instrumental in cataloging and characterizing the transcriptome. Unlike microarray technology, it can detect novel transcripts, alternative splicing events, and gene fusions without prior knowledge of the transcriptome [14]. This is particularly valuable for annotating genomes of understudied organisms or for discovering disease-specific isoforms and fusion genes, which can serve as therapeutic targets or biomarkers [14] [21]. Specialized library preparations that preserve strand information are often used for these applications to accurately determine the boundaries and orientations of transcripts.

Pathway and Network Analysis

Moving from individual gene lists to higher-order biological meaning, pathway analysis connects DE results to known biological pathways and gene ontologies [22]. This involves testing for the enrichment of DEGs in predefined gene sets representing metabolic pathways, signaling cascades, or molecular functions. Tools like DAVID and Reactome are commonly used for this purpose, helping researchers interpret their DE findings in the context of cellular processes and systems-level biology [22]. This application is crucial in drug discovery for understanding a compound's mechanism of action and identifying potential on-target and off-target effects [10].
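Enrichment testing of this kind is often framed as an over-representation question: given how many DEGs were found, is the overlap with a gene set larger than chance would predict? The sketch below uses a plain one-sided hypergeometric test with hypothetical numbers; it is meant to illustrate the principle and is not necessarily the exact statistic that any particular tool reports.

```python
from math import comb


def enrichment_p_value(n_background, n_pathway, n_degs, n_overlap):
    """One-sided hypergeometric p-value: P(overlap >= n_overlap).

    n_background: genes tested in the experiment
    n_pathway:    background genes annotated to the pathway
    n_degs:       differentially expressed genes
    n_overlap:    DEGs that are also in the pathway
    """
    upper = min(n_pathway, n_degs)
    total = comb(n_background, n_degs)
    tail = sum(
        comb(n_pathway, k) * comb(n_background - n_pathway, n_degs - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total


# Hypothetical toy example: 20 genes tested, 5 in the pathway, 4 DEGs, 3 overlapping.
p_value = enrichment_p_value(n_background=20, n_pathway=5, n_degs=4, n_overlap=3)
```

When many gene sets are tested, the resulting p-values need the same multiple testing correction as the gene-level results.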

Specialized Applications in Drug Discovery and Development

Bulk RNA-Seq is strategically applied throughout the drug discovery and development pipeline [10]. Its applications in this context include:

- Target Identification: Comparing gene expression profiles of diseased versus healthy tissues to identify dysregulated genes and pathways that can be targeted therapeutically.

- Biomarker Discovery: Identifying RNA-based signatures for patient stratification, diagnosis, prognosis, and monitoring treatment response [14] [10].

- Mode-of-Action Studies: Profiling transcriptomic changes in response to drug treatment to elucidate a compound's biological effects and mechanisms.

- Dose-Response and Combination Studies: Assessing transcriptional changes across different drug doses or combination therapies to determine optimal treatment regimens [10].

Best Practices for Robust Experimental Design

The reliability of any bulk RNA-Seq application hinges on a well-designed experiment. Key considerations to mitigate technical artifacts and false discoveries include:

- Replication: Biological replicates (samples derived from different biological entities) are essential for capturing natural variation and ensuring findings are generalizable. A minimum of three biological replicates per condition is typical, with larger numbers (4-8) recommended for robust statistical power, especially when biological variability is high [10]. Technical replicates (repeated measurements of the same biological sample) are less critical but can help assess technical noise.

- Batch Effects: Systematic non-biological variations introduced when samples are processed in different batches can confound results. Experimental design should randomize samples across processing batches whenever possible. Statistical batch correction methods can be applied during analysis if batch effects are unavoidable [10].

- Controls: Including positive and negative controls strengthens experimental conclusions. Spike-in RNAs (e.g., SIRVs) are valuable external controls for monitoring quantification accuracy and assay performance across samples and batches [10].

- Pilot Studies: For large-scale projects, a pilot study using a representative subset of samples is highly recommended to validate wet-lab and computational workflows before committing significant resources [10].
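The batch-effect recommendation above can be made concrete with a small randomization sketch. It shuffles samples within each condition and deals them across batches in round-robin order, so that no batch is dominated by one condition. The sample names, number of batches, and seed are hypothetical.

```python
import random
from collections import Counter, defaultdict


def assign_batches(samples, n_batches, seed=0):
    """Randomly assign samples to batches while balancing conditions.

    samples: list of (sample_id, condition) pairs.
    Returns a dict mapping sample_id to a batch index in range(n_batches).
    """
    rng = random.Random(seed)  # fixed seed keeps the layout reproducible
    by_condition = defaultdict(list)
    for sample_id, condition in samples:
        by_condition[condition].append(sample_id)

    assignment = {}
    for condition in sorted(by_condition):
        members = by_condition[condition]
        rng.shuffle(members)
        offset = rng.randrange(n_batches)  # vary which batch receives extras
        for position, sample_id in enumerate(members):
            assignment[sample_id] = (position + offset) % n_batches
    return assignment


# Hypothetical design: four controls and four treated samples, two batches.
samples = [(f"control_{i}", "control") for i in range(1, 5)]
samples += [(f"treated_{i}", "treated") for i in range(1, 5)]

batches = assign_batches(samples, n_batches=2)
balance = Counter((batches[sample_id], condition) for sample_id, condition in samples)
```

Recording the seed and the resulting layout alongside the sample sheet makes it possible to include batch as a covariate later if correction is needed.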

Bulk RNA-Seq remains an indispensable tool for comprehensive transcriptome profiling. Its primary application in differential expression analysis provides a statistically rigorous framework for identifying genes altered between biological states. However, as this guide illustrates, its utility extends far beyond this core function to encompass transcriptome annotation, pathway analysis, and specialized applications in drug discovery. The successful implementation of a bulk RNA-Seq study—from a hypothesis-driven experimental design and appropriate reagent selection to the application of robust computational pipelines—empowers researchers and drug development professionals to extract profound insights into the molecular underpinnings of health, disease, and therapeutic intervention.

Executing the Bulk RNA-Seq Pipeline: Best Practices from Lab to Analysis

High-quality, intact RNA is a fundamental requirement for successful bulk RNA sequencing, as RNA integrity directly impacts the accuracy and reliability of gene expression data [23]. The susceptibility of RNA to degradation by ubiquitous RNases makes careful handling and proper quality assessment during nucleic acid isolation critical steps in the workflow [23]. Within the broader context of bulk RNA sequencing research, the sample preparation phase serves as the foundation upon which all subsequent analytical steps are built. This technical guide details the methods and considerations for ensuring RNA integrity from initial isolation through quality verification, providing researchers with the knowledge needed to prevent experimental failure and generate robust, reproducible transcriptomic data.

RNA Quality Assessment Methods

A variety of techniques are available to assess RNA concentration, purity, and integrity. Each method offers distinct advantages and limitations, and often they are used in combination to provide a comprehensive quality profile.

Table 1: Methods for RNA Quality Assessment

| Method | Principle | Information Provided | Advantages | Disadvantages/Limitations |

|---|---|---|---|---|

| UV Spectrophotometry (e.g., NanoDrop) | Measures absorbance of ultraviolet light by nucleic acids and contaminants at 260 nm, 280 nm, and 230 nm [23]. | Concentration (A260), Purity (A260/A280 and A260/A230 ratios) [23] [24]. | Fast (≤30 seconds); small sample volume (0.5–2 µl); wide detection range (2 ng/µl–12,000 ng/µl) [23]. | Not sensitive to degradation; lacks specificity between RNA/DNA; overestimation if contaminants absorb at ~260 nm [23] [24]. |

| Fluorometric Assay (e.g., Qubit, QuantiFluor) | Fluorescent dyes bind nucleic acids, undergo conformational change, and emit light [23]. | Highly accurate RNA concentration, especially for dilute samples [23] [24]. | Extremely sensitive (can detect ≤100 pg/µl); suitable for low-concentration samples [23] [24]. | Requires standard curves; dyes may not be RNA-specific (may bind DNA); provides no integrity/purity information [23]. |

| Agarose Gel Electrophoresis | Separates nucleic acid fragments by size using an electric current; visualizes with fluorescent dye [23] [25]. | Integrity via sharpness and intensity of ribosomal RNA bands (28S:18S ~2:1 for mammals); can visualize genomic DNA contamination [23] [25]. | Relatively low cost; provides visual integrity check [23]. | Requires significant RNA (≥200 ng for EtBr); time-consuming; potential safety hazards from stains [23] [25]. |

| Microfluidics Capillary Electrophoresis (e.g., Agilent Bioanalyzer/TapeStation) | Microfluidics and fluorescence dye separate RNA fragments in a chip [23] [25]. | RNA Integrity Number (RIN); precise integrity assessment; concentration and purity estimation [2] [25]. | High sensitivity (requires only 1 µl of ~10 ng/µl RNA); provides digital integrity score (RIN); fast and automated [25]. | Higher instrument cost; not suitable for assessing poly(A)-selected mRNA integrity on gels [25]. |

Detailed Experimental Protocols for RNA QC

Protocol: UV Spectrophotometry for RNA Purity Assessment

This protocol is used for a rapid initial assessment of RNA sample concentration and purity from contaminants like protein or salts [23] [24].

- Instrument Blanking: Apply 1–2 µl of the elution buffer (e.g., nuclease-free water) used to dissolve the RNA sample to the measurement pedestal. Perform a blank measurement to calibrate the instrument.

- Sample Measurement: Wipe away the blank and apply 1–2 µl of the RNA sample to the pedestal. Measure the absorbance.

- Data Interpretation: Record the concentration and the A260/A280 and A260/A230 ratios. Pure RNA typically has an A260/A280 ratio of 1.9–2.1 and an A260/A230 ratio of >1.7 (often 2.0–2.2) [23] [24]. Significant deviation from these ranges indicates potential contamination that may interfere with downstream applications.
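The interpretation step of this protocol can be expressed as a small check. The sketch assumes the standard conversion of 40 ng/µl of RNA per A260 unit at a 1 cm path length (a general convention, not stated in the protocol above) and applies the ratio ranges quoted in the text; the absorbance readings are hypothetical.

```python
RNA_NG_PER_UL_PER_A260 = 40.0  # assumption: standard factor for RNA, 1 cm path length


def assess_rna_purity(a260, a280, a230, dilution_factor=1.0):
    """Estimate RNA concentration and flag purity ratios outside the quoted ranges."""
    ratio_260_280 = a260 / a280
    ratio_260_230 = a260 / a230
    flags = []
    if not 1.9 <= ratio_260_280 <= 2.1:
        flags.append("A260/A280 outside 1.9-2.1")
    if ratio_260_230 <= 1.7:
        flags.append("A260/A230 not above 1.7")
    return {
        "concentration_ng_per_ul": a260 * RNA_NG_PER_UL_PER_A260 * dilution_factor,
        "a260_a280": ratio_260_280,
        "a260_a230": ratio_260_230,
        "flags": flags,
    }


# Hypothetical readings for a clean and a contaminated preparation.
clean = assess_rna_purity(a260=0.5, a280=0.25, a230=0.24)
contaminated = assess_rna_purity(a260=0.5, a280=0.33, a230=0.4)
```

Because absorbance does not reveal degradation, a sample that passes this check still needs an integrity assessment before library preparation.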

Protocol: Agarose Gel Electrophoresis for RNA Integrity Check

This method provides a visual representation of RNA integrity based on the sharpness of ribosomal RNA bands [25].

- Gel Preparation: Prepare a 1.5% denaturing agarose gel. Denaturing conditions, typically using formaldehyde or glyoxal/DMSO, are essential to prevent RNA secondary structure from affecting migration [25].

- Sample Loading: Mix 200–500 ng of RNA with loading dye. Include an appropriate RNA ladder (size marker) in one lane [25].

- Electrophoresis: Run the gel at a constant voltage (e.g., 5 V/cm) until the dye front has migrated sufficiently.

- Staining and Visualization: Stain the gel with a fluorescent nucleic acid dye such as SYBR Gold or ethidium bromide and visualize under UV light [23] [25].

- Interpretation: Intact total RNA from a eukaryotic sample will display two sharp, clear bands: the 28S rRNA band should be approximately twice as intense as the 18S rRNA band. A smeared appearance, lack of sharp bands, or deviation from the 2:1 ratio indicates degradation [25].

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for RNA Isolation and QC

| Item | Function/Application | Examples/Specifications |

|---|---|---|

| Magnetic Nanoparticles | Bind and purify nucleic acids from complex lysates in a scalable, automatable format [26]. | Silica-coated iron oxide nanoparticles (e.g., NAxtra); used in KingFisher systems [26]. |

| DNase I, RNase-free | Digests and removes contaminating genomic DNA from RNA preparations, crucial for accurate RNA quantitation and sequencing [23]. | Included in many isolation kits or available as a standalone reagent. |

| Fluorescent Nucleic Acid Dyes | Sensitive detection and quantification of RNA in solution or in gels [23] [25]. | Qubit RNA BR/HS Assay Kits (fluorometry); SYBR Gold or SYBR Green II (gel staining, more sensitive than EtBr) [23] [25]. |

| Microfluidics Kits | Integrated chips and reagents for automated RNA quality analysis, providing a RIN [25]. | Agilent RNA 6000 Nano/Pico LabChip Kits (for Bioanalyzer) [25]. |

| ERCC Spike-in Controls | Exogenous RNA controls added to samples to provide a standard baseline for normalization and quality monitoring in RNA-seq experiments [1]. | Defined mix of synthetic RNAs at known concentrations (e.g., Ambion ERCC Spike-In Mix) [1]. |

| Lysis Buffers | Disrupt cells and inactivate RNases immediately upon sample collection to preserve RNA integrity [26]. | Often contain guanidine thiocyanate or other chaotropic salts; component of most commercial kits [23] [26]. |

Workflow Integration and Decision Pathway

The following diagram illustrates the logical sequence of steps for assessing RNA quality, from initial isolation to final qualification for downstream bulk RNA-seq applications.
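As a rough companion to the pathway, the sequence of checks (concentration, then purity, then integrity) can be sketched as a small gating function. The `RnaQc` record, the `qualify` function, and all three cutoffs below are hypothetical and chosen only for illustration; actual acceptance criteria depend on the library kit and sample type.

```python
from dataclasses import dataclass


@dataclass
class RnaQc:
    sample_id: str
    conc_ng_per_ul: float  # concentration, e.g. from a fluorometric assay
    a260_a280: float       # purity ratio from spectrophotometry
    rin: float             # RNA Integrity Number, 1 (degraded) to 10 (intact)


def qualify(qc: RnaQc, min_conc: float = 10.0, min_purity: float = 1.8,
            min_rin: float = 7.0) -> str:
    """Apply the checks in order and report the first one that fails.

    All three default cutoffs are assumptions for illustration only.
    """
    if qc.conc_ng_per_ul < min_conc:
        return "fail: concentration below cutoff"
    if qc.a260_a280 < min_purity:
        return "fail: purity below cutoff"
    if qc.rin < min_rin:
        return "caution: integrity below cutoff"
    return "pass: proceed to library preparation"


for sample in (RnaQc("tumor_01", 85.0, 2.0, 8.7), RnaQc("ffpe_02", 40.0, 1.9, 3.1)):
    print(sample.sample_id, "->", qualify(sample))
```

Returning the first failed check, rather than a bare pass/fail flag, keeps the reason for rejection visible when many samples are screened at once.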

The success of a bulk RNA sequencing project is profoundly dependent on the initial steps of sample preparation and nucleic acid isolation. A rigorous, multi-faceted approach to RNA quality control—integrating assessments of concentration, purity, and most critically, integrity—is not merely a preliminary check but a fundamental component of robust experimental design. By adhering to standardized protocols and leveraging the appropriate technologies from the scientist's toolkit, researchers can ensure that their data accurately reflects the biological state under investigation, thereby maximizing the scientific return from costly and time-consuming RNA-seq workflows.

The choice between mRNA and total RNA library construction protocols is one of the first and most consequential decisions in a bulk RNA sequencing workflow. Bulk RNA sequencing measures the average gene expression level across a population of cells from samples such as tissues or biopsies [27]. It provides a global overview of transcriptomic differences between conditions, such as healthy versus diseased states or treated versus untreated samples [19]. Because the library construction approach shapes all subsequent data generation and interpretation, this selection is a pivotal point in experimental design, with significant implications for data quality, research outcomes, and resource allocation.

Understanding the Core Protocols: mRNA-seq vs. Total RNA-seq

The fundamental distinction between mRNA sequencing (mRNA-seq) and total RNA sequencing (Total RNA-seq) lies in which RNA molecules are captured and prepared for sequencing during library construction.

mRNA Sequencing employs a targeted enrichment strategy using poly(A) selection to isolate messenger RNA molecules that contain polyadenylated tails [28] [29]. This method captures protein-coding transcripts while excluding RNA species that lack a poly(A) tail, including rRNA, tRNA, and many other non-coding RNAs. Since mRNAs constitute only 1-5% of total RNA in eukaryotic cells, this approach efficiently focuses sequencing resources on the coding transcriptome [28].

Total RNA Sequencing takes a comprehensive approach by sequencing all RNA molecules after selectively depleting ribosomal RNA (rRNA), which constitutes 80-90% of total cellular RNA [28] [29]. This method captures both coding and non-coding RNAs, including long non-coding RNAs (lncRNAs), microRNAs (miRNAs), transfer RNAs (tRNAs), and other non-coding RNA species [28]. The removal of rRNA allows sequencing resources to be concentrated on the remaining transcriptome.

Table 1: Fundamental Differences Between mRNA-seq and Total RNA-seq Protocols

| Feature | mRNA Sequencing | Total RNA Sequencing |
|---|---|---|
| Enrichment Method | Poly(A) selection | Ribosomal RNA depletion |
| RNA Types Captured | Protein-coding polyadenylated mRNAs | Both coding and non-coding RNAs |
| Transcript Coverage | 3'-biased (in 3' mRNA-seq) or full-length | Even coverage across transcript length |
| rRNA Removal Efficiency | High through positive selection | High through negative depletion |

Technical Comparisons and Experimental Considerations

The choice between these protocols significantly impacts multiple aspects of experimental design and data outcomes. Each method offers distinct advantages and limitations that must be weighed against research objectives.

Transcript Coverage and Strandedness differ notably between approaches. Total RNA-seq provides relatively even coverage across the entire transcript length, enabling analysis of splicing patterns, exon-intron boundaries, and transcript isoforms [28] [29]. mRNA-seq methods, particularly 3' mRNA-seq, generate coverage biased toward the 3' end of transcripts [29]. For both methods, strand-specific protocols are available and recommended because they preserve information about which DNA strand each transcript originated from, which is particularly important for distinguishing overlapping genes on opposite strands [28] [30].

Input RNA Requirements and Sample Quality considerations also vary. mRNA-seq generally performs better with limited starting material and is often preferred for low-input applications [28]. However, total RNA-seq may demonstrate superior performance with degraded RNA samples, such as those from Formalin-Fixed Paraffin-Embedded (FFPE) tissues, because it does not rely on intact poly-A tails for capture [29].

Sequencing Depth and Cost Considerations differ substantially between methods. Total RNA-seq typically requires about three times as many sequencing reads as mRNA-seq for equivalent transcriptome coverage because it captures a broader range of RNA species [29]. While mRNA-seq generally has lower per-sample sequencing costs, total RNA-seq provides more comprehensive transcriptome information per sample.

Table 2: Performance Characteristics and Practical Considerations

| Parameter | mRNA Sequencing | Total RNA Sequencing |
|---|---|---|
| Recommended Sequencing Depth | 25-50 million reads per sample [28] | 100-200 million reads per sample [28] |
| Ideal Sample Types | High-quality RNA, limited starting material | Various sample types, including degraded RNA |
| Key Applications | Differential gene expression of coding genes [29] | Whole transcriptome analysis, isoform identification, non-coding RNA study [29] |
| Cost Efficiency | Lower cost for coding transcript-focused studies | Higher cost but broader information content |
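To see what the recommended depths in Table 2 mean for a whole experiment, the per-sample ranges can be multiplied out to a total read budget. This is a minimal sketch: the depth ranges come from the table, while the 12-sample study size and the `read_budget` helper are hypothetical.

```python
# Recommended depth per sample in millions of reads, taken from Table 2.
DEPTH_RANGE_M = {
    "mRNA-seq": (25, 50),
    "total RNA-seq": (100, 200),
}


def read_budget(protocol: str, n_samples: int) -> tuple:
    """Return the (low, high) total read budget in millions of reads."""
    low, high = DEPTH_RANGE_M[protocol]
    return low * n_samples, high * n_samples


n_samples = 12  # hypothetical study: 2 conditions x 6 replicates
for protocol in DEPTH_RANGE_M:
    low, high = read_budget(protocol, n_samples)
    print(f"{protocol}: {low}-{high} million reads for {n_samples} samples")
```

For the assumed 12 samples this gives 300-600 million reads for mRNA-seq against 1,200-2,400 million for total RNA-seq, which is the gap that drives the cost difference noted in the table.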

Decision Framework: Selecting the Appropriate Protocol

Choosing between mRNA-seq and total RNA-seq requires systematic consideration of multiple experimental factors. The following workflow diagram outlines the key decision points for selecting the optimal protocol:

This decision pathway emphasizes how research questions should drive technical selections, with practical constraints influencing the final choice.
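The same logic can be written down as a small rule-based function. This is a sketch under stated assumptions: the criteria are those discussed in the comparison above (non-coding RNA or isoform analysis, and degraded RNA such as FFPE, favor total RNA-seq; otherwise mRNA-seq is the focused, lower-cost default), but the function name, its parameters, and the order in which the rules are checked are illustrative choices rather than a prescribed algorithm.

```python
from typing import Tuple


def choose_protocol(*, needs_noncoding: bool = False, needs_isoforms: bool = False,
                    degraded_rna: bool = False) -> Tuple[str, str]:
    """Return (protocol, reason) for a bulk RNA-seq study.

    The research question is checked first, then sample quality.
    The rule order is an assumption made for this sketch.
    """
    if needs_noncoding:
        return "total RNA-seq", "non-coding RNAs are not captured reliably by poly(A) selection"
    if needs_isoforms:
        return "total RNA-seq", "even coverage across transcripts supports isoform analysis"
    if degraded_rna:
        return "total RNA-seq", "rRNA depletion does not rely on intact poly(A) tails"
    return "mRNA-seq", "coding-gene differential expression at lower sequencing cost"


protocol, reason = choose_protocol(degraded_rna=True)
print(f"{protocol}: {reason}")
```

Keyword-only arguments force each call to name the factor it is setting, which keeps the decision readable when more criteria, such as input amount or budget, are added later.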

The Scientist's Toolkit: Essential Research Reagents

Successful library construction requires specific reagents and materials tailored to each protocol. The following table details essential components for both approaches:

Table 3: Essential Reagents for RNA-seq Library Construction

| Reagent/Material | Function | Protocol Application |
|---|---|---|
| Oligo-dT Magnetic Beads | Bind poly-A tails for mRNA enrichment | mRNA-seq |
| rRNA Depletion Probes | Hybridize to ribosomal RNA for removal | Total RNA-seq |
| Reverse Transcriptase | Synthesizes cDNA from RNA templates | Both |
| Random Hexamer Primers | Prime cDNA synthesis across the entire transcriptome | Total RNA-seq |
| Strand-Specific Adapters | Preserve strand orientation information during sequencing | Both |
| Fragmentation Enzymes | Fragment RNA or cDNA to optimal sequencing size | Both (method varies) |
| Library Amplification Reagents | Amplify the final library for sequencing | Both |
| Quality Control Assays | Assess RNA integrity and library quality (e.g., Bioanalyzer, Qubit) [27] | Both |

Experimental Workflow: From Sample to Sequence

Both mRNA-seq and total RNA-seq share common procedural phases but differ significantly in key steps. The following diagram illustrates the complete workflow with critical divergence points:

The critical divergence occurs during library construction, where either poly-A selection (mRNA-seq) or rRNA depletion (Total RNA-seq) is applied. Subsequent steps of cDNA synthesis, adapter ligation, and library preparation follow similar pathways, though with potential protocol-specific variations in enzymes and reaction conditions.

The selection between mRNA and total RNA protocols for bulk RNA sequencing library construction is a strategic decision with far-reaching implications for research outcomes. mRNA-seq provides a cost-effective, focused approach for differential expression analysis of protein-coding genes, while total RNA-seq offers comprehensive transcriptome coverage at higher complexity and cost. Neither option is universally superior: the optimal choice emerges from aligning technical capabilities with the specific research question, sample characteristics, and resource constraints. Applying the decision framework presented here helps researchers choose a library construction protocol that supports their research objectives and maximizes the scientific return on their investment in transcriptomic studies.

Selecting a next-generation sequencing (NGS) platform and setting the sequencing depth are fundamental decisions in any bulk RNA sequencing workflow. These choices directly affect data quality, experimental outcomes, and resource allocation, particularly in drug discovery and development. Continuous innovation has driven costs down and made NGS accessible to laboratories of all sizes, enabling researchers to expand the scale and discovery power of their genomics studies [31]. The average cost per genome has fallen by 96% since 2013 [31]. However, researchers should evaluate NGS costs holistically, considering not just the instrument price or cost per gigabase but the total cost of ownership, which includes setup, ancillary equipment, ease of use, support, training, and data analysis capabilities [31].

For bulk RNA-seq experiments in drug discovery, careful experimental design is the most crucial aspect for ensuring meaningful results [10]. The balance between cost and data quality requires thoughtful consideration of multiple factors, including research goals, sample availability, biological variability, and the specific questions being addressed. This technical guide provides comprehensive recommendations for selecting sequencing platforms, determining appropriate depth requirements, and implementing cost-effective strategies for bulk RNA sequencing workflows within the context of pharmaceutical and basic research applications.

Sequencing Platform Comparison and Selection

Platform Specifications and Performance Metrics

When selecting a sequencing platform for bulk RNA-seq, researchers must evaluate key performance parameters across available systems. The specifications of two representative high-throughput platforms are compared in the table below:

Table 1: Comparison of High-Throughput Sequencing Platforms

| Parameter | Illumina NovaSeq 6000 | DNBSEQ-T7 |
|---|---|---|
| Maximum Output | 6 Tb (S4 flow cell) | 7 Tb (4 flow cells) |
| Read Lengths | 50-300 bp paired-end | 100-150 bp paired-end |
| Reads per Flow Cell | Up to 10 billion (S4) | Up to 5.8 billion (4 FC) |
| Run Time (PE150) | Approximately 44 hours | 22-24 hours |
| Q30 Score | >85% (typical) | >85% |
| Key Technology | Sequencing by synthesis | DNA nanoball sequencing |

Data compiled from manufacturer specifications [32] and industry standards [31].

The DNBSEQ-T7 platform exemplifies recent advancements, offering flexible run configurations with four independent flow cells, enabling researchers to process multiple projects simultaneously with varying scale requirements [32]. This platform demonstrates low GC bias and consistent data quality across diverse sample types, making it suitable for various RNA-seq applications in clinical research, population studies, and microbiology [32]. Similarly, Illumina platforms continue to evolve with innovations focused on operational simplicity and streamlined workflows, benefiting from a comprehensive ecosystem of supported applications and analysis tools [31].
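Platform read output translates directly into how many libraries can be pooled on one flow cell. The sketch below uses the "up to 10 billion" reads per S4 flow cell from Table 1 and a target depth per sample. The `samples_per_flow_cell` helper and the 90% usable-read fraction (an allowance for reads lost to filtering, demultiplexing, and uneven pooling) are assumptions for illustration, not manufacturer figures.

```python
def samples_per_flow_cell(reads_per_flow_cell: int, reads_per_sample: int,
                          usable_percent: int = 90) -> int:
    """Number of libraries that fit on one flow cell at a target depth.

    usable_percent is an assumed allowance for reads lost to filtering,
    demultiplexing, and uneven pooling.
    """
    if reads_per_sample <= 0:
        raise ValueError("reads_per_sample must be positive")
    usable_reads = reads_per_flow_cell * usable_percent // 100
    return usable_reads // reads_per_sample


S4_READS = 10_000_000_000  # "up to 10 billion" reads, from Table 1

for depth in (25_000_000, 50_000_000, 100_000_000):
    n = samples_per_flow_cell(S4_READS, depth)
    print(f"{depth // 1_000_000}M reads/sample -> up to {n} samples per flow cell")
```

Because the table value is an upper bound, the result is best read as a ceiling for planning rather than a guaranteed capacity.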

Cost Considerations Beyond Instrument Price

The initial instrument cost represents only one component of the total investment required for successful NGS implementation. A comprehensive assessment must include:

- Running expenses: Cost per experiment including DNA/RNA isolation, library preparation, and sequencing reagents [31]

- Laboratory infrastructure: Nucleic acid quantitation instruments, quality analyzers, thermocyclers, centrifuges, and other ancillary equipment [31]

- Data management: Storage solutions, server maintenance, software licenses, and computational resources for analysis [31]

- Personnel costs: Training requirements, hands-on time, and technical expertise needed for operation and troubleshooting [31]

Economies of scale can significantly reduce costs for higher-output applications: multiplex sequencing allows many more samples to be processed per run without a proportional increase in cost [31]. Researchers should also investigate equipment trade-in programs, leasing options, and reagent bundles to optimize financial outlay [31].
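The effect of multiplexing on per-sample cost follows from simple arithmetic: the fixed cost of a run is shared across all pooled samples, while library preparation is paid per sample. The sketch below illustrates this with entirely hypothetical prices. The `cost_per_sample` function and both cost figures are assumptions, and infrastructure, data management, and personnel costs are deliberately left out.

```python
def cost_per_sample(run_cost: float, prep_cost_per_sample: float, n_samples: int) -> float:
    """Per-sample cost when a fixed run cost is shared across pooled samples."""
    if n_samples < 1:
        raise ValueError("n_samples must be at least 1")
    return prep_cost_per_sample + run_cost / n_samples


RUN_COST = 6000.0  # hypothetical sequencing reagent cost per run
PREP_COST = 80.0   # hypothetical library preparation cost per sample

for n in (8, 24, 96):
    print(f"{n:>3} samples per run -> {cost_per_sample(RUN_COST, PREP_COST, n):.2f} per sample")
```

With these assumed prices the per-sample cost drops from 830.00 at 8 samples to 142.50 at 96 samples. The saving flattens once library preparation dominates, and it is only realized if the run still delivers the required depth for every sample.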

Sequencing Depth Recommendations for Bulk RNA-Seq

Coverage Guidelines by Application

Sequencing depth requirements vary significantly across different NGS applications. The following table summarizes recommended coverage levels for common genomic analyses:

Table 2: Sequencing Coverage Recommendations by Method

| Sequencing Method | Recommended Coverage | Key Considerations |
|---|---|---|
| Whole Genome Sequencing (WGS) | 30× to 50× for human | Dependent on application and statistical model |
| Whole-Exome Sequencing | 100× | Standard for variant calling |
| Bulk RNA Sequencing | 10-50 million reads per sample | Dependent on gene expression levels and experimental goals |
| ChIP-Seq | 100× | Standard for transcription factor binding studies |

Based on Illumina recommendations [33] and community standards.
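The table mixes two units: fold coverage (×) for DNA-based methods and read counts for RNA-seq. The two are linked by the standard relation coverage = (number of reads × read length) / target size. The sketch below converts between them under stated assumptions: a human genome size of roughly 3.1 billion bases and 150 bases per read are illustrative inputs, and the function names are hypothetical.

```python
import math


def coverage(n_reads: int, bases_per_read: int, target_size_bp: int) -> float:
    """Average fold coverage: total sequenced bases divided by target size."""
    return n_reads * bases_per_read / target_size_bp


def reads_for_coverage(fold: float, bases_per_read: int, target_size_bp: int) -> int:
    """Minimum number of reads needed to reach a given average coverage."""
    return math.ceil(fold * target_size_bp / bases_per_read)


HUMAN_GENOME_BP = 3_100_000_000  # approximate size, assumed for illustration
BASES_PER_READ = 150             # assumed read length; count both mates for paired-end

n = reads_for_coverage(30, BASES_PER_READ, HUMAN_GENOME_BP)
print(f"30x human WGS needs about {n / 1e6:.0f} million {BASES_PER_READ}-base reads")
```

Bulk RNA-seq depth is stated in reads per sample instead, because transcript abundance varies by orders of magnitude between genes and no single average coverage figure describes the transcriptome.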