Unraveling Complexity: Computational Advances in Pseudoknot RNA Structure Prediction for Biomedical Discovery


Abstract

This article explores the critical challenge of computational complexity in RNA pseudoknot prediction, a pivotal problem in structural bioinformatics. We examine the foundational reasons why pseudoknots are NP-hard to predict, survey modern algorithmic strategies—from dynamic programming heuristics and machine learning to constraint programming—that navigate this complexity, and provide practical guidance for researchers on selecting and optimizing these tools. The analysis compares the performance, accuracy, and limitations of leading methodologies, culminating in a synthesis of current capabilities and future directions that hold significant implications for antiviral drug design, functional genomics, and RNA therapeutics.

Why Pseudoknot Prediction is NP-Hard: Defining the Core Computational Challenge in RNA Bioinformatics

Troubleshooting Guide & FAQ: Computational and Experimental Analysis of RNA Pseudoknots

Thesis Context: This support content is designed to help researchers overcome practical and computational hurdles in pseudoknot analysis, in direct support of the broader thesis goal of addressing computational complexity in pseudoknot prediction research.

FAQ: Common Computational & Experimental Issues

Q1: My thermodynamic prediction software (e.g., RNAstructure, ViennaRNA) fails to predict or incorrectly predicts a known pseudoknot. What are the primary causes?

A: Most standard folding algorithms use simplified energy models that exclude pseudoknots due to high computational complexity (NP-hard problem). Explicitly use pseudoknot-capable programs like pknotsRG, HotKnots, or IPknot. Ensure your input sequence is in the correct format (FASTA, no spaces). Also, adjust temperature and ionic concentration parameters if the software allows, as pseudoknot stability is Mg2+-dependent.

Q2: During mutational analysis to probe pseudoknot function, my frameshifting or catalysis assay shows no signal. Where should I start troubleshooting? A: First, verify pseudoknot integrity. Perform a structure-probing experiment (e.g., SHAPE-MaP or DMS-MaP) on your wild-type and mutant constructs in vitro to confirm the predicted secondary structure is formed. A table of key control mutants is recommended:

| Mutant Type | Target Region | Expected Effect on Pseudoknot | Purpose of Control |

|---|---|---|---|

| Stem 1 Disruption | Paired bases in Stem 1 | Unfolds entire pseudoknot | Negative control for function |

| Stem 2 Disruption | Paired bases in Stem 2 | Unfolds entire pseudoknot | Negative control for function |

| Loop 2 Mutation | Nucleotides in Loop 2 | May disrupt tertiary contacts | Probe specific interactions |

| Compensatory | Restore base pairing in Stems 1 & 2 | Restore structure (not sequence) | Confirm structure-dependence |

Q3: When simulating pseudoknot dynamics with MD (Molecular Dynamics), the structure unravels quickly. How can I improve stability? A: This is common due to force field inaccuracies and timescale limitations. Use an explicit Mg2+ ion model and place ions near the predicted high-density negative charge pockets. Employ restrained simulations initially, using known NMR or crystal structure distance restraints. Consider enhanced sampling methods (e.g., replica exchange) to overcome high energy barriers.

Q4: My cryo-EM 3D reconstruction of a ribozyme pseudoknot shows poor density for the pseudoknot region. What are potential solutions? A: This indicates flexibility or partial occupancy. Chemical crosslinking (e.g., psoralen) prior to vitrification can stabilize the structure. Alternatively, use engineered stabilizing mutations (e.g., base-pair swaps that increase GC content) or conformation-specific antibodies/Fabs to lock the pseudoknot and provide a fiducial marker.

Detailed Experimental Protocols

Protocol 1: In-line Probing for Ribozyme Pseudoknot Catalytic Core Mapping

- Principle: Spontaneous cleavage of RNA backbone at flexible, unconstrained regions; protected regions indicate structured or bound areas.

- Procedure:

- 5'-End Labeling: Dephosphorylate purified in vitro transcribed RNA with CIP. Use T4 PNK and [γ-32P]ATP to label the 5' end. Purify via denaturing PAGE.

- Reaction Setup: Incubate ~50,000 cpm of labeled RNA in 10 µL of reaction buffer (50 mM Tris-HCl pH 8.3, 20 mM MgCl2, 100 mM KCl) for 40 hours at 25°C. Include a no-Mg2+ (10 mM EDTA) control and an alkaline hydrolysis (OH-) ladder.

- Analysis: Stop with equal volume of 2x Urea Loading Dye. Resolve fragments on 10% denaturing PAGE. Visualize via phosphorimaging. Bands absent in the +Mg2+ sample correspond to protected regions (likely involved in pseudoknot or tertiary interactions).

Protocol 2: Dual-Luciferase Frameshifting Assay for Viral Pseudoknot Efficiency

- Principle: Measures -1 PRF efficiency by comparing expression of two reporter proteins (Firefly and Renilla luciferase) from a dual-reporter construct.

- Procedure:

- Construct Design: Clone the viral pseudoknot and slippery sequence (e.g., X XXY YYZ) between the Renilla (upstream) and Firefly (downstream) luciferase genes in a mammalian expression vector.

- Transfection: Seed HEK293T cells in 24-well plates. Transfect with 500 ng of plasmid DNA per well using a standard transfection reagent (e.g., PEI). Include a positive control (known efficient pseudoknot) and a negative control (mutated slippery site).

- Measurement: At 48h post-transfection, lyse cells and assay using a Dual-Luciferase Reporter Assay System. Measure luminescence sequentially.

- Calculation: Frameshifting Efficiency (%) = (Firefly Luc / Renilla Luc) * 100. Normalize to the negative control.
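The calculation above can be sketched in a few lines. This is a minimal illustration rather than a validated analysis script: the function names and luminescence readings are hypothetical, and reading "normalize to the negative control" as background subtraction is an assumption (some workflows instead divide by the ratio from an in-frame control construct).

```python
def frameshift_efficiency(firefly, renilla):
    """Percent ratio of downstream (Firefly) to upstream (Renilla) luminescence."""
    if renilla <= 0:
        raise ValueError("Renilla signal must be positive")
    return 100.0 * firefly / renilla


def background_corrected(sample_pct, negative_control_pct):
    """Assumed normalization: subtract the negative-control percentage, floor at 0."""
    return max(sample_pct - negative_control_pct, 0.0)


# Hypothetical readings (arbitrary luminescence units).
raw = frameshift_efficiency(firefly=1200.0, renilla=20000.0)
negative = frameshift_efficiency(firefly=100.0, renilla=20000.0)
corrected = background_corrected(raw, negative)
print(f"raw={raw:.2f}%  negative={negative:.2f}%  corrected={corrected:.2f}%")
```

Averaging technical replicates before taking the ratio, or taking the ratio per well and then averaging, gives slightly different results; whichever is chosen should be applied to every construct.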

Visualizations

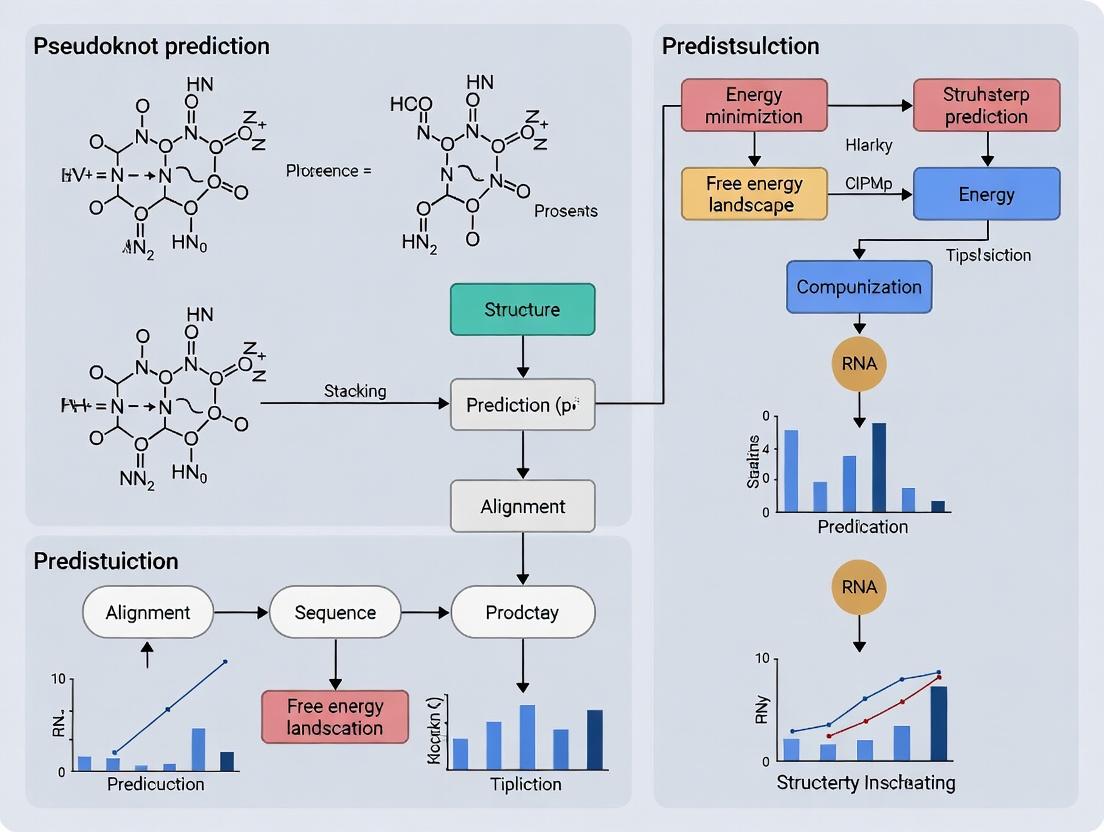

Title: Computational Workflow for Pseudoknot Prediction

Title: Viral -1 Frameshifting Induced by an RNA Pseudoknot

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in Pseudoknot Research | Example/Notes |

|---|---|---|

| T7 RNA Polymerase | High-yield in vitro transcription for generating RNA constructs for probing, assays, and crystallography. | NEB HiScribe Kits; use for isotopic (13C/15N) labeling for NMR. |

| SHAPE Reagent (e.g., NAI) | Chemical probing to identify single-stranded vs. base-paired nucleotides in RNA structure. | Used in SHAPE-MaP for secondary structure modeling constraints. |

| Dual-Luciferase Reporter Vectors (e.g., pDL) | Quantitatively measure -1 programmed ribosomal frameshifting (PRF) efficiency of viral pseudoknots in cells. | Promega pDL-TMV; clone pseudoknot into inter-cistronic region. |

| Molecular Crowding Agents (PEG, Ficoll) | Mimic intracellular crowded environment, which can significantly stabilize pseudoknot folding and function. | Critical for in vitro assays to reflect in vivo frameshifting rates. |

| Mg2+ Chelators (EDTA) & Salts | Modulate divalent cation concentration to probe Mg2+-dependent pseudoknot folding and catalysis. | Titration reveals folding intermediates and stability. |

| Pseudoknot-Specific Prediction Software (IPknot) | Predict pseudoknot-containing secondary structures from sequence with a balance of speed/accuracy. | Uses integer programming; faster than exact algorithms. |

| Restrained MD Force Fields (AMBER) | Perform molecular dynamics simulations with experimental constraints (NMR NOEs, SHAPE data). | Allows study of pseudoknot dynamics and ligand interactions. |

Troubleshooting Guides & FAQs

Q1: My exhaustive search algorithm for predicting pseudoknotted RNA structures fails to complete on sequences longer than 30 nucleotides. What is the fundamental issue and are there workaround strategies?

A: The fundamental issue is that predicting RNA secondary structures with general pseudoknots is formally NP-hard. Assuming P ≠ NP, no algorithm can solve the exact problem in polynomial time for all sequences, and the runtime of known exact algorithms grows exponentially with sequence length.

- Workaround 1 (Approximation): Use heuristic or approximation algorithms (e.g., HotKnots, ILM, TT2NE) that run in polynomial time but do not guarantee the globally optimal structure.

- Workaround 2 (Restricted Search): Use algorithms (e.g., pknotsRE, NUPACK) that predict a specific, computationally tractable subclass of pseudoknots (like simple H-type pseudoknots).

- Workaround 3 (Ensemble Methods): Employ methods that sample from the ensemble of possible structures or use machine learning to guide the search.

Q2: How do I verify that the pseudoknot prediction problem for my specific model (e.g., energy minimization with a given set of loop-based rules) is NP-hard?

A: You must construct a formal polynomial-time reduction from a known NP-complete or NP-hard problem to your specific prediction problem.

- Select a Known Problem: Common choices include 3-SAT, Partition, or the Exact Cover by 3-Sets (X3C) problem.

- Construct the Reduction: Design a method to transform any instance of the known problem (e.g., a Boolean formula) into an RNA sequence and energy parameters for your model. The transformation itself must run in polynomial time.

- Prove Equivalence: Prove that a solution (e.g., a satisfying assignment) for the known problem exists if and only if an RNA structure with specific, efficiently verifiable properties (e.g., energy below a certain threshold, containing specific base pairs) exists for your constructed sequence.

- Cite Foundational Work: Reference the seminal proofs, such as those by Lyngsø & Pedersen (2000) for general pseudoknot prediction or subsequent proofs for more restricted models.

Q3: When I compare two different pseudoknot prediction tools on benchmark datasets, their performance metrics vary widely. What key experimental parameters should I control for a fair assessment?

A: Ensure you standardize the following:

- Dataset: Use the same curated set of RNA sequences with known, validated structures.

- Sequence Length Range: Performance often degrades with length. Compare tools on bins of similar lengths.

- Pseudoknot Type: Some tools only predict specific pseudoknot topologies. Know the capabilities of each tool.

- Energy Parameters: Use identical, updated thermodynamic parameters (e.g., from the Turner group) if the tool allows their specification.

- Computational Resources: Specify CPU time, memory limits, and version numbers for each tool.

Q4: My dynamic programming algorithm for pseudoknot prediction is running out of memory on a high-performance computing cluster. What are the typical space complexity bottlenecks?

A: Standard dynamic programming algorithms for pseudoknot prediction often require O(n^4) to O(n^6) space, where n is the sequence length. The estimates below assume a dense table of 4-byte floats with no symmetry or sparsity savings, so real implementations may need somewhat less, but the scaling is the same: at 200 nucleotides an O(n^4) table already needs several gigabytes, and an O(n^6) table is far beyond the memory of any single machine.

| Sequence Length (n) | O(n^4) Space Estimate (4-byte floats) | O(n^6) Space Estimate (4-byte floats) |

|---|---|---|

| 50 nt | ~25 MB | ~62.5 GB |

| 100 nt | ~400 MB | ~4 TB |

| 200 nt | ~6.4 GB | ~256 TB |
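Estimates of this kind are easy to recompute for any sequence length. The sketch below assumes a fully dense table with one 4-byte float per cell and decimal units (1 GB = 10^9 bytes); the helper names are illustrative and not part of any prediction tool.

```python
def dp_table_bytes(n, exponent, bytes_per_entry=4):
    """Bytes needed for a dense n**exponent table (no symmetry savings assumed)."""
    return (n ** exponent) * bytes_per_entry


def human(num_bytes):
    """Format a byte count using decimal units."""
    for unit in ("B", "KB", "MB", "GB", "TB", "PB"):
        if num_bytes < 1000 or unit == "PB":
            return f"{num_bytes:.3g} {unit}"
        num_bytes /= 1000


for n in (50, 100, 200):
    print(f"{n:>4} nt  O(n^4): {human(dp_table_bytes(n, 4)):>8}"
          f"  O(n^6): {human(dp_table_bytes(n, 6)):>8}")
```

Tables indexed by ordered positions (i < j < k < l) need only a fraction of the dense size, so these numbers are upper bounds for a given exponent.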

Mitigation Strategy: Implement a sparse or beam-search approach that prunes the conformational space, storing only the most promising intermediate structures based on energy. This trades optimality for tractability.

Experimental Protocol: Validating NP-Hardness via Reduction from 3-SAT

Objective: To demonstrate that a specific RNA pseudoknot prediction model is NP-hard by reducing the 3-SAT problem to it.

Materials:

- A 3-SAT Boolean formula instance (e.g., (x1 ∨ ¬x2 ∨ x3) ∧ (¬x1 ∨ x2 ∨ x4)).

- RNA energy parameter set (e.g., nearest-neighbor thermodynamics).

Methodology:

- Clause Gadget Design: For each clause in the 3-SAT formula, design a short RNA sequence segment where a favorable (low energy) local structure is only possible if at least one literal in the clause is satisfied (True).

- Variable Gadget Design: Design sequence segments that correspond to each Boolean variable. These must have two mutually exclusive structural states, one representing True and the other False.

- Coupling Design: Design longer-range sequence complementarity that "couples" the variable gadget states to the clause gadget states, ensuring structural consistency across the entire molecule.

- Construct Full Sequence: Concatenate and link all gadget sequences in a predefined order to form a single RNA sequence S.

- Define Energy Threshold: Calculate an energy threshold E based on the construction, such that a secondary structure for S with free energy ≤ E exists if and only if the original 3-SAT formula is satisfiable.

- Verification: Prove that the transformation (3-SAT formula → RNA sequence S and threshold E) can be done in time polynomial to the size of the formula. Prove the logical equivalence of the solutions.

Key Research Reagent Solutions

| Item | Function in Complexity Analysis / Prediction |

|---|---|

| Nearest-Neighbor Thermodynamic Parameters | Provides the free energy contribution for stacks, loops, and other motifs. Essential for defining the energy minimization objective function. |

| Curated RNA Structure Database (e.g., RNA STRAND) | Provides benchmark datasets of known pseudoknotted and non-pseudoknotted structures for validating prediction algorithms and assessing performance. |

| Polynomial-Time Verifiable Pseudoknot Grammar | A formal grammar (e.g., a carefully restricted stochastic context-free grammar) that defines a tractable subclass of pseudoknots, enabling dynamic programming. |

| Integer Linear Programming (ILP) Solver (e.g., CPLEX, Gurobi) | Used as the core engine in exact but exponential-time algorithms that formulate pseudoknot prediction as an ILP problem. |

| Heuristic Search Framework (e.g., Genetic Algorithm, Monte Carlo) | Provides a metaheuristic framework to develop polynomial-time approximation algorithms when an exact solution is intractable. |

Diagram: Reduction Flow from 3-SAT to Pseudoknot Prediction

Diagram: Algorithm Strategy Decision Tree

Technical Support Center

Troubleshooting Guide: Algorithmic Failure in Pseudoknot Prediction

Q1: During my structure prediction run, the dynamic programming (DP) algorithm terminates or returns an error for sequences suspected of having complex pseudoknots. What is happening?

A1: You are likely encountering the fundamental limitation of traditional DP (like the Nussinov or Zuker algorithms). These algorithms rely on a recursive decomposition that assumes RNA secondary structure is non-crossing. Pseudoknots involve crossing base pairs: a pair (i,j) crosses a pair (k,l) when i < k < j < l, which this decomposition cannot represent.
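Whether a given structure contains crossing base pairs can be tested directly from its pair list, using the standard condition that pairs (i, j) and (k, l) cross when i < k < j < l. The function name and the toy coordinates below are illustrative assumptions.

```python
from itertools import combinations


def crossing_pairs(pairs):
    """Return every combination of base pairs (i, j), (k, l) with i < k < j < l."""
    ordered = sorted((min(a, b), max(a, b)) for a, b in pairs)
    return [(p, q) for p, q in combinations(ordered, 2)
            if p[0] < q[0] < p[1] < q[1]]


# A plain hairpin: all pairs are nested, so nothing crosses.
hairpin = [(1, 10), (2, 9), (3, 8)]
# A toy H-type pseudoknot: stem 2 starts inside the loop of stem 1.
h_type = [(1, 12), (2, 11), (3, 10), (6, 18), (7, 17), (8, 16)]

print(len(crossing_pairs(hairpin)))  # no crossings
print(len(crossing_pairs(h_type)))   # every stem-1 pair crosses every stem-2 pair
```

A non-empty result means the structure lies outside the search space of Nussinov- or Zuker-style recursions, regardless of its energy.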

Q2: How can I confirm that my prediction failure is due to pseudoknots and not a simple bug or memory issue? A2: Follow this diagnostic protocol:

- Run Control Experiments: Execute your DP algorithm on two control sequences:

- A known pseudoknot-free sequence (e.g., tRNA).

- A known pseudoknot-containing sequence (e.g., the Hepatitis Delta Virus ribozyme).

- Analyze Output: The algorithm will correctly predict the first but fail on the second.

- Simplify Input: Test your target sequence with a sliding window of ~50-70 nucleotides. If the algorithm succeeds on shorter segments but fails on the full length, it suggests the presence of long-range, crossing interactions.

Experimental Protocol: Validating Pseudoknot Prediction Failures

- Objective: To empirically demonstrate the failure of traditional DP on pseudoknotted structures.

- Input: RNA sequence (FASTA format).

- Software: Custom or standard DP implementation (e.g., ViennaRNA RNAfold, which has no pseudoknot support).

- Procedure:

- Prepare three sequence files: Control1 (tRNA-Phe), Control2 (HDV ribozyme), Target.

- Execute: RNAfold < input.fasta

- Compare the predicted minimum free energy (MFE) structure with the known reference structure from databases like RCSB PDB or RNA STRAND.

- Calculate the F1-score (harmonic mean of precision and recall) for base pair detection.

- Expected Outcome: High F1-score for Control1, very low (<0.3) for Control2 and similar Target sequences.
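The F1 comparison in this protocol can be computed from two dot-bracket strings. The sketch below assumes the common convention that extra bracket families ([], {}, <>) mark pseudoknotted stems; the function names and the toy structures are illustrative.

```python
def parse_dot_bracket(structure):
    """Convert dot-bracket notation into a set of 1-based (i, j) base pairs.

    Each bracket family gets its own stack so crossing stems can be encoded.
    """
    closers = {")": "(", "]": "[", "}": "{", ">": "<"}
    stacks = {opener: [] for opener in closers.values()}
    pairs = set()
    for pos, char in enumerate(structure, start=1):
        if char in stacks:
            stacks[char].append(pos)
        elif char in closers:
            pairs.add((stacks[closers[char]].pop(), pos))
    return pairs


def base_pair_f1(predicted, reference):
    """Harmonic mean of precision and recall over exact base-pair matches."""
    true_pos = len(predicted & reference)
    if true_pos == 0:
        return 0.0
    precision = true_pos / len(predicted)
    recall = true_pos / len(reference)
    return 2 * precision * recall / (precision + recall)


reference = parse_dot_bracket("(((..[[[..)))...]]]")  # toy H-type pseudoknot
predicted = parse_dot_bracket("(((.......)))......")  # DP output: first stem only
print(round(base_pair_f1(predicted, reference), 3))
```

Here the prediction recovers one of the two stems perfectly, so precision is 1.0 and recall is 0.5. Real pseudoknot-free predictions often score lower because the missing stem also distorts the remaining pairs.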

FAQs

Q: Are there any alternative computational methods that can handle pseudoknots? A: Yes, but they trade off computational efficiency for accuracy. Common approaches include:

- Heuristic Methods: (e.g., HotKnots) which perform stochastic searches.

- Constraint Programming: Specifies logical constraints to find solutions satisfying all base-pairing rules.

- Machine Learning: Deep learning models trained on known structures can predict base-pairing patterns that include pseudoknots.

- Comparative Sequence Analysis: Detects covarying mutations in aligned sequences to infer base pairs (strongest evidence).

Q: What is the practical impact of this DP failure on drug development targeting RNA? A: Many functional RNA targets (e.g., viral frameshift elements, riboswitches, lncRNAs) rely on pseudoknots for their 3D shape and function. A DP-based prediction that misses these knots will generate an incorrect structural model. This misinforms rational drug design, potentially leading to small molecules that fail to bind the true native structure, wasting significant R&D resources.

Data Presentation

Table 1: Performance Comparison of RNA Structure Prediction Methods on Pseudoknotted Sequences

| Method Category | Example Algorithm | Can Handle Pseudoknots? | Time Complexity (Worst-Case) | Average F1-Score on Pseudoknots* |

|---|---|---|---|---|

| Traditional DP | Nussinov Algorithm | No | O(n³) | ~0.15 |

| Extended DP | Rivas & Eddy Algorithm | Yes | O(n⁶) | ~0.75 |

| Heuristic Search | HotKnots | Yes | Varies | ~0.65 |

| Deep Learning | SPOT-RNA | Yes | O(n²) | ~0.80 |

*Scores are approximate aggregates from recent benchmarks (e.g., RNA-Puzzles).

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Pseudoknot Research |

|---|---|

| DMS (Dimethyl Sulfate) | Chemical probing reagent. Methylates unpaired A & C nucleotides. Used to validate single-stranded regions in predicted structures. |

| SHAPE Reagents (e.g., NMIA) | Probe 2'-OH flexibility. Unpaired nucleotides have higher reactivity, providing experimental constraints for folding algorithms. |

| Nuclease P1 / S1 Nuclease | Enzymes that cleave single-stranded RNA. Used in structure mapping to confirm unpaired regions. |

| Psoralen / AMT Crosslinker | Forms covalent crosslinks between base-paired nucleotides upon UV exposure. Can capture long-range interactions and pseudoknots. |

| In-line Probing Buffer | Utilizes spontaneous RNA cleavage at flexible linkages to infer structural constraints over long incubation times. |

Visualizations

Diagram 1: Traditional DP vs. Intertwined Loop Problem

Diagram 2: Pseudoknot Diagnostic Workflow

Diagram 3: From Prediction Failure to Experimental Validation

Troubleshooting Guides & FAQs

Q1: My pseudoknot prediction algorithm is exceeding memory limits and crashing on larger RNA sequences. What is the primary cause and a potential mitigation strategy?

A: The primary cause is the combinatorial explosion of the search space when considering non-nested (crossing) base pairs. For a sequence of length n, the number of possible secondary structures grows exponentially (~1.8^n for nested structures) but becomes super-exponential when allowing pseudoknots. This rapidly exhausts system memory. A core mitigation strategy is to apply restricted grammar models (e.g., Rivas & Eddy style) or heuristic fragment assembly to limit the search space to biologically plausible pseudoknots, rather than enumerating all possibilities.

Q2: During energy minimization for a pseudoknotted structure, my optimization gets stuck in a local minimum. How can I improve the sampling of the conformational landscape?

A: This is a classic symptom of the rugged energy landscape induced by overlapping structures. Consider transitioning from a deterministic free energy minimization (e.g., Zuker) to a stochastic sampling method. Implement a Monte Carlo Simulated Annealing protocol where you probabilistically accept some higher-energy moves early in the simulation to escape local minima, gradually lowering the "temperature" parameter to settle into a deep, hopefully global, minimum.
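A minimal sketch of such an annealing loop is shown below. It is not a folding program: the energy is a toy score of -1 per allowed base pair, the move set only adds or removes one pair, and every name and parameter (cooling schedule, step count, seed) is an assumption chosen for illustration. A real application would swap in a nearest-neighbor energy function.

```python
import math
import random

PAIRABLE = {"AU", "UA", "GC", "CG", "GU", "UG"}


def metropolis_accept(delta_e, temperature, rng):
    """Always accept downhill moves; accept uphill moves with prob exp(-dE/T)."""
    return delta_e <= 0 or rng.random() < math.exp(-delta_e / temperature)


def anneal(energy, propose, state, t_start=2.0, t_end=0.05, steps=5000, seed=0):
    rng = random.Random(seed)
    current_e = energy(state)
    best, best_e = state, current_e
    for step in range(steps):
        # Geometric cooling from t_start down to t_end.
        temperature = t_start * (t_end / t_start) ** (step / (steps - 1))
        candidate = propose(state, rng)
        candidate_e = energy(candidate)
        if metropolis_accept(candidate_e - current_e, temperature, rng):
            state, current_e = candidate, candidate_e
            if current_e < best_e:
                best, best_e = state, current_e
    return best, best_e


def make_toy_problem(seq, min_loop=3):
    """Toy model: crossing pairs allowed, each nucleotide pairs at most once."""
    candidates = [(i, j) for i in range(len(seq))
                  for j in range(i + min_loop + 1, len(seq))
                  if seq[i] + seq[j] in PAIRABLE]

    def energy(pairs):
        return -len(pairs)  # placeholder for a thermodynamic model

    def propose(pairs, rng):
        i, j = rng.choice(candidates)
        if (i, j) in pairs:
            return pairs - {(i, j)}          # remove an existing pair
        used = {x for p in pairs for x in p}
        if i in used or j in used:
            return pairs                     # move not applicable
        return pairs | {(i, j)}              # add a new pair

    return energy, propose


energy, propose = make_toy_problem("GGGGAAAACCCC")
best, best_e = anneal(energy, propose, frozenset())
print(sorted(best), best_e)
```

Running several seeds and comparing the best energies is a cheap check on whether the schedule is long enough: if independent runs disagree widely, the landscape has not been sampled adequately.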

Q3: I am encountering false positive pseudoknot predictions in my comparative analysis. Are there common experimental validation steps to confirm computational predictions?

A: Yes. Computational predictions, especially from ab initio methods, require experimental validation. A standard protocol is Selective 2'-Hydroxyl Acylation analyzed by Primer Extension (SHAPE). SHAPE reagents modify flexible (unpaired) nucleotides, and the modification pattern can be used to constrain computational folding. A significant discrepancy between the SHAPE-informed model and the pseudoknotted prediction suggests a potential false positive.

Q4: My dynamic programming algorithm's runtime becomes prohibitive (beyond O(n^4)) for sequences >200 nucleotides. What are the current efficient algorithmic frameworks?

A: The O(n^4) to O(n^6) complexity of exact pseudoknotted DP is the central bottleneck. Current efficient frameworks include:

- Integer Linear Programming (ILP): Formulates prediction as an optimization problem solvable by off-the-shelf solvers.

- Constraint Satisfaction Programming (CSP): Uses known structural constraints (e.g., from experiments) to drastically prune the search space.

- Machine Learning (ML) pre-filtering: Uses deep learning models (e.g., SPOT-RNA, UFold) to predict probable base pairing patterns, which are then refined by traditional physics-based methods.

Experimental Protocol: SHAPE-MaP for Pseudoknot Validation

Objective: To experimentally probe RNA secondary structure, including pseudoknots, using SHAPE with Mutational Profiling (MaP) for high-throughput validation.

Methodology:

- RNA Purification: Purify in vitro transcribed or native RNA (>5 pmol).

- Folding: Refold RNA in appropriate buffer (e.g., 50 mM HEPES pH 8.0, 100 mM KCl, 5 mM MgCl2) by heating to 95°C for 2 min, cooling on ice for 2 min, and incubating at 37°C for 20 min.

- SHAPE Modification: Add 1-10 mM NMIA or 1M7 reagent to the folded RNA. Incubate at 37°C for 5-6 half-lives. Include a no-reagent control (DMSO only).

- Reverse Transcription (MaP Step): Use a reverse transcriptase with high processivity and low fidelity (e.g., SuperScript II) to read through SHAPE adducts, incorporating non-templated mutations at modification sites.

- Library Preparation & Sequencing: Amplify cDNA by PCR with unique dual indexes. Purify and sequence on an Illumina platform (minimum 50,000 reads per sample).

- Data Analysis: Map mutations to the reference sequence. Calculate per-nucleotide mutation rates. Normalize rates (control subtracted). Use normalized SHAPE reactivity (low for paired, high for unpaired) as soft constraints in a folding algorithm (e.g., RNAstructure ShapeKnots).
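The control subtraction and normalization in the data analysis step might look like the sketch below. It assumes a simple 2%/8% scheme (drop the top 2% of values as outliers, then scale by the mean of the next 8%) and clips negative values to zero; dedicated pipelines may use a different normalization, and the function name and input rates are hypothetical.

```python
def normalize_reactivities(modified_rates, control_rates):
    """Background-subtract mutation rates, then scale reactive positions to ~1.0."""
    raw = [max(m - c, 0.0) for m, c in zip(modified_rates, control_rates)]
    ranked = sorted(raw, reverse=True)
    n_outliers = len(ranked) * 2 // 100            # top 2% treated as outliers
    n_average = max(len(ranked) * 8 // 100, 1)     # next 8% define the scale
    window = ranked[n_outliers:n_outliers + n_average]
    scale = sum(window) / len(window)
    if scale == 0:
        raise ValueError("no signal above background")
    return [r / scale for r in raw]


# Hypothetical per-nucleotide mutation rates for a 10-nt toy RNA.
modified = [0.002, 0.010, 0.030, 0.004, 0.001, 0.025, 0.003, 0.002, 0.015, 0.001]
control = [0.001, 0.002, 0.002, 0.001, 0.001, 0.003, 0.001, 0.001, 0.002, 0.002]
print([round(r, 2) for r in normalize_reactivities(modified, control)])
```

On this scale, values near or above 1 indicate flexible, likely unpaired nucleotides, and values near 0 indicate constrained, likely paired ones.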

Table 1: Algorithmic Complexity for RNA Secondary Structure Prediction

| Prediction Model | Time Complexity | Space Complexity | Handles Pseudoknots? |

|---|---|---|---|

| Nussinov (Max Pairs) | O(n^3) | O(n^2) | No |

| Zuker (MFE) | O(n^3) | O(n^2) | No |

| R&E (PK) Grammar | O(n^6) | O(n^4) | Yes (Restricted) |

| ILP Formulation | Exponential (Worst-case) | Exponential (Worst-case) | Yes (General) |

| ML-Based (Inference) | O(n^2) | O(n^2) | Yes |

Table 2: Key Experimental Techniques for Structure Validation

| Technique | Principle | Throughput | Pseudoknot Resolution | Key Limitation |

|---|---|---|---|---|

| SHAPE-MaP | Chemical probing of backbone flexibility | High | Indirect (via constraints) | In vivo conditions variable |

| Cryo-EM | Single-particle imaging | Medium | High (near-atomic) | Requires sample homogeneity |

| X-ray Crystallography | Crystal diffraction | Low | High (Atomic) | Difficult crystallization |

| DMS-MaP | Chemical probing of base accessibility | High | Indirect | Specific to A/C bases |

Visualization: Pseudoknot Prediction Workflow

Title: Computational PK Prediction & Validation Pipeline

Title: SHAPE-MaP Principle for PK Detection

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Pseudoknot Research

| Reagent / Material | Function / Application | Key Consideration |

|---|---|---|

| 1M7 (1-methyl-7-nitroisatoic anhydride) | SHAPE chemical probe. Modifies the 2'-OH of flexible riboses to interrogate RNA backbone dynamics. | Short half-life (~1 min). Must be prepared fresh in anhydrous DMSO. |

| NMIA (N-methylisatoic anhydride) | Slower-reacting SHAPE probe. Useful for kinetics studies or longer reaction times. | Longer half-life (~15 min). More stable stock solution than 1M7. |

| SuperScript II Reverse Transcriptase | High-processivity RT for SHAPE-MaP. Low fidelity promotes mutation at modification sites. | Critical for the Mutational Profiling (MaP) readout. Do not use high-fidelity enzymes. |

| DMS (Dimethyl Sulfate) | Chemical probe for base-pairing status (A, C). Methylates accessible Watson-Crick faces. | Toxic and volatile. Use in a fume hood. Specific for A(N1) and C(N3). |

| In vitro Transcription Kit (T7) | High-yield RNA synthesis for structural studies of designed or viral RNA sequences. | Ensure co-transcriptional folding or include a rigorous refolding step. |

| MgCl₂ (100mM Stock) | Divalent cation crucial for RNA tertiary folding and pseudoknot stabilization. | Concentration is critical (typically 5-20 mM in folding buffer). Titrate for optimal structure. |

| RNase Inhibitor (e.g., RNasin) | Protects RNA from degradation during purification, folding, and modification steps. | Essential for working with long or low-abundance native RNA. |

Troubleshooting Guide & FAQs

Q1: Why does my pseudoknot prediction tool fail or time out on long RNA sequences (>10,000 nt)? A: This is a direct consequence of how runtime and memory scale with sequence length. Most dynamic programming-based methods (e.g., NUPACK, pknots) scale as O(L^3) to O(L^6), where L is the sequence length. For very long sequences, memory and time requirements become prohibitive.

- Solution: Apply a sliding window approach. Break the long sequence into overlapping windows (e.g., 500-800 nt windows with 100-nt overlap). Run prediction on each window and then stitch results, checking for consistency in overlap regions. Alternatively, use heuristic or machine learning-based tools (e.g., IPknot, Knotty) designed for longer sequences.
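The windowing step can be sketched as follows. The function name and default sizes are illustrative; the window and overlap values should be chosen so that the longest expected pseudoknot fits inside a single window.

```python
def sliding_windows(seq, window=600, overlap=100):
    """Split seq into overlapping windows; returns (start, end, subsequence) tuples.

    Coordinates are 0-based and half-open, so seq[start:end] is the window.
    """
    if not 0 <= overlap < window:
        raise ValueError("overlap must be non-negative and smaller than window")
    step = window - overlap
    windows = []
    start = 0
    while True:
        end = min(start + window, len(seq))
        windows.append((start, end, seq[start:end]))
        if end == len(seq):
            break
        start += step
    return windows


genome = "ACGU" * 375  # 1,500-nt placeholder sequence
for start, end, sub in sliding_windows(genome):
    print(start, end, len(sub))
```

Keeping the start offset with each window makes it straightforward to shift predicted base-pair indices back into genome coordinates before comparing the overlap regions.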

Q2: What does "pseudoknot order" mean, and why does my tool only predict simple H-type pseudoknots? A: Pseudoknot order (k) defines the number of nested levels of interleaved base pairs. An H-type is order-1. Higher-order (k>1) pseudoknots have more complex, deeply nested interactions. Many classic algorithms are limited to order-1 or order-2 due to computational intractability.

- Solution: First, verify if your biological system is suspected to contain higher-order knots (e.g., in viral frameshift elements or ribozymes). If so, you must select a tool explicitly capable of predicting them, such as HotKnots (heuristic search) or TurboKnot (using iterative sampling). Be aware that runtime will increase significantly with the allowed maximum order.

Q3: My predicted structure is biophysically impossible, violating basic topological constraints. How is this possible? A: Some computational models prioritize thermodynamic stability or score optimization over physical plausibility. They may predict "overlapped" base pairs or knots that cannot form in 3D space without chain breakage.

- Solution: Post-process your results with a topology checker. Use a tool like RNApdbee or a custom script to ensure the predicted structure is planar (can be drawn in 2D without crossing lines/edges). Integrate this validation as a mandatory step in your workflow.
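One way to operationalize such a check in a custom script is sketched below. It tests two things: that no nucleotide takes part in more than one base pair, and that the arcs can be split into two non-crossing sets, one drawn above the backbone and one below (a bi-secondary structure). Treating this two-page test as the planarity criterion is an assumption made here; it is a filter, not a proof that a rejected structure cannot fold in 3D. All names are illustrative.

```python
from collections import Counter, deque


def multiply_paired_positions(pairs):
    """Positions that appear in more than one base pair."""
    counts = Counter(pos for pair in pairs for pos in pair)
    return sorted(pos for pos, count in counts.items() if count > 1)


def crosses(p, q):
    (i, j), (k, l) = sorted([p, q])
    return i < k < j < l


def is_bisecondary(pairs):
    """True if arcs can be 2-colored so that no two same-colored arcs cross."""
    pairs = [tuple(sorted(p)) for p in pairs]
    side = {}
    for start in pairs:
        if start in side:
            continue
        side[start] = 0
        queue = deque([start])
        while queue:  # breadth-first 2-coloring of the crossing (conflict) graph
            p = queue.popleft()
            for q in pairs:
                if q != p and crosses(p, q):
                    if q not in side:
                        side[q] = 1 - side[p]
                        queue.append(q)
                    elif side[q] == side[p]:
                        return False
    return True


h_type = [(1, 12), (2, 11), (3, 10), (6, 18), (7, 17), (8, 16)]
triple = [(1, 4), (2, 5), (3, 6)]  # three mutually crossing arcs
print(is_bisecondary(h_type), is_bisecondary(triple))
print(multiply_paired_positions([(1, 10), (1, 8)]))
```

The pairwise crossing scan is quadratic in the number of base pairs, which is acceptable for window-sized structures.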

Q4: How do I choose the right tool given my sequence length and suspected pseudoknot complexity? A: Use the following decision table based on key complexity parameters:

| Tool Name | Recommended Max Sequence Length | Max Pseudoknot Order Handled | Key Algorithmic Approach | Best Use Case |

|---|---|---|---|---|

| NUPACK | ~ 1,000 nt | 1 (H-type) | Dynamic Programming | Short sequences, thermodynamic analysis |

| IPknot | ~ 3,000 nt | 2 | Integer Programming | Medium-length genomic RNA |

| HotKnots | ~ 500 nt | >2 | Heuristic Search | Exploration of complex, high-order knots |

| Knotty | ~ 10,000 nt | 1 | Energy Minimization | Very long sequences (e.g., whole viroids) |

| TurboKnot/PKiss | ~ 300 nt | 2 | Dynamic Programming | Detailed analysis of known pseudoknot motifs |

Q5: Can I predict pseudoknots for a large batch of sequences from a viral genome? What is a robust protocol? A: Yes, but you need a pipeline that balances accuracy and speed.

Experimental Protocol: Batch Prediction for Genomic Screens

- Input Preparation: Use seqkit split or a custom Python script to divide the genome into functional domains or fixed-size windows (e.g., 600nt). Save as separate FASTA files.

- Tool Selection & Execution: For a balanced screen, use IPknot for its speed and reasonable accuracy. Run via command line: ipknot -r input.fa > output.ct.

- Topological Validation: Parse the output CT or BPSEQ file into a Python script using the NetworkX library. Check if the graph of base pairs is non-planar. Filter out predictions that fail.

- Energy Refinement (Optional): For top candidates, feed the filtered structures into a refined tool like HotKnots or NUPACK (in pseudoknot mode) for more precise free energy calculation.

- Visualization & Output: Use forna or VARNA to visualize the final predicted pseudoknotted structures.
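For the parsing step of this protocol, a CT file can be read with a few lines of plain Python. The sketch assumes the standard connect-table layout (a header line starting with the sequence length, then one line per nucleotide whose fifth column is the pairing partner, 0 if unpaired); the function name and the toy record are illustrative.

```python
def parse_ct(text):
    """Return the set of 1-based (i, j) base pairs from a single-structure CT record."""
    lines = [ln.split() for ln in text.strip().splitlines() if ln.strip()]
    length = int(lines[0][0])
    pairs = set()
    for fields in lines[1:length + 1]:
        i, partner = int(fields[0]), int(fields[4])
        if partner > i:  # record each pair once, from its 5' side
            pairs.add((i, partner))
    return pairs


toy_ct = """\
5 toy_record
1 G 0 2 5 1
2 A 1 3 0 2
3 A 2 4 0 3
4 A 3 5 0 4
5 C 4 0 1 5
"""
print(parse_ct(toy_ct))
```

The resulting pair set can be passed to whatever topology filter the pipeline uses and then written back out for visualization.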

Research Reagent & Computational Toolkit

| Item | Function/Description |

|---|---|

| NUPACK Web Server / CLI | Core tool for thermodynamic analysis and secondary structure prediction, including basic pseudoknots. |

| IPknot Software Package | Fast, integer-programming-based predictor essential for screening medium-length sequences. |

| ViennaRNA Package | Provides RNAfold (pseudoknot-free prediction only) but essential for benchmarking and basic folding parameters. |

| HotKnots Executable | Heuristic search tool crucial for exploring the possibility of higher-order pseudoknots. |

| Graphviz & PyGraphviz | Libraries for programmatically creating and checking the planarity of predicted structure graphs. |

| RNApdbee Web Service | Validates structural topology and converts between file formats (CT, BPSEQ, DOT). |

| Custom Python Scripts | For batch processing, data wrangling, and implementing sliding window or validation logic. |

| High-Performance Computing (HPC) Cluster Access | Mandatory for running parameter sweeps or processing large genomic datasets. |

Workflow & Pathway Diagrams

Navigating the Intractable: Modern Algorithmic Strategies for Pseudoknot Structure Prediction

Troubleshooting Guide & FAQs

Q1: My IPknot prediction run fails with a "memory allocation error" on a long RNA sequence (>5000 nt). How can I resolve this?

A: IPknot uses integer programming, which has high memory complexity for long sequences. Use the --max-span and --max-bp-span parameters to restrict the distance between paired bases, significantly reducing the search space and memory footprint. Alternatively, split the sequence into overlapping windows (e.g., 1000-nt windows with 200-nt overlap) and run predictions on each segment.

Q2: HotKnots v2.0 returns different pseudoknot structures for the same sequence on repeated runs. Is this a bug?

A: No. HotKnots uses stochastic sampling (a heuristic method) to explore the folding landscape. Variability indicates the presence of multiple near-optimal structures. Use the -m flag to increase the number of stochastic runs (e.g., -m 100 instead of the default 50) for more consistent results. Examine the ensemble of output structures to identify recurrent base pairs.
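Recurrent base pairs across repeated runs can be tallied with a short script. The sketch assumes each run's output has already been parsed into a collection of (i, j) pairs; the function name, the 50% threshold, and the toy runs are illustrative.

```python
from collections import Counter


def recurrent_pairs(structures, min_fraction=0.5):
    """Map each base pair to the fraction of runs containing it, keeping frequent ones."""
    counts = Counter(pair for pairs in structures for pair in set(pairs))
    total = len(structures)
    return {pair: count / total
            for pair, count in counts.items()
            if count >= min_fraction * total}


# Three hypothetical runs on the same sequence.
runs = [
    {(1, 10), (2, 9)},
    {(1, 10), (3, 8)},
    {(1, 10), (2, 9)},
]
for pair, freq in sorted(recurrent_pairs(runs).items()):
    print(pair, round(freq, 2))
```

Pairs present in nearly every run are the most robust features of the ensemble; pairs that appear only occasionally are better treated as alternatives to test experimentally.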

Q3: When using a kinetic folding simulator (e.g., Kinefold, Tornado) for trajectory analysis, how do I distinguish biologically relevant conformations from transient folding intermediates?

A: Cluster your simulation trajectories based on structural similarity (e.g., using RNAdistance or a custom RMSD metric for stem positions). Relevant conformations are typically those with high occupancy (populated for a significant fraction of simulation time) and low free energy. Plot population vs. time to identify metastable states.
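As a rough sketch of the occupancy criterion, the fraction of simulated time spent in each structure can be computed from a time-ordered list of snapshots. The input format here, a list of `(time, structure)` tuples, is a hypothetical simplification and not the native output of Kinefold or any other simulator.

```python
from collections import defaultdict

def occupancy(snapshots, t_end):
    """Fraction of simulated time spent in each structure.

    snapshots: time-ordered list of (time, structure) tuples; each
    structure is assumed to persist until the next snapshot.
    t_end: time at which the trajectory stops.
    """
    dwell = defaultdict(float)
    following = snapshots[1:] + [(t_end, None)]
    for (t, structure), (t_next, _) in zip(snapshots, following):
        dwell[structure] += t_next - t
    total = t_end - snapshots[0][0]
    return {s: d / total for s, d in dwell.items()}
```

Structures (or cluster labels, if snapshots are clustered first) with high occupancy are candidates for metastable states.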

Q4: How can I incorporate chemical probing data (SHAPE, DMS) as soft constraints in IPknot or similar predictors?

A: Most modern tools support experimental constraints. For IPknot, use the --shape option followed by a file containing reactivity values (one per nucleotide). Reactivities are converted into pseudo-energy terms, biasing the model towards or away from pairing at specific positions. Ensure your reactivity data is properly normalized (e.g., between 0 and 1).

Q5: I am comparing IPknot and HotKnots predictions. They disagree sharply on a viral frameshift element. Which result is more reliable?

A: First, check if either prediction is consistent with available mutagenesis or phylogenetic data. If experimental data is absent, run both tools with multiple parameter sets. Use HotKnots' -P option to try different energy parameters (e.g., Andronescu2007, Turner2004). For IPknot, vary the --level parameter (e.g., 2 for simple H-type pseudoknots, 3 for complex knots). The structure predicted by both methods under robust parameters is more credible.

Table 1: Comparison of Pseudoknot Prediction Tools

| Feature / Metric | IPknot | HotKnots v2.0 | Kinetic Folding (Kinefold) |

|---|---|---|---|

| Core Method | Integer Programming | Heuristic Stochastic Search | Kinetic Monte Carlo Simulation |

| Time Complexity | O(L³) to O(L⁴) (L = seq length) | O(L³) typical | Highly variable; depends on trajectory length |

| Pseudoknot Model | Hierarchical (level-k) | Explicit, via energy models | Explicit, via base pair formation/breakage rates |

| Typical Use Case | Accurate MFE structure for short/medium RNAs | Exploring suboptimal folding landscapes | Folding pathways, co-transcriptional folding, kinetics |

| Handles Long RNA | Limited by memory (>5kb challenging) | More scalable | Computationally intensive for >500nt |

| Input Constraints | Yes (SHAPE, DMS) | Limited | Yes (co-transcriptional rules, ligands) |

| Key Strength | Guarantees optimal solution within its model | Finds complex pseudoknots missed by others | Provides temporal dynamics, not just final structure |

Table 2: Benchmark Performance on Pseudoknotted RNAs (Example Datasets)

| Tool | Sensitivity (SN) | Positive Predictive Value (PPV) | F1-Score | Avg. Run Time (s, 100nt) |

|---|---|---|---|---|

| IPknot | 0.78 | 0.84 | 0.81 | 45 |

| HotKnots | 0.72 | 0.79 | 0.75 | 120 |

Note: Values are illustrative from literature; actual benchmarks vary by dataset and parameters.

Experimental Protocols

Protocol 1: Standard Pseudoknot Prediction Workflow with IPknot

- Input Preparation: Format your RNA sequence in a plain text file (e.g., `seq.fa` in FASTA format).

- Parameter Selection: Choose the pseudoknot complexity level. For most biological pseudoknots, level=2 is sufficient: `ipknot seq.fa --level 2`.

- Run with Constraints (Optional): Prepare a SHAPE reactivity file (one value per line). Run: `ipknot seq.fa --level 2 --shape shape.dat`.

- Output Analysis: The primary output is a dot-bracket notation string. Visualize using tools like `VARNA` or `forna`.

Protocol 2: Exploring Structural Ensembles with HotKnots

- Base Run: Execute HotKnots with default stochastic samples: `HotKnots -s SEQ -m 50`.

- Ensemble Generation: Increase sampling for robustness: `HotKnots -s SEQ -m 200 -P Andronescu2007`.

- Comparative Analysis: The tool outputs multiple candidate structures. Extract all predicted base pairs and calculate their frequency across the 200 runs. High-frequency pairs are considered robust predictions.

- Energy Refinement: For each unique output structure, compute its free energy using `RNAeval` (from ViennaRNA) to rank candidates.
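The comparative analysis step can be sketched as below, assuming the candidate structures have already been collected as extended dot-bracket strings in which `()`, `[]`, `{}` and `<>` denote separate, possibly crossing, sets of pairs. The function names are illustrative and do not correspond to any HotKnots utility.

```python
from collections import Counter

BRACKETS = {"(": ")", "[": "]", "{": "}", "<": ">"}

def parse_pairs(dot_bracket):
    """Return the set of 1-based (i, j) base pairs in an extended dot-bracket string."""
    opener_of = {close: open_ for open_, close in BRACKETS.items()}
    stacks = {open_: [] for open_ in BRACKETS}  # one stack per bracket type
    pairs = set()
    for pos, ch in enumerate(dot_bracket, start=1):
        if ch in stacks:
            stacks[ch].append(pos)
        elif ch in opener_of:
            pairs.add((stacks[opener_of[ch]].pop(), pos))
    return pairs

def pair_frequencies(structures):
    """Fraction of structures in which each base pair occurs."""
    counts = Counter(p for s in structures for p in parse_pairs(s))
    return {pair: n / len(structures) for pair, n in counts.items()}
```

Pairs with a frequency near 1.0 recur across the ensemble; the cutoff for calling a pair robust is a judgment call that depends on the number of runs.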

Protocol 3: Simulating Folding Kinetics with the Kinefold Web Server

- Input Specification: Enter sequence. Set temperature and ionic conditions.

- Kinetic Parameters: Define transcription speed (nt/sec) if simulating co-transcriptional folding. Set the maximum simulation time (e.g., 10 seconds of simulated time).

- Launch Simulations: Initiate multiple stochastic trajectories (e.g., 100).

- Trajectory Analysis: Download the trajectory data. Analyze using custom scripts to plot the formation time of key base pairs or to cluster structures over time to identify folding intermediates.

Visualizations

HotKnots Heuristic Folding Flow

IPknot Hierarchical Prediction

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Pseudoknot Research |

|---|---|

| SHAPE Reagents (e.g., NAI, NMIA) | Chemically probe RNA backbone flexibility. Unpaired nucleotides show higher reactivity, providing experimental constraints for structure prediction. |

| DMS (Dimethyl Sulfate) | Methylates adenosine (A) and cytidine (C) at the N1 and N3 positions, respectively, when they are not base-paired. Used for nucleotide-resolution pairing data. |

| In-line Probing Buffer | Provides conditions for spontaneous RNA backbone cleavage, revealing unconstrained regions over time, useful for validating structural models. |

| RNA Structure Refolding Buffer (e.g., with Mg²⁺) | Standardized ionic conditions (e.g., 10mM Tris, 100mM KCl, 10mM MgCl₂, pH 7.5) for ensuring consistent RNA folding in vitro prior to probing or analysis. |

| Thermostable Polymerases (for long RNA synthesis) | Essential for in vitro transcription of long (>500 nt) RNA constructs without truncation, required for studying large pseudoknotted domains. |

| Computational Cluster Access | Heuristic and kinetic simulations are computationally intensive. High-performance computing (HPC) resources are necessary for production-scale analysis. |

Technical Support Center: Troubleshooting Neural Network Models for RNA Pseudoknot Prediction

Thesis Context: This support center is designed within the thesis research framework: Addressing computational complexity in pseudoknot prediction research through end-to-end deep learning architectures. The guidance below addresses practical implementation challenges.

FAQs and Troubleshooting Guides

Q1: My model’s validation loss plateaus early while training loss continues to decrease. What are the primary debugging steps?

A1: This indicates overfitting, a critical issue given the limited size of many curated RNA structure datasets.

- Step 1: Implement or increase the intensity of Dropout layers (rates of 0.3-0.5 are common for RNA sequence inputs) and add L2 weight regularization (lambda=1e-4) to the dense layers.

- Step 2: Verify your data split. For pseudoknot-inclusive datasets like PseudoBase++, use homology reduction to ensure no similar sequences are in both training and validation sets, preventing data leakage.

- Step 3: Augment your training data with synthetic variations (e.g., slight nucleotide shuffling in non-conserved regions) if permitted by your biological question.

Q2: During inference, my model fails to predict any pseudoknots, only producing simple stem-loops. How can I diagnose this?

A2: This suggests the model has not learned the long-range dependencies required for pseudoknots.

- Check Architecture: Ensure you are using an architecture capable of capturing long-range context, such as a Bidirectional LSTM or, more effectively, a Transformer encoder with self-attention. Increase the model's receptive field.

- Analyze Training Labels: Inspect your ground truth data. If pseudoknotted pairs are a small minority (<5%) of all base pairs, your loss function may be dominated by non-pseudoknot classes. Use a weighted cross-entropy loss to assign higher weight to the rarer pseudoknotted pair classes.

- Visualize Attention: If using a Transformer, extract and visualize the attention maps for a known pseudoknot sequence. Check if the attention heads are connecting the crossing stem regions.

Q3: The training process is extremely slow even on a GPU. What optimizations can I apply?

A3: Computational complexity is the core challenge this thesis addresses. Optimize as follows:

- Preprocessing: One-hot encode sequences and save as `.npy` files for rapid disk loading. Use a `tf.data.Dataset` or `torch DataLoader` with prefetching.

- Model Pruning: Profile your model's layers. Consider reducing the number of parameters in fully connected heads or using depthwise separable convolutions for initial feature extraction.

- Pre-training: Utilize a pre-trained language model (like RNA-BERT) for initial sequence embeddings, then fine-tune on your specific structure prediction task, which often converges faster than training from scratch.

Q4: How do I evaluate the prediction accuracy for pseudoknots specifically, not just overall structure?

A4: Standard metrics like F1-score for all base pairs can be misleading. Implement a stratified evaluation.

- Separate true base pairs into two classes: pseudoknotted (PK) and non-pseudoknotted (non-PK).

- Calculate sensitivity (STY) and positive predictive value (PPV) for the PK class independently.

- Report the F1-score for the PK class as your key metric for pseudoknot prediction success.
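A minimal sketch of this stratified evaluation follows. It assumes one particular definition of the PK class: a base pair is pseudoknotted if it crosses at least one other pair in the same structure, which labels both stems of a pseudoknot as PK. Other definitions exist (for example, labeling only the smallest set of pairs whose removal leaves a nested structure), so treat the labeling rule as an assumption.

```python
def crossing_pairs(pairs):
    """Return the pairs that cross at least one other pair (i < k < j < l)."""
    ordered = sorted(pairs)
    pk = set()
    for a, (i, j) in enumerate(ordered):
        for k, l in ordered[a + 1:]:
            if i < k < j < l:
                pk.update({(i, j), (k, l)})
    return pk

def pk_scores(true_pairs, pred_pairs):
    """Sensitivity, PPV and F1 restricted to the pseudoknotted class."""
    true_pk = crossing_pairs(true_pairs)
    pred_pk = crossing_pairs(pred_pairs)
    tp = len(true_pk & pred_pk)
    sens = tp / len(true_pk) if true_pk else 0.0
    ppv = tp / len(pred_pk) if pred_pk else 0.0
    f1 = 2 * sens * ppv / (sens + ppv) if sens + ppv else 0.0
    return sens, ppv, f1
```

The pairwise comparison is quadratic in the number of base pairs, which is acceptable for the sequence lengths typical of curated pseudoknot datasets.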

Table 1: Comparative Performance of End-to-End Models on Pseudoknot Prediction (Summary from Recent Literature)

| Model Architecture | Dataset(s) Used | Overall F1-Score | Pseudoknot-Specific F1-Score | Key Advantage |

|---|---|---|---|---|

| UDLR-RNN | PseudoBase++ | 0.67 | 0.71 | Specialized topological order for pseudoknots. |

| Bidirectional LSTM + Attention | RNAStralign, PseudoBase++ | 0.74 | 0.68 | Captures long-range dependencies effectively. |

| Transformer Encoder | RNAStralign | 0.79 | 0.65 | Superior parallelization and context capture. |

| ResNet (2D-CNN) on Pairing Matrix | PseudoBase++ | 0.72 | 0.62 | Learns local interaction patterns well. |

Table 2: Key Hyperparameters and Their Impact on Model Performance

| Hyperparameter | Typical Range | Impact on Training & Outcome |

|---|---|---|

| Learning Rate | 1e-4 to 1e-2 | Lower rates (1e-4) with Adam optimizer often lead to more stable convergence for complex RNA tasks. |

| Batch Size | 32 to 128 | Smaller sizes (32) can improve generalization but increase training time. Larger sizes speed up training but may harm convergence. |

| Embedding Dimension | 64 to 512 | Higher dimensions (256+) capture more complex features but increase computational load and overfitting risk. |

| Attention Heads (Transformer) | 4 to 12 | More heads allow the model to focus on different dependency types simultaneously. 8 is a common starting point. |

Experimental Protocol: Training an End-to-End Transformer for Pseudoknot Prediction

Objective: Train a model to predict a base-pairing probability matrix directly from a one-hot encoded RNA sequence.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Data Preparation:

- Source sequences and structures from PseudoBase++ and RNAStralign.

- Exclude sequences longer than 500 nucleotides to manage GPU memory.

- Perform a 70/15/15 split (train/validation/test) using CD-HIT at 80% sequence identity to ensure no redundancy across sets.

- Encode sequences as one-hot matrices (4 channels: A, C, G, U). Pad to the maximum length in the batch.

- Encode structures as 2D binary matrices where `(i, j) = 1` indicates a canonical (Watson-Crick or G-U) base pair.
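The two encoding steps above might look like the following in plain Python, before conversion to framework tensors. The function names, the 0-based indexing, and the choice to leave unknown bases as all-zero rows are assumptions of this sketch.

```python
ALPHABET = "ACGU"
CANONICAL = {("A", "U"), ("U", "A"), ("G", "C"), ("C", "G"), ("G", "U"), ("U", "G")}

def one_hot(seq, length=None):
    """Return a length x 4 matrix (channels A, C, G, U).

    Rows beyond the sequence end (padding) and unknown bases stay all-zero.
    `length` must be at least len(seq).
    """
    length = length or len(seq)
    matrix = [[0] * 4 for _ in range(length)]
    for i, base in enumerate(seq.upper()):
        if base in ALPHABET:
            matrix[i][ALPHABET.index(base)] = 1
    return matrix

def pair_matrix(seq, pairs, length=None):
    """Symmetric binary matrix with 1 at (i, j) for each canonical pair (0-based)."""
    length = length or len(seq)
    matrix = [[0] * length for _ in range(length)]
    for i, j in pairs:
        if (seq[i].upper(), seq[j].upper()) in CANONICAL:
            matrix[i][j] = matrix[j][i] = 1
    return matrix
```

Passing the batch maximum as `length` gives the padded shapes described in the protocol. Non-canonical pairs in the source annotation are silently dropped here, matching the canonical-only target definition.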

Model Architecture (Transformer-Based):

- Input Layer: Accepts padded one-hot matrix.

- Embedding: A trainable linear layer projects the one-hot vectors into a 256-dimensional space. Add sinusoidal positional encoding.

- Encoder Stack: 6 Transformer encoder layers, each with 8 attention heads, a feed-forward dimension of 1024, and a dropout rate of 0.1.

- Output Head: A 2D convolutional layer followed by a sigmoid activation to produce an n x n probability matrix, where each value represents the predicted probability of a base pair.

Training:

- Loss Function: Use a weighted binary cross-entropy loss. Assign a weight of 8.0 to the positive (paired) class and 1.0 to the negative class to counter imbalance.

- Optimizer: Adam optimizer with a learning rate of 0.0001, β1=0.9, β2=0.98.

- Procedure: Train for 200 epochs with early stopping if validation loss does not improve for 20 epochs. Use a batch size of 32.
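To make the class weighting concrete, here is a framework-free sketch of the weighted binary cross-entropy with the 8.0/1.0 weights from the protocol. It operates on flat lists of probabilities and labels; in practice a framework loss would be used instead (for instance, PyTorch's `BCEWithLogitsLoss` accepts a `pos_weight` argument, though it expects logits rather than sigmoid outputs).

```python
import math

def weighted_bce(probs, labels, pos_weight=8.0, neg_weight=1.0, eps=1e-7):
    """Mean weighted binary cross-entropy over flat lists.

    probs: predicted pairing probabilities in [0, 1].
    labels: 0/1 ground-truth values of the same length.
    """
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
        total -= pos_weight * y * math.log(p) + neg_weight * (1 - y) * math.log(1 - p)
    return total / len(probs)
```

With these weights, a missed base pair costs eight times as much as a false pair predicted with the same confidence, which pushes the model away from the trivial all-unpaired solution.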

Post-processing & Evaluation:

- Apply a threshold of 0.5 to the probability matrix to obtain a binary prediction.

- Use the F1-score for the pseudoknot class (see FAQ Q4) as the primary evaluation metric on the held-out test set.

Visualizations

Diagram 1: End-to-End Pseudoknot Prediction Workflow

Diagram 2: Transformer Encoder Architecture for RNA

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for End-to-End RNA Structure Prediction Experiments

| Item | Function/Description | Example/Provider |

|---|---|---|

| Curated RNA Dataset | Provides sequences with known secondary structures, including pseudoknots. Essential for training and benchmarking. | PseudoBase++, RNAStralign, ArchiveII |

| Deep Learning Framework | Software library for building, training, and deploying neural networks. | PyTorch, TensorFlow/Keras |

| GPU Compute Resource | Accelerates model training by performing parallel matrix operations. Critical for transformer models. | NVIDIA V100/A100, Google Colab Pro, AWS EC2 P3 instances |

| Sequence Homology Tool | Ensures non-redundant data splits to prevent overestimation of model performance. | CD-HIT, MMseqs2 |

| Structured Evaluation Scripts | Code to calculate stratified performance metrics (e.g., PK-class F1) beyond standard accuracy. | Custom Python scripts using sklearn.metrics |

| Pre-trained Language Model | Provides transfer learning for RNA sequences, potentially improving convergence and accuracy. | RNA-BERT, DNABERT (adapted for RNA) |

Constraint Programming and Integer Linear Programming (ILP) Formulations

Troubleshooting Guides & FAQs

FAQ 1: Why does my ILP model for pseudoknot prediction fail to solve or take an excessively long time?

Answer: This is often due to the model's size or formulation. Pseudoknot prediction with ILP can lead to a huge number of binary variables (e.g., one for each possible base pair). For a sequence of length n, the number of base-pair variables grows as O(n²), and the worst-case branch-and-bound search time is exponential in the number of variables. Common issues include:

- Weak LP Relaxation: Your formulation's linear programming relaxation provides a poor bound, causing the branch-and-bound tree to expand excessively.

- Symmetry: The model may have many equivalent solutions (symmetries), forcing the solver to explore redundant branches.

- Memory Limits: The constraint matrix becomes too large to hold in memory.

Troubleshooting Steps:

- Simplify the Model: Start with core constraints (complementarity, non-crossing for stems) before adding complex energy terms.

- Add Symmetry-Breaking Constraints: Force an ordering on pseudoknot stems or base pairs to eliminate equivalent solutions.

- Use a Commercial Solver: For large n, leverage high-performance solvers like Gurobi or CPLEX, which implement advanced presolve and cutting plane techniques.

- Implement Heuristics: Use a greedy algorithm or a constraint programming (CP) heuristic to find a good initial feasible solution ("warm start") for the ILP solver.
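The variable count and the warm-start idea can be illustrated with a short, solver-independent sketch. The `score` callable is a hypothetical stand-in for an energy-based pair score, and the only feasibility rule enforced is that each base pairs at most once; a real model would add its own stem and topology constraints.

```python
CANONICAL = {("A", "U"), ("U", "A"), ("G", "C"), ("C", "G"), ("G", "U"), ("U", "G")}

def candidate_pairs(seq, min_loop=3):
    """All 0-based (i, j) that are complementary and enclose at least min_loop bases.

    Each candidate corresponds to one binary variable in the ILP.
    """
    n = len(seq)
    return [(i, j)
            for i in range(n)
            for j in range(i + min_loop + 1, n)
            if (seq[i], seq[j]) in CANONICAL]

def greedy_warm_start(seq, score, min_loop=3):
    """Greedily pick high-scoring pairs so that each base pairs at most once."""
    used, chosen = set(), []
    for i, j in sorted(candidate_pairs(seq, min_loop), key=score, reverse=True):
        if i not in used and j not in used:
            chosen.append((i, j))
            used.update((i, j))
    return chosen
```

The chosen pairs can be passed to the solver as initial values for the corresponding binary variables. Counting `candidate_pairs` for a given sequence is also a quick way to estimate model size before building it.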

FAQ 2: How do I choose between Constraint Programming (CP) and ILP for my pseudoknot prediction experiment?

Answer: The choice depends on the nature of your constraints and objective.

| Feature | Constraint Programming (CP) | Integer Linear Programming (ILP) |

|---|---|---|

| Core Strength | Rich, logical constraints (e.g., "if this base pairs, then this other one cannot"). | Optimization of a linear objective function (e.g., minimizing free energy). |

| Constraint Types | Excellent for logical, global, and sequencing constraints. | Requires linearization. Logical constraints need conversion using big-M methods. |

| Objective Function | Primarily for feasibility; optimization via iterative search. | Excellent for direct optimization of a numerical score. |

| Best For | Exploring complex folding rules, searching for all feasible structures. | Finding the single, globally optimal structure per a defined scoring function. |

| Scalability | Can be effective for specific, highly-constrained search spaces. | Performance heavily depends on formulation; can become intractable for large n. |

Protocol for a Hybrid CP-ILP Approach:

- Phase 1 - CP for Feasible Stem Sets: Use a CP model to generate a diverse set of k feasible pseudoknotted stem assemblies based on sequence and topological rules.

- Phase 2 - ILP for Optimal Selection: For each CP-generated stem set, formulate a smaller, tractable ILP to select the final base pairs and minimize free energy.

- Phase 3 - Comparison: Select the overall minimum energy structure from the k ILP solutions.

FAQ 3: My ILP/CP solver returns an "infeasible" result. How can I diagnose which constraints are causing the conflict?

Answer: Infeasibility is a critical issue in declarative modeling.

- For ILP: Use the Irreducible Inconsistent Subsystem (IIS) finder. In solvers like Gurobi (`computeIIS`) or CPLEX (conflict refiner), this tool identifies a minimal set of conflicting constraints and variable bounds.

- For CP: Use the solver's explanation or debugging features. Many CP solvers can trace back through the propagation steps to find the origin of a domain wipe-out (a variable with no possible values left).

Diagnostic Protocol:

- Run IIS/Conflict Analysis: Execute the solver's specific diagnostic command on the infeasible model.

- Analyze the Output: The report will list a small subset of your constraints that are mutually incompatible.

- Common Culprits in Pseudoknot Prediction:

- Base Complementarity vs. Allowed Pairing Rules: A hard Watson-Crick only rule conflicting with a G-U pairing allowed elsewhere.

- Minimum Stem Length vs. Sequence Length: Constraint requiring a stem of length 5 in a loop region with only 3 available bases.

- Topological Constraints: Overlapping constraints that physically cannot be satisfied simultaneously.

Table 1: Comparison of ILP vs. CP Performance on Pseudoknot-Containing Sequences

| Sequence Length (n) | ILP Solve Time (s) | CP Solve Time (s) | Optimal Energy (kcal/mol) | Method |

|---|---|---|---|---|

| 50 | 12.5 | 8.2 | -22.3 | ILP (Gurobi) |

| 50 | N/A | 0.5 | -21.8 | CP (feasibility) |

| 100 | 285.7 | 45.1 | -45.6 | ILP (Gurobi) |

| 100 | N/A | 3.2 | -44.9 | CP (feasibility) |

| 150 | >3600 (Timeout) | 120.3 | - | ILP (Gurobi) |

| 150 | N/A | 12.8 | -68.1 | CP with heuristic search |

Note: ILP data for n=150 indicates computational intractability for the full model within 1 hour. CP found a feasible, good-quality solution quickly.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Pseudoknotted RNA Research |

|---|---|

| Gurobi Optimizer | Commercial ILP solver used for exact optimization of energy-based objective functions. |

| IBM ILOG CPLEX | Alternative commercial solver for MILP/CP, useful for hybrid modeling. |

| OR-Tools (Google) | Open-source software suite for optimization, containing both CP-SAT and traditional CP solvers. |

| ViennaRNA Package | Provides essential thermodynamic parameters for free energy calculation, integrated into objective functions. |

| Rosetta/FARFAR2 | Suite for 3D structure modeling; used to validate predicted pseudoknot folds. |

| SHAPE Reactivity Data | Experimental chemical probing data used to generate hard or soft constraints in CP/ILP models. |

Visualizations

(Hybrid CP-ILP Workflow for Pseudoknot Prediction)

(Diagnosing Infeasible ILP Model with IIS Finder)

The Role of Comparative Sequence Analysis and Phylogenetic Footprinting

Technical Support Center: Troubleshooting & FAQs

FAQ Category 1: Data Acquisition & Pre-processing

Q1: My multiple sequence alignment (MSA) for phylogenetic footprinting contains highly divergent sequences, leading to poor conservation signals. How can I improve alignment quality?

A: Poor alignment is a primary source of error. Implement a tiered approach:

- Filter sequences by identity (e.g., retain sequences 40-80% identical to your reference) using tools like `CD-HIT`.

- Use specialized aligners for non-coding regions (e.g., `PROMALS`, `MAFFT` with `--localpair`).

- Manually curate the alignment in tools like `Jalview`, focusing on known functional motifs.

Q2: When performing comparative analysis across species, how do I select an appropriate evolutionary distance?

A: The optimal distance balances conservation and variation. Refer to the table below for guidance:

| Evolutionary Distance (Species Group) | Best For Identifying | Risk |

|---|---|---|

| Close (e.g., Human/Chimp/Mouse) | Ultra-conserved elements, core regulatory motifs. | May miss structural constraints; signals too broad. |

| Intermediate (e.g., Mammals/Vertebrates) | Most functional RNA structures, including pseudoknots. | Optimal for phylogenetic footprinting. |

| Distant (e.g., Metazoans/Fungi) | Deeply conserved, essential structural cores. | High noise; alignment becomes unreliable. |

FAQ Category 2: Computational Analysis & Errors

Q3: My pseudoknot prediction tool (e.g., HotKnots, IPknot) fails to run or crashes on my genome-scale MSA. What are the likely causes?

A: This directly relates to computational complexity. The issue is likely memory or time.

- Cause 1: State-space explosion. Pseudoknot prediction with an MSA is NP-hard.

- Troubleshooting Guide:

- Reduce input size: Split the MSA into smaller, overlapping windows (e.g., 200-300 nt segments).

- Increase constraints: Use phylogenetic footprinting outputs (conserved base-pair probabilities) as mandatory constraints in the prediction algorithm. This drastically reduces the search space.

- Check resource limits: Monitor memory usage (`top`, `htop`). Run on a high-RAM node or cluster.

Q4: How do I convert phylogenetic footprinting conservation scores into usable constraints for pseudoknot prediction algorithms?

A: You need to generate a constraints file. Follow this protocol:

Experimental Protocol: Generating Structural Constraints from Conservation Scores

- Input: A reliable MSA in FASTA or Stockholm format.

- Run `RNAalifold` (from ViennaRNA package): `RNAalifold -p --aln-stk input.stockholm`

- The `-p` parameter calculates base-pairing probability matrices.

- Extract Conserved Pairs: Parse the `_dp.ps` PostScript output or use `bpalifold` (supplementary script) to list positions with pairing probability > 0.9 and high conservation score.

- Format Constraints: Format the list according to your pseudoknot predictor (e.g., for HotKnots: `P i j`, where i and j are positions that must pair).
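The extraction and formatting steps could be sketched as below. Two assumptions are baked in and should be checked against your own files: that each pairing entry in the dot-plot PostScript is a line ending in `ubox` whose three preceding tokens are `i`, `j`, and the square root of the pairing probability, and that the `P i j` constraint syntax quoted above matches your predictor. The conservation-score filter is omitted.

```python
def conserved_pairs(ps_lines, min_prob=0.9):
    """Collect (i, j) pairs whose pairing probability exceeds min_prob.

    Assumes lines of the form '... i j sqrt(p) ubox'; anything else is skipped.
    """
    pairs = []
    for line in ps_lines:
        tokens = line.split()
        if len(tokens) < 4 or tokens[-1] != "ubox":
            continue
        try:
            i, j, sqrt_p = int(tokens[-4]), int(tokens[-3]), float(tokens[-2])
        except ValueError:
            continue  # not a data line (e.g., part of the PostScript prologue)
        if sqrt_p ** 2 > min_prob:
            pairs.append((i, j))
    return pairs

def to_constraint_lines(pairs):
    """Format pairs as 'P i j' lines, the syntax quoted in the protocol."""
    return [f"P {i} {j}" for i, j in pairs]
```

Reading the file with `open(path).read().splitlines()` and passing the result to `conserved_pairs` is enough to drive it; inspect a few raw lines first to confirm the column layout.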

FAQ Category 3: Interpretation & Validation

Q5: I have predicted a pseudoknot using comparative methods. What experimental validation is most feasible for a drug discovery lab?

A: Prioritize high-throughput biochemical methods before targeted assays.

- SHAPE-MaP: (Selective 2′-Hydroxyl Acylation analyzed by Primer Extension and Mutational Profiling). Probes RNA flexibility in vitro or in vivo. Paired/unpaired nucleotides show clear reactivity differences.

- DMS-MaP: (Dimethyl Sulfate Mutational Profiling). Maps accessible adenines and cytosines. Both SHAPE and DMS data can be used to validate and refine computational predictions.

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Analysis | Example/Tool |

|---|---|---|

| Multiple Sequence Alignment Suite | Creates the foundational input for phylogenetic footprinting. | MAFFT, Clustal Omega, PROMALS |

| Conservation Scoring Script | Quantifies per-nucleotide and per-pair evolutionary conservation. | Rate4Site, ConSurf, custom PhyloP pipelines. |

| RNA Folding Engine with Alignment | Predicts consensus structure and base-pair probabilities from MSA. | RNAalifold (ViennaRNA), Pfold. |

| Pseudoknot Prediction Software | Performs the core, computationally intensive prediction. | HotKnots, IPknot, pknotsRG. |

| Constraint File Parser | Bridges conservation data to prediction tools. | Custom Python/Perl scripts to convert RNAalifold output to tool-specific constraint formats. |

| Biochemical Validation Kit | Provides experimental verification of predicted structures. | SHAPE-MaP or DMS-MaP reagent kits (e.g., from Illumina or New England Biolabs). |

Visualization: Experimental & Computational Workflow

Diagram 1: Integrated Workflow for Pseudoknot Prediction

Diagram 2: Constraint-Driven Reduction of Computational Complexity

TECHNICAL SUPPORT CENTER

TROUBLESHOOTING GUIDES & FAQS

Q1: During SHAPE-MaP data processing, my mutation rates are abnormally low (<0.001) even for highly reactive regions. What could be the cause?

A: This is often due to insufficient reverse transcription (RT) primer annealing or inefficient RT enzyme processivity. First, verify the integrity and concentration of your RT primer using a denaturing gel. Second, ensure the SHAPE reagent (e.g., 1M7) is fresh and properly dissolved in anhydrous DMSO. Third, increase the concentration of MnCl₂ in the RT buffer to 5-10 mM to promote read-through of modified sites. Check the "Experimental Protocol 1" below for detailed reagent specifications.

Q2: When fitting Cryo-EM density maps to SHAPE-MaP-informed models, I encounter steric clashes in pseudoknot regions. How should I resolve this?

A: This indicates a potential over-constraining of the computational model. The SHAPE-MaP reactivity is a conformational average. Use the reactivity data as a soft constraint (e.g., in Rosetta or NAST) with a weighting factor, not a hard distance constraint. Gradually increase the weight of the Cryo-EM density map term relative to the SHAPE constraint during refinement. This allows the model to accommodate the static snapshot from Cryo-EM while respecting the solution-state chemical probing data.

Q3: My integrative modeling pipeline (e.g., using Integrative Modeling Platform - IMP) becomes computationally intractable when including thousands of SHAPE-MaP constraints for a large RNA (>500 nt). How can I reduce complexity?

A: This directly addresses the thesis on computational complexity. Filter constraints strategically:

- Use only reactivity values above the 90th percentile for strong structural constraints.

- Cluster proximal nucleotides into "constraint blocks" to reduce the total number of spatial restraints.

- Implement a multi-stage protocol: first fold with sparse constraints, then refine the localized pseudoknot regions with full constraint sets. See "Workflow Diagram" below.
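The first two filtering steps can be sketched in a few lines. The nearest-rank percentile rule, the `max_gap` parameter, and the function names are choices made for this illustration and are not part of IMP.

```python
def above_percentile(reactivities, pct=90):
    """Return {position: value} for reactivities strictly above the pct-th percentile.

    reactivities: dict mapping nucleotide position to reactivity.
    Uses the nearest-rank percentile definition with an integer pct.
    """
    ranked = sorted(reactivities.values())
    rank = max(1, (pct * len(ranked) + 99) // 100)  # integer ceiling of pct% of n
    cutoff = ranked[rank - 1]
    return {pos: r for pos, r in reactivities.items() if r > cutoff}

def constraint_blocks(positions, max_gap=2):
    """Merge positions no more than max_gap apart into (start, end) blocks."""
    blocks = []
    for pos in sorted(positions):
        if blocks and pos - blocks[-1][-1] <= max_gap:
            blocks[-1].append(pos)
        else:
            blocks.append([pos])
    return [(b[0], b[-1]) for b in blocks]
```

Applying one spatial restraint per block rather than per nucleotide is what cuts the restraint count; the right `max_gap` depends on how coarse the model representation is.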

Q4: How do I validate an integrated SHAPE-MaP/Cryo-EM model for a pseudoknotted RNA?

A: Employ orthogonal biochemical assays:

- Asymmetric Cryo-EM Analysis: Perform focused 3D classification without alignment on the pseudoknot region to check for conformational flexibility.

- Mutational Profiling (Mutate-and-Map): Introduce single-point mutations predicted to disrupt the pseudoknot and confirm via SHAPE-MaP that the reactivity profile changes as predicted by your integrated model.

- Compute the cross-correlation coefficient between the final model's simulated Cryo-EM map and the experimental map, aiming for a value >0.8.

EXPERIMENTAL PROTOCOLS

Protocol 1: SHAPE-MaP Experiment for Structured RNA

- Refolding: Dilute purified RNA to 100 nM in folding buffer (50 mM HEPES pH 8.0, 100 mM KCl, 10 mM MgCl₂). Heat to 95°C for 2 min, incubate at 55°C for 5 min, then hold at 37°C for 20 min.

- SHAPE Modification: Add 1M7 reagent from a fresh 100 mM DMSO stock to folded RNA at a final concentration of 10 mM. For the no-modification control, add DMSO only. React for 5 min at 37°C.

- Quenching & Recovery: Add 5 volumes of 100% ethanol, precipitate at -80°C for 1 hr, and pellet RNA.

- Mutational Profiling (MaP) RT: Resuspend RNA. Use SuperScript II reverse transcriptase with a custom buffer containing 5 mM MnCl₂ and 2.5 mM MgCl₂. Perform RT per manufacturer's instructions but extend incubation to 3 hours at 42°C.

- Library Prep & Sequencing: Amplify cDNA by PCR, add Illumina adapters, and sequence on a MiSeq (2x150 bp).

Protocol 2: Generating Constraints for Integrative Modeling

- SHAPE Reactivity Calculation: Process fastq files using `shape-mapper` (v2.1.5). Normalize reactivities to a 2%-8% scale.

- Constraint File Generation: For high-reactivity nucleotides (top 10%), convert to distance restraints (e.g., "nucleotide i is unpaired") or ambiguous contact pairs for use in modeling software like Rosetta.

- Cryo-EM Map Processing: Use RELION (v4.0) to perform post-processing and local resolution estimation. Create a mask around the pseudoknot region of interest.

- Integration in IMP: Define the system topology, add representation (GMM for density), and create a scoring function combining Cryo-EM fit (`fit_gmm`), stereochemical restraints, and SHAPE-derived distance restraints (`HarmonicUpperBound`). Run replica exchange Gibbs sampling.
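For the normalization step in this protocol, the sketch below assumes that "2%-8% scale" refers to the commonly used 2%/8% scheme: discard the top 2% of reactivities as outliers, average the next 8%, and divide every value by that average. The processing software may apply a different rule internally, so this is only an illustration of the arithmetic.

```python
def normalize_2_8(reactivities):
    """Scale reactivities by the mean of the 'next 8%' after dropping the top 2%.

    reactivities: list of floats, with None for positions lacking data.
    Requires at least one measured value and a non-zero scaling factor.
    """
    values = sorted((r for r in reactivities if r is not None), reverse=True)
    n_outliers = round(0.02 * len(values))
    n_average = max(1, round(0.08 * len(values)))
    window = values[n_outliers:n_outliers + n_average]
    scale = sum(window) / len(window)
    return [None if r is None else r / scale for r in reactivities]
```

After this scaling, values near 1.0 correspond to typical highly reactive positions, which is the convention that threshold-based interpretation guides rely on.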

VISUALIZATIONS

Diagram 1: Integrative Modeling Workflow

Diagram 2: Pseudoknot Modeling Constraint Logic

QUANTITATIVE DATA SUMMARY

Table 1: Common SHAPE Reactivity Interpretation Guide

| Reactivity (Normalized) | Structural Interpretation | Constraint Type in Modeling |

|---|---|---|

| > 0.85 | Highly flexible / unpaired | Strong distance restraint (≥ 8 Å from others) |

| 0.40 – 0.85 | Moderately flexible / single-stranded | Ambiguous pairing exclusion |

| 0.10 – 0.40 | Possibly constrained / dynamic | Very weak or no restraint |

| < 0.10 | Paired / highly constrained | Base-pairing or stacking restraint encouraged |
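Table 1 can be applied programmatically with a small lookup. Because the table's ranges share their endpoints (0.40 and 0.10), this sketch assigns boundary values to the more flexible category; that tie-breaking rule is an assumption.

```python
def interpret_reactivity(r):
    """Map a normalized SHAPE reactivity to the structural interpretation in Table 1."""
    if r > 0.85:
        return "highly flexible / unpaired"
    if r >= 0.40:
        return "moderately flexible / single-stranded"
    if r >= 0.10:
        return "possibly constrained / dynamic"
    return "paired / highly constrained"
```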

Table 2: Computational Cost of Integrative Modeling Steps

| Modeling Step | Approx. CPU Hours* (500 nt RNA) | Key Parameter Influencing Complexity |

|---|---|---|

| SHAPE-only Folding (ViennaRNA) | 1-2 | Sequence length |

| Cryo-EM Map Flexible Fitting (MDFF) | 200-500 | Map resolution, particle size |

| Integrative Sampling (IMP/ROSIE) | 1000-5000+ | Number of restraints, replica count |

| Ensemble Analysis & Validation | 50-100 | Cluster size, metrics used |

*Based on 2.5 GHz Intel core equivalents.

THE SCIENTIST'S TOOLKIT: RESEARCH REAGENT SOLUTIONS

| Reagent / Material | Function in Integration | Key Consideration |

|---|---|---|

| 1M7 (1-methyl-7-nitroisatoic anhydride) | SHAPE reagent modifying flexible RNA 2'-OH groups. | Must be fresh (<24 hr old in DMSO) for consistent reactivity. |

| SuperScript II Reverse Transcriptase | MaP RT enzyme; tolerates Mn²⁺ for mutation incorporation. | Critical for high mutation read rates. Do not substitute newer SSIV. |

| Ammonium Heparose Gold Column | Purification of in vitro transcribed RNA. | Ensures homogeneous sample for both SHAPE and Cryo-EM. |

| Uranyl Formate (2%) | Negative stain for Cryo-EM grid screening. | Quick assessment of RNA monodispersity before freezing. |

| Relion 4.0 Software | Cryo-EM map reconstruction and post-processing. | Essential for high-resolution, non-uniform refinement. |

| Rosetta/FARFAR2 | De novo RNA 3D structure prediction. | Generates initial models for refinement with data. |

| Integrative Modeling Platform (IMP) | Framework for combining diverse data types. | Allows weighting of SHAPE vs. Cryo-EM constraints. |

Practical Guide: Selecting and Optimizing Pseudoknot Prediction Tools for Research and Drug Development

Technical Support Center

Troubleshooting Guides

Issue 1: Algorithm Runs Indefinitely or Crashes on Large RNA Sequences

- Problem: The pseudoknot prediction tool (e.g., HotKnots, IPknot) becomes unresponsive or runs out of memory.

- Diagnosis: This is likely due to the high computational complexity (O(n⁴) or worse) of exact dynamic programming algorithms when n (sequence length) exceeds 2000 nucleotides.

- Solution: Apply a heuristic pre-filtering step.

- Protocol: Use a fast, coarse-grained scanning tool (e.g., scan_for_matches from the RNAlib suite) to identify probable paired regions.

- Command Example: scan_for_matches -i your_sequence.fasta -o probable_pairs.gff

- Next Step: Feed the probable_pairs.gff file as a constraint file to the main prediction algorithm, drastically reducing its search space.
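To make the scaling argument concrete, the short sketch below estimates how much more work a polynomial-time algorithm needs when the sequence length grows. It is pure arithmetic under the assumption that runtime follows the stated exponent exactly; real tools also have constant factors and memory limits that this ignores. The function name `relative_cost` is hypothetical.

```python
def relative_cost(n_new: int, n_ref: int, exponent: int) -> float:
    """Ratio of work for length n_new versus n_ref under O(n^exponent) scaling."""
    return (n_new / n_ref) ** exponent


# Going from a 500 nt RNA to a 2000 nt RNA:
quartic = relative_cost(2000, 500, exponent=4)  # O(n^4) algorithm
cubic = relative_cost(2000, 500, exponent=3)    # O(n^3) algorithm

print(f"O(n^4): {quartic:.0f}x more work")
print(f"O(n^3): {cubic:.0f}x more work")
```

A fourfold increase in length therefore costs roughly 256 times more work at O(n⁴), which is why restricting the search space before running the main algorithm pays off.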

Issue 2: Inaccurate Predictions for Known Pseudoknot Families

- Problem: The predicted secondary structure lacks expected pseudoknots or shows incorrect topology.

- Diagnosis: The chosen algorithm's underlying model (e.g., simple minimum free energy) may not capture the specific energy rules or topological constraints for that pseudoknot family (e.g., H-type, kissing loops).

- Solution: Switch to or cross-validate with a specialized algorithm.

- Protocol: For H-type pseudoknots, use Kinefold (stochastic, kinetics-based). For complex nested structures, use pknotsRG (grammar-based).

- Validation: Always run a known positive control sequence from a database (e.g., Pseudobase++) with the tool to confirm its capability.

Issue 3: Discrepancy Between Predicted and Experimental (SHAPE) Data

- Problem: Computational prediction contradicts chemical probing data.

- Diagnosis: The algorithm is not incorporating experimental constraints.

- Solution: Utilize a tool that integrates SHAPE reactivity data.

- Protocol: Convert SHAPE reactivity to pseudo-energy bonuses/penalties using the -sh flag in RNAstructure or the --shape option in ViennaRNA's RNAfold.

- Workflow: shape_convert.py your_shape.dat > energy_constraints.txt then RNAfold --shape=energy_constraints.txt your_sequence.fasta
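As an illustration of what "pseudo-energy bonuses/penalties" means, the sketch below applies the widely used log-linear conversion, ΔG_SHAPE(i) = m · ln(reactivity(i) + 1) + b. The slope and intercept defaults (1.8 and -0.6 kcal/mol) and the rule that negative reactivities mark missing data are assumptions for this example; check the documentation of your folding tool for the values it actually uses. The function name is hypothetical and is not part of RNAstructure or ViennaRNA.

```python
import math


def shape_pseudo_energy(reactivity: float, slope: float = 1.8,
                        intercept: float = -0.6) -> float:
    """Per-nucleotide pseudo-energy (kcal/mol) from a SHAPE reactivity.

    Assumption: negative reactivities encode missing data and contribute
    no pseudo-energy.
    """
    if reactivity < 0:
        return 0.0
    return slope * math.log(reactivity + 1.0) + intercept


# Low reactivity (likely paired) yields a bonus (negative value);
# high reactivity (likely unpaired) yields a penalty for pairing.
for value in (0.0, 0.3, 1.0, 2.5, -999.0):
    print(f"reactivity={value:>7}: {shape_pseudo_energy(value):+.2f} kcal/mol")
```

Unreactive positions receive the intercept as a stabilizing bonus, while strongly reactive positions are penalized when paired, which is how probing data pulls the prediction toward the experimentally supported fold.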

Frequently Asked Questions (FAQs)

Q1: I need to screen a viral genome (~10,000 nt) for potential pseudoknots. Which tool offers the best speed/accuracy trade-off?

A1: For genome-scale screening, prioritize speed. Use a lightweight heuristic like pKiss or the "fast" mode of IPknot. These use simplified energy models and partition function sampling to identify potential pseudoknot regions in O(n³) time. Follow up with detailed analysis on shorter, flagged regions using more accurate tools.

Q2: For drug target validation, we require the highest possible accuracy for a specific 150-nt RNA. Which algorithm should we use?

A2: When accuracy is critical and sequence length is manageable, employ a consensus approach. Run the sequence through at least three different algorithm types (e.g., one thermodynamics-based like HotKnots, one grammar-based like pknotsRG, and one kinetics-based like Kinefold). Use a consensus diagram tool (e.g., RNAlishapes) to identify structural elements predicted by all/most methods.
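The consensus idea can be sketched in a few lines: treat each prediction as a set of base pairs and keep the pairs that enough methods agree on. This is a minimal illustration, not the procedure of any specific consensus tool; the method labels and base pairs below are made-up example data.

```python
from collections import Counter
from typing import Dict, Set, Tuple

Pair = Tuple[int, int]


def consensus_pairs(predictions: Dict[str, Set[Pair]], min_votes: int) -> Set[Pair]:
    """Return base pairs predicted by at least min_votes methods."""
    votes = Counter(pair for pairs in predictions.values() for pair in pairs)
    return {pair for pair, count in votes.items() if count >= min_votes}


# Hypothetical output of three algorithm types for one RNA (1-based positions).
predictions = {
    "thermodynamic": {(1, 20), (2, 19), (8, 30)},
    "grammar": {(1, 20), (2, 19), (9, 29)},
    "kinetic": {(1, 20), (8, 30)},
}

majority = consensus_pairs(predictions, min_votes=2)
unanimous = consensus_pairs(predictions, min_votes=3)
print("majority:", sorted(majority))
print("unanimous:", sorted(unanimous))
```

Pairs supported by every method are the safest starting point for target validation; pairs with only majority support are candidates for experimental follow-up.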

Q3: How do I formally benchmark the speed vs. accuracy of two algorithms for my thesis?

A3: Follow this standardized protocol:

- Dataset: Curate a test set of 50-100 RNAs with known pseudoknot structures from Pseudobase++.

- Metrics: Measure Accuracy using Sensitivity (SN) and Positive Predictive Value (PPV). Measure Speed as wall-clock time on a standardized machine.

- Execution: Run both algorithms on the same dataset under identical computational conditions (CPU, RAM, no other processes).

- Analysis: Create an SN-PPV scatter plot and a separate speed vs. sequence length plot. Statistical tests (e.g., paired t-test) on the results are essential.
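For the statistical comparison, a paired test fits because both algorithms are scored on the same RNAs. The sketch below computes the paired t statistic with the standard library only; the sensitivity values are invented for illustration. To obtain a p-value, the statistic can be compared against a t distribution with the returned degrees of freedom, or the same data can be passed to `scipy.stats.ttest_rel` if SciPy is available.

```python
import math
import statistics
from typing import Sequence, Tuple


def paired_t_statistic(a: Sequence[float], b: Sequence[float]) -> Tuple[float, int]:
    """Return (t statistic, degrees of freedom) for paired samples a and b."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equally long samples with at least 2 pairs")
    diffs = [x - y for x, y in zip(a, b)]
    mean_diff = statistics.mean(diffs)
    std_error = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return mean_diff / std_error, len(diffs) - 1


# Hypothetical per-RNA sensitivities of two algorithms on the same test set.
sn_algorithm_a = [0.80, 0.70, 0.90, 0.60]
sn_algorithm_b = [0.70, 0.65, 0.80, 0.60]

t_stat, dof = paired_t_statistic(sn_algorithm_a, sn_algorithm_b)
print(f"t = {t_stat:.3f} with {dof} degrees of freedom")
```

With a realistic test set of 50-100 RNAs the same function applies unchanged; only the input lists grow.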

Table 1: Algorithm Performance Benchmark (Representative Data)

| Algorithm Name | Core Method | Time Complexity | Avg. Sensitivity (SN) | Avg. PPV | Best Use Case |
|---|---|---|---|---|---|
| HotKnots v2.0 | Thermodynamic, Heuristic | O(n⁴) | 0.72 | 0.68 | Balancing detail & speed for n < 500 |
| IPknot | IP, Maximum Expected Acc. | O(n³) to O(n⁴) | 0.85 | 0.82 | High-accuracy prediction for n < 300 |
| pKiss | Hierarchical Folding | O(n³) | 0.65 | 0.71 | Rapid screening of long sequences |
| Kinefold | Stochastic Kinetics | Varies | 0.78 | 0.75 | Exploring folding pathways, alternatives |

Detailed Experimental Protocol: Benchmarking Algorithm Accuracy

Title: Protocol for Calculating Prediction Sensitivity & PPV.

Materials: Known structure file (CT format), predicted structure file, compare_ct utility from RNAstructure package.

Steps:

- For each sequence, run the prediction algorithm: prediction_tool -i input.fasta -o predicted.ct.

- Align known and predicted structures: compare_ct known.ct predicted.ct -output summary.txt.

- From summary.txt, extract the number of correctly predicted base pairs (True Positives, TP), missed pairs (False Negatives, FN), and incorrectly predicted pairs (False Positives, FP).

- Calculate Sensitivity: SN = TP / (TP + FN).

- Calculate Positive Predictive Value: PPV = TP / (TP + FP).

- Average SN and PPV across your entire test dataset.
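The SN and PPV formulas in the steps above can be sketched directly on sets of base pairs. The example below assumes structures are available in extended dot-bracket notation, where pseudoknotted pairs use `[]`, `{}`, or `<>`; that input format and both function names are assumptions made for this illustration, and exact matching of pairs is used with no tolerance for shifted pairs.

```python
from typing import Set, Tuple

Pair = Tuple[int, int]
BRACKETS = {"(": ")", "[": "]", "{": "}", "<": ">"}


def pairs_from_dot_bracket(structure: str) -> Set[Pair]:
    """Parse extended dot-bracket notation into a set of 1-based base pairs."""
    openers = {close: open_ for open_, close in BRACKETS.items()}
    stacks = {open_: [] for open_ in BRACKETS}
    pairs = set()
    for position, char in enumerate(structure, start=1):
        if char in BRACKETS:
            stacks[char].append(position)
        elif char in openers:
            pairs.add((stacks[openers[char]].pop(), position))
    return pairs


def sensitivity_ppv(known: Set[Pair], predicted: Set[Pair]) -> Tuple[float, float]:
    """Return (SN, PPV) with SN = TP/(TP+FN) and PPV = TP/(TP+FP)."""
    tp = len(known & predicted)
    fn = len(known - predicted)
    fp = len(predicted - known)
    sn = tp / (tp + fn) if tp + fn else 0.0
    ppv = tp / (tp + fp) if tp + fp else 0.0
    return sn, ppv


# Known H-type pseudoknot versus a prediction that misses the crossing helix.
known = pairs_from_dot_bracket("((..[[..))..]]")
predicted = pairs_from_dot_bracket("((......))....")
sn, ppv = sensitivity_ppv(known, predicted)
print(f"SN = {sn:.2f}, PPV = {ppv:.2f}")
```

In this example every predicted pair is correct (PPV = 1.0), but half of the known pairs are missed (SN = 0.5), which is the typical signature of a pseudoknot-free predictor applied to a pseudoknotted RNA.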

Visualizations

Title: Algorithm Selection Workflow for Pseudoknot Prediction

Title: Algorithm Complexity vs. Speed/Accuracy Trade-off

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Pseudoknot Research |
|---|---|
| In Silico Tools | |
| ViennaRNA Package (RNAfold) | Core free energy minimization, foundational for many algorithms. |
| RNAstructure | Integrates SHAPE data, provides a GUI and Fold/Knotty algorithms. |
| Benchmark Datasets | |
| Pseudobase++ | Curated database of RNA pseudoknots; essential for training and testing algorithms. |
| ArchiveIV | Database of known RNA 3D structures; used for high-accuracy validation. |
| Validation Reagents | |
| SHAPE Chemistry (e.g., NAI) | Chemical probing reagent that informs on single-stranded regions in experimental validation. |
| Computational Environment | |
| High-Performance Computing (HPC) Cluster | Necessary for running multiple long or complex folding simulations in parallel. |
| Conda/Bioconda | Package managers for reproducible installation of complex bioinformatics toolkits. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During cross-validation, my model's sensitivity is high (>95%) but specificity is very low (<40%). The positive class is a rare pseudoknot structure. What is the primary cause and how can I correct it?

A1: High sensitivity combined with low specificity means the model over-predicts the pseudoknot class and produces many false positives. With a rare positive class, this usually traces back to how class imbalance was handled (resampling, class weights, or the decision threshold). To correct this:

- Resample your training data: Use SMOTE (Synthetic Minority Over-sampling Technique) to generate synthetic examples of the pseudoknot class. Do not apply SMOTE to your validation/test sets.

- Adjust class weights: Penalize misclassifications of the minority class more heavily. In scikit-learn, set class_weight='balanced' for algorithms like SVM or Random Forest.

- Use a different evaluation metric: Rely on the Precision-Recall curve and Area Under the Curve (AUC-PR) instead of ROC-AUC for highly imbalanced datasets.

- Threshold tuning: The default threshold of 0.5 is rarely optimal for imbalanced data. Use the Precision-Recall curve to select a threshold that balances recall (sensitivity) against precision for your specific research goal.
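Threshold tuning can be sketched without any library: sweep the candidate thresholds, compute precision and recall at each, and keep the one that maximizes a chosen criterion. The example below uses F1 as that criterion, which is an assumption; a screening project might instead fix a minimum recall. Scores and labels are invented example data.

```python
from typing import Sequence, Tuple


def precision_recall_at(scores: Sequence[float], labels: Sequence[int],
                        threshold: float) -> Tuple[float, float]:
    """Precision and recall when predicting positive for score >= threshold."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def best_f1_threshold(scores: Sequence[float],
                      labels: Sequence[int]) -> Tuple[float, float]:
    """Return (threshold, F1) for the observed score that maximizes F1."""
    best_threshold, best_f1 = 0.5, 0.0
    for threshold in sorted(set(scores)):
        p, r = precision_recall_at(scores, labels, threshold)
        f1 = 2 * p * r / (p + r) if p + r else 0.0
        if f1 > best_f1:
            best_threshold, best_f1 = threshold, f1
    return best_threshold, best_f1


# Hypothetical validation-set probabilities; 1 = pseudoknot, 0 = no pseudoknot.
scores = [0.95, 0.80, 0.60, 0.55, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 0, 0, 0, 0]

threshold, f1 = best_f1_threshold(scores, labels)
print(f"best threshold = {threshold}, F1 = {f1:.3f}")
```

The threshold should be chosen on validation data only and then applied unchanged to the held-out test set.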

Q2: I am tuning a deep learning model for pseudoknot prediction. The computational cost of a full grid search over hyperparameters is prohibitive. What efficient tuning strategies are recommended?

A2: For computationally intensive models, use these strategies to reduce complexity:

- Bayesian Optimization: Utilizes libraries like scikit-optimize or Optuna. It builds a probability model of the objective function (e.g., balanced accuracy) to intelligently select the next hyperparameters to evaluate, converging in far fewer iterations than grid search.

- Randomized Search: Perform a random sample from a defined hyperparameter space for a fixed number of iterations. It often finds good configurations faster than exhaustive search.

- Early Stopping Protocols: Implement callbacks (e.g., in TensorFlow/Keras or PyTorch) to halt training when validation performance plateaus, saving resources per training run.

- Reduced Dataset for Initial Screening: Run initial hyperparameter searches on a smaller, representative subset of your data to narrow the search space before a final tuning round on the full dataset.
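The early stopping rule mentioned above is framework-independent and can be sketched as a plain function over the validation-loss history. The `patience` and `min_delta` parameter names mirror common framework conventions but are chosen here for illustration; the loss values are invented.

```python
from typing import Sequence


def early_stopping_epoch(val_losses: Sequence[float], patience: int = 3,
                         min_delta: float = 0.0) -> int:
    """Return the 0-based epoch at which training would stop.

    Training stops once the validation loss has failed to improve on the
    best value by more than min_delta for `patience` consecutive epochs.
    If that never happens, the last epoch is returned.
    """
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses) - 1


# Hypothetical validation losses: improvement stalls after epoch 2.
history = [0.90, 0.70, 0.60, 0.61, 0.62, 0.60, 0.59]
stop_epoch = early_stopping_epoch(history, patience=3)
print(f"training stops after epoch {stop_epoch}")
```

In this history the later improvement at the final epoch is never reached, which illustrates the trade-off: a small patience saves compute per trial but can cut off slow late gains.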

Q3: After deploying my tuned model on a new dataset of viral RNA sequences, specificity drops significantly while sensitivity remains stable. What does this indicate and how should I troubleshoot?

A3: This indicates a data drift or covariate shift problem: the statistical properties of the new viral RNA data differ from your training data.

- Troubleshooting Steps:

- Feature Distribution Analysis: Compare summary statistics (mean, variance) of key features (e.g., GC content, sequence length, minimum free energy) between the original training set and the new viral dataset. Create histograms or Q-Q plots.

- Domain Adaptation: If a shift is confirmed, consider:

- Retraining: Incorporate a small amount of labeled data from the new viral domain into your training set.

- Transfer Learning: Use the weights from your existing model as a starting point and fine-tune on the new viral data.

- Algorithmic Adjustment: Use domain-invariant feature learning techniques.
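The feature distribution check can start with something as simple as comparing GC content between the two datasets. The sketch below computes a standardized mean difference (difference of means divided by the pooled standard deviation); using this statistic, and any cutoff applied to it, is an assumption made for illustration. The sequences are toy examples.

```python
import math
import statistics
from typing import Sequence


def gc_content(sequence: str) -> float:
    """Fraction of G and C nucleotides in an RNA or DNA sequence."""
    sequence = sequence.upper()
    return (sequence.count("G") + sequence.count("C")) / len(sequence)


def standardized_mean_difference(train: Sequence[float],
                                 new: Sequence[float]) -> float:
    """(mean(new) - mean(train)) divided by the pooled standard deviation."""
    pooled_sd = math.sqrt(
        (statistics.variance(train) + statistics.variance(new)) / 2
    )
    if pooled_sd == 0:
        return 0.0
    return (statistics.mean(new) - statistics.mean(train)) / pooled_sd


# Toy sequences standing in for the training set and the new viral set.
training_sequences = ["GGCAUUAACG", "AUGCAUGCAU", "GCGCAUAUAU"]
viral_sequences = ["GGCCGGAUCC", "GCGCGCAUGC", "CCGGAUGGCC"]

train_gc = [gc_content(s) for s in training_sequences]
viral_gc = [gc_content(s) for s in viral_sequences]
smd = standardized_mean_difference(train_gc, viral_gc)
print(f"GC content shift (standardized): {smd:.2f}")
```

The same comparison can be repeated for sequence length and minimum free energy; a large shift in any of them supports the covariate shift diagnosis before investing in retraining.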

Q4: What are the standard, publicly available benchmark datasets I should use to validate my pseudoknot prediction algorithm's tuned performance?

A4: Using standard benchmarks is critical for comparative analysis. Key datasets include:

| Dataset Name | Source/Description | Primary Use |
|---|---|---|
| Pseudobase++ | Curated database of pseudoknot sequences and structures. | Training and testing for sequence-based methods. |
| RNA STRAND (Pseudoknots subset) | Contains experimentally determined structures with pseudoknots from the PDB. | Testing structural accuracy of prediction tools. |
| ArchiveII | A widely used benchmark set for RNA secondary structure prediction, containing pseudoknots. | Comparative performance benchmarking against published tools. |
| Viral RNA Pseudoknot Dataset | Specialized collections (e.g., from frameshift-inducing sites in coronaviruses). | Testing performance on functionally important viral pseudoknots. |

Experimental Protocols for Key Cited Studies

Protocol 1: Cross-Validation for Imbalanced Data in Pseudoknot Prediction

Objective: To reliably estimate model performance without bias from class imbalance.

Methodology:

- Use Stratified k-Fold Cross-Validation to preserve the percentage of samples for each class (pseudoknot vs. non-pseudoknot) in each fold.

- Apply preprocessing (e.g., SMOTE) only to the training folds within the cross-validation loop. The validation fold must be left untouched to simulate real-world performance.

- For each fold, calculate Sensitivity (Recall), Specificity, Precision, and F1-Score.

- Report the mean and standard deviation of these metrics across all folds.
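The key point of this protocol, resampling inside the loop and only on the training folds, is sketched below with the standard library. Random duplication of minority samples is used as a simple stand-in for SMOTE, and the fold assignment is a hand-written approximation of stratified k-fold; both are assumptions for illustration, and in practice `StratifiedKFold` from scikit-learn and `SMOTE` from imbalanced-learn would normally take their place.

```python
import random
from collections import defaultdict
from typing import List, Sequence


def stratified_folds(labels: Sequence[int], k: int, seed: int = 0) -> List[List[int]]:
    """Assign sample indices to k folds, keeping class proportions similar."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds: List[List[int]] = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, index in enumerate(indices):
            folds[position % k].append(index)  # deal each class round-robin
    return folds


def oversample_minority(train_idx: Sequence[int], labels: Sequence[int],
                        rng: random.Random) -> List[int]:
    """Duplicate random minority samples until both classes are equal in size."""
    pos = [i for i in train_idx if labels[i] == 1]
    neg = [i for i in train_idx if labels[i] == 0]
    minority, majority = (pos, neg) if len(pos) < len(neg) else (neg, pos)
    if not minority:
        return list(train_idx)
    extra = [rng.choice(minority) for _ in range(len(majority) - len(minority))]
    return list(train_idx) + extra


labels = [1] * 10 + [0] * 40  # 10 pseudoknots, 40 non-pseudoknots
folds = stratified_folds(labels, k=5)
rng = random.Random(1)
for fold_number, validation_idx in enumerate(folds):
    train_idx = [i for j, fold in enumerate(folds) if j != fold_number for i in fold]
    balanced_train = oversample_minority(train_idx, labels, rng)
    # Fit on balanced_train, then score on validation_idx, which stays untouched.
    n_pos = sum(labels[i] for i in validation_idx)
    print(f"fold {fold_number}: train={len(balanced_train)}, "
          f"validation={len(validation_idx)} ({n_pos} pseudoknots)")
```

Because the validation indices never pass through the resampling step, each fold's metrics reflect the original class ratio.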

Protocol 2: Bayesian Hyperparameter Optimization for a Neural Network

Objective: To efficiently tune a deep learning model's hyperparameters.

Methodology:

- Define Search Space: Specify ranges for key parameters (e.g., learning rate: [1e-5, 1e-2] log-uniform, number of layers: [2, 5] integer, dropout rate: [0.1, 0.5] uniform).

- Define Objective Function: A function that takes a set of hyperparameters, trains the model for a limited number of epochs with early stopping, and returns a value to minimize, such as the validation loss (or 1 - Balanced Accuracy).

- Run Optimization: Using Optuna, run 50-100 trials. The library uses a Tree-structured Parzen Estimator (TPE) to suggest promising hyperparameters.

- Final Training: Train the model on the full training set using the best-found hyperparameters.
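The search space and objective from this protocol can be sketched with the standard library. Note the substitution: the loop below is plain random search, not TPE, so it shows only how the space is sampled and how trials are compared. The objective is a made-up analytic function standing in for "train the network and return the validation loss". With Optuna, the same space would be expressed inside the objective through `trial.suggest_float(..., log=True)`, `trial.suggest_int`, and `trial.suggest_float`.

```python
import math
import random
from typing import Dict, Tuple


def sample_hyperparameters(rng: random.Random) -> Dict[str, float]:
    """Draw one configuration from the protocol's search space."""
    return {
        "learning_rate": 10 ** rng.uniform(-5, -2),  # log-uniform in [1e-5, 1e-2]
        "num_layers": rng.randint(2, 5),             # integer, both ends inclusive
        "dropout": rng.uniform(0.1, 0.5),            # uniform
    }


def toy_objective(params: Dict[str, float]) -> float:
    """Hypothetical stand-in for a validation loss (lower is better)."""
    lr_term = (math.log10(params["learning_rate"]) + 3) ** 2
    dropout_term = (params["dropout"] - 0.3) ** 2
    return lr_term + dropout_term + 0.01 * params["num_layers"]


def random_search(n_trials: int, seed: int = 0) -> Tuple[Dict[str, float], float]:
    """Evaluate n_trials random configurations and return the best one."""
    rng = random.Random(seed)
    best_params, best_score = None, float("inf")
    for _ in range(n_trials):
        params = sample_hyperparameters(rng)
        score = toy_objective(params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


best_params, best_score = random_search(n_trials=50)
print(best_params, round(best_score, 4))
```

Sampling the learning rate on a log scale matters because useful values span several orders of magnitude; a uniform draw over [1e-5, 1e-2] would almost never test the small end of the range.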

Visualizations

Title: Parameter Tuning Workflow for Pseudoknot Prediction

Title: Threshold Tuning Trade-off: Sensitivity vs. Specificity

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Pseudoknot Prediction Research |
|---|---|
| SHAPE-MaP Reagents | Chemical probes (e.g., 1M7) for experimental RNA structure mapping. Data provides crucial constraints for computational models, improving specificity. |
| DMS-Seq Kit | Dimethyl sulfate-based probing to identify single-stranded adenosine and cytosine residues, validating in-solution RNA structure. |
| Benchmark Datasets (Pseudobase++, ArchiveII) | Gold-standard data for training supervised ML models and benchmarking prediction accuracy against published algorithms. |